
Design a Search Ranking System

Problem Formulation

Before you write a single line of model code, you need to nail down what you're actually building. The interviewer is watching to see if you can translate a vague product goal ("make search better") into a concrete ML system with defined inputs, outputs, and success criteria. Candidates who skip this step and jump straight to "I'd use a two-tower model" almost always get tripped up later.

Start by asking: what kind of search is this? E-commerce search (Amazon, Etsy) optimizes for purchases and revenue. Web search (Google) optimizes for relevance and dwell time. Internal enterprise search optimizes for task completion. Each has fundamentally different signals. A click on Amazon means something very different from a click on a Google result.

Clarifying the ML Objective

ML framing: Given a user query and a corpus of documents, the model predicts a relevance score for each (query, document) pair and ranks candidates to maximize the probability of user satisfaction.

The business goal is something like "increase revenue per search session." That's not directly optimizable. So you proxy it. Clicks are noisy but abundant. Purchases are sparse but high-signal. Dwell time captures engagement but is inflated by slow-loading pages. In practice, you'll blend these signals into a single training label, but you need to be explicit with your interviewer about what you're actually optimizing and why it's an imperfect proxy for what the business cares about.

The ML task itself is a ranking problem, not a classification problem, even though it superficially looks like one. You're not asking "is this document relevant?" in isolation. You're asking "which ordering of documents maximizes satisfaction?" That distinction matters for your choice of loss function and evaluation metric.

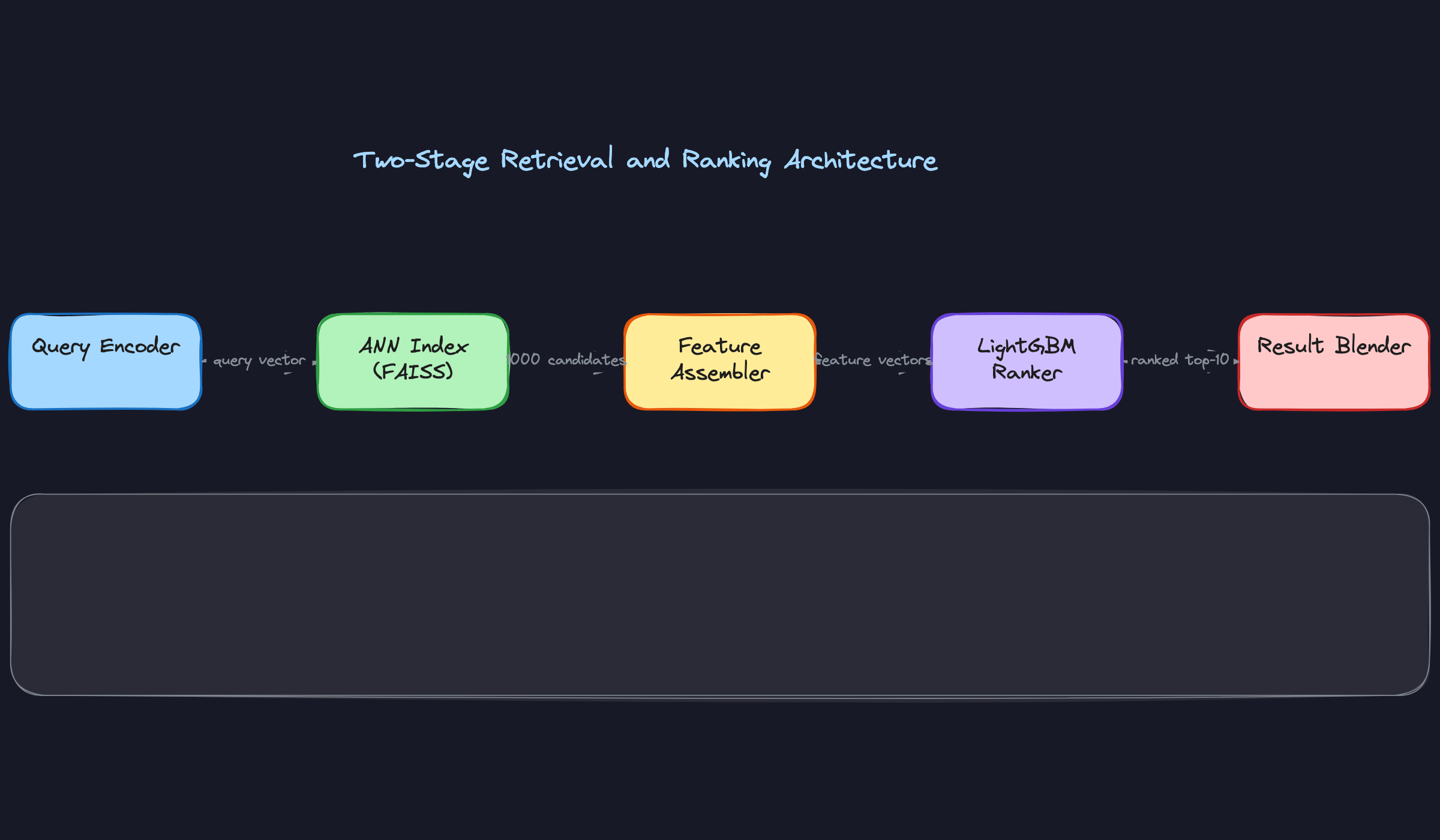

One more thing to clarify upfront: are you building a single model or a pipeline? At any meaningful scale, a single model can't both search 500 million documents and produce a finely-tuned ranked list within 100ms. You need two stages. The first stage retrieves a few thousand plausible candidates fast. The second stage re-ranks those candidates with a richer, slower model. Get this on the whiteboard early.
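To make the two-stage shape concrete, here is a toy sketch in plain Python, assuming small in-memory vectors. Brute-force dot products stand in for the ANN index, and `rerank_score` is a hypothetical placeholder for the Stage 2 model.

```python
import heapq
import random


def retrieve(query_vec, doc_vecs, k):
    """Stage 1: cheap scoring over the whole corpus, keeping the top-k doc indices.
    Brute-force dot products stand in for an approximate nearest neighbor index."""
    scored = ((sum(q * d for q, d in zip(query_vec, vec)), i) for i, vec in enumerate(doc_vecs))
    return [i for _, i in heapq.nlargest(k, scored)]


def rerank(candidates, rerank_score, n):
    """Stage 2: apply the expensive scorer only to the retrieved candidates."""
    return sorted(candidates, key=rerank_score, reverse=True)[:n]


def search(query_vec, doc_vecs, rerank_score, k_retrieve=1000, n_return=10):
    candidates = retrieve(query_vec, doc_vecs, k_retrieve)
    return rerank(candidates, rerank_score, n_return)


if __name__ == "__main__":
    rng = random.Random(0)
    corpus = [[rng.gauss(0, 1) for _ in range(8)] for _ in range(5000)]
    query = [rng.gauss(0, 1) for _ in range(8)]
    # Hypothetical Stage 2 scorer; a real one would be the learned re-ranker.
    print(search(query, corpus, rerank_score=lambda i: (i * 7919) % 1000, k_retrieve=100))
```

The split is about cost: Stage 1 touches every document with a cheap operation, while Stage 2 runs the expensive scorer on only `k_retrieve` candidates.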

Functional Requirements

Core Requirements

- Query understanding: The system must parse raw user queries, handle spelling corrections, identify named entities, and classify query intent (navigational, informational, transactional) before retrieval begins.

- Two-stage ranking pipeline: Stage 1 retrieves ~1,000 candidates from a 500M-document corpus using approximate nearest neighbor search on dense embeddings. Stage 2 re-ranks those candidates using a learning-to-rank model with a rich feature set, returning the top 10 results.

- Personalization: The ranking model must incorporate user-level signals (purchase history, browsing behavior, demographic context) to personalize results, with graceful degradation for new users with no history.

- Index freshness: New documents must be retrievable within minutes of being added to the catalog. Ranking features like real-time CTR and inventory status must reflect current state, not yesterday's snapshot.

- Latency SLA: End-to-end p99 latency under 200ms, with the retrieval stage completing in under 30ms to leave budget for feature fetching and re-ranking.

Below the line (out of scope)

- Query auto-complete and search suggestion (separate model, separate latency budget)

- Sponsored result ranking and ad auction mechanics (different optimization objective)

- Multi-modal search over images or video (assumes text corpus for this design)

Metrics

Offline metrics

NDCG@10 (Normalized Discounted Cumulative Gain) is your primary offline metric. It rewards putting the most relevant results at the top of the list and penalizes burying them. The discounting factor matters here: a relevant result at position 1 is worth far more than the same result at position 8. MRR (Mean Reciprocal Rank) is a useful complement when users typically click the first relevant result they see, which is common in navigational queries.

Avoid raw accuracy or AUC as your headline metric. AUC treats all positions equally, which doesn't reflect how users actually interact with ranked lists. A model with 0.92 AUC that puts the best result at position 5 instead of position 1 is a worse product than a model with 0.88 AUC that gets position 1 right.
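To make the AUC-versus-NDCG point concrete, here is a small sketch using exponential gain ($2^{g}-1$) and a $\log_2$ position discount, a common NDCG variant and the one implied by the `label_gain` values used for LightGBM later. The two rankings are made up for illustration; AUC binarizes grades at a threshold of 1.

```python
import math


def dcg_at_k(grades, k):
    """DCG with exponential gain (2^g - 1) and log2 position discount."""
    return sum((2 ** g - 1) / math.log2(pos + 2) for pos, g in enumerate(grades[:k]))


def ndcg_at_k(grades, k=10):
    """grades: relevance grades in the order the model ranked the documents."""
    ideal = dcg_at_k(sorted(grades, reverse=True), k)
    return dcg_at_k(grades, k) / ideal if ideal > 0 else 0.0


def reciprocal_rank(grades, threshold=1):
    """1 / rank of the first document with grade >= threshold (0 if none)."""
    for pos, g in enumerate(grades, start=1):
        if g >= threshold:
            return 1.0 / pos
    return 0.0


def mean_reciprocal_rank(rankings, threshold=1):
    """MRR: reciprocal rank averaged over queries."""
    return sum(reciprocal_rank(r, threshold) for r in rankings) / len(rankings)


def auc(grades, threshold=1):
    """Fraction of (relevant, non-relevant) pairs ranked in the correct order."""
    n_pos = sum(g >= threshold for g in grades)
    n_neg = len(grades) - n_pos
    correct, pos_seen = 0, 0
    for g in grades:
        if g >= threshold:
            pos_seen += 1
        else:
            correct += pos_seen  # every relevant doc seen so far outranks this one
    return correct / (n_pos * n_neg) if n_pos and n_neg else 0.0


# One "Perfect" doc (grade 3), four "Fair" docs (grade 1), five irrelevant docs.
model_a = [1, 1, 1, 1, 3, 0, 0, 0, 0, 0]  # best doc buried at position 5
model_b = [3, 0, 0, 1, 1, 1, 1, 0, 0, 0]  # best doc at position 1
for name, ranking in [("A", model_a), ("B", model_b)]:
    print(name, round(auc(ranking), 2), round(ndcg_at_k(ranking), 3))
```

Model A has a perfect AUC of 1.0 but an NDCG@10 of about 0.59; model B has an AUC of only 0.68 but an NDCG@10 of about 0.95, because it puts the one document that matters most at the top.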

Online metrics

- Click-through rate (CTR) on the top 3 results, segmented by query intent type

- Purchase conversion rate per search session (the highest-signal metric for e-commerce)

- Revenue per query, which captures both conversion rate and average order value

- Null result rate: the fraction of queries that return zero results or result in immediate session abandonment

Guardrail metrics

- p99 latency must stay under 200ms end-to-end; any model change that breaks this is a non-starter regardless of NDCG improvement

- Result diversity: no more than 3 results from the same seller in the top 10 (prevents monopolization)

- Coverage: the fraction of queries for which the system returns at least 5 results above a relevance threshold; tail query coverage is a common blind spot
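One way to enforce the seller-diversity guardrail is a post-processing pass over the re-ranker's output. A minimal sketch, assuming a hypothetical `seller_of` lookup from doc ID to seller ID:

```python
from collections import Counter


def cap_per_seller(ranked_doc_ids, seller_of, max_per_seller=3, k=10):
    """Walk the ranked list in order and skip any doc whose seller already has
    max_per_seller results on the page. Order among kept docs is preserved."""
    page, counts = [], Counter()
    for doc_id in ranked_doc_ids:
        seller = seller_of[doc_id]
        if counts[seller] < max_per_seller:
            page.append(doc_id)
            counts[seller] += 1
            if len(page) == k:
                break
    return page  # may hold fewer than k docs if a few sellers dominate the candidates
```

Because the pass only drops documents where the cap binds, it costs NDCG only on queries that one seller would otherwise monopolize. It can return a short page, which is where it interacts with the coverage guardrail.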

Constraints & Scale

For a mid-sized e-commerce platform, assume 10,000 queries per second at peak, a catalog of 500 million documents, and a user base of 100 million monthly actives. Those numbers drive every architectural decision that follows.

The retrieval stage has to be fast enough that the ranking stage has room to breathe. If retrieval takes 80ms, you've already burned 40% of your latency budget before the ranking model sees a single feature. The embedding index needs to live in memory, which means your FAISS index for 500M documents at 128 dimensions (float32) is roughly 256GB. That's a dedicated fleet, not a shared service.
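The 256GB figure is just vectors × dimensions × bytes per value. A quick check of that arithmetic, covering raw vector storage only (ID mappings and index structures add overhead on top):

```python
def flat_index_bytes(num_docs, dim, bytes_per_value=4):
    """Raw storage for uncompressed vectors; float32 = 4 bytes per value."""
    return num_docs * dim * bytes_per_value


size = flat_index_bytes(500_000_000, 128)              # float32
print(size / 1e9, "GB")                                # 256.0 GB
print(size / 2**30, "GiB")                             # ~238.4 GiB
print(flat_index_bytes(500_000_000, 128, 2) / 1e9)     # float16 halves it: 128.0
```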

Cold-start is a real constraint. New items have no click history, no purchase signal, nothing. Your ranking model needs to fall back to content-based features (title, description, category) for items with fewer than some minimum interaction threshold. Define that threshold explicitly.
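One way to make the threshold explicit, as a minimal sketch. `MIN_INTERACTIONS = 50` is a hypothetical value, and the shrinkage blend above the threshold is one option for avoiding a hard jump in scores, not something this design prescribes.

```python
MIN_INTERACTIONS = 50  # hypothetical threshold; pick it from offline ablations


def item_score(content_score, behavioral_score, num_interactions, min_interactions=MIN_INTERACTIONS):
    """Below the threshold, rank on content features only. At or above it, shift
    weight toward the behavioral score as interaction evidence accumulates."""
    if behavioral_score is None or num_interactions < min_interactions:
        return content_score
    w = num_interactions / (num_interactions + min_interactions)  # 0.5 at the threshold, -> 1 as data grows
    return w * behavioral_score + (1 - w) * content_score
```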

| Metric | Estimate |
|---|---|
| Prediction QPS | 10,000 queries/sec (peak) |
| Training data size | ~5B (query, document, label) pairs from 90 days of logs |
| Model inference latency budget | Retrieval: <30ms, Re-ranking: <50ms, Total: <200ms p99 |
| Feature freshness requirement | Static features: 24hr; Dynamic features (CTR, inventory): <5 min |
| Corpus size | 500M documents, ~256GB embedding index in memory |
| Training cadence | Daily incremental retrain; weekly full retrain |

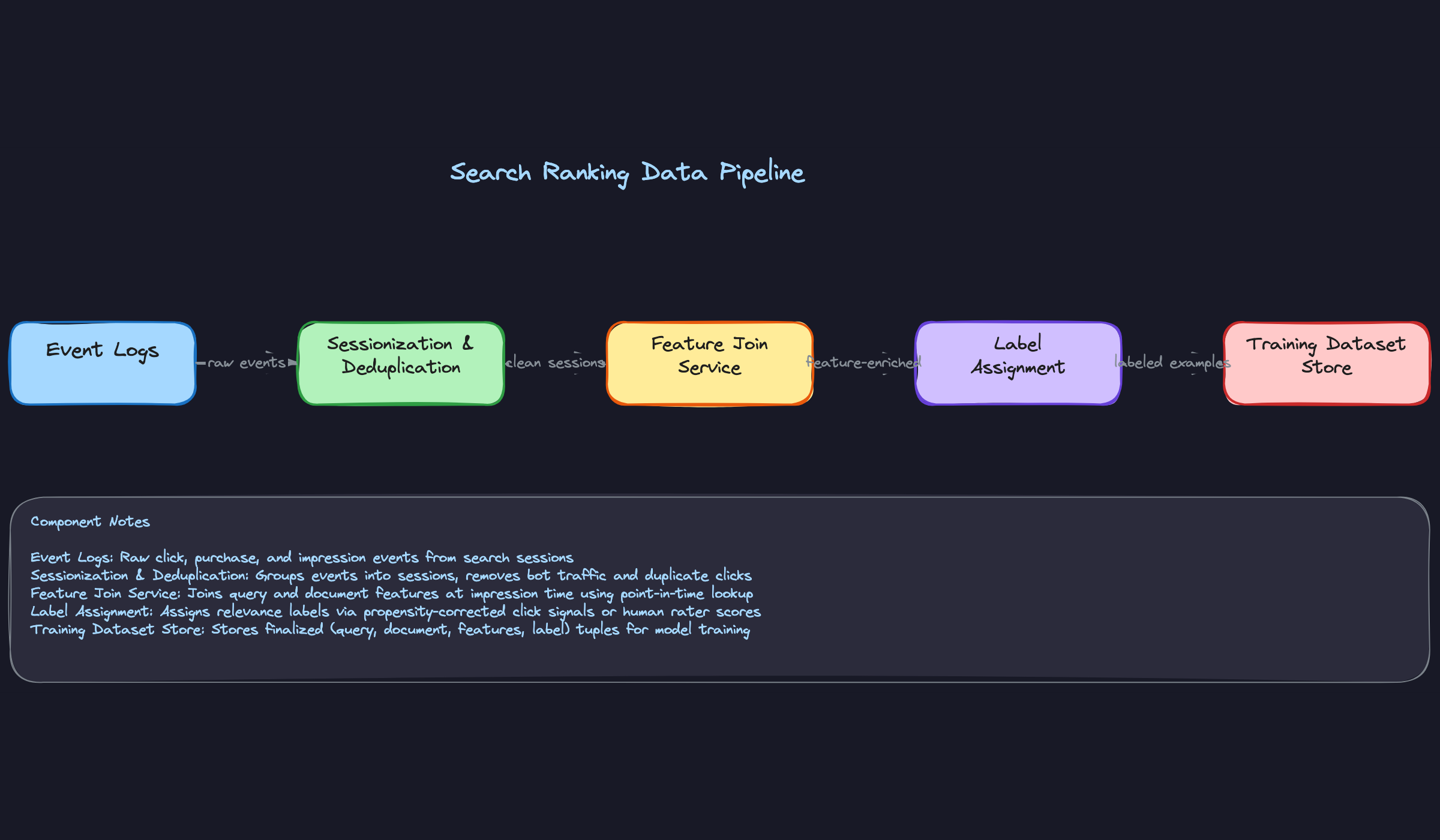

Data Preparation

Your model is only as good as the data you train it on. In search ranking, that means getting three things right: knowing where your training signal comes from, generating labels that aren't lying to you, and splitting your data in a way that reflects how the model will actually be used.

Data Sources

The backbone of any search ranking system is the interaction log. Every time a user submits a query, your system should record the query string, the ranked list of documents shown, which positions were clicked, how long the user spent on each result, whether they converted (purchased or bookmarked), and whether they returned to search. At 10k QPS, you're generating hundreds of millions of events per day. That's your primary training signal.

Here's what you're working with across the major sources:

Query-document interaction logs. Clicks, skips, dwell time, purchases, add-to-cart events. This is your highest-volume source and your noisiest. Volume: billions of events/day at scale. Freshness: near-real-time via Kafka. Reliability: moderate, heavily position-biased.

Item catalog. Product titles, descriptions, categories, price, inventory status, seller metadata. Static features that change infrequently. Volume: tens to hundreds of millions of documents. Freshness: updated in batch (hourly to daily). Reliability: high, but descriptions can be low-quality or spammy.

Session-level behavioral signals. Query reformulations, pogo-sticking (user clicks a result, immediately returns, clicks another), session abandonment. These give you a richer picture of satisfaction than a single click. Volume: lower than raw events, but high signal density.

Human relevance judgments. A small set of (query, document) pairs rated by trained annotators on a 5-point scale (Perfect, Excellent, Good, Fair, Bad). Volume: tens of thousands of queries, not millions. Freshness: slow to produce, updated quarterly. Reliability: highest quality signal you have, but expensive and doesn't scale to tail queries.

Contextual signals. User location, device type, time of day, session context (what did the user search for before this query?). These are features more than labels, but they inform how you segment your training data.

For your event logging schema, you want something like this at minimum:

```json
{
  "event_type": "impression",
  "timestamp": "2024-01-15T14:23:01.123Z",
  "session_id": "sess_abc123",
  "user_id": "usr_xyz789",
  "query": "waterproof hiking boots",
  "query_id": "qry_def456",
  "results": [
    {
      "doc_id": "item_001",
      "position": 1,
      "clicked": true,
      "click_timestamp": "2024-01-15T14:23:08.456Z",
      "dwell_time_seconds": 45,
      "converted": false
    },
    {
      "doc_id": "item_002",
      "position": 2,
      "clicked": false,
      "dwell_time_seconds": null,
      "converted": false
    }
  ],
  "device_type": "mobile",
  "locale": "en-US"
}
```

Log everything at impression time. You cannot go back and reconstruct what was shown at position 3 if you didn't record it. This is a schema decision you make once and live with for years.

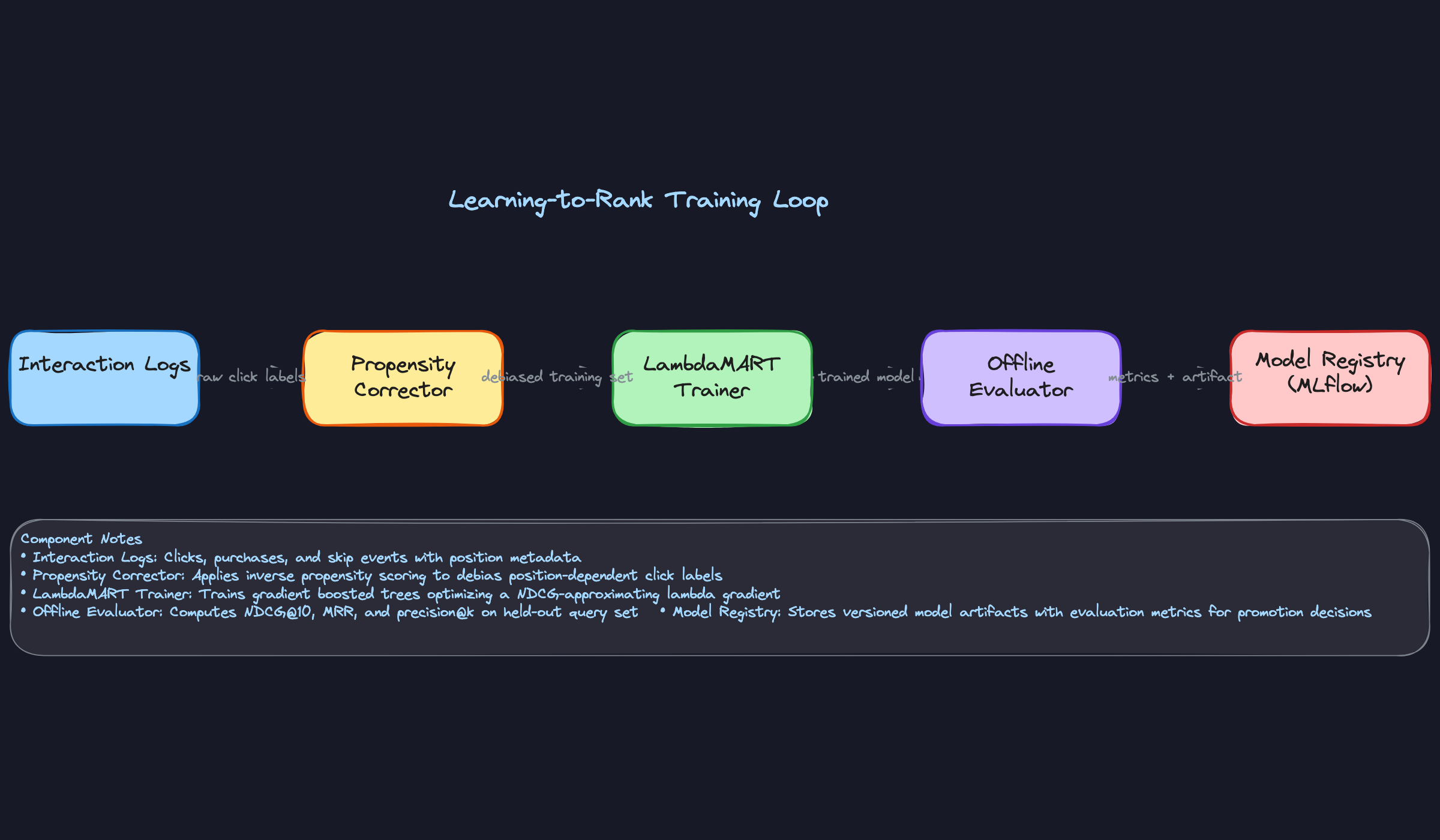

Label Generation

The central challenge in search ranking is that you don't have ground truth relevance scores. You have noisy proxies for relevance, and your job is to turn those proxies into training labels without baking in systematic biases.

Implicit labels from clicks are your workhorse. The simplest approach: clicked = relevant (label 1), shown but not clicked = not relevant (label 0). This breaks immediately because of position bias. A result at position 1 gets clicked far more often than an equally relevant result at position 8, purely because of where it appears. If you train on raw clicks, your model learns to rank things to the top because they were at the top, not because they're relevant.

The fix is inverse propensity scoring (IPS). You estimate the probability that a document at position $k$ would be clicked if it were relevant, $p_k$, and reweight each click by $1/p_k$, so that clicks at rarely examined positions count for more:

```python
def compute_ips_weight(position: int, propensity_scores: dict) -> float:
    """
    propensity_scores: {position -> P(click | relevant, position)}
    Estimated from randomized experiments or EM-based propensity models.
    Positions deeper than the last estimated one reuse the deepest estimate.
    """
    p_k = propensity_scores.get(position, propensity_scores[max(propensity_scores)])
    return 1.0 / p_k


# Example: position 1 has propensity 0.8, position 5 has propensity 0.2.
# A click at position 5 gets weight 1/0.2 = 5.0 versus 1/0.8 = 1.25 at position 1 (4x more).
propensity_scores = {1: 0.8, 2: 0.6, 3: 0.4, 4: 0.3, 5: 0.2}

# session_impressions: events in the logging schema above (one toy record here)
session_impressions = [{
    "query_id": "qry_def456",
    "results": [
        {"doc_id": "item_001", "position": 1, "clicked": True},
        {"doc_id": "item_002", "position": 2, "clicked": False},
    ],
}]

training_examples = []
for impression in session_impressions:
    for result in impression["results"]:
        clicked = bool(result["clicked"])
        training_examples.append({
            "query_id": impression["query_id"],
            "doc_id": result["doc_id"],
            "label": 1 if clicked else 0,
            # IPS corrects the click signal, so only clicks are reweighted;
            # non-clicked impressions keep weight 1.0.
            "ips_weight": compute_ips_weight(result["position"], propensity_scores) if clicked else 1.0,
        })
```

Purchase signals are sparse but far more reliable than clicks. A purchase is a strong positive signal; treat it as a higher-weight label. One practical approach is a graded label scheme: purchase = 3, click with long dwell time = 2, click = 1, impression without click = 0. This maps cleanly to the NDCG label scale used in LambdaMART training.
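The graded scheme takes only a few lines of code. A sketch over one `results` entry from the logging schema, assuming `converted` has already been resolved to "purchased within the attribution window" and using a hypothetical 30-second cutoff for "long dwell":

```python
LONG_DWELL_SECONDS = 30  # hypothetical cutoff; calibrate per category and device


def graded_label(result, long_dwell=LONG_DWELL_SECONDS):
    """purchase=3, long-dwell click=2, click=1, impression without click=0."""
    if result.get("converted"):
        return 3
    if not result.get("clicked"):
        return 0
    dwell = result.get("dwell_time_seconds")
    if dwell is not None and dwell >= long_dwell:
        return 2
    return 1
```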

Delayed feedback is a real problem for purchases. A user might click a product, think about it for three days, and then buy it. If you close your training window too early, you miss the conversion and mislabel that click as non-converting. For e-commerce, use a 7-day attribution window before finalizing purchase labels.
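A minimal sketch of the attribution logic, assuming hypothetical click and purchase records keyed by user and doc ID. At scale you would express the same rule as a windowed join in your batch framework.

```python
from datetime import datetime, timedelta

ATTRIBUTION_WINDOW = timedelta(days=7)


def attach_purchases(clicks, purchases, window=ATTRIBUTION_WINDOW):
    """Mark a click as converted if the same user bought the same doc within
    `window` after the click."""
    purchase_times = {}
    for p in purchases:
        purchase_times.setdefault((p["user_id"], p["doc_id"]), []).append(p["purchase_time"])
    labeled = []
    for c in clicks:
        times = purchase_times.get((c["user_id"], c["doc_id"]), [])
        converted = any(c["click_time"] <= t <= c["click_time"] + window for t in times)
        labeled.append({**c, "converted": converted})
    return labeled


def is_finalizable(click_time, now, window=ATTRIBUTION_WINDOW):
    """Only finalize a label once its full window has elapsed; before that,
    a late purchase could still flip it."""
    return now >= click_time + window
```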

Human judgments don't suffer from position bias, but they have their own issues. Annotators disagree on borderline cases, and their judgments reflect the query's meaning at annotation time, which may drift. Use inter-annotator agreement (Cohen's kappa) to filter out low-quality judgments, and treat human labels as a calibration signal rather than the sole training source.
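Cohen's kappa compares two annotators who graded the same items, correcting raw agreement for the agreement you'd expect by chance. A sketch of the computation plus a filter; the 0.6 cutoff and the idea of scoring each annotator against a reference set are assumptions for illustration.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators who graded the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    p_observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_chance = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_chance == 1.0:
        return 1.0  # both used one identical label everywhere; kappa is undefined, treated as agreement here
    return (p_observed - p_chance) / (1 - p_chance)


def reliable_annotators(kappa_vs_reference, min_kappa=0.6):
    """Keep annotators whose agreement with a reference set clears a (hypothetical) bar."""
    return {name for name, k in kappa_vs_reference.items() if k >= min_kappa}
```

This is the unweighted version, which treats Perfect-vs-Excellent the same as Perfect-vs-Bad. For ordinal 5-point grades, a weighted kappa that penalizes near-misses less is often a better fit.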

The label generation pipeline looks like this:

```text
Raw impression events
  → Filter: remove bot traffic, duplicate sessions
  → Attribution join: attach purchase events within 7-day window
  → IPS weighting: assign propensity weights by position
  → Grade assignment: purchase=3, long-dwell click=2, click=1, skip=0
  → Human judgment merge: override grades for judged (query, doc) pairs
  → Output: (query_id, doc_id, grade, ips_weight, timestamp)
```

Data Processing and Splits

Cleaning first. Bot traffic is a significant contamination source. Filter sessions where click rate is suspiciously high (>80% of results clicked), inter-click time is implausibly fast (<200ms), or the user agent matches known crawler patterns. Deduplicate at the session level, not just the event level: a user refreshing the page generates duplicate impressions that should count once.

Outlier removal matters too. Queries with zero impressions in your training window, documents with no interaction history, and sessions with more than 500 events (almost certainly bots or crawlers) should be excluded.
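The session-level rules above translate into a simple predicate. A sketch, assuming a hypothetical per-session summary dict; the user-agent regex is illustrative only, and a maintained crawler list is the usual source in practice.

```python
import re

CRAWLER_UA = re.compile(r"bot|crawl|spider", re.IGNORECASE)  # illustrative pattern only


def is_suspicious_session(session, max_click_rate=0.8, min_click_gap_ms=200, max_events=500):
    """session: dict with user_agent, num_events, impressions, clicks,
    and click_times_ms (click timestamps in milliseconds, sorted)."""
    if CRAWLER_UA.search(session.get("user_agent") or ""):
        return True
    if session["num_events"] > max_events:
        return True
    if session["impressions"] and session["clicks"] / session["impressions"] > max_click_rate:
        return True
    times = session["click_times_ms"]
    return any(later - earlier < min_click_gap_ms for earlier, later in zip(times, times[1:]))
```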

Handling imbalance. Purchases are rare relative to clicks, which are rare relative to impressions. For a typical e-commerce search, you might see a 0.3% purchase rate and a 5% CTR. If you train on raw data, the model sees overwhelmingly negative examples and learns to predict "not relevant" for everything. Downsample negatives to a 1:10 or 1:20 positive-to-negative ratio, but keep the IPS weights intact, and upweight the retained negatives by the inverse of the sampling rate so the weighted gradient signal still reflects the true data distribution.
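A sketch of negative downsampling with the weight correction, assuming each example is a dict with a `label` and an optional existing `weight` (such as an IPS weight). The keep rate is derived from the target ratio.

```python
import random


def keep_rate_for_ratio(n_pos, n_neg, negs_per_pos=10):
    """Probability of keeping each negative to land near negs_per_pos negatives per positive."""
    if n_neg == 0:
        return 1.0
    return min(1.0, negs_per_pos * n_pos / n_neg)


def downsample_negatives(examples, neg_keep_rate, seed=0):
    """Keep every positive; keep each negative with probability neg_keep_rate and
    multiply its weight by 1 / neg_keep_rate so weighted totals match the original data."""
    rng = random.Random(seed)
    kept = []
    for ex in examples:
        if ex["label"] > 0:
            kept.append(ex)
        elif rng.random() < neg_keep_rate:
            kept.append({**ex, "weight": ex.get("weight", 1.0) / neg_keep_rate})
    return kept
```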

Time-based splits are non-negotiable. Don't use random splits. If you randomly shuffle your data and split 80/10/10, your training set contains examples from the same days as your validation set and from later ones, so future information leaks into training through shared query patterns, item popularity trends, or seasonal effects. Your offline metrics will look great, and your online metrics will disappoint you.

Instead, split by time:

```text
Training set:   Days 1–60  (oldest data)
Validation set: Days 61–75 (tune hyperparameters here)
Test set:       Days 76–90 (evaluate final model, touch once)
```

Within each split, stratify by query frequency bucket. Head queries (top 1% by volume) behave differently from torso and tail queries. If your test set happens to contain more head queries than your training set, your NDCG numbers are inflated. Stratified sampling ensures each split has the same distribution of head/torso/tail queries.
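A minimal sketch of the day-based assignment, assuming each example carries the calendar day of its impression and a hypothetical `start` date for the 90-day window:

```python
from datetime import date, timedelta


def time_split(event_day, start, train_days=60, val_days=15, test_days=15):
    """Assign an example to train/val/test by its day number (day 1 = start)."""
    day = (event_day - start).days + 1
    total = train_days + val_days + test_days
    if not 1 <= day <= total:
        raise ValueError(f"{event_day} is outside the {total}-day window")
    if day <= train_days:
        return "train"
    if day <= train_days + val_days:
        return "val"
    return "test"


start = date(2024, 1, 1)  # illustrative window start
counts = {}
for offset in range(90):
    split = time_split(start + timedelta(days=offset), start)
    counts[split] = counts.get(split, 0) + 1
print(counts)  # {'train': 60, 'val': 15, 'test': 15}
```

Every example from a given day lands in exactly one split, and every validation and test day comes after every training day.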

Data versioning. Every training dataset should be a versioned artifact. Tag it with the date range it covers, the feature schema version used during the join, the label generation logic version, and the filtering rules applied. When a model underperforms in production, you need to be able to reproduce the exact training data it saw. Tools like DVC or Delta Lake handle this well; at minimum, store a manifest file alongside each dataset snapshot.
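If you're not using DVC or Delta Lake, the manifest can be as simple as a JSON file written next to the snapshot. A sketch for a single-file snapshot; the field names and version strings are hypothetical.

```python
import hashlib
import json
from pathlib import Path


def write_manifest(data_path, manifest_path, *, start_date, end_date,
                   feature_schema_version, label_logic_version, filters):
    """Record what a training snapshot contains so it can be reproduced later."""
    h = hashlib.sha256()
    with open(data_path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):  # hash in 1 MiB chunks
            h.update(chunk)
    manifest = {
        "data_path": str(data_path),
        "sha256": h.hexdigest(),
        "date_range": [start_date, end_date],
        "feature_schema_version": feature_schema_version,
        "label_logic_version": label_logic_version,
        "filters": filters,
    }
    Path(manifest_path).write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return manifest
```

The content hash is what lets you prove later that a model was trained on exactly this file, not just on a file with the same name.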

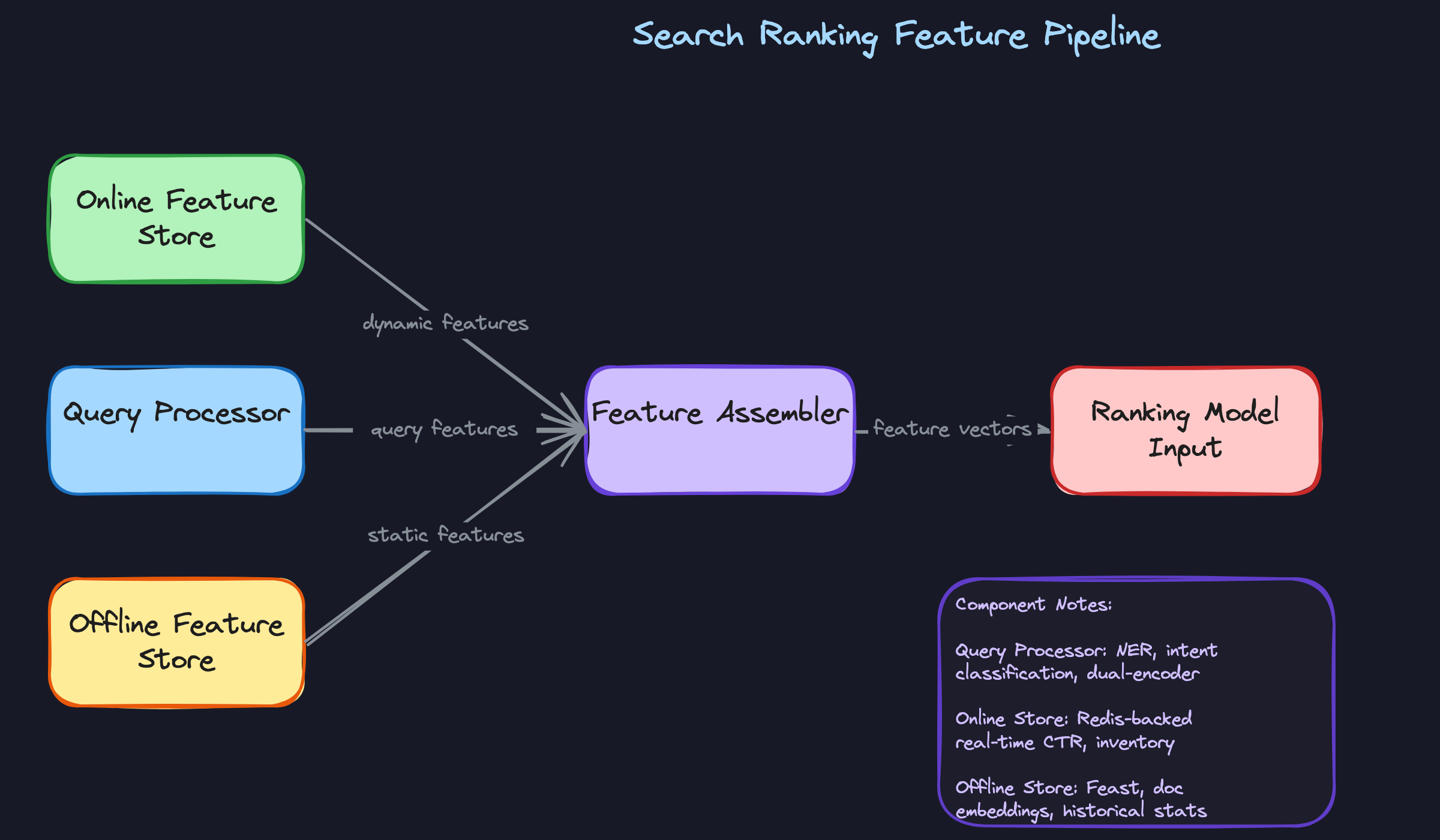

Feature Engineering

Most candidates sketch a ranking model and wave their hands at "features." The interviewer wants specifics: what signals, how computed, and how you guarantee the same computation runs in training and production.

Feature Categories

Search ranking features fall into four buckets. The real skill is knowing which ones to prioritize given your latency budget.

Query features describe what the user is asking for, independent of any specific result.

| Feature | Type | How Computed |
|---|---|---|
| Query length (token count) | Integer | Tokenizer at request time |
| Query type (navigational / informational / transactional) | Categorical | Intent classifier (fine-tuned BERT) |
| Historical CTR for this exact query | Float | 30-day rolling aggregate in feature store |
| Query embedding | Float[768] | Dual-encoder at request time |
| Is rare query (tail query flag) | Boolean | Query frequency lookup against index |

Tail queries are a trap. Your model has seen "nike air max size 10" ten thousand times, but "handmade wool felted mushroom ornament" maybe twice. Features like historical CTR become useless for tail queries, and you need to fall back to semantic similarity.

Document features describe the item being ranked, computed ahead of time.

| Feature | Type | How Computed |
|---|---|---|
| Title TF-IDF score against query | Float | Computed at retrieval time using inverted index |
| Item age (days since listing) | Integer | now() - created_at, refreshed daily |
| 7-day view count | Integer | Batch aggregate from event logs |
| Seller reputation score | Float | Weighted average of ratings, updated nightly |
| Document embedding | Float[768] | Dual-encoder, precomputed and stored in FAISS |

Personalization features are where you win or lose against competitors. A user who has bought three pairs of hiking boots should see different results for "waterproof shoes" than someone who just bought a wedding dress.

| Feature | Type | How Computed |
|---|---|---|
| User's 30-day category purchase distribution | Float[] | Spark job, daily batch |
| User embedding (from purchase history) | Float[128] | Matrix factorization, weekly retrain |
| User's historical CTR on this seller | Float | Rolling aggregate per (user, seller) pair |
| Account age (days) | Integer | Static, from user table |
| Device type | Categorical | Extracted from request header at serving time |

Cold-start users with no history get a fallback: population-level priors for their demographic segment, or simply zeros for personalization features with the model trained to handle that case. Don't skip this in the interview. Interviewers will probe it.

Privacy matters here too. In regulated markets, you may not be able to use demographic signals directly. The safe answer is to use behavioral proxies (purchase history, session behavior) rather than raw demographics, and to anonymize user identifiers before they hit the feature store.

Cross features capture the relationship between the query and a specific document. These are the highest-signal features for ranking.

| Feature | Type | How Computed |
|---|---|---|
| BM25 score (query, document title) | Float | Computed at retrieval time |
| Cosine similarity (query embedding, doc embedding) | Float | Dot product at serving time |
| Query-category affinity (user's historical CTR in doc's category for this query type) | Float | Join of user history + doc category at serving time |
| Co-click score (how often this doc was clicked after this query) | Float | Precomputed offline, stored in Redis |

The co-click score is worth calling out. It's a direct signal that users who searched for this exact query found this document relevant. It's also extremely sparse: you'll have it for head queries and popular items, and nothing for the long tail. Your model needs to degrade gracefully when it's missing.
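For reference, a compact sketch of the two text-match cross features. It uses Okapi BM25 with the common defaults $k_1 = 1.2$, $b = 0.75$ and a Lucene-style IDF; the exact variant your search engine uses may differ. This version counts each distinct query term once.

```python
import math
from collections import Counter


def bm25(query_terms, doc_terms, doc_freq, num_docs, avg_doc_len, k1=1.2, b=0.75):
    """Okapi BM25 for one (query, document) pair.
    doc_freq: term -> number of documents containing the term."""
    tf = Counter(doc_terms)
    doc_len = len(doc_terms)
    score = 0.0
    for term in set(query_terms):
        if term not in tf:
            continue
        n = doc_freq.get(term, 0)
        idf = math.log(1 + (num_docs - n + 0.5) / (n + 0.5))  # Lucene-style IDF, always positive
        freq = tf[term]
        score += idf * freq * (k1 + 1) / (freq + k1 * (1 - b + b * doc_len / avg_doc_len))
    return score


def cosine_similarity(u, v):
    """Equals the plain dot product when both embeddings are already L2-normalized."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0
```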

Feature Computation

The computation strategy depends entirely on how often the feature changes and how much latency you can afford.

Batch features are computed on a schedule, typically daily or hourly, using Spark or SQL jobs against your data warehouse. The pipeline looks like this: raw event logs land in S3 or HDFS, a Spark job aggregates them into per-entity statistics, and the results are written to both the offline feature store (Parquet/Hive for training) and pushed to the online store (Redis) for serving.

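```python
# Example: Spark job computing 30-day item view counts
from pyspark.sql import SparkSession
from pyspark.sql import functions as F

spark = SparkSession.builder.appName("item-view-counts").getOrCreate()

# Raw event logs (path is illustrative); expects event_type, event_time, item_id columns
events_df = spark.read.parquet("s3://event-logs/search-events/")

item_views = (
    events_df
    .filter(F.col("event_type") == "view")
    .filter(F.col("event_time") >= F.date_sub(F.current_date(), 30))
    .groupBy("item_id")
    .agg(F.count("*").alias("view_count_30d"))
)

# Write to offline store for training
item_views.write.parquet("s3://feature-store/item_views/date=2024-01-15/")


def write_to_redis(rows):
    # Runs once per partition on the executors: one connection and one pipeline per partition
    import redis  # redis-py client
    client = redis.Redis(host="feature-redis", port=6379)  # host is illustrative
    pipe = client.pipeline()
    for row in rows:
        pipe.hset(f"item:{row.item_id}:features", "view_count_30d", row.view_count_30d)
    pipe.execute()


# Write to online store for serving
item_views.foreachPartition(write_to_redis)
```

Near-real-time features need a streaming pipeline. The classic example: items a user has viewed in the last 10 minutes should influence what ranks highly for their next query. You can't wait for a daily batch job for that.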

The pipeline is Kafka (event stream) → Flink (windowed aggregation) → Redis (online store). Flink maintains a sliding window per user, counting views and clicks within the window, and writes the updated count to Redis each time a window fires (every minute, with a 1-minute slide).

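```python
# Flink job: 10-minute sliding window (1-minute slide) of user item views.
# Schematic: kafka_source, watermark_strategy, ViewCountAggregator and RedisSink
# stand in for your own connector, watermark, aggregate and sink implementations.
from pyflink.common.time import Time
from pyflink.datastream import StreamExecutionEnvironment
from pyflink.datastream.window import SlidingEventTimeWindows

env = StreamExecutionEnvironment.get_execution_environment()

user_views = (
    env
    .from_source(kafka_source, watermark_strategy, "search-events")  # event-time windows need watermarks
    .filter(lambda e: e.event_type == "view")  # assumes events are already deserialized
    .key_by(lambda e: e.user_id)
    .window(SlidingEventTimeWindows.of(Time.minutes(10), Time.minutes(1)))
    .aggregate(ViewCountAggregator())
)

user_views.add_sink(RedisSink(key_pattern="user:{user_id}:recent_views"))
env.execute("user-recent-views")
```

Real-time features are computed at request time and can't be precomputed. The query embedding is the main one: you run the dual-encoder on the incoming query text and get a 768-dimensional vector in under 5ms on GPU. BM25 scores against retrieved candidates are also computed on the fly.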

Keep real-time computation minimal. Every millisecond you spend here comes out of your latency budget.

Feature Store Architecture

The feature store has two layers that serve different masters.

The online store (Redis or DynamoDB) is optimized for single-key lookups at p99 under 5ms. You store the latest value of each feature keyed by entity ID. For a search ranking system, that means keys like item:{item_id}:features and user:{user_id}:features, each containing a serialized feature vector. Redis works well here because the entire working set fits in memory for most corpus sizes.

The offline store (Parquet files on S3, queryable via Hive or Spark) is optimized for bulk reads during training. You store historical snapshots of feature values, partitioned by date, so you can reconstruct the exact feature values that existed at any point in the past.

The hard problem is keeping these two stores consistent. Training-serving skew kills models in production, and it's subtle. Here's a concrete failure mode: your Spark job computes a 7-day rolling average using date_trunc('day', event_time) in UTC. Your online Flink job computes the same window using the server's local timezone. The features look identical in unit tests and diverge silently in production.

The standard defense is point-in-time correct joins. When you construct your training dataset, you join each (query, document) impression with the feature values that existed at the exact timestamp of that impression, not the current values. Feast handles this natively. Without it, you're training on features from the future relative to the label, which is data leakage.
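The idea behind a point-in-time join can be shown without Feast: for each impression, take the most recent feature value written at or before the impression timestamp, never the current one. A sketch over a hypothetical in-memory history; at scale the same logic runs as an as-of join (for example pandas `merge_asof` or its Spark equivalent).

```python
from bisect import bisect_right
from datetime import datetime


def point_in_time_lookup(feature_history, entity_id, as_of):
    """feature_history: entity_id -> list of (effective_time, value), sorted by time.
    Returns the latest value written at or before `as_of`, or None if none existed yet."""
    history = feature_history.get(entity_id, [])
    times = [t for t, _ in history]
    idx = bisect_right(times, as_of)
    return history[idx - 1][1] if idx else None


history = {"item_123": [(datetime(2024, 1, 15, 10, 0), 5),
                        (datetime(2024, 1, 15, 14, 0), 9),
                        (datetime(2024, 1, 15, 15, 0), 12)]}
# An impression at 14:23 must see 9; using the latest value (12) would leak the future.
print(point_in_time_lookup(history, "item_123", datetime(2024, 1, 15, 14, 23)))
```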

```python
# Feast point-in-time correct feature retrieval for training
import pandas as pd
from feast import FeatureStore

store = FeatureStore(repo_path=".")

# entity_df: one row per impression, carrying the impression timestamp
entity_df = pd.DataFrame({
    "item_id": ["item_123", "item_456"],
    "user_id": ["user_789", "user_012"],
    "event_timestamp": pd.to_datetime(["2024-01-15 14:23:00", "2024-01-15 14:25:00"], utc=True),
})

# Feast looks up feature values AS OF each event_timestamp
training_df = store.get_historical_features(
    entity_df=entity_df,
    features=[
        "item_stats:view_count_30d",
        "item_stats:seller_score",
        "user_stats:category_affinity",
    ],
).to_df()
```

One more thing on cold start. New items have no view counts, no CTR, no co-click history. You have two options: use content-based features only (title embeddings, category, price tier) and accept lower ranking quality, or inject new items into a small exploration bucket to gather signal quickly. Most production systems do both: content features as the baseline, exploration traffic to bootstrap behavioral signals, then promote to the main ranking pool once you have enough data.

Model Selection & Training

Start with the simplest thing that could work. A logistic regression model over BM25 score, query length, and item popularity will beat a pure keyword ranker on day one, and it gives you a baseline you can actually explain to stakeholders. More importantly, it exposes which features matter before you spend weeks training a neural model.

Once you've validated the feature set, you'll outgrow logistic regression fast. The production architecture is a two-stage pipeline, and the reason is pure math: you can't run a 100ms cross-encoder over 500 million documents for every query.

Model Architecture

Stage 1: Dual-Encoder Retrieval

The dual-encoder encodes queries and documents independently into the same embedding space. At query time, you encode the query (under 5ms on GPU), then run approximate nearest neighbor search over pre-computed document embeddings stored in FAISS. You get your top-1000 candidates in roughly 20ms.

The key constraint here: because query and document are encoded separately, the model can't capture fine-grained interactions between them. A query for "running shoes for wide feet" and a document titled "athletic footwear" might land close in embedding space even though the document never mentions width. That's the price of speed.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F
from transformers import AutoModel


class DualEncoder(nn.Module):
    def __init__(self, encoder_model: str = "bert-base-uncased", out_dim: int = 256):
        super().__init__()
        self.query_encoder = AutoModel.from_pretrained(encoder_model)
        self.doc_encoder = AutoModel.from_pretrained(encoder_model)
        hidden = self.query_encoder.config.hidden_size  # 768 for BERT-base
        self.projection = nn.Linear(hidden, out_dim)  # project both towers into a shared 256-d space

    def encode_query(self, input_ids, attention_mask):
        cls = self.query_encoder(input_ids=input_ids, attention_mask=attention_mask).last_hidden_state[:, 0]
        return F.normalize(self.projection(cls), dim=-1)  # unit length, so dot product = cosine

    def encode_doc(self, input_ids, attention_mask):
        cls = self.doc_encoder(input_ids=input_ids, attention_mask=attention_mask).last_hidden_state[:, 0]
        return F.normalize(self.projection(cls), dim=-1)

    def forward(self, query_inputs, doc_inputs):
        # Pass only the fields the encoders use (tokenizer output may also contain token_type_ids)
        q_emb = self.encode_query(query_inputs["input_ids"], query_inputs["attention_mask"])
        d_emb = self.encode_doc(doc_inputs["input_ids"], doc_inputs["attention_mask"])
        return torch.matmul(q_emb, d_emb.T)  # [num_queries, num_docs] similarity matrix
```

Loss function: in-batch softmax (NT-Xent). For each query in the batch, the paired document is the positive and all other documents are negatives. This scales well and avoids the need for expensive hard-negative mining pipelines, though you should add hard negatives from ANN misses once you have a working baseline.
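A framework-free sketch of the in-batch softmax objective, given a batch similarity matrix like the one `forward` returns (matching pairs on the diagonal). The temperature value is an assumption, and this covers only the query-to-document direction; symmetric variants also add the document-to-query term. In PyTorch the same computation is usually written as `F.cross_entropy(sim / temperature, torch.arange(batch_size))`.

```python
import math


def in_batch_softmax_loss(sim, temperature=0.05):
    """sim[i][j] = similarity between query i and document j in one batch.
    Document i is query i's positive; every other document in the batch is a negative.
    Returns the mean cross-entropy over queries."""
    total = 0.0
    for i, row in enumerate(sim):
        logits = [s / temperature for s in row]
        m = max(logits)  # subtract the max for numerical stability
        log_z = m + math.log(sum(math.exp(x - m) for x in logits))
        total += log_z - logits[i]  # -log softmax probability of the positive
    return total / len(sim)
```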

Stage 2: LightGBM Re-Ranker

The re-ranker sees only the 1000 candidates from Stage 1. It has time to be expensive. LightGBM with a LambdaRank objective is the standard choice here, and it's worth knowing why gradient boosted trees still beat neural models in this slot at many companies.

Trees handle heterogeneous tabular features naturally. Your feature vector at this stage mixes BM25 scores, embedding cosine similarity, real-time CTR, seller reputation, inventory count, and user purchase history. These features have wildly different scales and distributions. A neural model needs careful normalization and embedding layers for each feature type. LightGBM just works.

```python
import lightgbm as lgb

# X_*: feature matrices; y_*: graded labels (0-4); *_group_sizes: rows per query,
# in the same order as the rows (all assumed prepared upstream)
params = {
    "objective": "lambdarank",
    "metric": "ndcg",
    "ndcg_eval_at": [5, 10],
    "learning_rate": 0.05,
    "num_leaves": 127,
    "min_data_in_leaf": 50,
    "feature_fraction": 0.8,
    "label_gain": [0, 1, 3, 7, 15],  # gain per relevance grade 0-4 (2^grade - 1)
}

train_data = lgb.Dataset(
    X_train,
    label=y_train,
    group=query_group_sizes,  # critical: tells LightGBM which rows belong to the same query
)
val_data = lgb.Dataset(X_val, label=y_val, group=val_group_sizes, reference=train_data)

model = lgb.train(params, train_data, num_boost_round=500,
                  valid_sets=[val_data], callbacks=[lgb.early_stopping(50)])
```

The group parameter is the most common mistake candidates make when describing LambdaMART. The loss function operates over a ranked list per query, so the model needs to know which training examples share a query. LightGBM refuses to train a lambdarank model with no query information at all; the silent failure is group sizes that sum correctly but don't line up with the row order, so the model compares documents from unrelated queries.
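Computing the group sizes is simple once rows are sorted by query, and a guard against non-contiguous rows catches the silent failure. A sketch, assuming a plain list of query IDs in row order:

```python
from itertools import groupby


def query_group_sizes(query_ids):
    """Number of consecutive rows per query, in row order, as LightGBM's `group` expects.
    Raises if a query's rows are split up, since the counts would then mix queries."""
    sizes, seen = [], set()
    for qid, rows in groupby(query_ids):
        if qid in seen:
            raise ValueError(f"rows for query {qid!r} are not contiguous; sort by query first")
        seen.add(qid)
        sizes.append(sum(1 for _ in rows))
    return sizes
```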

Model Input/Output Contract

```json
{
  "ranking_request": {
    "query_id": "string",
    "query_embedding": "float[256]",
    "candidates": [
      {
        "doc_id": "string",
        "features": {
          "bm25_score": "float",
          "cosine_similarity": "float",
          "item_ctr_7d": "float",
          "seller_rating": "float",
          "inventory_count": "int",
          "user_item_affinity": "float",
          "query_category_match": "float"
        }
      }
    ]
  },
  "ranking_response": {
    "ranked_doc_ids": "string[]",
    "scores": "float[]"
  }
}
```

The LTR Paradigm Choice

You'll get asked about pointwise, pairwise, and listwise. Here's the short version.

Pointwise treats each (query, document) pair as an independent regression or classification problem. It's easy to implement but ignores the fact that ranking is inherently comparative. A document's relevance score only matters relative to the other documents in the result list.

Pairwise methods like RankNet and LambdaRank learn from pairs of documents: "document A should rank above document B for this query." This captures relative ordering but still doesn't optimize the full list structure.

Listwise methods like LambdaMART and softmax cross-entropy over the full result list optimize the entire ranked list at once. LambdaMART is the practical winner because it combines LambdaRank's lambda gradients with gradient-boosted trees, which directly approximates NDCG optimization. NDCG itself is non-differentiable (it depends on discrete rank positions), but LambdaRank computes gradient weights that are proportional to the change in NDCG from swapping any pair of documents. LightGBM implements this natively.
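The NDCG weighting at the heart of LambdaRank is easy to compute. Swapping two documents changes only their two positions, so the DCG change reduces to (gain difference) × (discount difference). A sketch of that quantity, using the same exponential gain and $\log_2$ discount as the `label_gain` setting above:

```python
import math


def dcg(grades, k):
    """DCG@k with gain 2^g - 1 and discount 1 / log2(position + 2), positions 0-based."""
    return sum((2 ** g - 1) / math.log2(pos + 2) for pos, g in enumerate(grades[:k]))


def delta_ndcg_swap(grades, i, j, k=None):
    """|change in NDCG@k| if the documents at 0-based positions i and j swap places.
    LambdaRank scales the pairwise gradient for (i, j) by this value."""
    k = len(grades) if k is None else k
    ideal = dcg(sorted(grades, reverse=True), k)
    if ideal == 0:
        return 0.0

    def disc(pos):
        return 1 / math.log2(pos + 2) if pos < k else 0.0  # positions past k contribute nothing

    gain_i, gain_j = 2 ** grades[i] - 1, 2 ** grades[j] - 1
    return abs((gain_i - gain_j) * (disc(i) - disc(j))) / ideal
```

Pairs of equally relevant documents get zero weight, and pairs that straddle the top positions get the most, which is how the gradient ends up focusing on the head of the list.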

Training Pipeline

Infrastructure

The dual-encoder needs GPU training. Fine-tuning BERT-base on 50M (query, document) pairs takes roughly 8-16 A100 hours depending on batch size. Use distributed data-parallel training across 4-8 GPUs and gather embeddings across devices so each query is scored against every document in the global batch. In-batch negatives get better with larger batches, so this directly improves model quality. Plain gradient accumulation doesn't help here: each micro-batch still computes its softmax over only its own documents.

LightGBM trains on CPU and is embarrassingly fast by comparison. 30 days of interaction data with 200 features typically trains in under an hour on a 32-core machine.

Training Schedule

Retrain the LightGBM ranker daily on a rolling 30-day window. User behavior shifts with seasons, trends, and catalog changes, and a model trained on 90-day-old data will miss current patterns. The dual-encoder is more expensive to retrain, so do full retraining weekly and incremental fine-tuning (on hard negatives from the current week's ANN misses) daily.

The 30-day window is a starting point, not a rule. Run ablations: compare NDCG@10 for models trained on 7, 14, 30, and 60 days. For e-commerce, you'll often find that 14-21 days is the sweet spot because older data reflects stale catalog state.

Data Windowing Tradeoffs

Shorter windows mean fresher signal but noisier estimates for tail queries. A query that fires 10 times per day has 300 training examples in a 30-day window. Cut that to 7 days and you have 70, which may not be enough to learn stable feature weights for that query type. Weight your training examples by query frequency bucket to compensate.

Hyperparameter Tuning

For LightGBM, use Optuna with a TPE sampler over num_leaves, learning_rate, min_data_in_leaf, and feature_fraction. Run 50-100 trials with early stopping on the validation NDCG@10. The most impactful parameters are usually num_leaves (controls model complexity) and min_data_in_leaf (controls overfitting on rare queries).

For the dual-encoder, learning rate warmup and the batch size matter most. Start with a linear warmup over 10% of training steps, then cosine decay. Batch sizes of 512-2048 work well; larger batches give more in-batch negatives, which improves contrastive learning quality.
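The warmup-then-cosine schedule can be written as a plain function of the step count. Below is a minimal sketch. The 10% warmup fraction comes from the text, while the peak learning rate of 2e-5 in the usage line is only an illustrative assumption.

```python
import math

def warmup_cosine_lr(step, total_steps, peak_lr, warmup_frac=0.1, min_lr=0.0):
    """Linear warmup to peak_lr over the first warmup_frac of steps, then cosine decay to min_lr."""
    warmup_steps = max(1, int(total_steps * warmup_frac))
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1 + math.cos(math.pi * min(progress, 1.0)))

# Illustrative: 1,000 steps with a hypothetical peak learning rate of 2e-5.
schedule = [warmup_cosine_lr(s, 1000, 2e-5) for s in range(1000)]
print(max(schedule))
```

In PyTorch, the same shape could be expressed as a multiplier function passed to torch.optim.lr_scheduler.LambdaLR.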

Offline Evaluation

Metrics

NDCG@10 is your primary metric. It rewards putting the most relevant results at the top and penalizes burying them. MRR (Mean Reciprocal Rank) is useful as a secondary metric when you care specifically about the rank of the first relevant result, which matters for navigational queries.
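For reference, here is a minimal sketch of both metrics. It assumes graded labels for NDCG, using the common 2^rel - 1 gain, and binary relevance for MRR. Queries with no relevant result contribute 0 to MRR, which is one common convention.

```python
import math

def ndcg_at_k(relevances, k=10):
    """relevances: graded labels in the order the model ranked the documents."""
    def dcg(rels):
        return sum((2 ** r - 1) / math.log2(i + 2) for i, r in enumerate(rels[:k]))
    ideal = dcg(sorted(relevances, reverse=True))
    return dcg(relevances) / ideal if ideal > 0 else 0.0

def mean_reciprocal_rank(ranked_lists):
    """ranked_lists: per-query lists of booleans (True = relevant), in ranked order."""
    total = 0.0
    for flags in ranked_lists:
        # Reciprocal rank of the first relevant result; 0 if none was retrieved.
        total += next((1.0 / (i + 1) for i, rel in enumerate(flags) if rel), 0.0)
    return total / len(ranked_lists)

print(ndcg_at_k([3, 2, 0, 1]))
print(mean_reciprocal_rank([[False, True, False], [True], [False, False]]))
```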

Neither of these tells you anything about business impact directly. The connection is empirical: you establish the correlation between NDCG@10 improvements and CTR/revenue lifts through historical A/B tests, then use that as your deployment threshold. A 0.5% NDCG@10 improvement might correspond to a 0.2% CTR lift based on your historical data. Know that number.

Evaluation Methodology

Don't use random cross-validation. Use time-based splits: train on weeks 1-8, validate on week 9, test on week 10. Random splits leak future information into training and will make your offline metrics look better than they are in production.
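A time-based split is just a pair of cutoff dates. The sketch below reproduces the 8/1/1-week split from the text on synthetic daily logs. The start date and the example payloads are hypothetical.

```python
from datetime import date, timedelta

def time_based_split(examples, train_end, valid_end):
    """Split (event_date, example) pairs: train < train_end <= valid < valid_end <= test."""
    train, valid, test = [], [], []
    for event_date, example in examples:
        if event_date < train_end:
            train.append(example)
        elif event_date < valid_end:
            valid.append(example)
        else:
            test.append(example)
    return train, valid, test

start = date(2024, 1, 1)  # hypothetical first day of week 1
logs = [(start + timedelta(days=d), f"impression-{d}") for d in range(70)]
train, valid, test = time_based_split(logs, start + timedelta(weeks=8), start + timedelta(weeks=9))
print(len(train), len(valid), len(test))
```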

Also evaluate separately on query frequency buckets: head queries (top 1% by volume), torso queries (1-20%), and tail queries (bottom 80%). Head queries have abundant training signal and your model will look great on them. Tail queries are where it falls apart, and tail queries are often where users have the highest intent.
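Here is a minimal sketch of the bucketing and the per-bucket report. It assumes percentiles are taken over distinct queries ranked by volume, which is how the 1% / 20% cut-offs above read. Another common convention cuts by cumulative share of traffic instead.

```python
def frequency_buckets(query_counts, head_frac=0.01, torso_frac=0.20):
    """Assign each query to head / torso / tail by its rank in query volume."""
    ranked = sorted(query_counts, key=query_counts.get, reverse=True)
    n = len(ranked)
    buckets = {}
    for rank, query in enumerate(ranked):
        pct = rank / n  # fraction of distinct queries ranked above this one
        if pct < head_frac:
            buckets[query] = "head"
        elif pct < torso_frac:
            buckets[query] = "torso"
        else:
            buckets[query] = "tail"
    return buckets

def per_bucket_mean(metric_by_query, buckets):
    """Average a per-query metric (e.g. NDCG@10) within each bucket."""
    sums, counts = {}, {}
    for query, value in metric_by_query.items():
        b = buckets[query]
        sums[b] = sums.get(b, 0.0) + value
        counts[b] = counts.get(b, 0) + 1
    return {b: sums[b] / counts[b] for b in sums}
```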

Baseline Comparisons

Always compare against at least three baselines:

- BM25 only (keyword matching, no ML)

- Previous production model

- Popularity-based ranking (just sort by item CTR)

The popularity baseline is humbling. On head queries, a model that just returns the most-clicked items beats a lot of ML models. If your LightGBM ranker doesn't clearly beat popularity ranking, your features aren't capturing query-document relevance.

Error Analysis

Run your model on a held-out set of 500 queries, manually review the top-10 results for the worst-performing queries (lowest NDCG@10), and categorize the failure modes.

Common patterns you'll find: the model over-ranks popular items for specific queries (popularity bias bleeding through), it fails on long-tail queries with sparse training signal, and it struggles with queries that have ambiguous intent (is "apple" the fruit or the company?). Each failure category points to a specific fix: query-intent features to counter popularity bias, query expansion for sparse tail queries, and an upstream intent classifier for ambiguous queries.

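Calibration matters too, especially if ranking scores feed downstream systems like bid adjustments or notification triggers. LambdaMART scores are only meaningful relative to other candidates for the same query, not as probabilities. Map them to click probabilities on held-out logged data, then check that a calibrated score of 0.8 actually corresponds to roughly an 80% click rate. Isotonic regression or Platt scaling on the raw LightGBM outputs is usually sufficient.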
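Isotonic regression is simple enough to sketch directly with the pool-adjacent-violators algorithm. The sketch assumes you have logged (raw score, clicked) pairs. It returns a step function, whereas scikit-learn's IsotonicRegression, the usual off-the-shelf choice, interpolates between points.

```python
def fit_isotonic(scores, clicks):
    """Pool-adjacent-violators: monotone non-decreasing map from raw score to click rate.
    Returns (upper score bound, calibrated probability) steps sorted by score."""
    pairs = sorted(zip(scores, clicks))
    blocks = []  # each block: [max_score, sum_of_labels, count]
    for s, y in pairs:
        blocks.append([s, float(y), 1])
        # Merge backwards while the block means violate monotonicity.
        while len(blocks) > 1 and blocks[-2][1] / blocks[-2][2] > blocks[-1][1] / blocks[-1][2]:
            last = blocks.pop()
            blocks[-1][0] = last[0]
            blocks[-1][1] += last[1]
            blocks[-1][2] += last[2]
    return [(b[0], b[1] / b[2]) for b in blocks]

def calibrate(score, steps):
    """Look up the calibrated probability for a raw score (step function)."""
    for upper, prob in steps:
        if score <= upper:
            return prob
    return steps[-1][1]
```

A quick reliability check is then to bucket calibrated predictions and compare each bucket's mean prediction with its observed click rate.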

Inference & Serving

Search ranking is one of the most latency-sensitive ML systems you'll encounter. A recommendation model can afford 200ms. A search result cannot. Users notice ranking latency directly, and every millisecond you spend in the ML pipeline is a millisecond stolen from the user's experience. Your interviewer will expect you to know your latency budget cold.

Serving Architecture

Online vs. batch inference. This is an easy call for search: you need online inference, full stop. The query is unknown until the user types it, so there's no way to precompute ranked results for arbitrary query-document pairs. The one partial exception is pre-computing document embeddings offline, which is exactly what the dual-encoder architecture does. You shift as much work as possible to batch (document embeddings, static features) and keep the online path lean.

The end-to-end serving path flows through five sequential stages, each with a hard latency budget:

```
          User Query
               │
               ▼
┌─────────────────────────────┐
│ Search Gateway              │  request routing, auth, experiment assignment
└──────────────┬──────────────┘
               │
               ▼
┌─────────────────────────────┐
│ Query Encoder (Triton)      │  GPU │ < 5ms
│ BERT dual-encoder           │      │ dynamic batching enabled
└──────────────┬──────────────┘
               │ query embedding (768-dim vector)
               ▼
┌─────────────────────────────┐
│ ANN Retrieval (FAISS)       │  CPU │ < 20ms
│ IVF-PQ index                │      │ top-1000 candidates
└──────────────┬──────────────┘
               │ candidate doc IDs
               ▼
┌─────────────────────────────┐
│ Feature Fetch (Redis)       │  < 10ms
│ online feature store        │  pipeline call for all 1000 candidates
└──────────────┬──────────────┘
               │ feature vectors
               ▼
┌─────────────────────────────┐
│ Re-ranker (LightGBM)        │  CPU │ < 30ms
│ gRPC microservice           │      │ batch predict on all candidates
└──────────────┬──────────────┘
               │ ranked scores
               ▼
┌─────────────────────────────┐
│ Post-processing             │  < 10ms
│ result blending, rules      │  diversity filters, business boosts
└──────────────┬──────────────┘
               │
               ▼
        Ranked Results
   (p99 < 100ms end-to-end)
```

Here's the latency breakdown you should walk through in your interview:

| Stage | Component | Target Latency |
|---|---|---|
| Query encoding | Dual-encoder on GPU | < 5ms |
| ANN retrieval | FAISS lookup | < 20ms |
| Feature fetch | Redis (online feature store) | < 10ms |
| Re-ranking | LightGBM inference | < 30ms |
| Post-processing | Result blending, business rules | < 10ms |
| Total p99 | End-to-end | < 100ms |

The query encoder runs on GPU because BERT-based encoders are painfully slow on CPU. Serve the dual-encoder with Triton Inference Server, which gives you dynamic batching out of the box. If multiple queries arrive within a few milliseconds of each other, Triton batches them into a single forward pass, dramatically improving GPU utilization without adding meaningful latency.
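The dynamic batching policy can be illustrated with a small simulation. A batch closes either when it is full or when its oldest request has waited the maximum queue delay. That delay bounds the latency you trade for GPU utilization. This is a simplified model, not Triton's scheduler. In Triton the corresponding knobs live in the model's dynamic_batching config (for example max_queue_delay_microseconds and preferred_batch_size), and the values below are assumptions.

```python
def form_batches(arrival_ms, max_batch_size=8, max_queue_delay_ms=2.0):
    """Group request arrival times (ms) into batches: a batch closes when it is full
    or when its oldest request has waited longer than max_queue_delay_ms.
    (The deadline is only checked when the next request arrives, which is enough
    to show which requests end up sharing a forward pass.)"""
    batches, current = [], []
    for t in sorted(arrival_ms):
        if current and (len(current) == max_batch_size or t - current[0] > max_queue_delay_ms):
            batches.append(current)
            current = []
        current.append(t)
    if current:
        batches.append(current)
    return batches

print(form_batches([0.0, 0.5, 1.0, 5.0, 5.2, 9.0]))
```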

LightGBM is a different story. Tree-based models are CPU-native and actually run faster on CPU than GPU for batch sizes under a few thousand. Serve the ranker as a lightweight gRPC microservice. The binary is small, startup is fast, and you can scale horizontally without GPU quota.

The document index update pipeline is a parallel concern. New listings, articles, or products need to be embedded and inserted into FAISS before users can find them. There are two approaches: near-real-time incremental inserts (low latency, but FAISS's IVF index degrades in recall as you add vectors without rebuilding) and full daily rebuilds (consistent recall, but new documents are invisible for up to 24 hours).

Most production systems do both, and the pipeline looks like this:

```
New / Updated Documents
             │
             ▼
┌──────────────────────────┐
│ Document Ingestion       │  Kafka topic: document_updates
│ (streaming pipeline)     │
└────────────┬─────────────┘
             │
      ┌──────┴───────────────┐
      │                      │
      ▼                      ▼
┌───────────┐  ┌──────────────────────────┐
│Incremental│  │ Batch Embedding Job      │
│ path      │  │ (nightly, full corpus)   │
│           │  │ Spark + GPU cluster      │
│ embed +   │  └─────────────┬────────────┘
│ insert to │                │ full embedding set
│ FAISS     │                ▼
└─────┬─────┘      ┌───────────────────┐
      │            │ FAISS Rebuild     │  IVF-PQ index from scratch,
      │            │                   │  restores recall
      │            └─────────┬─────────┘
      │                      │
      └──────────┬───────────┘
                 │
                 ▼
       ┌──────────────────┐
       │ Index Health     │  monitors embedding coverage,
       │ Monitor          │  recall degradation vs. ground truth
       └─────────┬────────┘
                 │ drift > threshold?
                 ▼
       trigger early rebuild
```

Incremental inserts handle freshness for new documents, and a nightly full rebuild restores index quality. The index health monitor tracks embedding coverage and recall degradation, triggering an early rebuild if drift exceeds a threshold.
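One way the index health monitor could measure recall degradation is to compare ANN results against exact brute-force neighbors for a sample of queries. In FAISS, a flat index such as IndexFlatIP gives exact results. The sketch assumes those ID lists are already computed. The baseline recall and the allowed drop are hypothetical thresholds.

```python
def recall_at_k(ann_ids, exact_ids, k):
    """Fraction of the exact top-k neighbours that the ANN index also returned in its top-k."""
    exact_top = set(exact_ids[:k])
    return len(exact_top & set(ann_ids[:k])) / len(exact_top)

def needs_early_rebuild(ann_results, exact_results, k=100, baseline_recall=0.95, max_drop=0.02):
    """Average recall@k over sampled queries; flag a rebuild if it falls too far below baseline."""
    recalls = [recall_at_k(a, e, k) for a, e in zip(ann_results, exact_results)]
    mean_recall = sum(recalls) / len(recalls)
    return mean_recall, baseline_recall - mean_recall > max_drop

print(needs_early_rebuild([[1, 2, 3, 9]], [[1, 2, 3, 4]], k=4))
```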

Optimization

Quantization is your first lever for the dual-encoder. Converting from FP32 to INT8 stores each value in 8 bits instead of 32, which cuts memory footprint and bandwidth roughly 4x and typically costs less than 1% in retrieval recall. Run post-training quantization with a calibration dataset before you deploy. For LightGBM, the model is already compact, so quantization isn't usually necessary.
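The core arithmetic of post-training quantization fits in a few lines. This sketch uses symmetric per-tensor quantization with the scale taken from the maximum absolute value, which is an assumption for illustration. Production toolchains typically derive scales from the calibration dataset and often use one scale per channel.

```python
def quantize_int8(values):
    """Symmetric per-tensor INT8 quantization: the scale maps max |value| to 127."""
    max_abs = max(abs(v) for v in values) or 1.0  # avoid a zero scale for all-zero input
    scale = max_abs / 127.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale

def dequantize(q, scale):
    return [x * scale for x in q]

weights = [0.42, -1.27, 0.003, 0.9]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q, max(abs(a - b) for a, b in zip(weights, restored)))
```

The rounding error per value is at most half the scale. This is why a single outlier weight, which inflates the scale, hurts accuracy more than the bit width itself.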

Knowledge distillation matters when you want to replace a large cross-encoder with something faster. Train a smaller student model to mimic the score distribution of the teacher cross-encoder on your training corpus. You get most of the accuracy at a fraction of the inference cost. This is how you justify using a lighter model in production while still benefiting from a heavy model's signal during training.
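The text doesn't specify a distillation loss. One common listwise choice is the KL divergence between the teacher's and the student's softmax distributions over a query's candidates, softened by a temperature. Here is a minimal sketch of that formulation; the temperature value is an assumption. Pointwise MSE on the teacher's scores is another option.

```python
import math

def softmax(xs, temperature=1.0):
    m = max(xs)
    exps = [math.exp((x - m) / temperature) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def listwise_distillation_loss(teacher_scores, student_scores, temperature=2.0):
    """KL(teacher || student) between softmax distributions over one query's candidates."""
    p = softmax(teacher_scores, temperature)
    q = softmax(student_scores, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Cross-encoder (teacher) vs. candidate student scores for the same three documents.
print(listwise_distillation_loss([3.0, 1.0, 0.0], [2.5, 1.2, 0.1]))
```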

Caching is underrated. Query embeddings for high-frequency queries (think "red dress", "iPhone case") can be cached in Redis with a short TTL. If 20% of your queries are repeats of head queries still within the TTL, you've eliminated up to 20% of your query-encoder GPU load with a few lines of code.
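The cache logic itself is small. The sketch below uses an in-process dictionary as a stand-in for Redis; with Redis you would store entries using SET with an EX expiry. It also normalizes the query text so trivial variants share an entry. The normalization rule and the 300-second TTL are illustrative assumptions.

```python
import time

class TTLEmbeddingCache:
    """In-process stand-in for a Redis cache of query embeddings with a per-entry TTL."""

    def __init__(self, encode_fn, ttl_seconds=300.0, clock=time.monotonic):
        self.encode_fn = encode_fn  # the expensive GPU encoder call in production
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}  # normalized query -> (expiry_time, embedding)
        self.hits = self.misses = 0

    def get(self, query):
        key = " ".join(query.lower().split())  # "Red  Dress" and "red dress" share an entry
        now = self.clock()
        entry = self._store.get(key)
        if entry and entry[0] > now:
            self.hits += 1
            return entry[1]
        self.misses += 1
        embedding = self.encode_fn(key)
        self._store[key] = (now + self.ttl, embedding)
        return embedding
```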

For batching, the key insight is that ANN retrieval and feature fetching are both embarrassingly parallelizable. Fetch features for all 1000 candidates in a single Redis pipeline call, not 1000 sequential gets. LightGBM can score all 1000 candidates in one predict call. Never loop.

Fallback strategies need to be designed before you need them. If the ranking service is down or exceeds its latency SLA, you should fall back to BM25 scores from the retrieval stage. BM25 results are worse than your ML ranker, but they're far better than a 500 error. Build the fallback into the serving gateway with a configurable timeout threshold, not as an afterthought.
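The fallback pattern is a deadline around the ranker call plus a cheap default ordering. Here is a minimal Python sketch using concurrent.futures. The 50 ms timeout is a hypothetical value, and `ml_rank_fn` stands in for the gRPC call to the ranking service. A production gateway would implement this in its own RPC layer.

```python
import concurrent.futures
import time

_executor = concurrent.futures.ThreadPoolExecutor(max_workers=4)

def rank_with_fallback(candidates, bm25_scores, ml_rank_fn, timeout_s=0.05):
    """Try the ML ranker within a deadline; on timeout or error, fall back to BM25 order."""
    future = _executor.submit(ml_rank_fn, candidates)
    try:
        return future.result(timeout=timeout_s), "ml"
    except Exception:  # covers the timeout as well as ranker failures
        future.cancel()  # best effort; an already-running call is not interrupted
        fallback = sorted(candidates, key=lambda doc: bm25_scores[doc], reverse=True)
        return fallback, "bm25_fallback"

# Usage: a ranker that blows its latency budget triggers the fallback.
def slow_ranker(candidates):
    time.sleep(0.2)  # simulate an overloaded ranking service
    return list(candidates)

print(rank_with_fallback(["a", "b", "c"], {"a": 1.0, "b": 3.0, "c": 2.0}, slow_ranker))
```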

Online Evaluation & A/B Testing

Offline NDCG improvements don't always translate to online wins. The only way to know if your new model is better is to run it on real traffic.

Experiment assignment happens at the search gateway. Each incoming request is assigned to a treatment or control bucket using a deterministic hash of the user ID (or session ID for logged-out users). Consistency matters: the same user should see the same model for the duration of the experiment to avoid within-user contamination.
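Deterministic assignment only needs a stable hash. The sketch below salts the user or session ID with the experiment name, so different experiments get independent splits. It then maps the hash to one of 100 slots. The experiment name and the 50% split are hypothetical.

```python
import hashlib

def assign_bucket(unit_id, experiment_name, treatment_pct=50):
    """Deterministically map a user/session ID to 'treatment' or 'control'.
    Salting with the experiment name keeps assignments independent across experiments."""
    digest = hashlib.sha256(f"{experiment_name}:{unit_id}".encode("utf-8")).hexdigest()
    slot = int(digest[:8], 16) % 100  # stable slot in [0, 100)
    return "treatment" if slot < treatment_pct else "control"

print(assign_bucket("user-42", "ranker_v7"))
```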

The metrics you track split into two categories. Primary metrics are the ones you're trying to move: CTR on top-3 results, purchase rate, revenue per query. Guardrail metrics are the ones you can't let degrade: null result rate, p99 latency, and session abandonment rate. If a new model improves CTR but tanks latency, it doesn't ship.

For statistical methodology, use a two-sided t-test on your primary metric with a pre-registered minimum detectable effect. Run the experiment for at least one full week to capture day-of-week variation in search behavior. Don't peek at results and stop early when you see significance; that's p-hacking.
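Pre-registering the minimum detectable effect lets you compute the required sample size up front. The sketch below uses the standard normal-approximation formula for a two-sided test on a rate metric such as top-3 CTR. At these sample sizes, the normal approximation and a t-test agree closely. The 30% baseline and 1% relative MDE are illustrative. A metric like revenue per query would need its empirical variance instead of the Bernoulli variance used here.

```python
from statistics import NormalDist
import math

def samples_per_arm(baseline_rate, relative_mde, alpha=0.05, power=0.8):
    """Approximate per-arm sample size for a two-sided test on a rate metric."""
    delta = baseline_rate * relative_mde             # absolute minimum detectable effect
    variance = baseline_rate * (1 - baseline_rate)   # Bernoulli variance, assumed equal in both arms
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_beta) ** 2 * variance / delta ** 2)

print(samples_per_arm(baseline_rate=0.30, relative_mde=0.01))
```

Halving the MDE roughly quadruples the required traffic, which is usually what determines how long the experiment must run.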

Interleaving is worth knowing for ranking interviews specifically. Instead of splitting users into two groups, interleaving mixes results from both models in a single result list and measures which model's results get more clicks. It's dramatically more statistically efficient than A/B testing because every query is a comparison. The downside is that it only measures relative preference, not absolute metric impact. Use interleaving for fast iteration during development, and A/B testing for final launch decisions.
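Team-draft interleaving is one well-known way to build the mixed list. Whichever model has contributed fewer results picks next, with a coin flip on ties, and adds its highest-ranked document not yet shown. Clicks are then credited to the team that contributed the clicked document. Here is a minimal sketch; document IDs are hypothetical.

```python
import random

def team_draft_interleave(ranking_a, ranking_b, k, rng):
    """The team with fewer picks (coin flip on ties) adds its best unshown document."""
    shown, team_of = [], {}
    picks = {"A": 0, "B": 0}
    rankings = {"A": ranking_a, "B": ranking_b}
    while len(shown) < k:
        # Next unshown document for each team, or None if that list is exhausted.
        nxt = {t: next((d for d in r if d not in team_of), None) for t, r in rankings.items()}
        if nxt["A"] is None and nxt["B"] is None:
            break
        if picks["A"] != picks["B"]:
            team = "A" if picks["A"] < picks["B"] else "B"
        else:
            team = "A" if rng.random() < 0.5 else "B"
        if nxt[team] is None:
            team = "B" if team == "A" else "A"  # that team's list is exhausted
        doc = nxt[team]
        team_of[doc] = team
        picks[team] += 1
        shown.append(doc)
    return shown, team_of

def credit_clicks(clicked_docs, team_of):
    """Count clicks per team; the team with more clicks wins this impression."""
    wins = {"A": 0, "B": 0}
    for doc in clicked_docs:
        if doc in team_of:
            wins[team_of[doc]] += 1
    return wins

shown, team_of = team_draft_interleave(["d1", "d2", "d3"], ["d3", "d4", "d1"], k=4, rng=random.Random(7))
print(shown, credit_clicks(["d3"], team_of))
```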

Deployment Pipeline

Before any model touches production traffic, it has to pass a validation gate. This means running the new model against a held-out query set and verifying that NDCG@10 is within a defined threshold of the champion model. It also means checking that the feature schema hasn't changed in a breaking way and that p99 inference latency on a load test meets the SLA. Automate all of this. Manual checks get skipped under deadline pressure.
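The three checks in that gate can be expressed as one function that CI runs before promotion. In this sketch, the dictionary keys, the allowed NDCG drop, and the 30 ms SLA (taken from the re-ranker's latency budget) are assumptions. A "breaking" schema change is interpreted as the candidate requiring a feature that serving doesn't provide with the same type.

```python
import math

def p99(latencies_ms):
    """Nearest-rank 99th percentile."""
    ordered = sorted(latencies_ms)
    return ordered[math.ceil(0.99 * len(ordered)) - 1]

def validation_gate(candidate, champion, max_ndcg_drop=0.002, latency_sla_ms=30.0):
    """Return (passed, reasons). Keys and thresholds are illustrative assumptions."""
    reasons = []
    if candidate["ndcg@10"] < champion["ndcg@10"] - max_ndcg_drop:
        reasons.append("NDCG@10 below champion threshold")
    served = champion["feature_schema"]  # feature name -> dtype currently served online
    broken = [f for f, dtype in candidate["feature_schema"].items() if served.get(f) != dtype]
    if broken:
        reasons.append(f"features not served with expected type: {sorted(broken)}")
    if p99(candidate["load_test_latencies_ms"]) > latency_sla_ms:
        reasons.append("p99 latency over SLA")
    return not reasons, reasons
```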

Shadow scoring is the first production step. The new model runs alongside the champion, scores every query, and logs its results, but users see the champion's results. This lets you verify that the model behaves correctly on real traffic distributions, including tail queries and edge cases that don't appear in your offline eval set. Run shadow mode for at least 24 hours before touching live traffic.

After shadow mode passes, promote to a canary: 1-5% of live traffic. Monitor your guardrail metrics in real time. If null result rate spikes or p99 latency degrades, the deployment pipeline should automatically halt the rollout and page the on-call engineer. Don't rely on humans to catch this manually at 2am.

Gradual ramp-up follows a schedule: 1% → 5% → 10% → 25% → 50% → 100%, with a hold period at each stage. The hold period gives you time to accumulate enough statistical power to detect regressions in lower-frequency metrics like purchase rate.

Rollback triggers should be explicit and automated. Define thresholds before the experiment starts: if revenue per query drops more than 2% relative to control, or if p99 latency exceeds 120ms, roll back immediately. Every model version in the registry carries its training data snapshot hash, feature schema version, and evaluation scores, so rollback means swapping a config pointer, not re-deploying code.

```yaml
# Example rollback trigger config
rollback_policy:
  guardrail_metrics:
    - metric: revenue_per_query
      max_relative_degradation: 0.02   # 2% drop triggers rollback
    - metric: p99_latency_ms
      max_absolute_value: 120
    - metric: null_result_rate
      max_absolute_degradation: 0.005  # 0.5pp increase triggers rollback
  evaluation_window_minutes: 30
  auto_rollback: true
  notify_oncall: true
```

The engineers who design this kind of explicit, automated deployment contract are the ones who ship models reliably. That's what staff-level thinking looks like in this domain.
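To show how such a policy might be evaluated, here is a minimal sketch. It hard-codes a dictionary mirroring the guardrail section of the YAML above, so no YAML parser is needed. It compares treatment against control metrics aggregated over the evaluation window. The rule semantics (relative drop, absolute ceiling, absolute increase) are read from the key names and are an interpretation, not a fixed standard.

```python
ROLLBACK_POLICY = {  # mirrors the guardrail_metrics section of the YAML policy above
    "guardrail_metrics": [
        {"metric": "revenue_per_query", "max_relative_degradation": 0.02},
        {"metric": "p99_latency_ms", "max_absolute_value": 120},
        {"metric": "null_result_rate", "max_absolute_degradation": 0.005},
    ],
}

def rollback_violations(policy, treatment, control):
    """Return human-readable guardrail violations; an empty list means keep ramping."""
    violations = []
    for rule in policy["guardrail_metrics"]:
        name = rule["metric"]
        t, c = treatment[name], control[name]
        if "max_relative_degradation" in rule and c > 0 and (c - t) / c > rule["max_relative_degradation"]:
            violations.append(f"{name} dropped {(c - t) / c:.1%} vs control")
        if "max_absolute_value" in rule and t > rule["max_absolute_value"]:
            violations.append(f"{name}={t} exceeds {rule['max_absolute_value']}")
        if "max_absolute_degradation" in rule and t - c > rule["max_absolute_degradation"]:
            violations.append(f"{name} rose by {t - c:.4f} vs control")
    return violations
```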

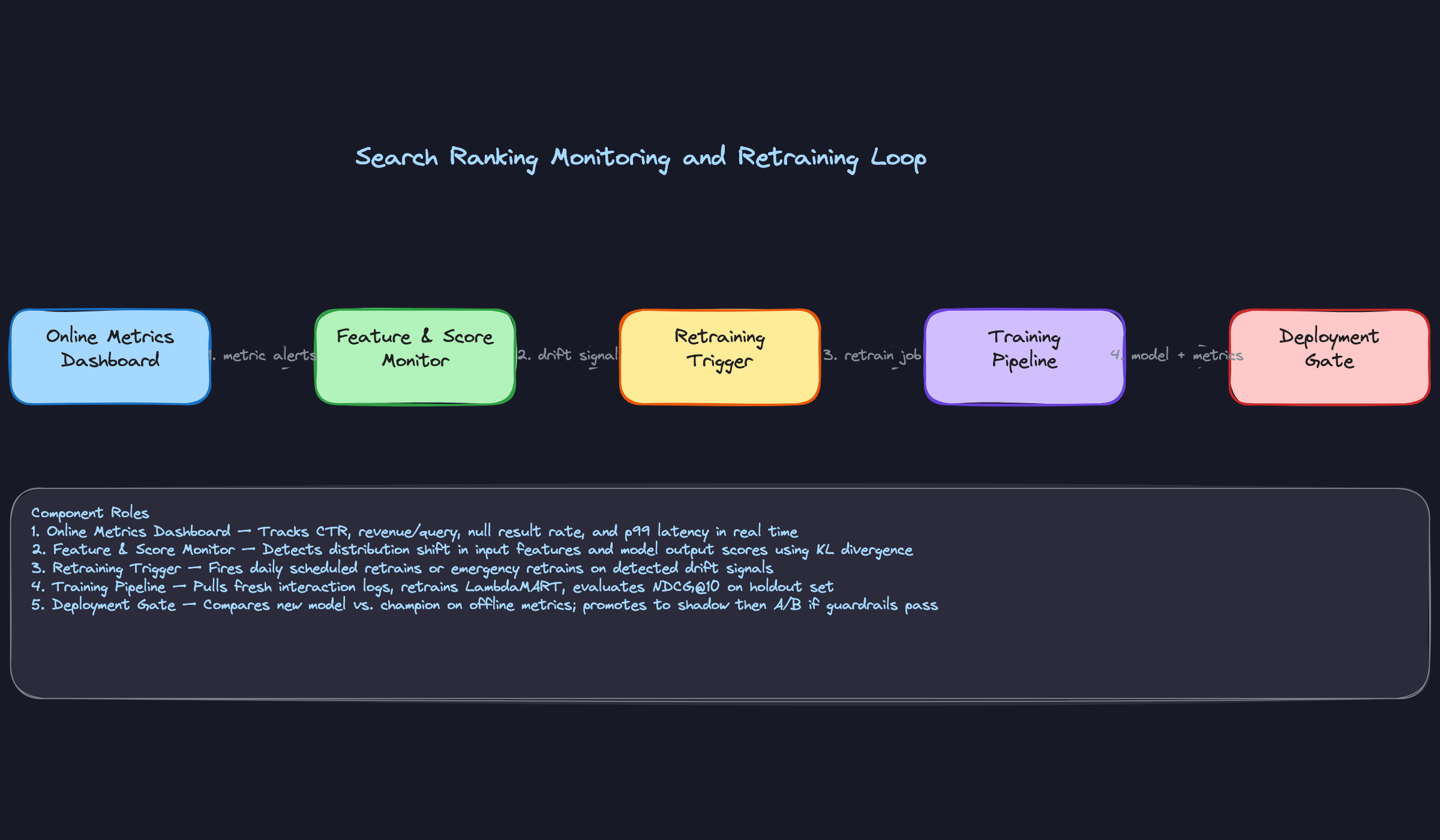

Monitoring & Iteration

A search ranking system that works well at launch will quietly degrade over time. Queries shift, catalogs grow, user behavior evolves. The teams that stay ahead of this don't just react to degradation; they build systems that detect it early and close the loop automatically.

Production Monitoring

Data Monitoring

Start with your inputs, not your outputs. If the features feeding the ranking model drift, the model's predictions will too, and you'll waste hours debugging the wrong layer.

Track three things at the feature level. First, schema violations: did a new field get dropped from the feature store? Did a document embedding dimension change after a model update? These break silently. Second, missing feature rates: if 5% of requests suddenly have null seller scores, something upstream changed. Third, distribution shift: monitor the mean and variance of key features like query length, BM25 score, and real-time CTR. Use KL divergence or population stability index (PSI) to flag when a feature's distribution drifts beyond a threshold from your training baseline.
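PSI is straightforward to compute per feature. The sketch below bins both samples using quantile edges from the training baseline. A small epsilon avoids log(0) for empty bins. Ten bins and the epsilon value are common but arbitrary choices. A widely used rule of thumb reads PSI above 0.2 as a significant shift.

```python
import math

def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a training-baseline sample and a production sample of one feature.
    Bin edges come from baseline quantiles; eps avoids log(0) for empty bins."""
    ordered = sorted(expected)
    edges = [ordered[int(len(ordered) * i / bins)] for i in range(1, bins)]

    def shares(sample):
        counts = [0] * bins
        for x in sample:
            counts[sum(x > e for e in edges)] += 1  # index of the bin x falls into
        return [max(c / len(sample), eps) for c in counts]

    e_share, a_share = shares(expected), shares(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(e_share, a_share))
```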

A sudden shift in the ranking score distribution is often a canary for upstream feature breakage, and it shows up before users start complaining.

Model Monitoring

Your offline metrics (NDCG@10, MRR) tell you how the model performs on a static holdout set. Your online metrics (CTR, revenue per query, session abandonment rate, null result rate) tell you how users are actually responding. These can diverge in ways that are genuinely informative.

If NDCG improves but CTR drops, your model may be optimizing for relevance signals that don't match what users actually want. That's a training data problem, not a model architecture problem. If both drop together, suspect feature drift or a data pipeline failure.

Monitor the prediction score distribution in production. A model that was outputting scores uniformly between 0.3 and 0.9 suddenly compressing to 0.5 to 0.6 is telling you something changed, even if average CTR looks fine on the dashboard.

System Monitoring

Track p50, p95, and p99 latency at each stage of the pipeline separately: query encoding, ANN retrieval, feature fetch, and ranker inference. When your p99 blows up, you need to know which stage is responsible without guessing.
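Per-stage percentiles make the culprit obvious. This sketch computes p50/p95/p99 for each stage with the standard library. It flags any stage whose p99 exceeds its target from the latency table above. The stage names are hypothetical labels for the pipeline stages.

```python
from statistics import quantiles

STAGE_BUDGET_MS = {  # p99 targets from the latency table above
    "query_encoding": 5, "ann_retrieval": 20, "feature_fetch": 10,
    "reranking": 30, "post_processing": 10,
}

def stage_report(samples_ms):
    """samples_ms: stage name -> list of per-request latencies in ms.
    Returns stage -> (p50, p95, p99, over_budget)."""
    report = {}
    for stage, values in samples_ms.items():
        cuts = quantiles(values, n=100)  # 99 cut points: cuts[k - 1] is the k-th percentile
        p50, p95, p99 = cuts[49], cuts[94], cuts[98]
        report[stage] = (p50, p95, p99, p99 > STAGE_BUDGET_MS[stage])
    return report
```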

GPU utilization for the dual-encoder and throughput for the LightGBM ranker should both be tracked. An underutilized GPU often means batching is misconfigured. An overloaded ranker means your candidate set is too large or your serving replicas need scaling.

Alerting Thresholds and Escalation

Not every anomaly needs a 3am page. Tier your alerts. PSI above 0.2 on a key feature fires a Slack notification and creates a ticket. A 15% drop in revenue per query fires an immediate page. Null result rate crossing 5% pages the on-call and triggers an automatic rollback evaluation.

The escalation path matters as much as the threshold. Wire your monitoring directly to your deployment gate so that a confirmed online metric regression can trigger a rollback without waiting for a human to notice.

Feedback Loops

How User Feedback Flows Back

Every click, purchase, skip, and explicit report is a training signal. The pipeline that collects these events needs to preserve the context that makes them useful: what position the item appeared at, what the query was, what other items were shown, and what the model's score was at the time.

Without that context, you can't correct for position bias. An item clicked at position 1 is not the same signal as an item clicked at position 8. Log the full impression context, not just the click event.
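With position logged, a common correction is inverse propensity weighting. Each click is weighted by one over the estimated probability that the user examined that position. The sketch below assumes a simple (1 / position)^eta examination model and a weight cap of 20. Both eta and the cap are illustrative assumptions; eta would normally be estimated, for example from randomized swap experiments.

```python
def ipw_click_weights(click_positions, eta=1.0, max_weight=20.0):
    """Inverse-propensity weights for clicked results under an assumed
    (1 / position) ** eta examination model."""
    weights = []
    for pos in click_positions:  # 1-based display positions of clicked results
        propensity = (1.0 / pos) ** eta  # assumed probability the user examined this slot
        weights.append(min(1.0 / propensity, max_weight))  # clip to limit variance
    return weights

print(ipw_click_weights([1, 3, 8]))
```

A click at position 8 now counts several times more than a click at position 1, reflecting how few users ever looked that far down.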

Feedback Delay Handling

Clicks arrive within seconds. Purchases might arrive hours later. Returns and refunds arrive days later. If you retrain on data from the last 24 hours, you're training on a label distribution that's systematically incomplete.

Two approaches work well in practice. First, use a delayed label join: hold training examples in a staging buffer for 48 to 72 hours before finalizing their labels, so purchases have time to arrive. Second, use a multi-signal label that weights immediate signals (clicks) lower than high-confidence delayed signals (purchases), with the weights tuned to reflect each signal's reliability. Don't just use whatever labels are available at retrain time without thinking about what's missing.
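Here is a minimal sketch combining both approaches. Impressions are held for 72 hours before their label is finalized, and the label is the strongest weighted signal observed. The event names and weights (click 1, add-to-cart 3, purchase 10) are hypothetical. Summing signals or learning the weights would be equally plausible choices.

```python
from datetime import datetime, timedelta

LABEL_WEIGHTS = {"click": 1.0, "add_to_cart": 3.0, "purchase": 10.0}  # hypothetical weights

def finalize_labels(impressions, events, now, hold=timedelta(hours=72)):
    """Emit (impression_id, label) only for impressions older than the hold window,
    so delayed purchases have had time to arrive. Label = strongest signal observed."""
    by_impression = {}
    for imp_id, event_type in events:
        by_impression.setdefault(imp_id, []).append(LABEL_WEIGHTS.get(event_type, 0.0))
    ready, pending = [], []
    for imp_id, shown_at in impressions:
        if now - shown_at < hold:
            pending.append(imp_id)  # keep buffering; labels may still be incomplete
        else:
            ready.append((imp_id, max(by_impression.get(imp_id, [0.0]))))
    return ready, pending

now = datetime(2024, 3, 10, 12, 0)
print(finalize_labels([("i1", now - timedelta(hours=80))], [("i1", "purchase")], now))
```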

Closing the Loop

The full cycle looks like this: monitoring alert fires on a feature distribution shift, on-call engineer diagnoses the upstream pipeline issue (say, a seller score column went stale in Redis), fix is deployed, emergency retrain is triggered with clean data, new model passes offline evaluation, gets deployed in shadow mode, then promoted to 5% traffic with guardrails active.

That cycle should be documented, tested, and practiced. The teams that handle incidents well have run the loop enough times that each step is automatic.

Continuous Improvement

Retraining Strategy

Daily incremental retraining on a rolling 30-day window handles normal drift. Weekly full retrains rebuild the model from scratch to catch any accumulated bias from the incremental updates. Emergency retraining fires when KL divergence on a key feature exceeds your threshold, or when an online metric drops more than X% in a rolling 1-hour window.

The trigger logic matters. Scheduled retrains are predictable but slow to respond. Drift-triggered retrains are fast but can fire on noise. Use both: scheduled as the baseline, drift-triggered as the safety net.
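The combined trigger can be sketched as a small decision function. A drift-triggered retrain fires only when the drift score (PSI or KL) has exceeded its threshold for several consecutive monitoring windows, which filters out one-off noisy spikes. Otherwise the schedule decides. The daily cadence, the 0.2 threshold, and the three-window persistence rule are illustrative assumptions.

```python
from datetime import datetime, timedelta

def retrain_decision(now, last_run, drift_history, schedule_every=timedelta(days=1),
                     drift_threshold=0.2, persistence=3):
    """Scheduled retrain as the baseline; drift-triggered retrain as the safety net.
    drift_history: recent drift scores per monitoring window, newest last."""
    recent = drift_history[-persistence:]
    if len(recent) == persistence and all(score > drift_threshold for score in recent):
        return "emergency_retrain"
    if now - last_run >= schedule_every:
        return "scheduled_retrain"
    return "no_retrain"

last = datetime(2024, 3, 10, 0, 0)
print(retrain_decision(last + timedelta(hours=2), last, [0.05, 0.3, 0.1]))
```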

Prioritizing Improvements

When you're deciding what to work on next, the answer is almost never "train a bigger model." Feature quality improvements almost always outperform architecture changes at the same engineering cost. A new high-signal feature (like real-time inventory status or user's recent purchase category) will typically move NDCG more than switching from LightGBM to a neural ranker.

Prioritize in this order: fix data quality issues first, add high-signal features second, improve the model architecture third. This is counterintuitive for ML engineers who want to work on models, but it's what the data usually supports.

Human evaluation pipelines (using Scale AI or similar) are underrated here. Behavioral signals are noisy and biased toward head queries. Periodic human relevance judgments on tail queries give you a ground truth signal that automated metrics can't provide, and they're often the first place you discover that your model is failing on an entire query category.

Long-Term Evolution

Early in a system's life, the ranking model is the bottleneck. As the system matures, the bottleneck shifts. The catalog grows and retrieval quality becomes the constraint. User behavior diversifies and personalization becomes the differentiator. Query volume grows and latency budget gets tighter.

At scale, you'll also face multi-objective ranking: balancing relevance against diversity, freshness, and monetization. A single NDCG score doesn't capture all of that. Staff-level candidates should be ready to discuss how you represent multiple objectives in the ranking model (constrained optimization, scalarized reward functions, Pareto-optimal candidate selection) and how you decide which objective wins when they conflict.
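Two of those representations are easy to sketch. A Pareto filter keeps only candidates that no other candidate beats on every objective. A scalarized score then picks among them using weights that encode the business decision about which objective wins. The objective names, values, and weights below are hypothetical.

```python
def pareto_front(candidates, objectives):
    """Candidates not dominated on every objective (higher is better)."""
    def dominates(a, b):
        return all(a[o] >= b[o] for o in objectives) and any(a[o] > b[o] for o in objectives)
    return [c for c in candidates if not any(dominates(o, c) for o in candidates if o is not c)]

def scalarized_score(candidate, weights):
    """Weighted sum of objective scores; the weights are the business trade-off."""
    return sum(w * candidate[o] for o, w in weights.items())

items = [
    {"id": "a", "relevance": 0.9, "revenue": 0.1},
    {"id": "b", "relevance": 0.6, "revenue": 0.8},
    {"id": "c", "relevance": 0.5, "revenue": 0.5},
]
front = pareto_front(items, ["relevance", "revenue"])
print([c["id"] for c in front])
print(max(front, key=lambda c: scalarized_score(c, {"relevance": 0.8, "revenue": 0.2}))["id"])
```

Shifting the weights toward revenue changes which item wins, which is exactly the conflict the interviewer wants you to reason about explicitly.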

The data flywheel is the long-term moat. More queries generate more training signal, which improves the model, which improves user satisfaction, which generates more queries. The system design decisions you make early, especially around logging completeness and label quality, determine how fast that flywheel spins.

What is Expected at Each Level

Interviewers calibrate their expectations based on your level. The same answer that gets a mid-level hire can get a senior candidate rejected. Here's where the bar sits.

Mid-Level (L4/L5)

- Correctly describe the two-stage architecture: a fast retrieval stage producing ~1000 candidates, followed by a heavier re-ranking model. You don't need to invent this; you need to explain why one model can't do both.

- Name appropriate algorithms for each stage. Dual-encoder for retrieval, LightGBM or LambdaMART for ranking. Bonus points if you can articulate why a gradient boosted tree is often preferred over a neural ranker at the re-ranking stage (latency, interpretability, feature engineering flexibility).

- Identify the three feature categories: query features, document features, and interaction features. Give at least two concrete examples from each bucket.

- Explain why click data is biased. "Users click what's shown at the top" is the starting point. If you can name position bias and gesture toward propensity correction, you're in good shape.

Senior (L5/L6)

- Go deep on training-serving skew. It's not enough to say it exists. Walk through a specific example: a feature like "item popularity in the last 7 days" computed differently offline versus at serving time, and what that does to model performance in production.

- Articulate the dual-encoder vs. cross-encoder tradeoff without being prompted. Dual-encoders are fast but score query and document independently. Cross-encoders are more accurate but require the full pair at inference time, which makes them infeasible for retrieval over 500M documents.

- Distinguish offline and online metrics, and explain why they can diverge. NDCG@10 improving while CTR drops usually means your training data distribution no longer matches production traffic.

- Describe A/B testing methodology with guardrail metrics. Shadow deployment first, then a 1-5% traffic slice, with explicit guardrails on null result rate and revenue per query before full rollout. Interviewers want to see that you've shipped models before, not just trained them.

Staff+ (L7+)

- Drive toward multi-objective ranking tradeoffs. Relevance, diversity, freshness, and monetization all pull in different directions. A staff candidate proposes a principled way to balance them (weighted objective, constrained optimization, post-ranking blending) and explains the business reasoning behind the choice.

- Identify the feedback loop problem and propose a concrete mitigation. Counterfactual logging, randomized swap experiments, epsilon-greedy exploration at the result level. The interviewer wants to see that you understand the model shapes its own future training data.

- Discuss how the architecture evolves under 10x load or 10x corpus growth. Does FAISS still work at 5 billion documents? What changes in the feature store design? Where does latency blow up first? Staff candidates think in terms of the next two years, not just today's design.

- Name cross-team dependencies proactively. The data flywheel depends on the logging infrastructure team. Index freshness depends on the catalog ingestion team. A staff-level answer acknowledges that the hardest problems in search ranking are organizational, not algorithmic.