Feature Stores

Most ML teams build the same feature twice. One version lives in the training pipeline, a batch job that crunches historical data over weeks of transactions. The other version lives in the serving path, recomputed at request time in whatever way the model server engineer thought made sense. They're supposed to be the same feature. They rarely are.

A feature store is the infrastructure that prevents this. It's a centralized system where you define a feature once, compute it consistently, and serve it to both your training jobs and your production models. Think of it as the contract between your data engineering team and your model serving layer.

Here's the concrete version. Your fraud detection model needs to know how many transactions a user made in the last 10 minutes. At inference time, that number needs to come back in under 10ms, because the payment is waiting. At training time, you need that same number computed correctly for every transaction in three years of historical data. A feature store handles both: a low-latency online store (usually Redis or DynamoDB) answers the real-time lookup, while an offline store (S3, BigQuery, Hive) holds the historical snapshots your training jobs read from. Same feature definition, two storage backends, one source of truth.

At Uber, Airbnb, LinkedIn, and Meta, this is table-stakes infrastructure. Candidates who can only talk about model architecture, and go blank when the conversation turns to how features actually get to the model, are leaving a very visible gap. That gap is what this lesson closes.

How It Works

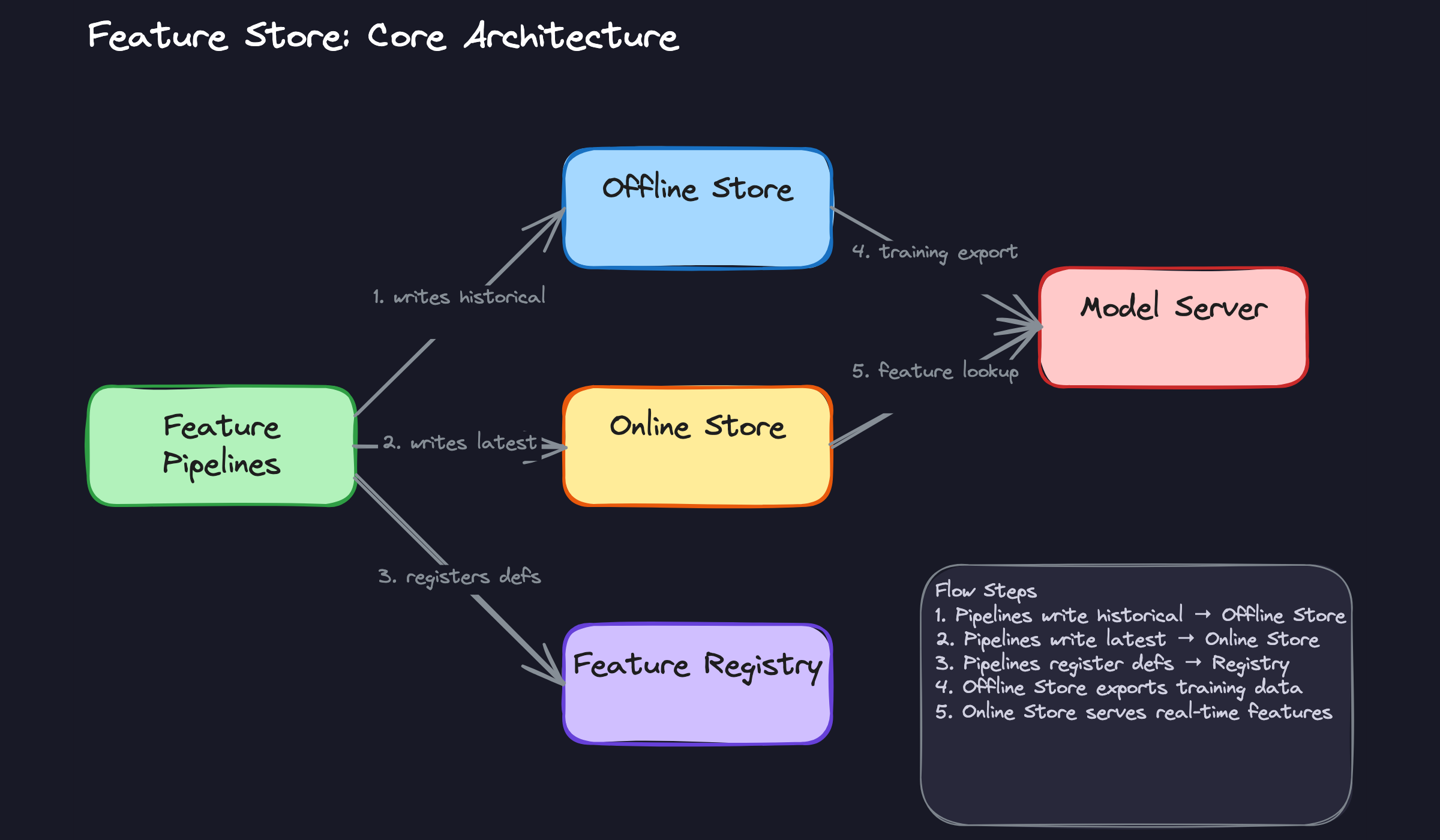

Raw data comes in from two directions: batch jobs pulling from your data warehouse, and event streams firing in real time from Kafka or similar. Feature pipelines pick up that raw data and transform it into named, versioned features. Think of a feature pipeline like a recipe: "take all transactions for this user in the last 10 minutes and sum the dollar amounts." The output gets a name, a version, and a schema. Then it gets written to two places simultaneously.

That dual-write is the heart of the architecture. The offline store (S3, BigQuery, Hive) gets the full historical record, going back months or years. The online store (Redis, DynamoDB, Cassandra) gets only the latest values, optimized for retrieval in single-digit milliseconds. Same feature definition, two storage backends, two very different access patterns.

Here's what that flow looks like:
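As a minimal sketch of the dual-write, assume both stores are plain in-memory structures: a list standing in for the offline store and a dict standing in for the online store. The class name `FeatureStoreSketch`, the `write` method, and the feature name `txn_amount_sum_10m:v1` are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class FeatureStoreSketch:
    """Toy in-memory stand-in for the two storage backends (hypothetical)."""
    # Offline store: append-only history of (entity, feature, event_time, value).
    offline: list = field(default_factory=list)
    # Online store: latest value only, keyed by (entity, feature).
    online: dict = field(default_factory=dict)

    def write(self, entity_id, feature, value, event_time):
        # The same computed value goes to both stores.
        self.offline.append((entity_id, feature, event_time, value))
        key = (entity_id, feature)
        current = self.online.get(key)
        # Keep only the newest value online, so late-arriving data
        # cannot overwrite a fresher one.
        if current is None or event_time >= current[0]:
            self.online[key] = (event_time, value)


def txn_amount_sum(transactions):
    """The 'recipe': sum the dollar amounts of the transactions in the window."""
    return sum(t["amount"] for t in transactions)


store = FeatureStoreSketch()
t1 = datetime(2024, 3, 3, 12, 0, tzinfo=timezone.utc)
t2 = datetime(2024, 3, 3, 12, 5, tzinfo=timezone.utc)
feature = "txn_amount_sum_10m:v1"

store.write("user_1", feature, txn_amount_sum([{"amount": 20.0}]), t1)
store.write("user_1", feature,
            txn_amount_sum([{"amount": 20.0}, {"amount": 5.5}]), t2)
```

After the two writes, the offline list holds both timestamped rows while the online dict holds only the value from `t2`. A real system would replace the list with something like Parquet files on S3 and the dict with Redis or DynamoDB, but the shape of the write is the same.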

The Feature Registry

Sitting above all of this is the registry, the metadata layer that most candidates forget to mention. It stores the feature's definition, its owner, its data type, its freshness SLA, and its lineage back to the source data. When a new team wants to use a feature, they look it up in the registry instead of rebuilding the pipeline from scratch.

Without the registry, you have a cache. With it, you have a feature store.
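To make the metadata layer concrete, here is a small sketch of what a registry entry might hold. The `FeatureDefinition` and `FeatureRegistry` names, the field layout, and the `kafka://transactions` source string are assumptions for illustration, not the API of any particular feature store.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass(frozen=True)
class FeatureDefinition:
    name: str
    version: int
    owner: str
    dtype: str
    freshness_sla: timedelta
    source: str  # lineage back to the raw data (hypothetical URI)


class FeatureRegistry:
    """In-memory registry keyed by (name, version)."""

    def __init__(self):
        self._definitions = {}

    def register(self, definition):
        key = (definition.name, definition.version)
        if key in self._definitions:
            raise ValueError(
                f"{definition.name} v{definition.version} is already registered"
            )
        self._definitions[key] = definition

    def lookup(self, name, version=None):
        """Return the requested version, or the latest one if none is given."""
        versions = [v for (n, v) in self._definitions if n == name]
        if not versions:
            raise KeyError(name)
        chosen = max(versions) if version is None else version
        return self._definitions[(name, chosen)]


registry = FeatureRegistry()
registry.register(FeatureDefinition(
    name="user_txn_count_10m",
    version=1,
    owner="fraud-team",
    dtype="int64",
    freshness_sla=timedelta(minutes=1),
    source="kafka://transactions",
))

found = registry.lookup("user_txn_count_10m")
```

A second team calling `lookup` gets the owner, type, SLA, and lineage without reading any pipeline code, which is the discovery step described above.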

Point-in-Time Correct Joins

This is the concept that separates candidates who've thought carefully about ML infrastructure from those who haven't. When you generate a training dataset, you're joining features to labeled events. The trap is using the feature value that exists right now rather than the value that existed at the moment the label was created.

Say a user made a purchase on March 3rd, and you're training a fraud model. The label is "fraud" or "not fraud." The feature "number of transactions in the last 30 days" should reflect what that count was on March 3rd, not what it is today. If you join on today's value, you've leaked future information into training. Your model looks great offline and falls apart in production.

The offline store is built to answer exactly this kind of query. It stores timestamped snapshots of every feature value, so the training pipeline can reconstruct what the world looked like at any historical moment.
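A point-in-time lookup can be sketched with nothing more than a sorted history and a binary search. The example below assumes each feature's history is a list of `(timestamp, value)` tuples sorted by timestamp; the function name `point_in_time_lookup` and the sample data are made up for illustration.

```python
from bisect import bisect_right
from datetime import datetime


def point_in_time_lookup(history, as_of):
    """Return the latest value with timestamp <= as_of, or None if none exists.

    `history` must be a list of (timestamp, value) tuples sorted by timestamp.
    """
    timestamps = [ts for ts, _ in history]
    idx = bisect_right(timestamps, as_of)
    return history[idx - 1][1] if idx > 0 else None


# Timestamped snapshots of "number of transactions in the last 30 days".
txn_count_30d = {
    "user_1": [
        (datetime(2024, 3, 1), 4),
        (datetime(2024, 3, 3), 5),
        (datetime(2024, 3, 20), 19),  # a later value that must not leak
    ]
}

# Labeled events: (entity, label_time, label).
labels = [("user_1", datetime(2024, 3, 3, 15, 0), "not_fraud")]

training_rows = [
    (entity, label_time,
     point_in_time_lookup(txn_count_30d[entity], label_time), label)
    for entity, label_time, label in labels
]
```

The March 3rd label is joined to the value 5, which is what the feature held at that moment, rather than the 19 recorded on March 20th. Joining on the most recent row instead would be exactly the leak described above.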

Backfilling

When you define a new feature, or modify an existing one, the online store is easy: you start writing new values and the cache catches up within minutes. The offline store is harder. You need historical values going back potentially years, computed with point-in-time correctness, before that feature is useful for training.

That's what backfilling is. You run the feature pipeline retroactively over historical raw data, generating timestamped feature values as if the pipeline had been running all along. It's expensive and slow, but it's the only way to train on a new feature without waiting months for data to accumulate. Feast and Tecton both have backfill primitives built in. If you're rolling your own, this is one of the first places teams underestimate the work.
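Here is a rough sketch of a backfill, assuming the raw history is just a list of event timestamps for one user and the feature is a 10-minute transaction count. The `backfill` function and its fixed-cadence replay are illustrative assumptions; real backfills typically run as distributed jobs over partitioned data.

```python
from datetime import datetime, timedelta


def txn_count_in_window(events, as_of, window):
    """Count events with as_of - window < timestamp <= as_of."""
    return sum(1 for ts in events if as_of - window < ts <= as_of)


def backfill(events, start, end, step, window):
    """Replay the feature over [start, end] at a fixed cadence.

    Each output row only looks at events up to its own as_of time,
    so the generated history is point-in-time correct.
    """
    rows = []
    as_of = start
    while as_of <= end:
        rows.append((as_of, txn_count_in_window(events, as_of, window)))
        as_of += step
    return rows


raw_events = [
    datetime(2024, 3, 1, 9, 0),
    datetime(2024, 3, 1, 9, 4),
    datetime(2024, 3, 1, 9, 7),
    datetime(2024, 3, 1, 9, 12),
]

history = backfill(
    raw_events,
    start=datetime(2024, 3, 1, 9, 0),
    end=datetime(2024, 3, 1, 9, 20),
    step=timedelta(minutes=10),
    window=timedelta(minutes=10),
)
```

The replay produces counts of 1, 2, and 1 at 9:00, 9:10, and 9:20. The cost is visible even in this toy: every output row rescans the events, which is why backfills over years of data get expensive.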

Serving at Inference Time

When a request hits your model server, it needs a feature vector before it can score anything. The model server calls the feature store's online API with an entity key, typically a user_id, item_id, or some combination. The online store returns the precomputed feature vector in under 10ms. The model scores it. The response goes back to the user.

The whole lookup-and-score path needs to be fast enough that the user never notices. That's why the online store is a key-value store and not a SQL database. You're not running aggregations at request time. You're doing a point lookup against values that were already computed upstream.
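The serving-side lookup might look like the sketch below, with a dict standing in for the key-value store. `ONLINE_STORE`, `FEATURE_ORDER`, `DEFAULTS`, and `get_online_features` are hypothetical names, and the default-filling rule is an assumption about how you might handle entities with missing values.

```python
# Precomputed values keyed by (entity_type, entity_id), as a key-value store
# would hold them.
ONLINE_STORE = {
    ("user", "user_1"): {"txn_count_10m": 3, "avg_purchase_90d": 42.5},
    ("user", "user_2"): {"txn_count_10m": 0},
}

# The model expects features in a fixed order.
FEATURE_ORDER = ["txn_count_10m", "avg_purchase_90d"]

# Fallbacks for entities or features that have no stored value yet.
DEFAULTS = {"txn_count_10m": 0, "avg_purchase_90d": 0.0}


def get_online_features(entity_type, entity_id):
    """Point lookup by entity key; no aggregation happens at request time."""
    row = ONLINE_STORE.get((entity_type, entity_id), {})
    return [row.get(name, DEFAULTS[name]) for name in FEATURE_ORDER]


vector = get_online_features("user", "user_1")
cold_start = get_online_features("user", "never_seen")
```

Whatever default you pick for missing values should also be applied when building training data, otherwise the fallback itself becomes a source of skew.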

The Consistency Guarantee

This is the property your interviewer actually cares about. Because both the training pipeline and the serving path read from the same feature definitions and the same transformation logic, the features your model trained on match the features it sees in production. That's the consistency guarantee, and it's the core reason feature stores exist.

Training-serving skew happens when someone computes a feature one way in the training pipeline and a slightly different way in the serving code. Even small differences, a different time window, a different null-handling rule, compound into real degradation. A feature store eliminates that entire class of bug by making the definition the single source of truth.
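A tiny example shows how a null-handling difference turns into skew. Both functions below claim to compute "average transaction amount"; the function names and sample data are invented for illustration.

```python
def avg_amount_skip_nulls(amounts):
    """Training-side version: ignores missing amounts."""
    values = [a for a in amounts if a is not None]
    return sum(values) / len(values) if values else 0.0


def avg_amount_nulls_as_zero(amounts):
    """Serving-side version: treats missing amounts as zero."""
    values = [0.0 if a is None else a for a in amounts]
    return sum(values) / len(values) if values else 0.0


amounts = [10.0, None, 30.0]

training_value = avg_amount_skip_nulls(amounts)    # 20.0
serving_value = avg_amount_nulls_as_zero(amounts)  # roughly 13.33
```

Same raw data, same feature name, two different numbers. With a single definition materialized to both stores, only one of these functions would exist.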

Feature Monitoring

Consistency at definition time doesn't protect you from what happens to the data itself. Features drift. An upstream schema change silently starts producing nulls. A Kafka consumer falls behind and your "last 10 minutes" feature is actually reflecting data from two hours ago. The freshness SLA stored in the registry only helps if something is actively checking against it.

Good feature stores instrument this directly. You get alerts when a feature's null rate spikes, when its distribution shifts beyond a threshold, or when the pipeline hasn't written a fresh value within the expected window. Tools like Feast expose feature statistics you can track over time; teams running on Tecton or SageMaker Feature Store get some of this out of the box. If your interviewer asks how you'd catch a degraded feature before it silently tanks model performance, this is your answer: monitoring on freshness, completeness, and distribution, tied back to the registry's SLA definitions.
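The three checks can be sketched as small standalone functions. Everything here is an assumption for illustration: the function names, and in particular the drift measure, which uses a simple mean shift in baseline standard deviations rather than whatever statistic a given tool actually computes.

```python
from datetime import datetime, timedelta
from statistics import mean, pstdev


def null_rate(values):
    """Completeness: fraction of values that are missing."""
    return sum(v is None for v in values) / len(values) if values else 0.0


def is_stale(last_write, now, freshness_sla):
    """Freshness: has the pipeline missed its SLA window?"""
    return now - last_write > freshness_sla


def mean_shift_in_stdevs(baseline, current):
    """Distribution: how many baseline standard deviations the mean moved."""
    spread = pstdev(baseline)
    if spread == 0:
        return 0.0 if mean(current) == mean(baseline) else float("inf")
    return abs(mean(current) - mean(baseline)) / spread


completeness = null_rate([1, None, 3, None])
stale = is_stale(
    last_write=datetime(2024, 3, 3, 10, 0),
    now=datetime(2024, 3, 3, 12, 0),
    freshness_sla=timedelta(minutes=10),
)
drift = mean_shift_in_stdevs(baseline=[1, 2, 3, 4, 5], current=[6, 7, 8])
```

In this example half the values are null, the last write is two hours old against a 10-minute SLA, and the mean has moved by more than two baseline standard deviations. Each would trip an alert under most reasonable thresholds; the `freshness_sla` argument is the value you would read from the registry.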

Patterns You Need to Know

In an interview, you'll usually need to pick a specific approach. Here are the ones worth knowing.

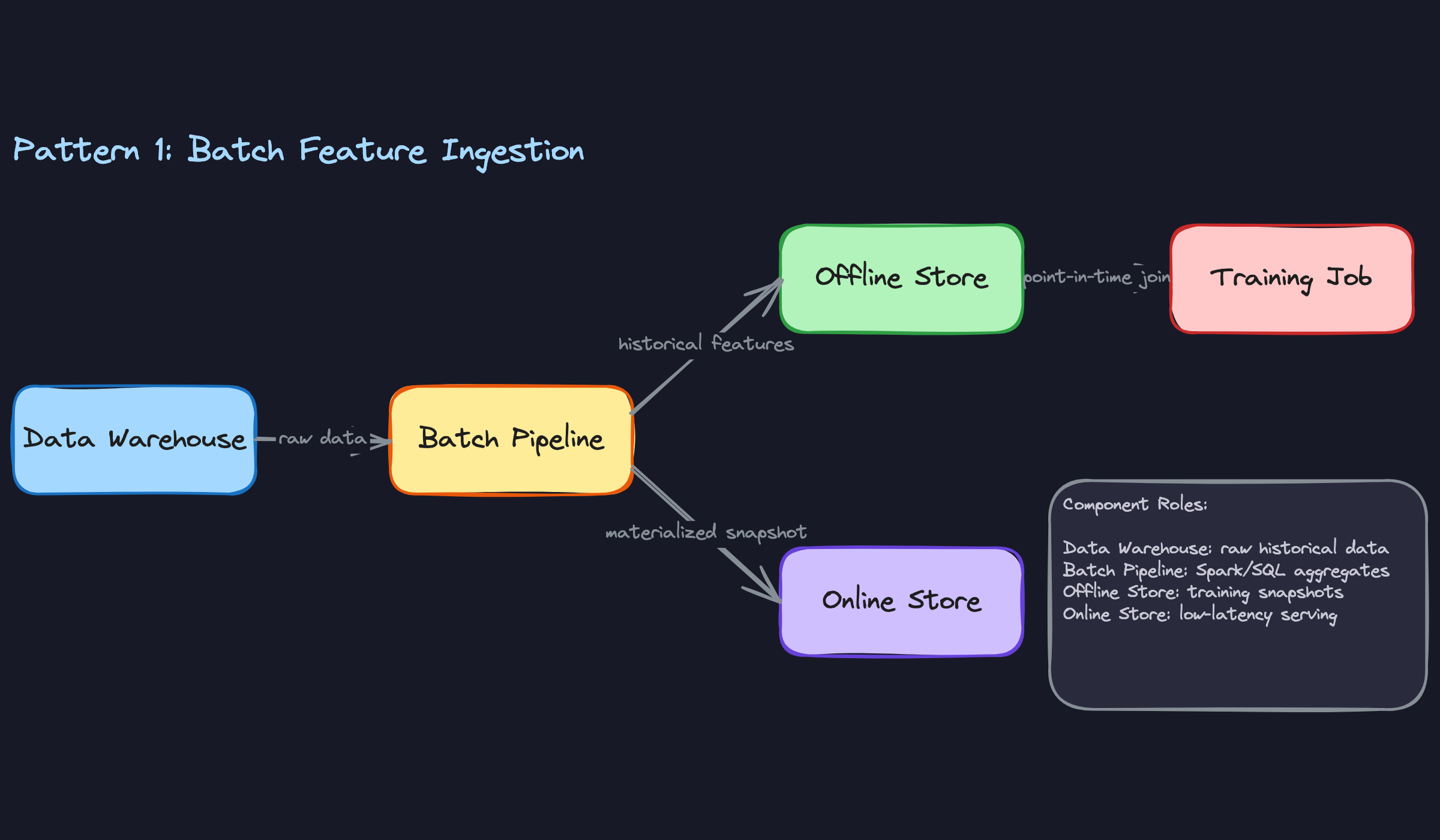

Batch Feature Ingestion

Most features don't need to be fresh to the second. A user's average purchase value over the last 90 days, their account age, their country, the number of orders they've placed: these change slowly, and computing them on a schedule is perfectly fine. Batch ingestion runs a Spark or SQL job on a cadence (hourly, daily, weekly), reads from your data warehouse, computes the feature values, and materializes them into both the offline store for training and the online store for serving.

The trade-off is simple: the less frequently you run the job, the staler your features get, but the cheaper the compute. For a churn prediction model that scores users once a day, staleness of a few hours is irrelevant. For a fraud model that needs to know how many transactions a user made in the last hour, batch is the wrong tool entirely.
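As a sketch of what one scheduled run computes, the function below derives a 90-day average purchase value per user from warehouse rows. The row layout `(user_id, timestamp, amount)` and the function name are assumptions; in practice this would be a Spark or SQL job rather than a Python loop.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def avg_purchase_90d(purchases, run_time):
    """Average purchase amount per user over the 90 days before run_time."""
    cutoff = run_time - timedelta(days=90)
    totals = defaultdict(lambda: [0.0, 0])  # user_id -> [sum, count]
    for user_id, ts, amount in purchases:
        if cutoff < ts <= run_time:
            totals[user_id][0] += amount
            totals[user_id][1] += 1
    return {user: total / count for user, (total, count) in totals.items()}


purchases = [
    ("user_1", datetime(2023, 10, 1), 500.0),  # outside the 90-day window
    ("user_1", datetime(2024, 1, 10), 20.0),
    ("user_1", datetime(2024, 2, 20), 40.0),
    ("user_2", datetime(2024, 3, 1), 15.0),
]

# One run of the daily job; the result would then be materialized to both
# the offline store (with run_time as its timestamp) and the online store.
features = avg_purchase_90d(purchases, run_time=datetime(2024, 3, 3))
```

Until the next scheduled run, the online store keeps serving these values, which is the staleness you are trading for cheaper compute.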

When to reach for this: any time your interviewer describes slowly-changing entity attributes, historical aggregates, or a system where the cost of real-time computation outweighs the benefit of freshness.

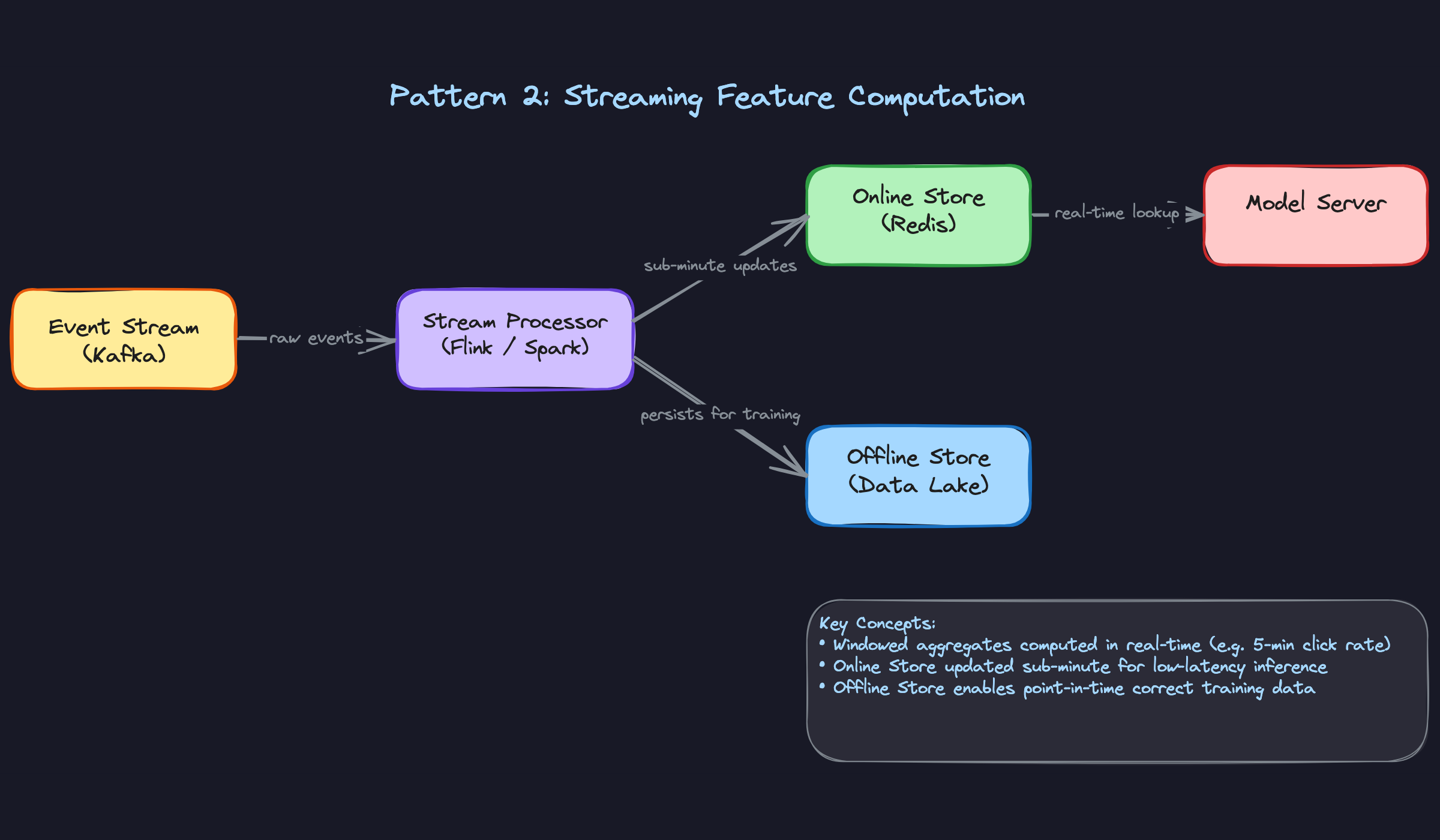

Streaming Feature Computation

When your model needs to know what a user did in the last five minutes, batch won't cut it. Streaming pipelines, typically built on Kafka plus Flink or Spark Streaming, consume raw events as they arrive and compute windowed aggregates continuously. The output gets written directly to the online store, so feature values can be fresh within seconds or minutes rather than hours.

The classic example is fraud detection: "number of transactions from this card in the last 10 minutes" is a powerful signal, but only if it's actually current. Tools like Tecton and Feast both support streaming feature pipelines natively. One important detail to mention in your interview: the stream output should also be persisted to the offline store so you can reproduce training data later. Without that, you've created a training-serving skew problem by accident.
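The windowed aggregate itself can be sketched with a per-key queue. This is a simplified stand-in that assumes events arrive in timestamp order; stream processors such as Flink handle out-of-order events with event-time windows and watermarks, which this sketch does not attempt. The class name `SlidingWindowCounter` is hypothetical.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


class SlidingWindowCounter:
    """Per-key count of events in the trailing window (in-order events only)."""

    def __init__(self, window):
        self.window = window
        self.events = defaultdict(deque)

    def add(self, key, event_time):
        """Record one event and return the current count inside the window."""
        queue = self.events[key]
        queue.append(event_time)
        # Evict events that have fallen out of the trailing window.
        while queue and queue[0] <= event_time - self.window:
            queue.popleft()
        return len(queue)


counter = SlidingWindowCounter(window=timedelta(minutes=10))
counts = [
    counter.add("card_1", datetime(2024, 3, 3, 12, 0)),
    counter.add("card_1", datetime(2024, 3, 3, 12, 3)),
    counter.add("card_1", datetime(2024, 3, 3, 12, 11)),
]
```

The counts come out as 1, 2, 2: by 12:11 the 12:00 event has aged out of the 10-minute window. Each returned count is what you would write to the online store, and also append with its timestamp to the offline store so training can reproduce it later.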

When to reach for this: fraud detection, real-time recommendations, ad ranking, or any system where the interviewer emphasizes that recent user behavior is a key signal.

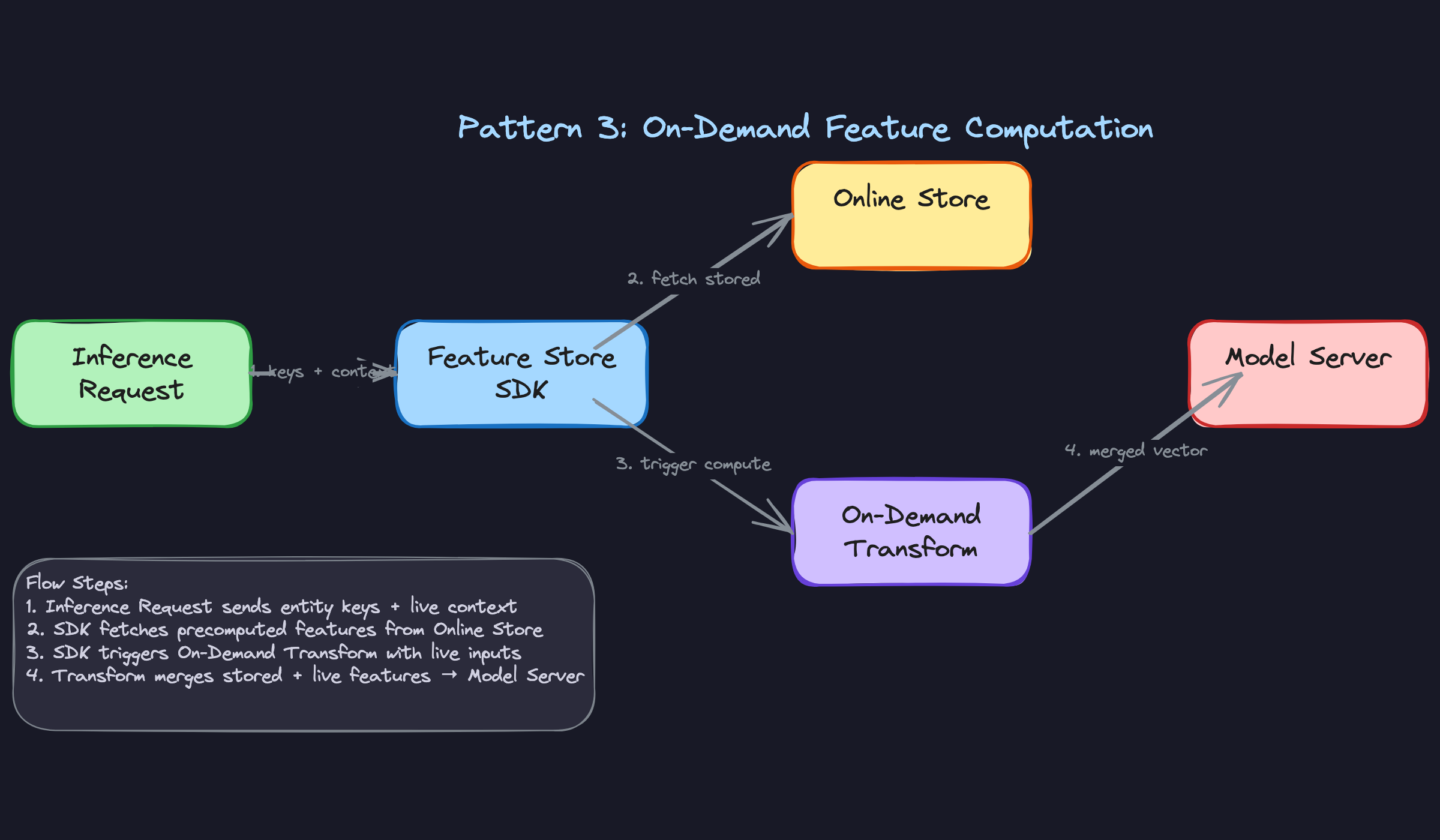

On-Demand Feature Computation

Some features can't be precomputed at all, because they depend on information that only exists at request time. The distance between a user's current GPS location and a restaurant. The similarity between a search query and a product title. The time elapsed since a user's last session. You can't store these in advance because you don't know the inputs until the request arrives.

On-demand features are computed at inference time by combining live request context with stored features retrieved from the online store. The feature store SDK orchestrates this: it fetches precomputed features for the entity keys involved, then runs a lightweight transformation using the live inputs to produce the final feature vector. This keeps the computation logic inside the feature store's definition layer, which means it's versioned, testable, and consistent between training (where you simulate the request context) and serving.
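The GPS example can be sketched as a stored lookup plus a lightweight transformation on the request context. The haversine formula is standard; the `STORED_FEATURES` dict, the `build_feature_vector` function, and the restaurant data are hypothetical stand-ins for the online store and the SDK's orchestration.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0

# Precomputed features that would come from the online store.
STORED_FEATURES = {
    "restaurant_7": {"lat": 40.7580, "lon": -73.9855, "avg_rating": 4.4},
}


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometers."""
    phi1, phi2 = radians(lat1), radians(lat2)
    dphi = radians(lat2 - lat1)
    dlam = radians(lon2 - lon1)
    a = sin(dphi / 2) ** 2 + cos(phi1) * cos(phi2) * sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


def build_feature_vector(restaurant_id, request):
    """Combine stored features with a feature computed from the live request."""
    stored = STORED_FEATURES[restaurant_id]
    distance = haversine_km(
        request["lat"], request["lon"], stored["lat"], stored["lon"]
    )
    return {"avg_rating": stored["avg_rating"], "distance_km": distance}


vector = build_feature_vector("restaurant_7", {"lat": 40.7484, "lon": -73.9857})
```

At training time you would call the same `build_feature_vector` with the request context logged alongside each label, so the distance is computed by identical code in both paths.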

When to reach for this: location-aware systems, search and retrieval, session-level personalization, or any time the interviewer's scenario includes a feature that's inherently request-specific.

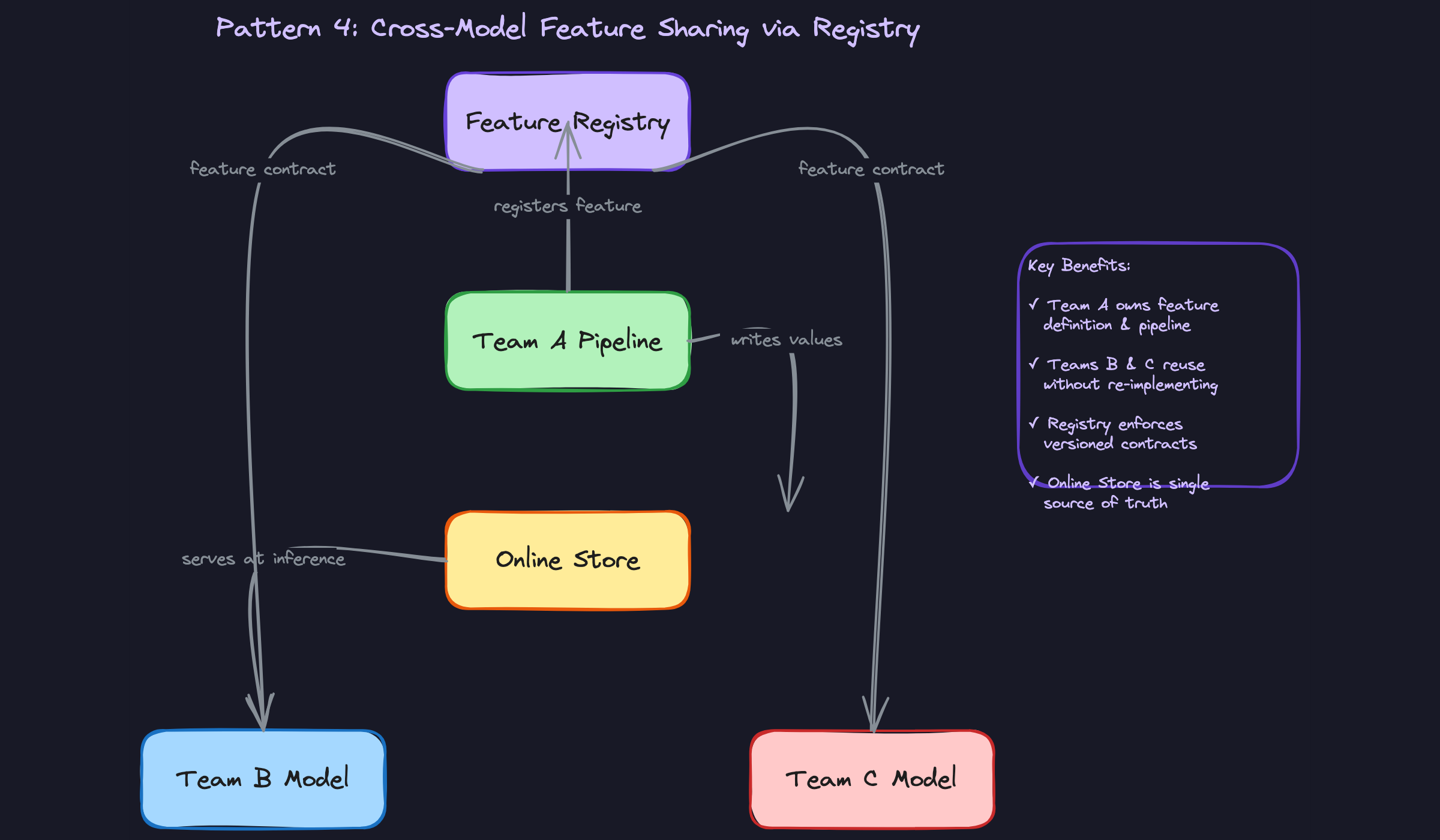

Cross-Model Feature Sharing via Registry

At any company with more than a handful of ML models, the same underlying signals get recomputed over and over by different teams. The search team builds a "user engagement score." Three months later, the recommendations team builds something nearly identical. Six months after that, the growth team does it again. Each implementation has slightly different logic, runs on slightly different data, and produces slightly different values. This is how you end up with five definitions of "active user" across the org.

The registry solves this. When team A publishes their user_engagement_score feature with a clear definition, schema, and ownership metadata, team B can discover it, read the contract, and consume it directly from the online store without writing a single line of pipeline code. They get the same values the original team computed, which also means their model sees the same distribution at training time and serving time. The operational savings compound fast: fewer pipelines to maintain, fewer data quality bugs, and a single place to update the logic when the definition needs to change.

When to reach for this: any interview scenario involving multiple models, multiple teams, or a platform-level ML infrastructure discussion. If the interviewer asks how you'd scale ML across an organization, this is part of the answer.

Comparing the Four Patterns

| Pattern | Feature Freshness | Compute Cost | Best For |
|---|---|---|---|
| Batch Ingestion | Hours to days | Low | Demographics, historical aggregates |
| Streaming Computation | Seconds to minutes | Medium-High | Fraud signals, real-time behavior |
| On-Demand Computation | Request-time | Low (per request) | Location, query similarity, session context |
| Cross-Model Sharing | Depends on source | Near zero (reuse) | Multi-team platforms, org-scale ML |

For most interview problems, you'll default to a combination of batch and streaming, with batch handling stable features and streaming handling behavioral signals. Reach for on-demand when the feature genuinely can't exist until the request arrives. And if the interviewer asks anything about platform design or team coordination, bring in the registry and cross-model sharing: that's the answer that shows you've thought beyond a single model to the full ML lifecycle.

What Trips People Up

Here's where candidates lose points, and it's almost always one of these.

The Mistake: Recomputing Features Differently at Training and Serving Time

You'd be surprised how often this happens. A candidate walks through a clean feature store design, then casually mentions that the training pipeline computes a 7-day rolling average in Spark while the model server recomputes it on the fly using a database query. Those two code paths will diverge. They always do.

The whole point of a feature store is one definition, two stores. The transformation logic lives once, in the feature pipeline. The offline store and online store are just different materialization targets for the same computation. The moment you have two separate implementations, you've reintroduced the exact problem you were trying to solve.

When the interviewer asks how you'd keep training and serving consistent, say something like: "The feature transformation is defined once in the pipeline. Training reads historical snapshots from the offline store; serving reads the latest values from the online store. The same code produces both."

The Mistake: Treating the Offline Store Like a Data Warehouse

"We can just point training at raw BigQuery tables" is a sentence that will cost you points. BigQuery is great for analytics, and it can serve as the storage backend for an offline store, but a warehouse on its own does nothing to enforce point-in-time correct joins.

Here's the problem: when you generate training data, you need to join features to labels using only the feature values that existed at the moment the label was created. If a user churned on March 15th, you need their feature values from March 14th, not March 20th. A standard data warehouse query doesn't enforce this. You'll accidentally leak future information into your training set, your model will look great offline and fall apart in production, and you'll spend weeks figuring out why.

The offline store in a feature store is built specifically for this pattern. Feast, for example, stores timestamped feature rows and handles the point-in-time join for you when you call get_historical_features. That's not something you get for free from a general-purpose warehouse.

The Mistake: Proposing a Database That Can't Hit the Latency SLA

Fraud detection models often need a feature vector within a single-digit-millisecond budget; assume under 5ms here. Ad ranking systems are often tighter than that. When a candidate says "we'll store features in Postgres and look them up at inference time," the interviewer mentally flags it and moves on.

Postgres under load, with network overhead, is not a sub-5ms system for arbitrary key lookups. Redis is. DynamoDB with DAX can be. Purpose-built online stores like Feast's Redis backend or Tecton's online store are designed exactly for this access pattern: single entity key in, feature vector out, single-digit milliseconds, high throughput.

The fix isn't complicated. Just be explicit about the latency requirement and match your storage choice to it. Say: "For online serving, I'd use Redis. The access pattern is pure key-value lookup by entity ID, and we need p99 latency under 5ms. Redis handles that comfortably at this scale."

The Mistake: Forgetting the Feature Registry

Candidates describe the data flow beautifully: events come in, pipelines compute features, offline store gets the history, online store gets the latest. Then the interviewer asks "how does another team know this feature exists?" Silence.

Without a registry, you have a cache with a fancy name. The registry is what makes it a feature store. It's the metadata layer that holds feature definitions, data types, freshness SLAs, ownership, and lineage. It's what lets a ranking team discover that the personalization team already computes user_engagement_score and consume it directly instead of rebuilding the pipeline from scratch.

At org scale, the registry is the whole value proposition for platform teams. Skipping it in your design signals that you're thinking about the feature store as a single-team caching solution rather than shared infrastructure.

How to Talk About This in Your Interview

When to Bring It Up

You don't need to wait for someone to say "feature store." The concept belongs in the conversation whenever you hear certain signals.

Bring it up when the interviewer mentions training-serving skew, or asks how you'd ensure consistency between your training pipeline and production. That's the clearest opening.

Other triggers: "multiple teams are building models on the same user data," "we need sub-10ms feature retrieval," "our fraud model needs real-time signals," or "how would you reproduce a model's predictions from six months ago?" Any of these is your cue. The feature store solves all of them, and naming it early signals that you think in systems, not just models.

Sample Dialogue

Follow-Up Questions to Expect

"How do you handle feature freshness for different features?" Not every feature needs the same pipeline. Slowly-changing features like account age belong in a daily batch job; real-time signals like recent transaction counts need a streaming pipeline. The answer is to set a freshness SLA per feature and choose the pipeline accordingly.

"What's a point-in-time join and why does it matter?" When you generate training data, you need to join features to labels using only the values that existed at the time of the label event, not values that were computed later. Without it, you leak future information into your training set and your offline metrics will be optimistic.

"How would you monitor a feature store in production?" Track feature freshness (is the online store being updated on schedule?), distribution drift (are feature values shifting compared to training time?), and null rates. Stale or drifted features are often the first sign a model is degrading.

"What's the difference between the feature registry and the online store?" The online store holds the actual feature values for serving. The registry holds the metadata: what the feature means, who owns it, what its schema is, and what its freshness SLA is. You can have a cache without a registry. You can't have a real feature store without one.

What Separates Good from Great

- A mid-level answer describes the offline/online split correctly and names a tool like Feast. A senior answer explains why the split exists (latency vs. storage cost vs. point-in-time correctness) and talks about the operational trade-offs of each pattern.

- Mid-level candidates treat feature freshness as a binary (batch vs. streaming). Senior candidates reason about it as a spectrum and tie freshness decisions to business impact: "for fraud, a 5-minute-stale transaction count might be acceptable; for ad ranking, it might not be."

- The best answers acknowledge when not to use a feature store. Interviewers respect candidates who can say "this adds operational complexity, and at early scale the cost isn't worth it." It shows you're solving the problem, not showing off vocabulary.