Design a URL Shortener

Understanding the Problem

What is a URL Shortener?

You've almost certainly used one. Paste a long URL into bit.ly, get back something like https://bit.ly/3xK9mQ, and anyone who clicks that short link lands on the original page. Simple on the surface, but the interview is about what happens underneath: how you generate millions of unique short codes without collisions, and how you serve redirects so fast that users never notice the extra hop.

One thing to clarify early with your interviewer: is this a public consumer product (like bit.ly or TinyURL) or an internal enterprise tool? The answer changes your threat model significantly. A public service needs abuse prevention, spam detection, and rate limiting. An internal tool cares more about multi-tenancy and access controls. For this walkthrough, we'll assume a public consumer product, since that's the more common interview framing and the harder design problem.

Functional Requirements

Core Requirements:

- Shorten a URL: Given a long URL, generate a unique short URL and return it to the user

- Redirect: Given a short URL, redirect the user to the original long URL with minimal latency

- Custom aliases: Allow users to optionally specify a vanity short code (e.g., /my-brand) instead of a random one

- Link expiration: Support configurable TTL so links can auto-expire after a set duration

Below the line (out of scope):

- User accounts, authentication, and link management dashboards

- Detailed click analytics (click counts, geographic breakdowns, referrer tracking). We'll touch on the analytics pipeline in the high-level design, but we won't design the analytics product itself.

- Link editing or updating the destination URL after creation

Non-Functional Requirements

- Read-heavy ratio (100:1): For every URL created, expect roughly 100 redirect lookups. This single fact should shape almost every design decision you make.

- Low-latency redirects (p99 < 50ms): The redirect is the hot path. Users clicking a short link shouldn't perceive any delay. Sub-50ms at the 99th percentile is the target.

- Global uniqueness: Every short code must be unique across the entire system. Two different long URLs must never map to the same short code. Ever.

- Scale: 100M new URLs created per month, 10B redirects served per month. The system should remain operational and performant for at least 5 years of data growth.

- High availability: Redirect availability should target 99.99%. A short link that doesn't resolve is a broken link on someone's marketing campaign, social media post, or email. Downtime has a blast radius far beyond your service.

Back-of-Envelope Estimation

Start with the numbers from our requirements and work through the math out loud. Interviewers want to see your reasoning process, not just final answers.

| Metric | Calculation | Result |
|---|---|---|
| Write QPS | 100M URLs / (30 days × 86,400 sec) | ~40 writes/sec |
| Read QPS (avg) | 10B redirects / (30 days × 86,400 sec) | ~4,000 reads/sec |
| Read QPS (peak) | Assume 5x spike factor | ~20,000 reads/sec |
| Storage per record | short_code + original_url + metadata | ~500 bytes |
| Monthly storage | 100M × 500 bytes | ~50 GB/month |
| 5-year storage | 50 GB × 60 months | ~3 TB |
| Daily bandwidth (reads) | 10B/30 × 500 bytes | ~170 GB/day |

A few things jump out from these numbers. Writes are trivially low at 40 QPS. You won't need to do anything clever to handle write throughput. Reads at 4,000 QPS average (with peaks around 20K) are moderate but absolutely require a caching layer. And 3 TB over five years is small enough that storage is not your bottleneck.
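If you want to sanity-check the table, the arithmetic fits in a few lines of Python. This is a minimal sketch using the figures from the requirements; the variable names are illustrative, and the 500-byte record size and 5x peak factor are the same assumptions the table makes.

```python
SECONDS_PER_MONTH = 30 * 86_400            # 30-day month

new_urls_per_month = 100_000_000
redirects_per_month = 10_000_000_000
bytes_per_record = 500                     # assumed average record size
peak_factor = 5                            # assumed spike multiplier

write_qps = new_urls_per_month / SECONDS_PER_MONTH
read_qps = redirects_per_month / SECONDS_PER_MONTH
peak_read_qps = read_qps * peak_factor

monthly_storage_gb = new_urls_per_month * bytes_per_record / 1e9
five_year_storage_tb = monthly_storage_gb * 60 / 1000
daily_read_gb = redirects_per_month / 30 * bytes_per_record / 1e9

print(f"write QPS:        {write_qps:,.1f}")
print(f"read QPS (avg):   {read_qps:,.0f}")
print(f"read QPS (peak):  {peak_read_qps:,.0f}")
print(f"monthly storage:  {monthly_storage_gb:,.0f} GB")
print(f"5-year storage:   {five_year_storage_tb:,.1f} TB")
print(f"daily read bytes: {daily_read_gb:,.0f} GB")
```

The unrounded values land slightly below the table (about 39 writes/sec and 3,860 reads/sec), which is why the table rounds up to 40 and 4,000.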

This is a storage-light, read-heavy system. That profile tells you exactly where to invest your design energy: a fast cache layer for redirects, and a collision-free code generation strategy that doesn't slow down under load. Those are the two problems your interviewer is waiting to see you solve.

The Set Up

Before you start drawing boxes and arrows, take two minutes to nail down what you're actually storing and how clients interact with the system. Interviewers notice when you jump straight to architecture without grounding yourself in the data model and API contract. This is where you show discipline.

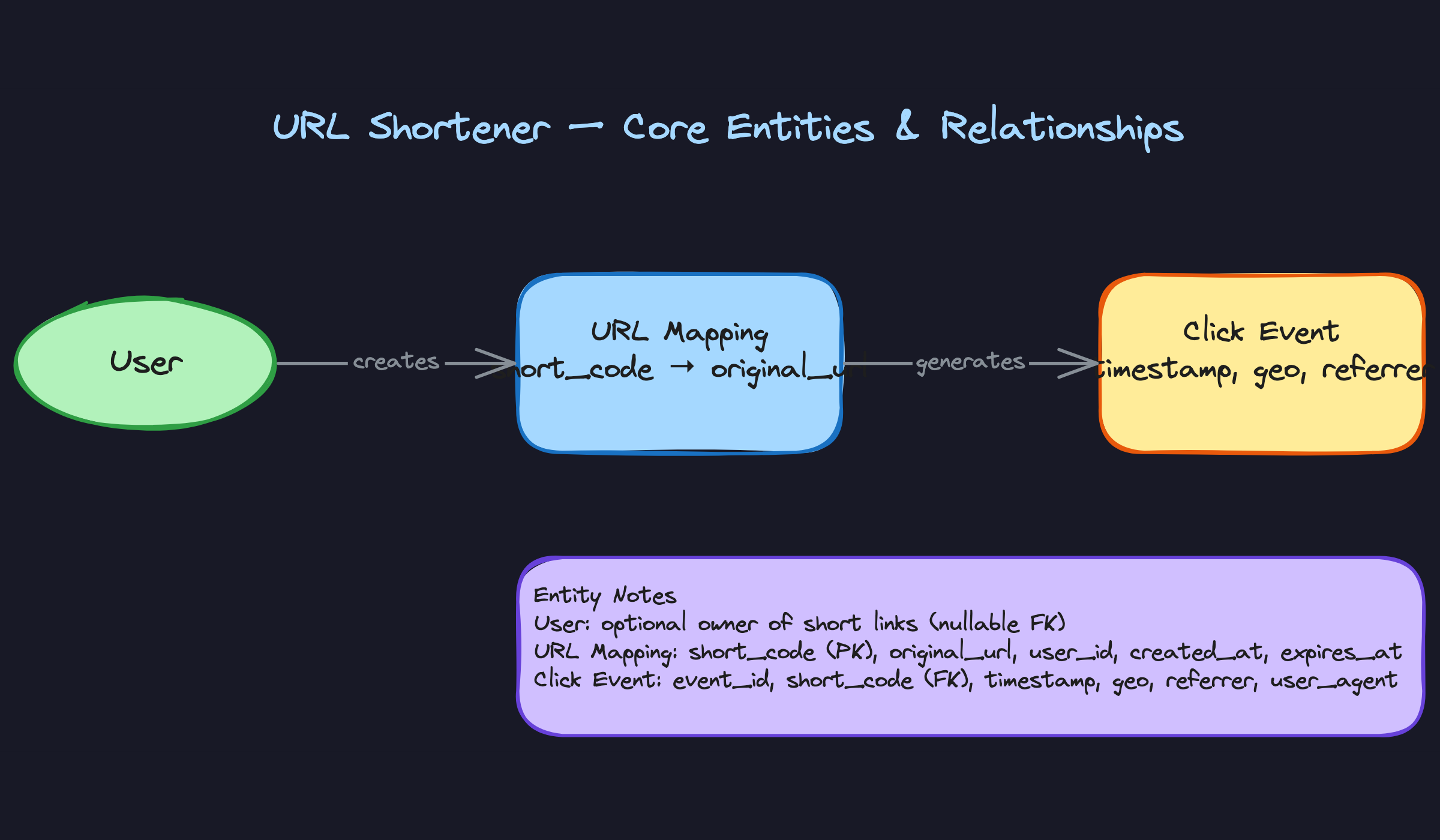

Core Entities

Three entities carry the entire system.

URL Mapping is the star of the show. It's the record that ties a short code to its original destination. Key attributes: short_code, original_url, user_id (nullable, for anonymous creation), created_at, and expires_at. Every read, every write, every redirect touches this entity.

User is optional but worth mentioning. If the service supports authenticated link creation (dashboards, link management, usage limits), you need a user entity. Keep it simple: user_id, email, api_key, created_at. If the interviewer says "assume anonymous usage only," acknowledge it and move on. Don't over-engineer what they didn't ask for.

Click Event captures what happens after the redirect. Each time someone hits a short URL, you want to record the short_code, timestamp, referrer, geo (derived from IP), and user_agent. This is your analytics fuel. It's a write-heavy, append-only entity that lives in a completely different storage tier than URL mappings.
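One way to pin these entities down on the whiteboard is as plain data classes. The sketch below is illustrative only: the field names follow the attributes listed above, while the Python types and the is_expired helper are assumptions rather than a prescribed schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class UrlMapping:
    short_code: str                        # primary key
    original_url: str
    created_at: datetime
    user_id: Optional[str] = None          # nullable: anonymous creation
    expires_at: Optional[datetime] = None  # None means the link never expires

    def is_expired(self, now: datetime) -> bool:
        """Hypothetical helper: True once the link's TTL has passed."""
        return self.expires_at is not None and self.expires_at <= now


@dataclass
class User:
    user_id: str
    email: str
    api_key: str
    created_at: datetime


@dataclass
class ClickEvent:
    short_code: str
    timestamp: datetime
    referrer: Optional[str]
    geo: Optional[str]                     # derived from the client IP
    user_agent: Optional[str]


now = datetime.now(timezone.utc)
mapping = UrlMapping("a1B2c3", "https://example.com/very/long/path", created_at=now,
                     expires_at=now + timedelta(days=1))
print(mapping.is_expired(now))                        # still live
print(mapping.is_expired(now + timedelta(days=2)))    # past its TTL
```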

API Design

Two endpoints. That's it. A URL shortener has one of the simplest API surfaces you'll encounter in a system design interview, which means the interviewer will scrutinize every choice you make on it.

```
// Create a shortened URL
POST /urls
{
  "original_url": "https://example.com/very/long/path?with=params",
  "custom_alias": "my-brand",  // optional
  "expires_in": 86400          // optional, TTL in seconds
}
-> 201 Created
{
  "short_url": "https://sho.rt/a1B2c3",
  "short_code": "a1B2c3",
  "expires_at": "2025-01-16T00:00:00Z"
}
```

POST is the right verb here because you're creating a new resource. Some candidates reach for PUT, but PUT implies idempotency with a known resource identifier, and the client doesn't know the short code yet. If the client provides a custom_alias, you could argue for PUT, but POST keeps things consistent and simple.

```
// Redirect to the original URL
GET /{short_code}
-> 302 Found
Location: https://example.com/very/long/path?with=params
```

No request body. No JSON response. Just a redirect header. The browser follows the Location header automatically, and the user never sees your service.

Now here's the question that separates prepared candidates from everyone else: why 302 and not 301?

A 301 (Moved Permanently) tells the browser to cache the redirect. Next time the user clicks that short link, the browser goes straight to the destination without ever hitting your server. Great for reducing load. Terrible for analytics, because you never see the second click.

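A 302 (Found, temporary redirect) means every single click passes through your servers. More load, but you capture every visit, and you keep the ability to expire a link later. Detailed analytics is out of scope, but the design still feeds a click pipeline and supports link expiration, so 302 is the right default.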

One more thing worth calling out: if custom aliases are supported, the POST endpoint needs to handle the case where the requested alias is already taken. Return a 409 Conflict with a clear error message. Don't silently generate a different code. The user asked for a specific alias for a reason.
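To make the status codes concrete, here is a minimal in-memory sketch of both endpoints. It is not a real web handler: create_url and redirect are hypothetical functions that return a (status, payload) pair, a dict stands in for the database, and the placeholder counter is not the code generation strategy discussed later.

```python
from datetime import datetime, timedelta, timezone
from itertools import count
from typing import Optional

BASE_URL = "https://sho.rt"      # domain taken from the example response above
_store: dict = {}                # in-memory stand-in for the database
_counter = count(1)              # placeholder for real short code generation


def create_url(original_url: str, custom_alias: Optional[str] = None,
               expires_in: Optional[int] = None) -> tuple:
    """POST /urls: returns (201, body) or (409, error)."""
    if custom_alias is not None:
        if custom_alias in _store:
            # Never silently substitute a different code for a taken alias.
            return 409, {"error": f"alias '{custom_alias}' is already taken"}
        code = custom_alias
    else:
        code = f"g{next(_counter)}"
        while code in _store:
            code = f"g{next(_counter)}"
    expires_at = None
    if expires_in is not None:
        expires_at = datetime.now(timezone.utc) + timedelta(seconds=expires_in)
    _store[code] = {"original_url": original_url, "expires_at": expires_at}
    return 201, {
        "short_url": f"{BASE_URL}/{code}",
        "short_code": code,
        "expires_at": expires_at.isoformat() if expires_at else None,
    }


def redirect(short_code: str) -> tuple:
    """GET /{short_code}: returns (302, headers) or (404, {})."""
    row = _store.get(short_code)
    if row is None:
        return 404, {}
    if row["expires_at"] is not None and row["expires_at"] <= datetime.now(timezone.utc):
        return 404, {}
    return 302, {"Location": row["original_url"]}


print(create_url("https://example.com/very/long/path?with=params", custom_alias="my-brand"))
print(create_url("https://example.com/other", custom_alias="my-brand"))
print(redirect("my-brand"))
```

The second create_url call asks for an alias that is already taken, so it gets the 409 rather than a silently generated code.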

High-Level Design

Your interviewer has heard the requirements and seen your API contracts. Now they want to see how the pieces fit together. The best way to structure this is to walk through each functional requirement as its own data flow, building up the architecture incrementally. Don't try to draw the entire system at once.

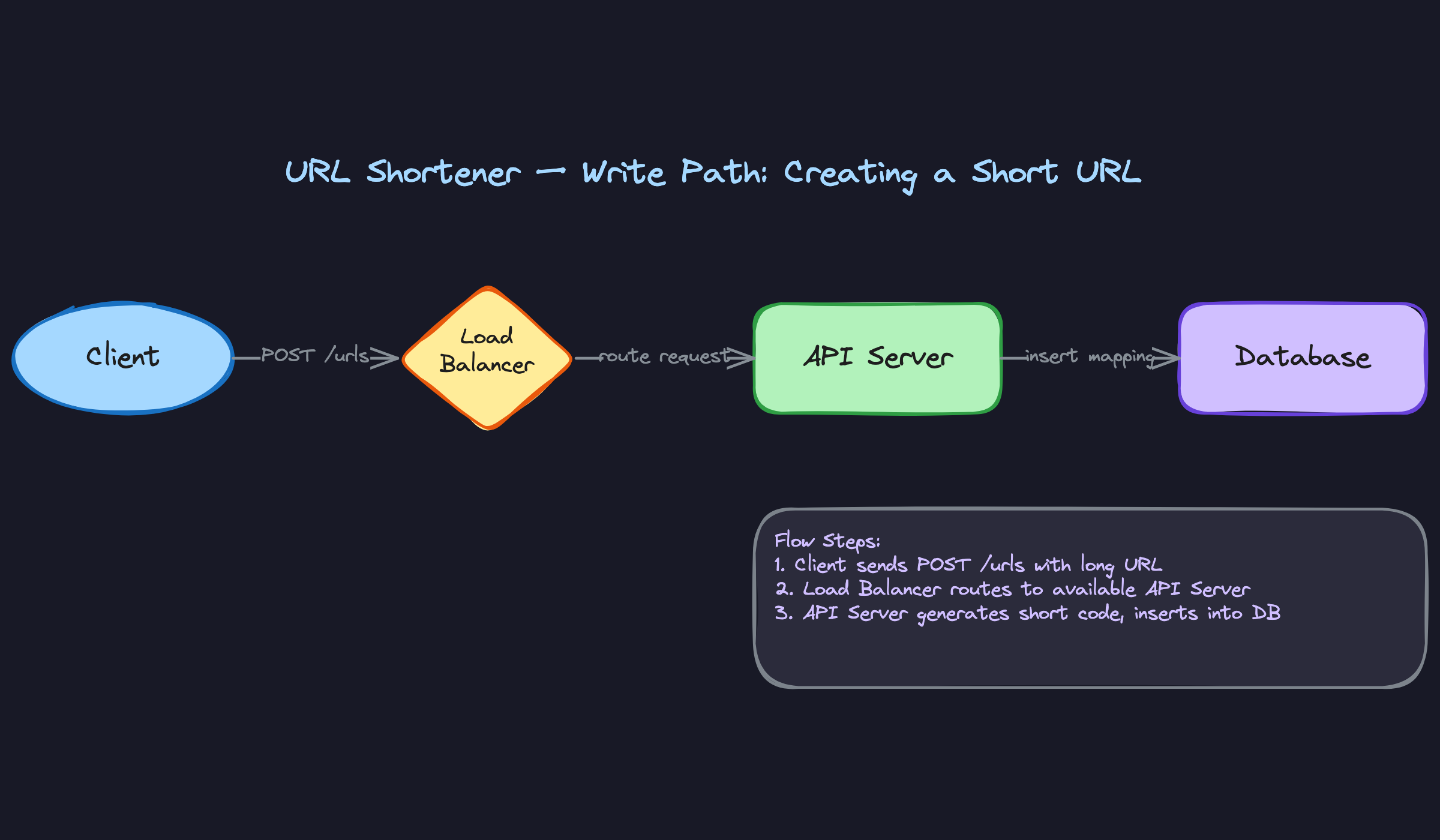

1) Shortening a Long URL (Write Path)

Components involved: Client, Load Balancer, API Server, Database.

The write path is straightforward, but the interesting design decision lives inside step 3 below. Here's the flow:

1. The client sends a POST /urls request with the original_url (and optionally a custom_alias).

2. The load balancer routes the request to one of several stateless API servers.

3. The API server generates a unique short code. More on this in a moment.

4. The server inserts the mapping (short_code → original_url) into the database.

5. The server returns the full short URL (e.g., https://short.ly/a3Xk9z) to the client.

Where does code generation happen? You have two broad choices: let the database generate it (auto-increment ID, then base62-encode) or generate it in the application layer before the database write. Generating in the application layer is better for scaling because it removes the database as a single point of coordination. We'll go deep on the specific generation strategies in the deep dives, but flag this tradeoff now so your interviewer knows you're thinking about it.

At ~40 writes per second, the write path is not the bottleneck. A single relational database (Postgres, MySQL) handles this volume easily. Don't over-engineer this side of the system.

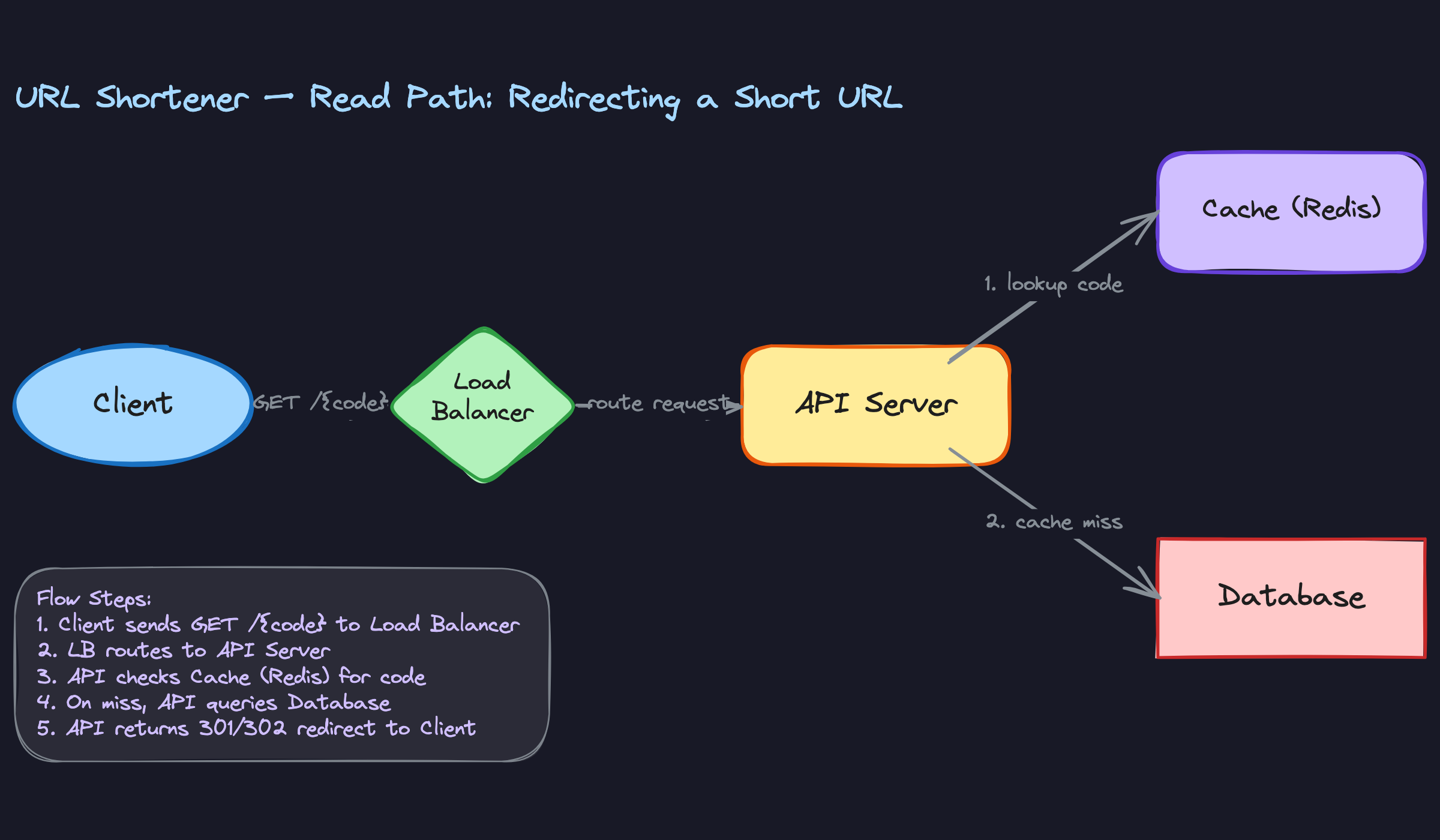

2) Redirecting a Short URL (Read Path)

Components involved: Client, Load Balancer, API Server, Cache (Redis), Database.

This is the hot path: 4,000 reads per second on average, roughly 20,000 at our assumed 5x peak, and potentially more when a single link goes viral. Every millisecond of latency here matters.

1. The client (usually a browser) sends GET /{short_code}.

2. The load balancer routes to an API server.

3. The API server checks Redis for the short code.

4. Cache hit: Redis returns the original URL. Skip to step 6.

5. Cache miss: The server queries the database, gets the original URL, and writes it back into Redis with a TTL.

6. The server returns an HTTP 302 Found with the Location header set to the original URL.

Why Redis first? Because a cache lookup takes under 1ms, while a database query takes 5-20ms. With a 100:1 read-to-write ratio, even a modest cache hit rate of 80% eliminates the vast majority of database reads.
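A quick calculation shows how the hit rate translates into database load. This is a rough sketch: db_qps is a hypothetical helper, and the traffic figures are the average and peak estimates from the back-of-envelope section.

```python
def db_qps(read_qps: float, hit_rate: float) -> float:
    """Reads per second that miss the cache and fall through to the database."""
    return read_qps * (1.0 - hit_rate)


for label, qps in (("average", 4_000), ("peak", 20_000)):
    for hit_rate in (0.0, 0.80, 0.90, 0.99):
        print(f"{label:>7} traffic, {hit_rate:.0%} hit rate -> "
              f"{db_qps(qps, hit_rate):,.0f} DB reads/sec")
```

At an 80% hit rate, the 4,000 reads/sec average drops to about 800 database reads/sec, and every additional point of hit rate matters most at peak.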

The cache-aside pattern is the right fit here. The application checks the cache, falls back to the database on a miss, and populates the cache after a miss. You don't need write-through caching because the write path is low-volume and the data is essentially immutable once created. A URL mapping almost never changes.

For TTL strategy, set Redis entries to expire after 24-48 hours. Popular links will be re-cached constantly through organic traffic. Unpopular links will naturally evict, keeping memory usage proportional to the active working set rather than the total dataset.

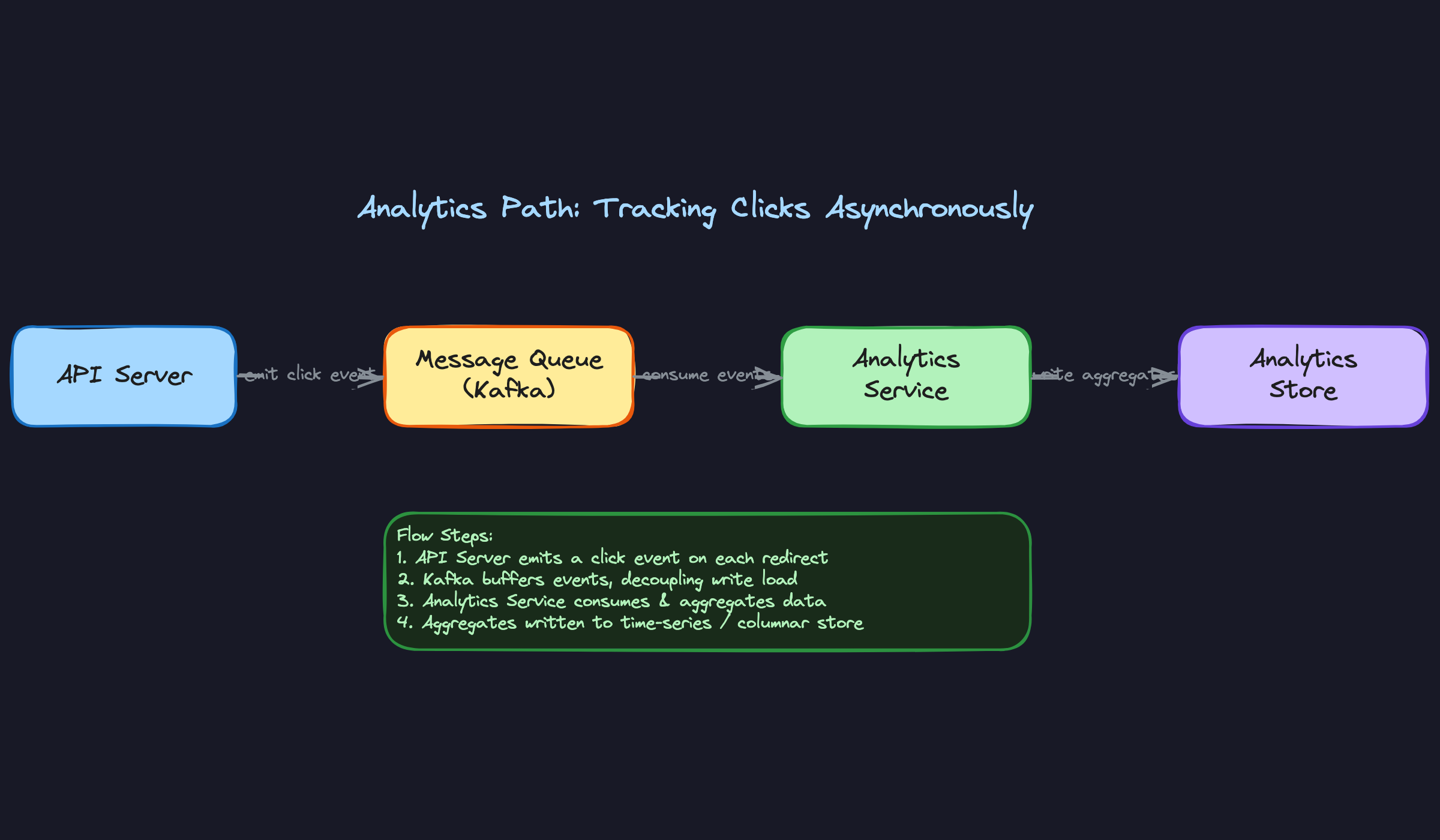

3) Tracking Click Analytics (Async Path)

Components involved: API Server, Message Queue (Kafka), Analytics Service, Analytics Store.

If you try to write analytics data synchronously during the redirect, you'll add latency to the hot path. That's unacceptable. The redirect needs to return in under 50ms. Writing to an analytics database could take 10-50ms on its own, and under load it could spike much higher.

Instead, treat analytics as a fire-and-forget side channel:

1. During the redirect (step 6 above), the API server asynchronously publishes a click event to Kafka. This event includes the short_code, timestamp, referrer, user-agent, and IP (for geo lookup).

2. A separate analytics consumer service reads from Kafka.

3. The consumer enriches the event (e.g., IP → geo lookup) and writes aggregated data to a time-series or columnar store like ClickHouse or TimescaleDB.

The API server doesn't wait for Kafka acknowledgment before returning the redirect. The publish is non-blocking. If Kafka is temporarily unavailable, you might lose a small number of click events, and that's an acceptable tradeoff for keeping redirects fast.

Kafka also gives you replay capability. If the analytics service goes down or you need to reprocess events with a new schema, you can replay from the Kafka topic without losing data.
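The fire-and-forget behavior can be sketched without Kafka at all. In the example below, a bounded asyncio.Queue stands in for the Kafka topic (an assumption made purely for illustration); publish_click and analytics_consumer are hypothetical names. The point it demonstrates is that the publish never blocks, and that a full buffer drops the event instead of delaying the redirect.

```python
import asyncio
import time


def publish_click(queue: asyncio.Queue, short_code: str, referrer, user_agent: str, ip: str) -> bool:
    """Non-blocking publish: returns False (event dropped) if the buffer is full."""
    event = {
        "short_code": short_code,
        "timestamp": time.time(),
        "referrer": referrer,
        "user_agent": user_agent,
        "ip": ip,
    }
    try:
        queue.put_nowait(event)   # never awaits, so the redirect is not delayed
        return True
    except asyncio.QueueFull:
        return False              # acceptable loss: the redirect still succeeds


async def analytics_consumer(queue: asyncio.Queue, sink: list) -> None:
    """Stand-in for the analytics service: enrich each event, then store it."""
    while True:
        event = await queue.get()
        event["geo"] = "unknown"  # placeholder for the IP -> geo lookup
        sink.append(event)
        queue.task_done()


async def demo() -> tuple:
    queue = asyncio.Queue(maxsize=2)   # tiny buffer to force a drop
    sink = []
    consumer = asyncio.create_task(analytics_consumer(queue, sink))
    accepted = [publish_click(queue, "a1B2c3", None, "curl/8.0", "203.0.113.7")
                for _ in range(3)]
    await queue.join()                 # wait for the consumer to drain the buffer
    consumer.cancel()
    return accepted, sink


accepted, sink = asyncio.run(demo())
print(accepted, len(sink))
```

The third publish is rejected because the two-slot buffer is already full, which mirrors losing a few click events while the queue is unavailable.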

Putting It All Together

Here's the full picture. The system has three distinct data flows layered on top of shared infrastructure:

Stateless API servers sit behind a load balancer and handle both writes and reads. They're the only component that touches all three paths. Because they hold no state, you can horizontally scale them by adding more instances behind the load balancer.

Redis absorbs the read amplification. With a working set of maybe 10-50 million active URLs (roughly 5-25 GB at ~500 bytes per entry, and less if you cache only the code and the URL), a single Redis deployment handles the entire read cache comfortably. You don't need to shard Redis until you're well past the scale described in our requirements.

The database (Postgres or MySQL) is the source of truth. At 40 writes/sec, a single primary handles writes without breaking a sweat. Add one or two read replicas if you want redundancy for cache-miss fallback queries, but honestly, with Redis absorbing 80%+ of reads, even that's optional at this scale.

Kafka plus the analytics pipeline runs completely independently. If it falls over, redirects keep working. If you need to add new analytics dimensions later, you add a new consumer. The redirect path doesn't change.

The beauty of this architecture is that each layer can scale independently. Reads spiking? Add Redis capacity or put a CDN in front (we'll discuss this in deep dives). Writes growing? Shard the database by short code prefix or switch to a distributed ID generation scheme. Analytics backlogged? Add more Kafka consumers. No single component is a bottleneck at the stated scale.

Deep Dives

"How do we generate unique short codes without collisions?"

This is the question your interviewer is waiting for. It's the heart of the URL shortener problem, and how you tier through the options tells them a lot about your design maturity.

Bad Solution: Hash and Truncate

The instinct most candidates have is to hash the original URL with something like MD5 or SHA-256, then take the first 7 characters as the short code.

```python
import base64
import hashlib


def generate_short_code(original_url: str) -> str:
    # Hash the URL, then keep only the first 7 characters of the encoding.
    hash_bytes = hashlib.md5(original_url.encode()).digest()
    # The URL-safe alphabet avoids '+' and '/', which would break a path segment.
    return base64.urlsafe_b64encode(hash_bytes)[:7].decode()
```

It feels clean. Same URL always produces the same code (deduplication for free!), and you don't need any external state. But the math kills you. Seven base64 characters carry only 42 bits, about 4.4 trillion possible codes, and by the birthday bound you should expect the first collision after only a few million URLs, well before you hit a billion. You now need a collision-check loop: hash, check the database, if taken, append a counter or salt and re-hash. Under high write throughput, this retry loop becomes a real performance problem and a source of race conditions.

There's a subtler issue too. Two different users submitting the same long URL get the same short code. That sounds like a feature until one of them deletes their link and the other user's redirect breaks. You'd need to add user-scoping to the hash input, which erodes the "simplicity" argument quickly.
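The collision math is worth being able to reproduce. The sketch below uses the standard birthday approximations; the function names are illustrative, and the code space of 64^7 assumes the 7-character base64 truncation shown above.

```python
import math


def urls_for_collision_probability(p: float, code_space: int) -> float:
    """Approximate number of URLs at which P(at least one collision) reaches p."""
    return math.sqrt(2 * code_space * math.log(1 / (1 - p)))


def expected_colliding_pairs(n_urls: int, code_space: int) -> float:
    """Expected number of URL pairs that share a code after n_urls insertions."""
    return n_urls * (n_urls - 1) / (2 * code_space)


code_space = 64 ** 7   # 7 base64 characters = 42 bits

print(f"code space:               {code_space:,}")
print(f"50% collision chance at:  {urls_for_collision_probability(0.5, code_space):,.0f} URLs")
print(f"colliding pairs at 100M:  {expected_colliding_pairs(100_000_000, code_space):,.0f}")
```

Under these approximations the odds of a collision reach 50% at roughly 2.5 million URLs, and the first month of traffic alone (100M URLs) would be expected to produce on the order of a thousand colliding pairs.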

Good Solution: Base62-Encoded Auto-Increment ID

A much simpler approach: let the database assign an auto-incrementing integer ID, then encode it to base62 (a-z, A-Z, 0-9) to get a short, URL-safe string.

```python
ALPHABET = "0123456789abcdefghijklmnopqrstuvwxyzABCDEFGHIJKLMNOPQRSTUVWXYZ"


def encode_base62(num: int) -> str:
    if num == 0:
        return ALPHABET[0]
    result = []
    while num > 0:
        # Peel off the least significant base62 digit each iteration.
        result.append(ALPHABET[num % 62])
        num //= 62
    return ''.join(reversed(result))


# encode_base62(1000000) -> "4c92"
# encode_base62(56800235583) -> "ZZZZZZ" (max 6-char code)
```

With 6 characters of base62, you get 56.8 billion unique codes. That's plenty: five years at 100M new URLs per month is only 6 billion. No collision checks, no retry loops, and the logic is trivial to implement.

The trade-offs are real, though. Auto-increment IDs are sequential, so anyone can estimate your total link count by decoding a short code. That might matter for a public product. More importantly, a single auto-incrementing sequence means a single writer. You can't easily shard writes across multiple database nodes because you need a globally unique, monotonically increasing counter. At 40 writes/sec this isn't a crisis, but it's an architectural ceiling you're choosing to live under.
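The information leak is easy to demonstrate. Assuming the same alphabet as the encoder above, anyone can invert a short code back to its integer ID; decode_base62 below is an illustrative helper, and the two sample codes are made up.

```python
ALPHABET = "0123456789abcdefghijklmnopqrstuvwxyzABCDEFGHIJKLMNOPQRSTUVWXYZ"
INDEX = {ch: i for i, ch in enumerate(ALPHABET)}


def decode_base62(code: str) -> int:
    """Invert base62 encoding: recover the integer ID behind a short code."""
    num = 0
    for ch in code:
        num = num * 62 + INDEX[ch]
    return num


# A competitor creates one link today and one tomorrow, then subtracts.
id_today = decode_base62("4c92")
id_tomorrow = decode_base62("4c9Z")
print(id_today, id_tomorrow, id_tomorrow - id_today)
```

The difference between two codes created a known time apart is a direct estimate of how many links were created in between.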

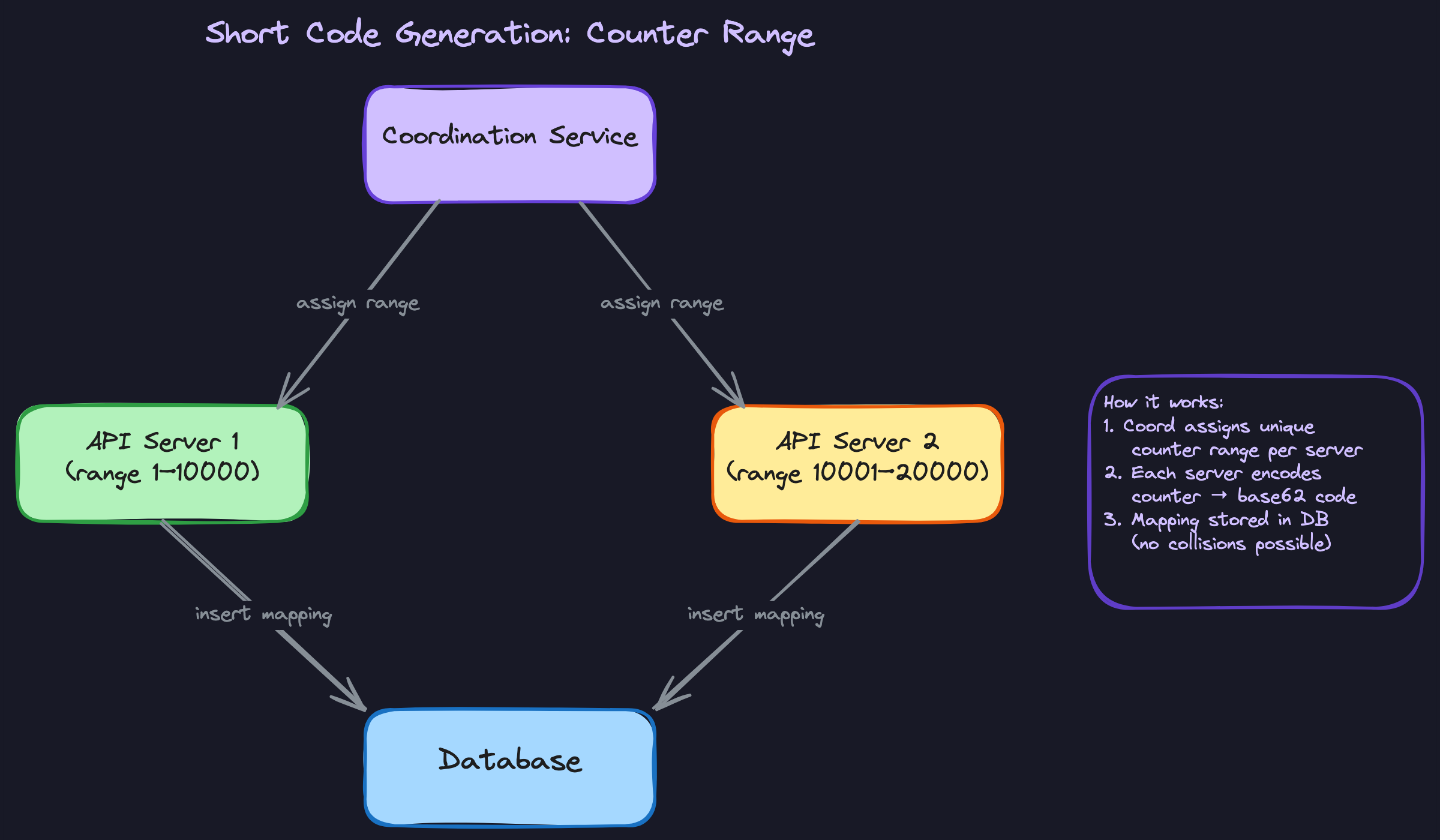

Great Solution: Pre-Allocated Counter Ranges

Take the base62 encoding idea but remove the single-writer bottleneck. A coordination service (Zookeeper, etcd, or even a simple database table with atomic increments) hands out ranges of IDs to each API server. Server 1 gets 1-10,000. Server 2 gets 10,001-20,000. Each server then increments locally, in memory, with zero coordination on every write.

```python
class RangeExhaustedError(Exception):
    """Raised when a server has used every ID in its allocated range."""


class ShortCodeGenerator:
    def __init__(self, range_start: int, range_end: int):
        self.current = range_start
        self.range_end = range_end  # inclusive upper bound

    def next_code(self) -> str:
        if self.current > self.range_end:
            raise RangeExhaustedError("Request new range from coordinator")
        code = encode_base62(self.current)
        self.current += 1
        return code
```

When a server exhausts its range, it asks the coordinator for a new one. The coordinator itself is doing very little work (one request per 10,000 writes), so it's not a bottleneck. If a server crashes mid-range, you lose the unused IDs in that range. That's fine. Wasting a few thousand IDs out of 56 billion is a rounding error.

This gives you everything: no collisions (ranges never overlap), no coordination on the hot path, horizontal write scaling, and short codes that don't reveal your exact volume because different servers are encoding from different parts of the number space.
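The coordinator side can be sketched just as briefly. In the example below, RangeCoordinator is a hypothetical in-process stand-in for Zookeeper, etcd, or a database row with an atomic increment; a lock plays the role of the atomic update, and the 10,000 range size matches the example above.

```python
import threading


class RangeCoordinator:
    """Hands out non-overlapping, inclusive ID ranges."""

    def __init__(self, range_size: int = 10_000, start: int = 1):
        self._next_start = start
        self._range_size = range_size
        self._lock = threading.Lock()

    def allocate(self) -> tuple:
        # The lock makes read-and-advance atomic, like an atomic increment would.
        with self._lock:
            start = self._next_start
            self._next_start += self._range_size
        return start, start + self._range_size - 1


coordinator = RangeCoordinator()
print(coordinator.allocate())   # first server's range
print(coordinator.allocate())   # second server's range

# Simulate several API servers requesting ranges concurrently.
allocated = []

def worker() -> None:
    for _ in range(50):
        allocated.append(coordinator.allocate())

threads = [threading.Thread(target=worker) for _ in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()

starts = [start for start, _ in allocated]
print(len(starts), len(set(starts)))   # equal counts mean no range was issued twice
```

In a real deployment the same contract (read the counter, advance it by the range size, return the old value) would be enforced by the coordination service rather than a local lock.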

"How do we scale reads to handle viral links?"

Your average short URL gets a handful of clicks. But when someone posts a link on Twitter that goes viral, a single short code might receive millions of requests per minute. Your system needs to handle both cases without the viral link taking down the redirect path for everyone else.

Bad Solution: Direct Database Lookups

Every GET /{short_code} hits the database. At 4,000 QPS average this might survive, but a viral spike can push a single short code to tens of thousands of QPS on its own. Your database connection pool saturates, latency spikes for all users, and you're one trending tweet away from an outage.

Good Solution: Redis Cache-Aside

Put a Redis cluster in front of the database. On each redirect request, check Redis first. On a cache miss, read from the database and populate Redis with a TTL (say, 24 hours for active links).

```python
from datetime import datetime, timezone

# `redis` and `db` are assumed to be already-configured async clients.
async def resolve_short_code(code: str) -> str | None:
    # Check cache first
    cached_url = await redis.get(f"url:{code}")
    if cached_url:
        return cached_url

    # Cache miss: hit the database
    row = await db.fetch_one(
        "SELECT original_url, expires_at FROM url_mappings WHERE short_code = $1", code
    )
    now = datetime.now(timezone.utc)
    if not row or (row.expires_at and row.expires_at < now):
        return None

    # Populate cache
    await redis.set(f"url:{code}", row.original_url, ex=86400)
    return row.original_url
```

This handles the common case well. Popular links stay hot in Redis, and the database only sees cache misses. With a small working set of active URLs, your cache hit rate should be 90%+ easily.

The gap shows up with truly viral links and geographic distribution. If your Redis cluster and API servers are in us-east-1, users in Tokyo are paying 150ms+ in network latency before the redirect even starts. That's noticeable.

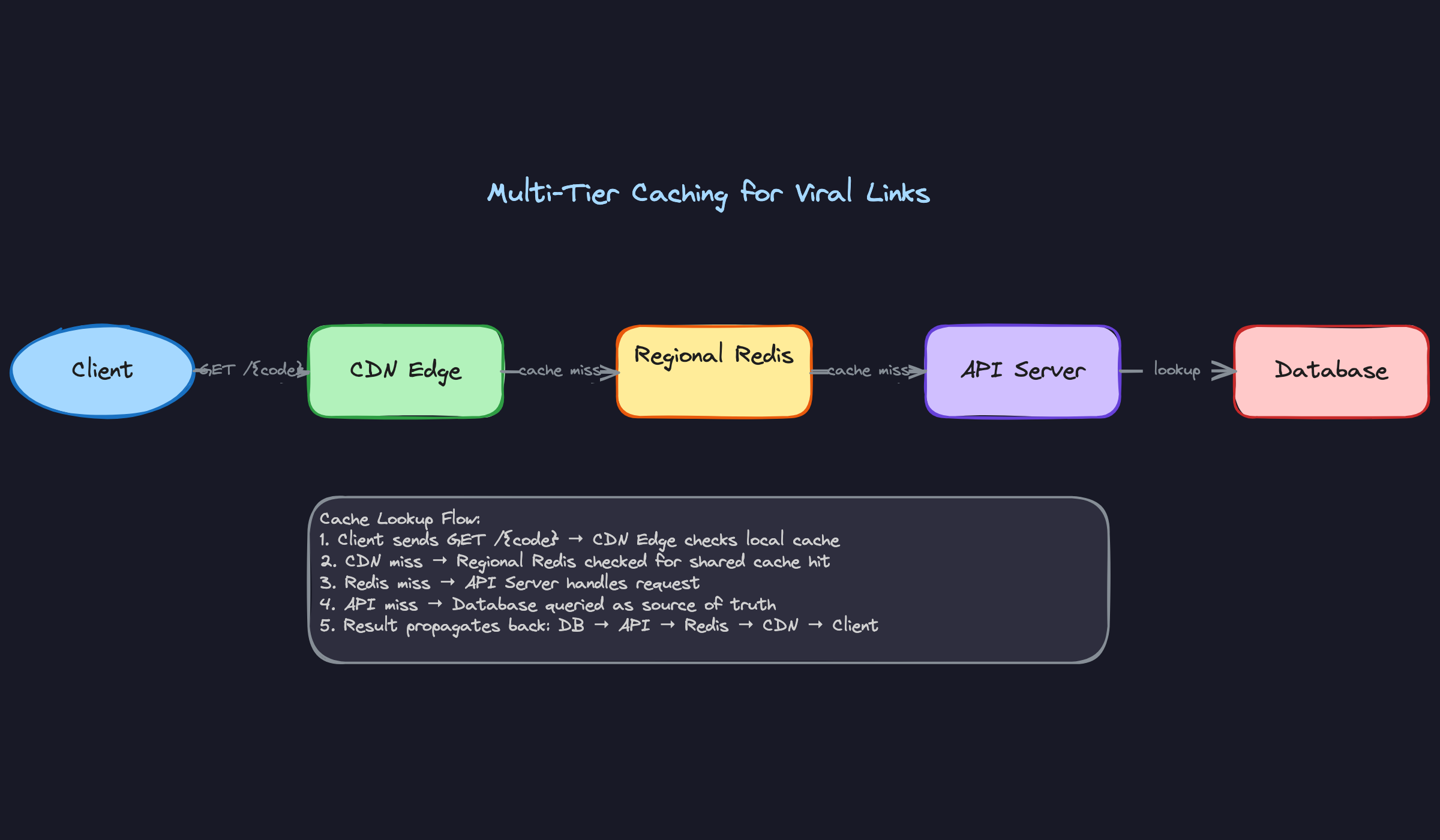

Great Solution: Multi-Tier Caching with CDN Edge Redirects

Layer your caching. The CDN (CloudFront, Cloudflare) sits closest to the user and can serve redirect responses directly from edge nodes. Behind that, regional Redis clusters handle cache misses within each geographic region. The database is the final fallback.

For the CDN layer, you configure your edge to cache 302 responses with a short TTL (say, 5 minutes). A viral link gets served from 200+ edge locations simultaneously, and your origin servers barely feel it.

```
CDN config (conceptual):
  Cache-Control: public, max-age=300
  (no Vary header: short code responses are identical for all users)
```

One subtlety: you need 302 (temporary) redirects here, not 301 (permanent). A 301 tells the browser to cache the redirect forever and never ask again. That's great for reducing load, but it means you lose all analytics visibility and can never update or expire the link for that user. With 302 plus a CDN TTL, you get edge caching benefits while retaining control. Keep in mind that clicks answered at the edge never reach your origin, so count those from CDN access logs.

For links that are about to go viral (say, a brand just created a short link for a Super Bowl ad), you can proactively warm the cache. The API server pushes the mapping into Redis and triggers a CDN prefetch at creation time, so the first wave of traffic hits warm caches everywhere.

Rate limiting per short code is your safety valve. If a single code exceeds, say, 50,000 requests/second at the origin, something is probably wrong (bot traffic, DDoS). Apply per-code rate limits at the load balancer level to protect downstream systems.
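A per-code limit can be as simple as a fixed-window counter. The sketch below is an in-process illustration, not a load balancer configuration: PerCodeRateLimiter is a hypothetical class, the clock is injectable so the behavior can be demonstrated deterministically, and the tiny limit of 2 is only for the demo.

```python
import time
from collections import defaultdict


class PerCodeRateLimiter:
    """Fixed-window limiter: at most `limit_per_second` requests per code per second."""

    def __init__(self, limit_per_second: int, clock=time.monotonic):
        self.limit = limit_per_second
        self.clock = clock
        self.window = None                 # the one-second window being counted
        self.counts = defaultdict(int)

    def allow(self, short_code: str) -> bool:
        current = int(self.clock())
        if current != self.window:
            # A new second has started: reset every counter.
            self.window = current
            self.counts.clear()
        self.counts[short_code] += 1
        return self.counts[short_code] <= self.limit


fake_now = [100.0]                         # controllable clock for the demo
limiter = PerCodeRateLimiter(limit_per_second=2, clock=lambda: fake_now[0])

results = [limiter.allow("viral") for _ in range(3)]
other = limiter.allow("quiet")             # other codes are unaffected
fake_now[0] = 101.2                        # the next window begins
after_reset = limiter.allow("viral")
print(results, other, after_reset)
```

Because the counters are keyed by short code, one abusive code is throttled without affecting redirects for any other link.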

"How do we handle link expiration and cleanup?"

Users want links that expire after a certain time. Sounds simple, but the implementation touches the read path, the cache layer, and storage reclamation. Getting it wrong means either serving stale redirects or burning resources scanning the entire database.

Bad Solution: Active-Only Cleanup

Run a cron job every hour that scans the database for expired links and deletes them.

```sql
-- MySQL syntax; PostgreSQL has no DELETE ... LIMIT and needs a subquery instead
DELETE FROM url_mappings WHERE expires_at < NOW() LIMIT 10000;
```

The problem: between cleanup runs, expired links still resolve. A user sets a 1-hour TTL, and the link might work for up to 2 hours depending on when the job last ran. Worse, you also need to invalidate the cache for every deleted record, or Redis will keep serving stale redirects until its own TTL expires. At scale, that invalidation fan-out gets expensive.

Good Solution: Lazy Deletion on Read

Check the expiration timestamp on every read. If the link is expired, return 404 and optionally delete the cache entry right there.

```python
async def resolve_short_code(code: str) -> str | None:
    # cache_or_db_lookup is the cache-aside read; now() is the current UTC time.
    row = await cache_or_db_lookup(code)
    if not row:
        return None
    if row.expires_at and row.expires_at < now():
        # Expired: drop the cache entry so it stops being served.
        await redis.delete(f"url:{code}")
        return None  # 404 to the client
    return row.original_url
```

This gives you correctness on the read path immediately. No expired link ever resolves successfully, regardless of when the cleanup job last ran. And it's cheap: you're already reading the record, so checking one timestamp field adds negligible overhead.

But lazy deletion alone means expired records pile up in the database forever if nobody clicks them. Over months, you accumulate millions of dead rows consuming storage and slowing index scans.

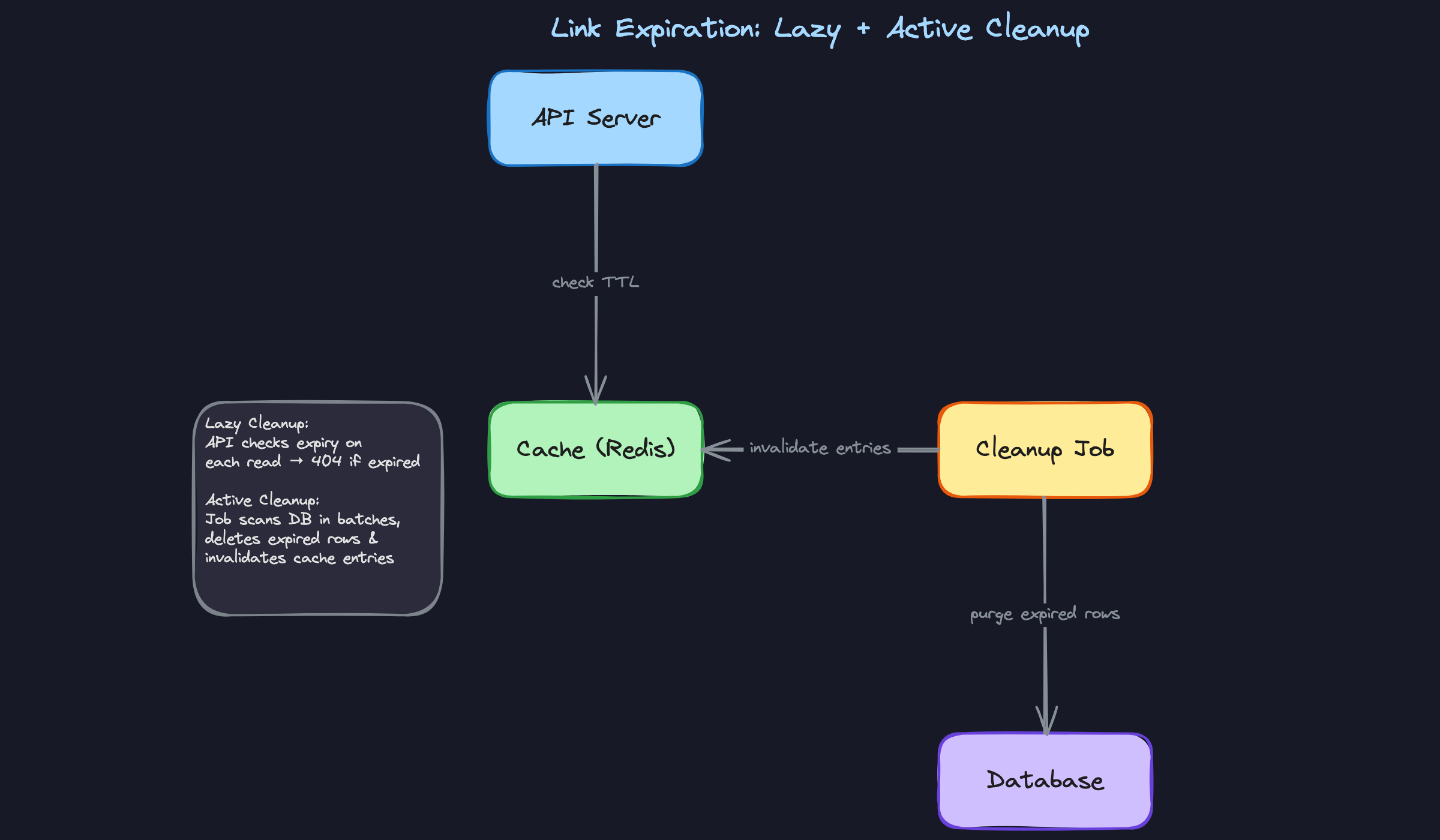

Great Solution: Lazy Deletion + Active Cleanup + Cache TTL Alignment

Combine all three mechanisms, each handling a different concern.

Lazy deletion ensures correctness on the read path. No expired link ever returns a redirect, period.

Cache TTL alignment means when you write a record to Redis, you set the Redis TTL to match the link's remaining lifetime. If a link expires in 3 hours, the Redis entry auto-evicts in 3 hours. No explicit invalidation needed.

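```python
# For links that have an expires_at. Redis rejects a zero or negative EX value,
# so an already-expired link is simply not cached.
remaining_ttl = int((row.expires_at - now()).total_seconds())
if remaining_ttl > 0:
    await redis.set(f"url:{code}", row.original_url, ex=remaining_ttl)
```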

Active cleanup runs as a background job to reclaim storage. It doesn't need to be real-time since lazy deletion already handles correctness. Run it during off-peak hours, delete in small batches to avoid lock contention, and use the expires_at index to avoid full table scans.

```sql
-- Efficient batch cleanup using the index on expires_at (PostgreSQL syntax).
-- PostgreSQL does not accept ORDER BY / LIMIT directly on DELETE, so bound the batch in a subquery.
DELETE FROM url_mappings
WHERE short_code IN (
    SELECT short_code FROM url_mappings
    WHERE expires_at < NOW() - INTERVAL '1 day'
    ORDER BY expires_at
    LIMIT 5000
);
```

Notice the 1-day buffer. We're not deleting links the instant they expire; we give a grace period. This avoids races where a link expires at 3:00:00 PM, the cleanup job deletes it at 3:00:01 PM, but a read request at 3:00:00.500 PM was still in flight and now gets a database error instead of a clean 404 from the lazy check.
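To see the batching loop end to end, here is a runnable sketch that uses SQLite from the standard library as a stand-in for the production database. The table layout, the integer timestamps, and the function names are assumptions for the demo; the batch is bounded with a subquery, a form that both SQLite and PostgreSQL accept.

```python
import sqlite3

GRACE_SECONDS = 86_400   # the 1-day buffer


def cleanup_batch(conn: sqlite3.Connection, now_ts: int, batch_size: int) -> int:
    """Delete at most `batch_size` rows that expired before the grace period."""
    cur = conn.execute(
        """
        DELETE FROM url_mappings
        WHERE short_code IN (
            SELECT short_code FROM url_mappings
            WHERE expires_at IS NOT NULL AND expires_at < ?
            ORDER BY expires_at
            LIMIT ?
        )
        """,
        (now_ts - GRACE_SECONDS, batch_size),
    )
    conn.commit()            # short transactions keep lock contention low
    return cur.rowcount


def cleanup_expired(conn: sqlite3.Connection, now_ts: int, batch_size: int = 5000) -> int:
    """Run small batches until a batch comes back short."""
    total = 0
    while True:
        deleted = cleanup_batch(conn, now_ts, batch_size)
        total += deleted
        if deleted < batch_size:
            return total


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE url_mappings (short_code TEXT PRIMARY KEY, "
             "original_url TEXT NOT NULL, expires_at INTEGER)")
conn.execute("CREATE INDEX idx_expires_at ON url_mappings (expires_at)")

now_ts = 1_000_000
rows = [(f"old{i}", "https://example.com", i) for i in range(12)]        # long expired
rows += [("grace", "https://example.com", now_ts - 1_000)]               # expired, inside buffer
rows += [("live", "https://example.com", now_ts + 3_600)]                # not expired
rows += [("forever", "https://example.com", None)]                       # no expiry
conn.executemany("INSERT INTO url_mappings VALUES (?, ?, ?)", rows)

deleted_total = cleanup_expired(conn, now_ts, batch_size=5)
remaining = conn.execute("SELECT COUNT(*) FROM url_mappings").fetchone()[0]
print(deleted_total, remaining)
```

Only the twelve long-expired rows are removed; the row inside the grace period, the live row, and the row with no expiry all survive.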

"How do we handle custom aliases?"

This one comes up quickly in most interviews, and the answer is shorter than you'd think. But the edge cases matter.

Users want vanity URLs like /my-brand instead of /a3Xk9. These custom aliases live in the same namespace as auto-generated short codes, which means you need to prevent collisions between the two systems.

The first thing to do: reserve a portion of the code space. If your auto-generated codes are always 6-7 characters of base62, require custom aliases to be at least 8 characters, or restrict them to contain at least one hyphen or special pattern. This eliminates any possibility of a custom alias colliding with a generated code.
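A validation function makes the namespace rule explicit. The sketch below is one possible policy under stated assumptions: generated codes are at most 7 base62 characters, and an alias is accepted if it is at least 8 characters long or contains a hyphen. The pattern and the name is_valid_custom_alias are illustrative.

```python
import re

GENERATED_MAX_LEN = 7   # assumed upper bound on auto-generated code length

# Letters and digits, optionally separated by single hyphens (no leading or trailing hyphen).
ALIAS_PATTERN = re.compile(r"[A-Za-z0-9]+(?:-[A-Za-z0-9]+)*")


def is_valid_custom_alias(alias: str) -> bool:
    """Accept only aliases that can never equal a generated base62 code."""
    if not ALIAS_PATTERN.fullmatch(alias):
        return False
    # Generated codes are short and hyphen-free, so either property separates the spaces.
    return len(alias) > GENERATED_MAX_LEN or "-" in alias


for candidate in ("my-brand", "summer2025", "promo-1", "a3Xk9z", "-bad", "bad--alias"):
    print(f"{candidate!r}: {is_valid_custom_alias(candidate)}")
```

A 6-character alphanumeric alias such as a3Xk9z is rejected because it is indistinguishable from a generated code.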

If you can't enforce that separation (maybe the product requires short custom aliases), then you need a uniqueness check. The database handles this cleanly with a unique constraint on short_code:

```sql
ALTER TABLE url_mappings ADD CONSTRAINT uq_short_code UNIQUE (short_code);
```

When two users simultaneously request /my-brand, one INSERT succeeds and the other gets a unique constraint violation. Your application catches that error and returns a 409 Conflict.

```python
# UniqueViolationError and HTTPException stand for whatever your
# database driver and web framework provide.
try:
    await db.execute(
        "INSERT INTO url_mappings (short_code, original_url, user_id) VALUES ($1, $2, $3)",
        custom_alias, original_url, user_id
    )
except UniqueViolationError:
    raise HTTPException(409, "This alias is already taken")
```

No distributed locks. No two-phase checks. The database constraint is your concurrency control, and it's the right tool here because custom alias creation is low-volume (a tiny fraction of total writes). Don't over-engineer this.

What is Expected at Each Level

Interviewers calibrate their expectations based on your level. Knowing what "good" looks like at each tier helps you allocate your time. A mid-level candidate who nails the fundamentals will outscore a senior candidate who rushes to deep dives with a shaky foundation.

Mid-Level

- You should clearly articulate both core flows (shorten and redirect) without being prompted. Walk through the write path and the read path separately, showing the interviewer you understand this is two distinct problems with different performance profiles.

- Propose a reasonable short code generation strategy and explain why it works. Hashing with base62 or auto-incrementing IDs are both fine here. You don't need to land on the optimal solution, but you do need to acknowledge collision risk or sequentiality as a concern.

- Add a cache layer for the read path and explain why. Saying "it's read-heavy, roughly 100:1, so caching popular URLs in Redis gives us the biggest latency win" is enough. You don't need to design a multi-tier caching architecture.

- Present a clean API contract and database schema. The interviewer should be able to look at your POST /urls and GET /{short_code} definitions and immediately understand the data flow. Sloppy schemas (missing primary keys, no expiration field) signal carelessness.

Senior

- Compare at least two code generation strategies head-to-head and pick a winner with clear reasoning. The interviewer expects you to articulate why counter ranges beat naive hashing, not just that they do. Talk about collision probability, write contention, and information leakage.

- Proactively raise the 301 vs. 302 redirect tradeoff before the interviewer asks. This is one of those signals that tells them you've actually thought about the product, not just the infrastructure. Tie your choice back to the analytics requirement.

- Decouple analytics from the redirect hot path. You should be the one to say "we can't afford synchronous writes to an analytics store on every redirect" and propose the async pipeline (Kafka or similar). Explain what happens if the queue backs up and why that's acceptable.

- Drive the conversation. Senior candidates don't wait for the interviewer to ask "what about caching?" or "how do you handle expiration?" You should be sequencing your own deep dives, flagging tradeoffs as you go, and asking the interviewer which areas they'd like to explore further.

Staff+

- Address multi-region uniqueness. If you have data centers in US-East and EU-West both generating short codes, how do you guarantee no collisions? This is where you discuss partitioned counter ranges coordinated through etcd/Zookeeper, or region-prefixed ID spaces. The interviewer wants to see that you think beyond a single datacenter.

- Push caching up to the CDN layer. Serving 302 redirects from edge nodes means most viral link traffic never reaches your origin servers at all. Discuss how you'd set Cache-Control headers, handle TTL mismatches between the CDN and your database, and what happens when a link is deleted but the CDN still has it cached.

- Raise abuse prevention and operational concerns without being asked. Spam URL detection (integrating with Google Safe Browsing or similar), per-IP rate limiting on the creation endpoint, and monitoring cache hit rates are all things a staff engineer would flag. If your cache hit rate drops from 95% to 70%, that's an alert-worthy event that could cascade into database overload.

- Discuss how the system evolves. What happens at 10x the current scale? When do you shard the database, and on what key? How do you migrate from a single-region deployment to multi-region without downtime? Staff candidates think in terms of operational lifecycle, not just launch-day architecture.
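For the multi-region uniqueness point in the Staff+ list, a region-prefixed ID space is the easiest option to sketch. The example below is illustrative only: the region names, the one-character prefixes, and region_code are assumptions, and each region is presumed to run its own local counter (or counter ranges) with no cross-region coordination.

```python
ALPHABET = "0123456789abcdefghijklmnopqrstuvwxyzABCDEFGHIJKLMNOPQRSTUVWXYZ"

# Hypothetical mapping: one reserved base62 character per region.
REGION_PREFIX = {"us-east": "0", "eu-west": "1"}


def encode_base62(num: int) -> str:
    if num == 0:
        return ALPHABET[0]
    digits = []
    while num > 0:
        digits.append(ALPHABET[num % 62])
        num //= 62
    return "".join(reversed(digits))


def region_code(region: str, local_counter: int) -> str:
    """Prefix the region's character so codes from different regions never collide."""
    return REGION_PREFIX[region] + encode_base62(local_counter)


print(region_code("us-east", 1_000_000))
print(region_code("eu-west", 1_000_000))
```

Both regions can hand out the same local counter value and still produce distinct codes, at the cost of one extra character and a code that reveals its region of origin.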