Batch vs Stream Processing

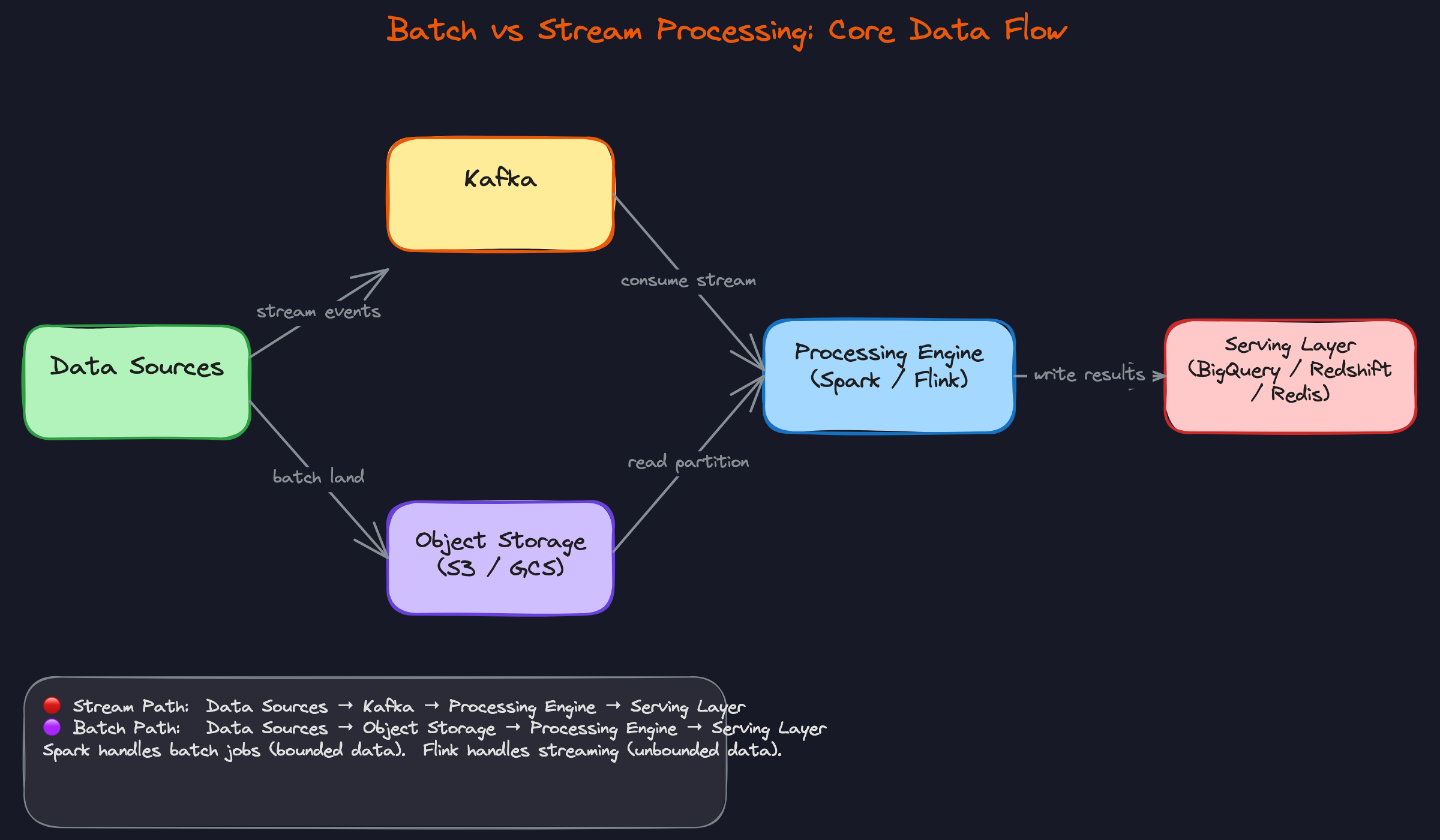

A nightly Spark job crunches 500GB of clickstream data and lands clean aggregates in BigQuery by 6am. A Flink job updates a fraud score within 200ms of a transaction hitting Kafka. Both are "processing data at scale." The tools, the failure modes, the operational costs, and the architectural decisions around them are almost nothing alike.

Batch processing works on bounded datasets: a fixed chunk of data that already exists, read from object storage, transformed, and written somewhere useful. Stream processing works on unbounded data: events arriving continuously, processed as they come in, with no natural finish line. The distinction isn't just about speed. It's about how data arrives, how you recover from failures, how you handle schema changes, and what guarantees you can make about correctness.

That last part is what interviewers at Uber, Airbnb, and Netflix are actually probing when they ask about this. "We need near-real-time dashboards" is a business requirement, not a technical decision. Translating it into a choice between a 15-minute Airflow DAG, a Spark Structured Streaming micro-batch job, or a stateful Flink deployment with watermarks and exactly-once semantics is the engineering judgment they're testing. Most production data platforms use both paradigms, and knowing when to reach for each is one of the clearest signals that separates a senior data engineer from someone who just knows the tools.

How It Works

Start with batch, because it's the simpler mental model. Raw data lands in object storage, say S3 or GCS, partitioned by date. At midnight (or whatever interval you've configured), Airflow wakes up and fires a Spark or dbt job. That job reads exactly one partition, the bounded slice of data for that day, applies your transformations, and writes the results to BigQuery or Redshift. Then it exits. Done. The scheduler logs a success, and nothing runs again until tomorrow night.

That clean exit is load-bearing. Batch jobs have a defined start, a defined end, and a finite dataset. That's what makes them easy to reason about, retry, and backfill.

Streaming works differently at every step. Instead of data sitting at rest in S3, events are being produced continuously into Kafka topics by apps and backend services. A stream processor like Flink or Spark Structured Streaming sits downstream, consuming those events as they arrive. It applies transformations, often with windowed aggregations like "count events per user in the last 5 minutes," and writes results to a serving layer like Redis or Cassandra, or back into another Kafka topic.

The stream processor never exits. It just keeps running.

The job that never finishes

This is the mechanical difference that interviewers want you to feel, not just recite. A batch job knows it's done because it runs out of data. A stream job has no such signal. It has to handle backpressure when Kafka is producing faster than it can consume, checkpoint its state to durable storage so it can recover from failures without reprocessing everything, and manage memory continuously across a potentially infinite stream of events.

Your interviewer cares about this because it changes your failure model entirely. When a batch job fails, you re-run it. When a stream job fails mid-window, you need to know where it left off, which is exactly what Flink's checkpointing mechanism is designed to handle.
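To make "knowing where it left off" concrete, here is a minimal sketch of checkpoint-and-recover in plain Python. It is a toy model, not Flink's actual mechanism: the class name `CheckpointedCounter`, the in-memory "durable" snapshot, and the checkpoint interval are all assumptions for illustration. The point it shows is that the offset and the state are saved together, so replay after a crash neither skips nor double-counts events.

```python
import copy


class CheckpointedCounter:
    """Toy stream job: counts keys from a log and snapshots (offset, state) together."""

    def __init__(self):
        self.offset = 0                # next log position to read
        self.counts = {}               # running state
        self._checkpoint = (0, {})     # stand-in for durable storage

    def process(self, log, upto, checkpoint_every=3):
        while self.offset < upto:
            key = log[self.offset]
            self.counts[key] = self.counts.get(key, 0) + 1
            self.offset += 1
            if self.offset % checkpoint_every == 0:
                # Offset and state are saved atomically, as one snapshot.
                self._checkpoint = (self.offset, copy.deepcopy(self.counts))

    def crash_and_recover(self):
        # Everything after the last checkpoint is lost and must be replayed.
        self.offset, saved = self._checkpoint
        self.counts = copy.deepcopy(saved)


log = ["a", "b", "a", "c", "a", "b", "c"]

job = CheckpointedCounter()
job.process(log, upto=5)        # last checkpoint was taken at offset 3
job.crash_and_recover()         # rewinds to offset 3 with the matching state
job.process(log, upto=len(log))

print(job.offset, job.counts)
```

If the offset were restored without the state (or the reverse), the replayed events would be counted twice or not at all, which is why the two are always snapshotted as a pair.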

Processing time vs event time

Here's where a lot of candidates get caught out. A user opens your mobile app on the subway at 11:58pm, triggers a click event, and that event finally reaches Kafka at 12:03am once their phone reconnects. If your Flink job is computing hourly aggregations based on when events arrive (processing time), that click lands in the wrong bucket. Your midnight aggregate is wrong.

Event time processing uses the timestamp embedded in the event itself, not the moment the processor sees it. This matters because in an interview, the moment you mention windowed aggregations, a good interviewer will ask "what about late data?" You need to know that watermarks are how stream processors track event-time progress, and that you have to decide what to do with events that arrive after the watermark has advanced past their window. Drop them, or route them to a side output for later correction.
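The subway example can be reduced to a few lines. This sketch assumes a hypothetical event with two timestamps (`event_time` set on the phone, `arrival_time` when the processor sees it) and made-up dates; it only shows which hourly bucket each choice puts the click into.

```python
from datetime import datetime


def hour_bucket(ts):
    """Truncate a timestamp to the start of its hour."""
    return ts.replace(minute=0, second=0, microsecond=0)


# Hypothetical click: generated at 11:58pm, delivered at 12:03am.
click = {
    "event_time": datetime(2024, 5, 1, 23, 58),
    "arrival_time": datetime(2024, 5, 2, 0, 3),
}

processing_bucket = hour_bucket(click["arrival_time"])  # what processing time sees
event_bucket = hour_bucket(click["event_time"])         # what actually happened

print("processing-time bucket:", processing_bucket)
print("event-time bucket:     ", event_bucket)
```

The same event lands in two different hours (and here, two different days) depending on which timestamp the aggregation keys on.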

State is the hidden cost

Counting events in a batch job is a SQL aggregation. Counting unique users in a rolling 24-hour window in a stream is a state management problem.

For every key you're tracking (every user ID, every product, every session), your stream processor has to maintain running state across windows. At scale, that's millions of keys held in memory or spilled to disk. Flink handles this with its RocksDB state backend, which persists state locally and snapshots it to S3 during checkpoints. Kafka Streams uses changelog topics to do the same thing. Neither is free. The bigger your key space and the longer your windows, the more memory, disk I/O, and checkpoint overhead you're paying.

This matters because when an interviewer asks "how would you count unique visitors per hour in real time?", the right answer isn't just "use Flink." It's "use Flink with a keyed state store, and here's what the state management looks like at scale."
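A rough sketch of what that state looks like, in plain Python rather than Flink's API. The class `UniqueVisitorsPerHour` and its methods are hypothetical names, and timestamps are assumed to be epoch seconds. It keeps an exact set of user IDs per open hourly window, which is the simplest correct approach and also the one whose memory grows with the number of distinct users.

```python
from collections import defaultdict


class UniqueVisitorsPerHour:
    """Toy state store: one set of user IDs per open hourly window."""

    def __init__(self):
        self.state = defaultdict(set)   # hour index -> user IDs seen in that hour

    def on_event(self, user_id, event_ts):
        hour = event_ts // 3600         # event_ts is epoch seconds (assumption)
        self.state[hour].add(user_id)

    def close_window(self, hour):
        """Emit the count and free the state for that window."""
        return len(self.state.pop(hour, set()))

    def state_size(self):
        """Number of user IDs currently held in state across all open windows."""
        return sum(len(users) for users in self.state.values())


counter = UniqueVisitorsPerHour()
for user, ts in [("u1", 10), ("u2", 20), ("u1", 30), ("u3", 3700)]:
    counter.on_event(user, ts)

held_before = counter.state_size()      # IDs held while both windows are open
hour0_uniques = counter.close_window(0)
held_after = counter.state_size()

print(held_before, hour0_uniques, held_after)
```

State is only released when a window closes, so longer windows and larger key spaces translate directly into more data held and more data to snapshot at every checkpoint.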

Patterns You Need to Know

In an interview, you'll usually need to pick a specific approach. Here are the ones worth knowing.

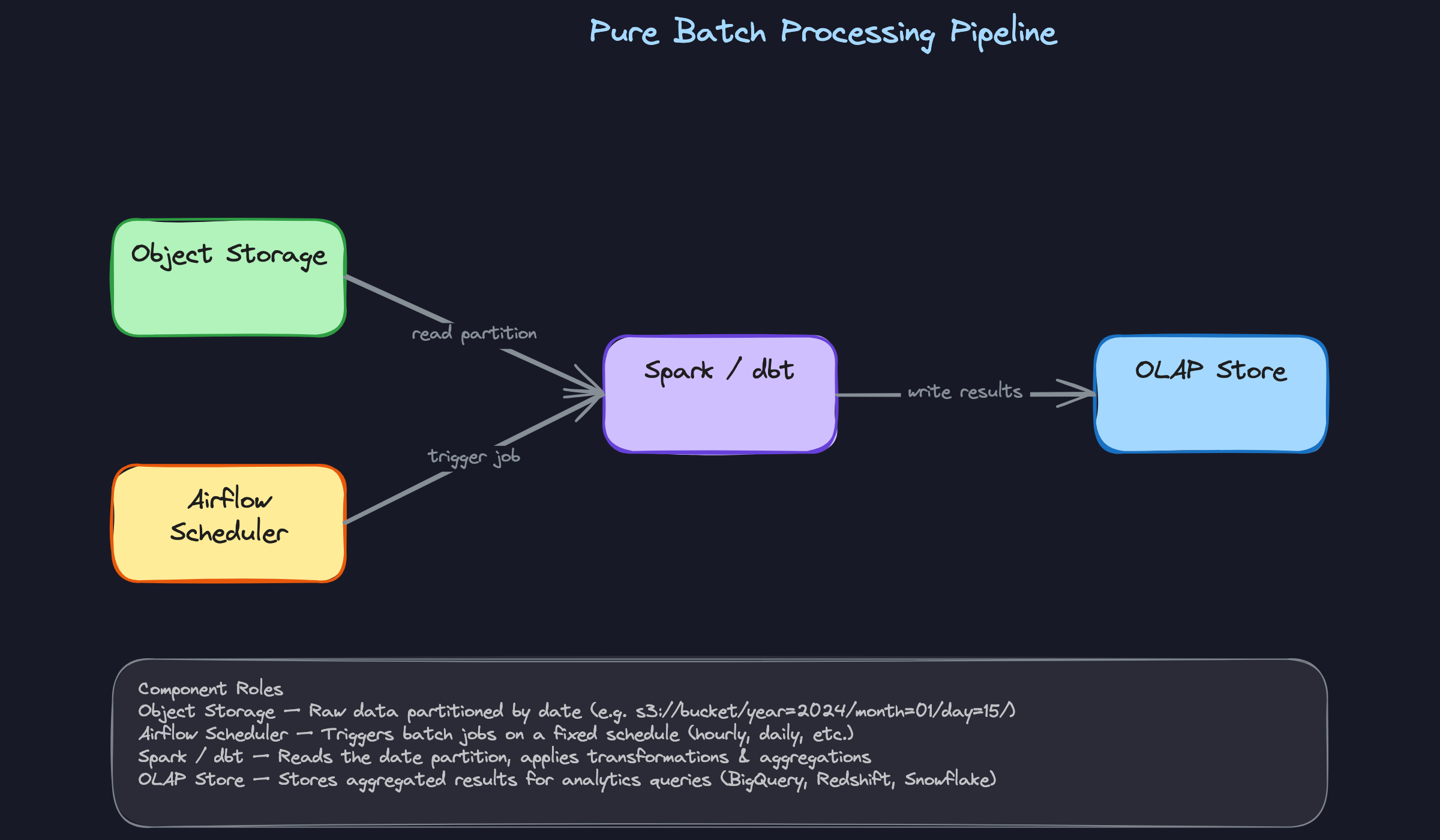

Pure Batch

A scheduler (Airflow, Dagster) fires a Spark or dbt job on a fixed interval. The job reads a bounded partition from object storage, usually scoped by date, applies transformations, and overwrites the output partition in your OLAP store. The overwrite is the key move: because you're replacing the entire partition, the job is naturally idempotent. Run it twice, get the same result. That makes reruns and backfills straightforward.

This is still the right default for most analytical workloads. If the business question is "how many users converted last week?", you don't need a 24/7 Flink cluster. A nightly Spark job is simpler to write, cheaper to run, and far easier to debug when something goes wrong.
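The partition-overwrite idea is easy to demonstrate without Spark. In this sketch a dict stands in for the output table, keyed by date partition; `run_daily_job` and the sample records are hypothetical. What matters is the last line of the function: it replaces the partition rather than appending to it.

```python
from collections import defaultdict


def run_daily_job(raw_events, output_table, run_date):
    """Aggregate one date partition and overwrite the matching output partition."""
    totals = defaultdict(int)
    for event in raw_events:
        if event["date"] == run_date:            # read only the bounded slice
            totals[event["user_id"]] += event["clicks"]
    output_table[run_date] = dict(totals)        # overwrite, never append
    return output_table


raw = [
    {"date": "2024-05-01", "user_id": "u1", "clicks": 3},
    {"date": "2024-05-01", "user_id": "u2", "clicks": 1},
    {"date": "2024-05-01", "user_id": "u1", "clicks": 2},
    {"date": "2024-05-02", "user_id": "u1", "clicks": 7},
]

table = {}
run_daily_job(raw, table, "2024-05-01")
first_run = dict(table)
run_daily_job(raw, table, "2024-05-01")          # rerun or backfill of the same day

print(table)
```

Had the job appended rows instead, the rerun would have doubled every count for that date.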

When to reach for this: Any time the interviewer describes a reporting or analytics use case where hourly or daily freshness is acceptable. Lead with batch. Let the interviewer push you toward streaming if they need to.

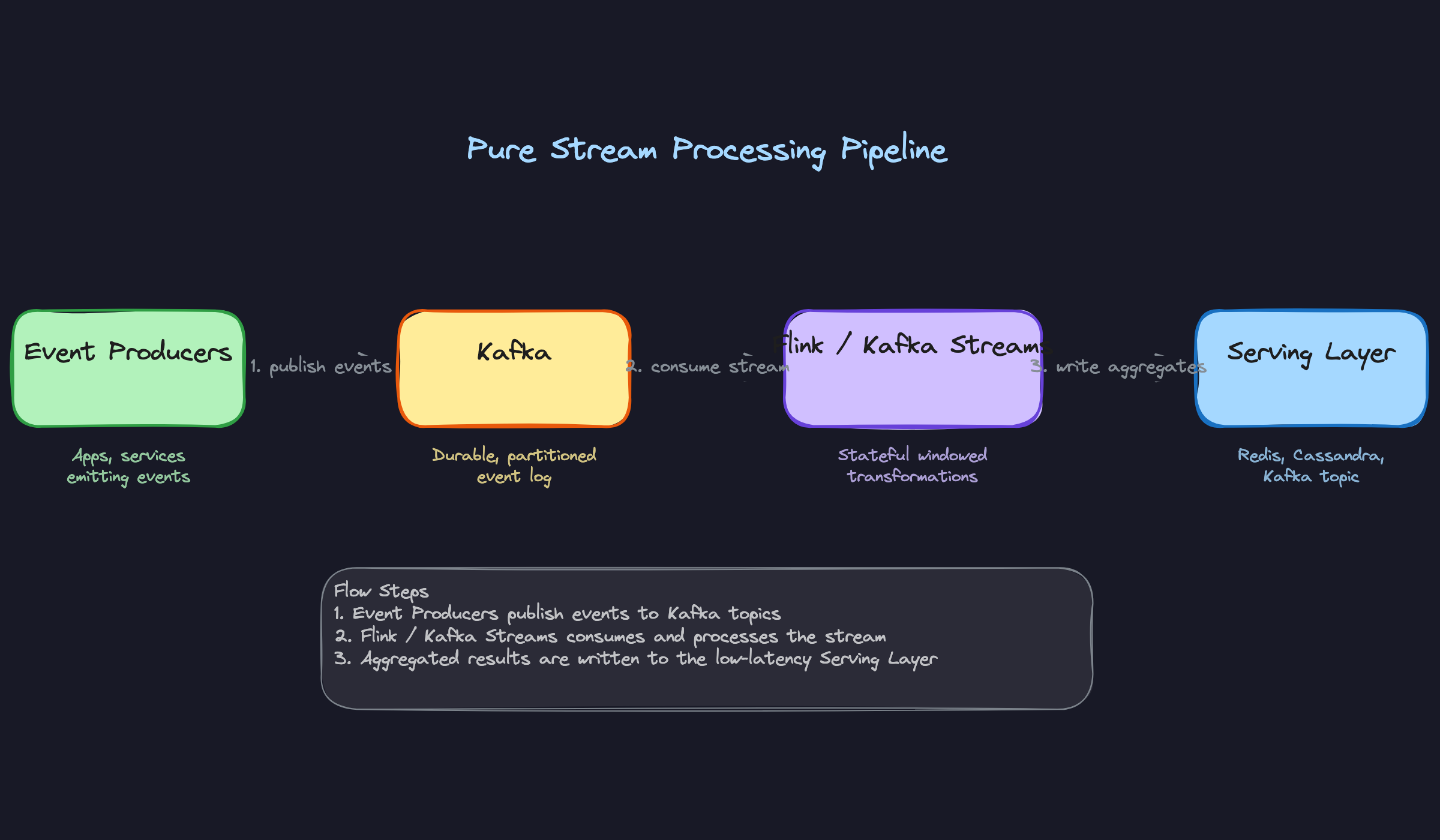

Pure Streaming

Events flow from producers into Kafka, and a stream processor (Flink, Kafka Streams, or Spark Structured Streaming) consumes them continuously. The processor applies windowed aggregations, joins, or enrichments, then writes results to a serving layer like Redis or Cassandra. The job never finishes. It runs indefinitely, checkpointing state to survive failures.

The operational cost here is real. You're maintaining a long-running stateful process across potentially millions of keys. Exactly-once semantics (which you almost certainly want) require coordinated checkpointing, idempotent sinks, and transactional Kafka producers. When a Flink job crashes and recovers from a checkpoint, you need confidence it won't double-write to your downstream store. None of that is free.

When to reach for this: Fraud detection, real-time recommendations, live dashboards with sub-minute latency requirements. If the interviewer says "we need to act on this event within seconds," pure streaming is your answer.

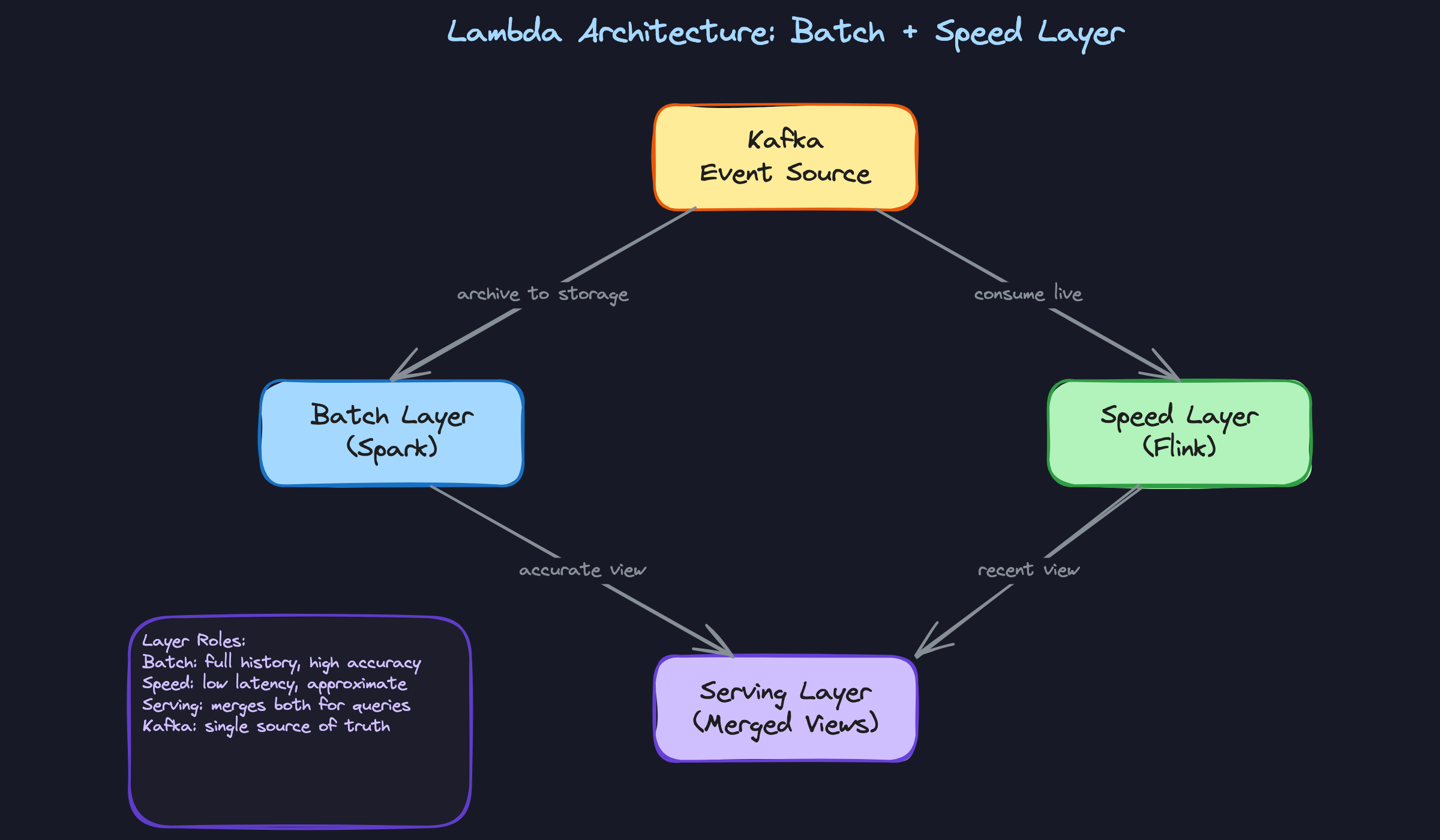

Lambda Architecture

Lambda runs two parallel pipelines off the same event source. The batch layer (Spark) reprocesses full historical data on a schedule and produces accurate, complete results. The speed layer (Flink or similar) processes the most recent events in near-real-time and produces approximate results that are available immediately. The serving layer merges both views: queries get accurate historical data from the batch layer and recent approximate data from the speed layer, with the batch results overwriting the speed layer's output as they catch up.
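One way to picture the serving-layer merge is the sketch below. The function `merge_views`, the hour-keyed dicts, and the `batch_complete_through` cutoff are assumptions for illustration; real serving layers vary in how they track which periods the batch layer has finalized.

```python
def merge_views(batch_view, speed_view, batch_complete_through):
    """Prefer batch results; fall back to speed-layer results for newer periods."""
    merged = dict(batch_view)
    for hour, value in speed_view.items():
        if hour > batch_complete_through:   # batch has not caught up to this hour yet
            merged[hour] = value
    return merged


batch_view = {9: 120, 10: 98}    # accurate, recomputed from full history
speed_view = {10: 95, 11: 40}    # approximate, but available immediately

merged = merge_views(batch_view, speed_view, batch_complete_through=10)
print(merged)
```

Hour 10 takes the batch value even though the speed layer also produced one, and hour 11 is served from the speed layer until the next batch run replaces it.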

The appeal is obvious: you get both accuracy and low latency. The pain is that you're maintaining two separate codebases that need to produce semantically identical results. A bug fix in your batch aggregation logic has to be replicated in the streaming job. A schema change has to be handled in both places. Teams that have lived with Lambda for a few years tend to hate it.

When to reach for this: Mention Lambda when the interviewer describes a use case that needs both historical accuracy and real-time freshness, and then immediately flag the dual-codebase maintenance cost. Showing you know the trade-off is more impressive than just naming the pattern.

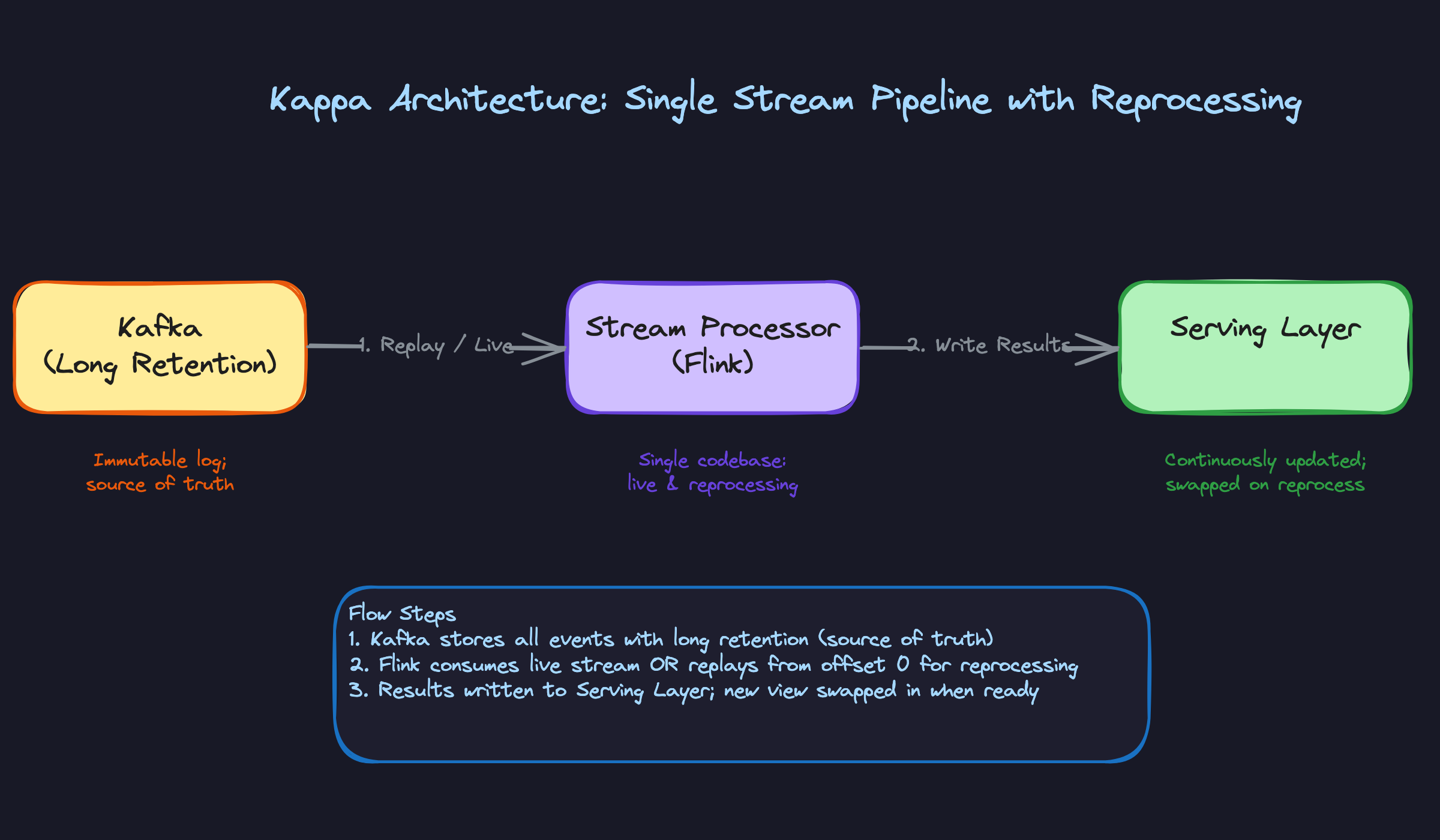

Kappa Architecture

Kappa's premise is simple: if your stream processor is powerful enough, you don't need a separate batch layer at all. Kafka becomes the source of truth, with long retention configured so the full event history is available for replay. A single Flink job handles both live processing and reprocessing. When you need to backfill or fix a bug, you spin up a new instance of the same job, point it at the beginning of the Kafka topic, let it catch up, then swap it into production.

One codebase. One processing model. That's the win. The trade-offs are around cost and throughput. Retaining months or years of events in Kafka (or Kafka-compatible storage like WarpStream or Confluent's tiered storage) isn't cheap. Reprocessing at scale also means your stream processor needs to handle historical replay throughput without starving the live pipeline, which often means running the reprocessing job in parallel rather than in-place.
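The replay-and-swap workflow can be sketched with a list standing in for the retained Kafka topic. Everything here is hypothetical (`run_job`, the two transform versions, the refund bug); the shape to notice is that the fix ships as a new version of the same job, replayed from offset 0, rather than as a separate batch codebase.

```python
def run_job(log, transform, start_offset=0):
    """Single processing path used for both live traffic and replays."""
    result = {}
    for event in log[start_offset:]:
        key, amount = transform(event)
        result[key] = result.get(key, 0) + amount
    return result


def transform_v1(event):
    # Hypothetical bug: refunds (negative amounts) are counted as sales.
    return event["user"], abs(event["amount"])


def transform_v2(event):
    # Fixed version: refunds reduce the total.
    return event["user"], event["amount"]


# Stand-in for a Kafka topic with full retention.
log = [
    {"user": "u1", "amount": 10},
    {"user": "u1", "amount": -10},
    {"user": "u2", "amount": 5},
]

live = run_job(log, transform_v1)                        # what production currently serves
candidate = run_job(log, transform_v2, start_offset=0)   # new instance replays from the start
serving = candidate                                      # swap once the replay has caught up

print(live, serving)
```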

When to reach for this: When the interviewer asks how you'd simplify a Lambda architecture, or when the system needs reprocessing capability without the overhead of maintaining separate batch and streaming codebases. Kappa is the modern answer to Lambda's complexity.

Comparing the Four Patterns

| Pattern | Latency | Operational Complexity | Best For |
|---|---|---|---|
| Pure Batch | Hours to days | Low | Reporting, historical analytics, backfills |
| Pure Streaming | Milliseconds to seconds | High | Fraud detection, real-time features, live dashboards |
| Lambda | Seconds (speed) + hours (batch) | Very high | Use cases needing both real-time and historical accuracy |
| Kappa | Seconds, with replay capability | Medium-high | Streaming-first platforms that need clean reprocessing |

For most interview problems, you'll default to pure batch. It's simpler, cheaper, and the right fit for the majority of analytical workloads. Reach for pure streaming when the latency requirement is genuinely in the seconds range and the business impact justifies the operational overhead. Lambda is worth mentioning historically, but if you propose it, be ready to explain why you'd accept the dual-codebase burden. Kappa is the cleaner modern alternative when your team has the streaming maturity to pull it off.

What Trips People Up

Here's where candidates lose points — and it's almost always one of these.

The Mistake: Reaching for Streaming by Default

The bad answer sounds like this: "I'd set up a Flink job consuming from Kafka, apply windowed aggregations, and write results to the serving layer." Fine. But then the interviewer asks what the business question is, and it turns out to be "what were total sales yesterday?"

A 24/7 stateful Flink cluster to answer a daily question is like renting a Formula 1 car to drive to the grocery store. A nightly Spark job reading a date partition from S3 and writing to BigQuery is simpler, cheaper, and infinitely easier to debug when something goes wrong at 2am. Streaming systems have real operational costs: you need to manage checkpointing, handle backpressure, monitor consumer lag, and deal with failures in long-running jobs.

Always anchor your choice to the actual SLA. If the business can wait an hour, or a day, batch is probably the right call.

The Mistake: Confusing "Fast" with "Streaming"

"We need data within 15 minutes" does not mean you need Flink. This comes up constantly, and it's a meaningful distinction.

Micro-batch Spark Structured Streaming running every 5 minutes can hit a 15-minute SLA with far less operational overhead than a fully stateful streaming deployment. In some cases, a well-tuned Airflow DAG with incremental dbt models gets you there too. True event-by-event streaming with stateful operators is the right tool when you need sub-second latency or continuous aggregations over unbounded windows. Not for "15 minutes is fine."
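A quick back-of-the-envelope check makes the 15-minute claim concrete. The model below is a simplification with assumed numbers: it supposes batches start on a fixed schedule, each run finishes before the next one starts, and an unlucky event arrives just after a batch has begun. That event waits one full interval for the next batch, then that batch's run time.

```python
def worst_case_staleness(trigger_interval_min, run_time_min):
    """Worst case: the event just misses a batch, waits one interval, then one run."""
    return trigger_interval_min + run_time_min


def meets_sla(trigger_interval_min, run_time_min, sla_min):
    return worst_case_staleness(trigger_interval_min, run_time_min) <= sla_min


# Assumed figures: 5-minute trigger, 2-minute run; nightly job with a 45-minute run.
micro_batch = worst_case_staleness(5, 2)
micro_batch_ok = meets_sla(5, 2, sla_min=15)
nightly_ok = meets_sla(24 * 60, 45, sla_min=15)

print(micro_batch, micro_batch_ok, nightly_ok)
```

Under those assumptions a 5-minute micro-batch sits comfortably inside a 15-minute SLA, which is the whole argument for not jumping straight to event-by-event processing.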

The Mistake: Forgetting That Events Arrive Late

Candidates describe a windowed aggregation — "count unique users per 5-minute window" — and stop there. The interviewer then asks: "What if a mobile event is generated at 11:58pm but doesn't reach Kafka until 12:04am because the user's phone was offline?"

Most candidates go quiet.

If you don't handle watermarks and allowed lateness, your aggregations are silently wrong. Events that arrive after the window closes either get dropped or land in the wrong bucket, and you'll never know unless you're explicitly tracking it. In Flink, you configure a watermark strategy that tells the engine how far behind event time can lag before a window is considered complete. You can also route late arrivals to a side output for separate handling rather than just discarding them.

This is one of the most common probe questions at companies like Uber and Airbnb, where mobile event latency is a real production problem. Know the difference between event time and processing time, and have a concrete answer for what happens to data that arrives after the watermark.
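Here is a small simulation of the mechanism, in plain Python rather than Flink's API. The window size, the 60-second out-of-orderness bound, the function name `run`, and the sample events are all assumptions. The watermark trails the highest event time seen by `MAX_DELAY`; a window closes once the watermark reaches its end, and anything arriving for an already-closed window goes to a side list instead of being silently dropped.

```python
from collections import defaultdict

WINDOW = 300      # 5-minute tumbling windows, in seconds
MAX_DELAY = 60    # assumed bound on how out of order events can be


def run(events):
    """Process (user, event_time) pairs in arrival order."""
    open_windows = defaultdict(set)   # window start -> users seen
    results, late = {}, []
    watermark = float("-inf")

    for user, event_time in events:
        start = event_time - event_time % WINDOW
        if start + WINDOW <= watermark:
            late.append((user, event_time))       # window already closed: side output
        else:
            open_windows[start].add(user)

        # Watermark only moves forward.
        watermark = max(watermark, event_time - MAX_DELAY)

        # Close every window whose end the watermark has reached.
        for s in sorted(w for w in open_windows if w + WINDOW <= watermark):
            results[s] = len(open_windows.pop(s))

    return results, late


events = [
    ("u1", 10), ("u2", 290), ("u3", 310),
    ("u4", 250),    # out of order, but window 0 is still open
    ("u5", 400),    # pushes the watermark to 340, closing window 0
    ("u6", 120),    # too late: window 0 has already emitted
    ("u7", 620),
]

results, late = run(events)
print(results, late)
```

Note that `u4` is counted even though it arrived out of order, while `u6` is not, and that the windows starting at 300 and 600 never emit in this run because the watermark has not passed them yet. Whether the late list gets merged back in later or discarded is the business decision the interviewer wants to hear you raise.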

The Mistake: Treating Exactly-Once as a Free Setting

"We'll configure exactly-once semantics" is one of those phrases that sounds confident and falls apart immediately under follow-up.

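End-to-end exactly-once in Flink depends on three things lining up: a replayable source such as Kafka, operator state captured in checkpoints, and a sink that either commits transactionally (a two-phase commit tied to checkpoints) or accepts idempotent writes. Checkpointing intervals directly affect your recovery latency and your throughput: tighter checkpoints mean more overhead but less data to replay after a failure. Kafka transactional producers add latency, because results only become visible to read-committed consumers once the transaction commits at a checkpoint. If any part of that chain doesn't support the guarantee, you don't have exactly-once; you have at-least-once with extra steps.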

The right answer isn't to avoid saying "exactly-once." It's to say it and immediately follow up with what it costs. Something like: "We'd use Flink's exactly-once mode with checkpointing, but that means configuring idempotent sinks and accepting some throughput reduction. If the sink doesn't support transactions, we'd need to design for idempotency at the application level instead." That's what a senior engineer sounds like.
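"Design for idempotency at the application level" usually means keying writes by a stable event ID so a replayed event overwrites itself. The sketch below contrasts that with an append-only sink under at-least-once delivery. Both sink classes and the delivery sequence are hypothetical; the duplicate `e2` stands in for an event replayed after recovery from a checkpoint.

```python
class AppendSink:
    """Naive sink: every delivery becomes a new row."""

    def __init__(self):
        self.rows = []

    def write(self, event_id, amount):
        self.rows.append((event_id, amount))

    def total(self):
        return sum(amount for _, amount in self.rows)


class IdempotentSink:
    """Upsert keyed by event ID: writing the same event twice is a no-op."""

    def __init__(self):
        self.rows = {}

    def write(self, event_id, amount):
        self.rows[event_id] = amount

    def total(self):
        return sum(self.rows.values())


# At-least-once delivery: "e2" is replayed after a simulated recovery.
deliveries = [("e1", 10), ("e2", 5), ("e2", 5), ("e3", 7)]

append_sink, idempotent_sink = AppendSink(), IdempotentSink()
for event_id, amount in deliveries:
    append_sink.write(event_id, amount)
    idempotent_sink.write(event_id, amount)

append_total = append_sink.total()
idempotent_total = idempotent_sink.total()
print(append_total, idempotent_total)
```

The idempotent sink gives an exactly-once result on top of at-least-once delivery, but only because every event carries a unique, stable ID. If producers can't guarantee that, this approach doesn't hold.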

How to Talk About This in Your Interview

When to Bring It Up

The batch vs stream decision belongs in the conversation the moment you hear anything about data freshness, latency SLAs, or pipeline design. Specific cues to listen for:

- "We need near-real-time dashboards" or "data within a few minutes"

- "We're running nightly jobs but stakeholders want fresher data"

- "We need to detect fraud / anomalies / events as they happen"

- "Our pipeline needs to handle late-arriving data"

- Any mention of Kafka, Flink, or event-driven architecture

When you hear these, don't just nod and say "we could use streaming." Walk the interviewer through your reasoning. That's what they're actually evaluating.

Follow-Up Questions to Expect

"How do you handle exactly-once semantics in a streaming pipeline?" Exactly-once requires coordinated checkpointing in Flink, idempotent sinks, and transactional producers on the Kafka side; it's not a free config flag, and it costs throughput.

"What's the difference between Lambda and Kappa architecture?" Lambda runs a batch layer and a speed layer in parallel (two codebases, two sets of bugs); Kappa eliminates that by treating the Kafka log as the single source of truth and replaying it through one stream processor for both live processing and reprocessing.

"When would you stick with pure batch in 2024?" Anytime the freshness SLA is hourly or daily, the transformations are complex SQL-style aggregations, or the team doesn't have the operational maturity to run stateful streaming jobs reliably.

"How do watermarks work?" A watermark is the processor's estimate of how far event time has progressed; it advances as events arrive, and when the watermark passes a window boundary, that window closes and emits its result, with any events arriving after that point treated as late.

What Separates Good from Great

- A mid-level candidate picks batch or streaming based on the word "real-time" in the requirements. A senior candidate asks what "real-time" actually means to the business, then maps that to a latency number, then picks the simplest architecture that meets it.

- Mid-level candidates describe Lambda architecture as "batch plus streaming." Senior candidates immediately flag the dual-codebase maintenance burden and explain why most modern teams prefer Kappa when their stream processor can handle reprocessing throughput, or a well-partitioned batch pipeline when it can't.

- Knowing that late data exists is table stakes. What impresses interviewers is explaining the specific mechanism: watermarks, allowed lateness windows, side outputs, and the organizational decision about whether late events get corrected or discarded.