Join Data Science Interview MasterClass (May Cohort) led by FAANG Data Scientists | Just 2 seats remaining...

Data Science MasterClass (May) | 2 seats left

Choosing the Right Data Store

Choosing the Right Data Store

A candidate I coached last year had genuinely impressive depth. They knew Iceberg's merge-on-read vs. copy-on-write trade-offs cold. They could explain Kafka's log compaction from memory. They still bombed the system design round because when the interviewer asked "what would you use to store this data?", they said "Snowflake" before the problem statement was even finished.

That's the failure mode senior interviewers are watching for. Data store selection is one of the highest-signal moments in a DE interview, because it immediately reveals whether you think in trade-offs or just name-drop tools. Saying "I'd use Postgres" or "throw it in S3" in the first 30 seconds tells the interviewer you have a hammer and everything looks like a nail.

The opposite mistake is just as costly. Some candidates overcorrect and propose five stores for a problem that needs two, turning a straightforward pipeline into a distributed systems dissertation. Neither extreme shows the judgment a senior engineer actually needs.

What this guide gives you is a four-question decision process you can speak out loud in real time, so the interviewer hears structured thinking instead of instinct. Not a list of tools to memorize. A repeatable framework you can apply to any scenario they throw at you, whether it's a fraud detection feature store or a petabyte-scale clickstream lake.

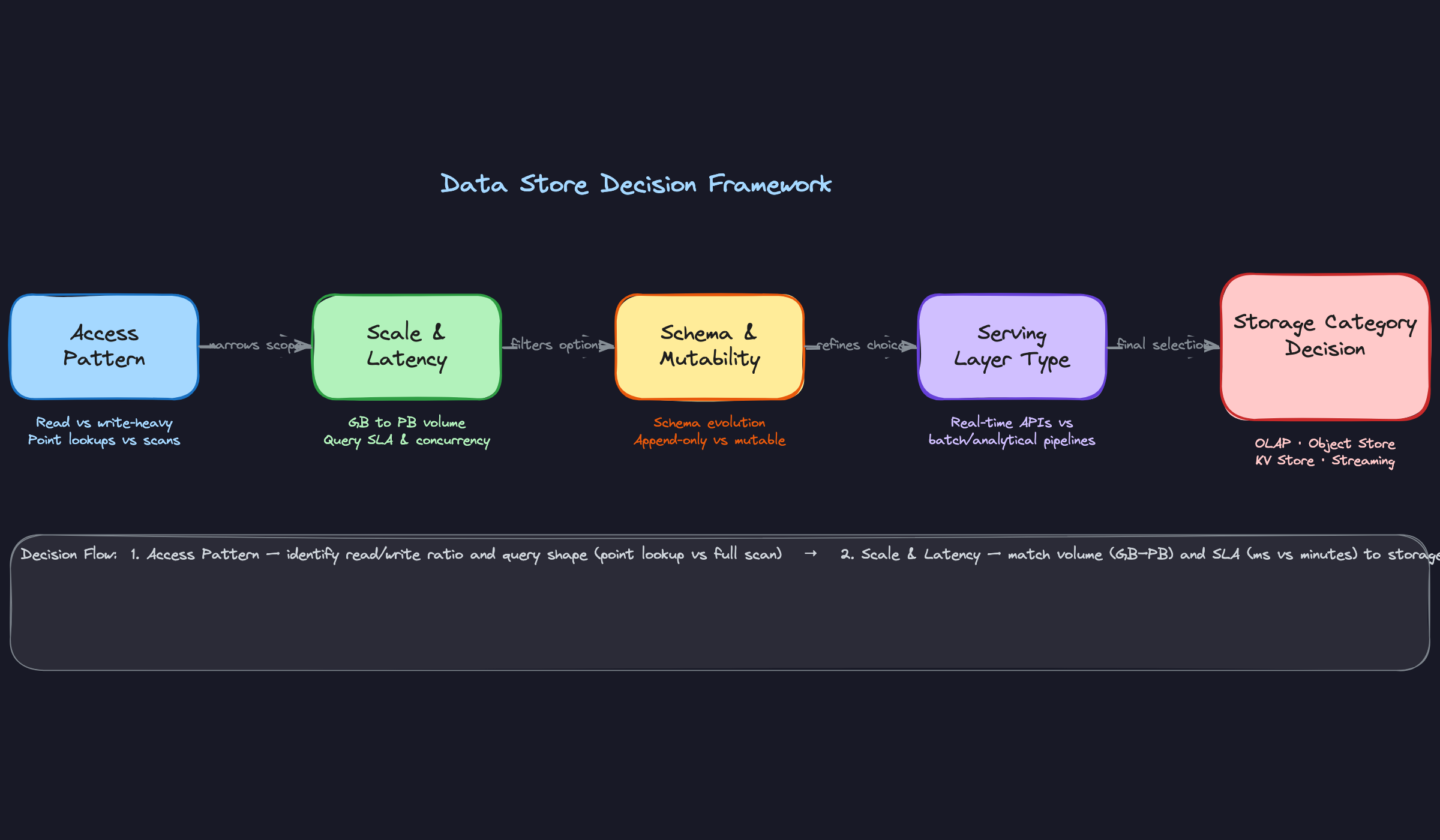

The Framework

Four questions. That's it. If you can answer these four questions about any system, you can justify any storage decision out loud, under pressure, in front of a skeptical senior engineer.

Internalize this table before anything else:

| Phase | Time | Goal |

|---|---|---|

| Q1: Access Pattern | 1-2 min | Determine read/write ratio, query shape (point lookup vs. scan) |

| Q2: Scale & Latency | 1-2 min | Pin down data volume, query SLA, and concurrency requirements |

| Q3: Schema & Mutability | 1 min | Understand how often the shape of data changes and whether records get updated |

| Q4: Serving Layer Type | 1 min | Decide if the consumer is a human dashboard, an API, or a downstream pipeline |

This is your verbal script for the first five minutes of any data store question. You're not guessing at tools yet. You're narrowing the decision space, one question at a time.

Q1: What Is the Access Pattern?

Start here, always. Before you name a single tool, you need to know how data flows in and out of this system.

What to do: - Ask whether reads dominate, writes dominate, or both are heavy - Identify the query shape: are consumers doing point lookups by ID, or scanning millions of rows with aggregations? - Clarify whether reads need to be random-access (give me trip ID 8675309) or sequential (give me all trips in the last 30 days, grouped by city)

What to say:

"The first thing I want to understand is the access pattern. Are we primarily reading or writing? And when we read, are we doing point lookups, or are these analytical queries that scan large ranges of data?"

Point lookups at low latency push you toward key-value stores like DynamoDB or Cassandra. Wide scans with aggregations push you toward columnar OLAP stores. If you skip this question and jump straight to "I'd use Snowflake," the interviewer hears that you don't think in trade-offs.

How the interviewer evaluates you: They're checking whether you distinguish between OLTP and OLAP access patterns. Candidates who conflate the two almost always pick the wrong store.

Q2: What Is the Scale and Latency Requirement?

Once you know the access pattern, you need to know the pressure it's under.

What to do: - Get a data volume estimate: gigabytes, terabytes, or petabytes? Growing at what rate? - Pin down the query SLA: is 200ms acceptable, or does this need sub-10ms? Is a 5-minute query fine for a nightly dashboard? - Ask about concurrency: ten analysts running queries, or ten thousand API calls per second?

What to say:

"Before I go further, I want to sanity-check the scale. Are we talking gigabytes or petabytes? And what's the latency budget? Is this serving a real-time API or a scheduled report?"

This question is where a lot of candidates lose points by skipping it. Recommending Redshift for a 50GB dataset that needs sub-100ms lookups is technically possible but practically wrong. Scale and latency together tell you whether managed OLAP is overkill, whether object storage is viable, or whether you need a purpose-built low-latency store.

How the interviewer evaluates you: They want to see you use numbers. Vague answers like "it could be a lot of data" signal that you've never designed a production pipeline. Throw out an estimate and ask if it's in the right ballpark.

Q3: Does the Schema Need to Evolve?

This is the question that separates candidates with production experience from those who've only read documentation.

What to do: - Ask whether new fields get added regularly (a product team shipping new event properties every sprint is a real constraint) - Clarify whether records get updated or deleted after they're written, or if this is append-only - Ask about downstream consumers: does a schema change break an existing Spark job or a BI tool?

What to say:

"One thing that matters a lot here is schema evolution. Are we expecting the shape of this data to change over time? And do records ever get updated after they're written, or is this append-only?"

Append-only, stable schema? Object storage with Parquet and a table format like Iceberg or Delta Lake is a strong default. Frequent schema changes with update/delete requirements? You need a store that handles ACID transactions and schema evolution gracefully, which is where Iceberg's schema evolution support or a warehouse like BigQuery earns its keep. Ignoring this question and proposing raw Parquet on S3 for a mutable dataset is a red flag.

How the interviewer evaluates you: Mentioning schema evolution unprompted signals that you've dealt with the pain of a broken pipeline at 2am because someone added a nullable column. That experience is exactly what they're hiring for.

Q4: Is This Serving Humans in Real-Time or Machines in Batch?

The last question reframes everything. The same data, stored differently, depending on who consumes it.

What to do: - Identify the consumer: a BI dashboard, a machine learning model, a microservice API, or another pipeline - Clarify the freshness requirement: does the consumer need data that's seconds old, minutes old, or is a daily snapshot fine? - Ask if there are multiple consumers with different needs, because that's where layered architectures become necessary

What to say:

"Last question before I propose anything: who or what is consuming this data, and how fresh does it need to be? Is this powering a live dashboard, feeding a model, or is it an input to another pipeline that runs nightly?"

A growth dashboard that refreshes once a day doesn't need the same serving layer as a fraud detection API that needs sub-50ms lookups. Getting this wrong means you'll propose a data warehouse for something that needs a feature store, or a Redis cache for something that only needs a scheduled Spark job.

How the interviewer evaluates you: They're watching whether you recognize that storage and serving are sometimes the same layer (BigQuery serving a dashboard) and sometimes different layers (Iceberg for storage, Redis for serving). Candidates who treat every consumer as identical miss this entirely.

Putting It Together: The Ride-Share Example

Walk through all four questions on a single scenario and watch how fast the decision space collapses.

The prompt: "Design a pipeline to process trip events for a ride-share app."

Q1, Access Pattern: Trip events are written continuously (high write volume), and reads are mostly analytical: aggregate revenue by city, driver utilization over time. No point lookups by trip ID needed in the analytical layer.

Q2, Scale & Latency: Millions of trips per day, growing to tens of billions of rows over years. Analytical queries can tolerate 10-30 seconds. But the ops team also wants a real-time view of active trips, which needs sub-second freshness.

Q3, Schema & Mutability: The product team ships new event fields every few weeks. Trip records are immutable once closed, but the schema itself evolves. Append-only writes, but schema changes are frequent.

Q4, Serving Layer: Two consumers. A data science team running batch queries (machines, batch). An ops dashboard showing live trip counts (humans, real-time).

The answers point directly to a layered architecture: Kafka for ingestion and real-time event retention, Iceberg on S3 for the historical analytical layer (handles schema evolution natively, append-only writes, petabyte-scale), and Redshift or BigQuery on top for the data science team's SQL queries. The ops dashboard gets a separate materialized view or a Redis layer fed from the Kafka stream.

Do this: When you land on a multi-store architecture, explicitly say why each layer exists. "Kafka handles ingestion and gives us replay. Iceberg on S3 is our historical store because it handles schema evolution and scales cheaply. Redshift sits on top for the analysts." One sentence per layer. It shows you're not over-engineering; you're solving distinct problems.

That's the framework in action. Four questions, five minutes, a justified answer you can defend.

Putting It Into Practice

Three scenarios. Each one shows the framework in motion, not just on paper. Read the dialogue, then steal the moves.

Scenario 1: Real-Time Fraud Detection Feature Store

The interviewer asks you to design the storage layer for a fraud detection system. Features like "transactions in the last 5 minutes" and "average spend per merchant" need to be served to an ML model at inference time.

Do this: Notice how the candidate didn't say "I'd use Redis" in the first sentence. They walked through what ruled things out before landing on what ruled things in. That's the move.

Don't do this: Don't cave when the interviewer pushes back. They're often stress-testing your reasoning, not correcting you. Acknowledge the alternative, explain the trade-off, and hold your ground if your reasoning is sound.

user:{id}:v2, so old and new features can coexist during rollout."Scenario 2: Historical Analytics Warehouse for a Growth Dashboard

The growth team wants a dashboard showing weekly active users, retention cohorts, and revenue by region. Data comes from three sources: a Postgres transactional DB, a mobile event stream, and a third-party payments API.

Do this: When the interviewer gives you a constraint you missed, absorb it cleanly and adjust. Don't apologize. Just say "In that case..." and pivot. It shows you're designing, not reciting.

SELECT region, SUM(revenue) FROM orders GROUP BY region only reads the two columns it needs instead of every row. That's the fundamental difference."Here's the Redshift table DDL you'd sketch out during this conversation:

1CREATE TABLE fact_transactions (

2 transaction_id VARCHAR(36) NOT NULL,

3 user_id BIGINT NOT NULL,

4 event_timestamp TIMESTAMP NOT NULL,

5 region VARCHAR(50),

6 revenue_usd DECIMAL(12, 2),

7 payment_method VARCHAR(30)

8)

9DISTSTYLE KEY

10DISTKEY (user_id)

11SORTKEY (event_timestamp);

12The SORTKEY on event_timestamp means range queries like "last 90 days" skip most of the data entirely. The DISTKEY on user_id collocates rows for the same user on the same node, which speeds up cohort joins.

Scenario 3: Data Lake Ingestion for Raw Clickstream Events

A media company streams 200 billion click events per day from their web and mobile apps. The data needs to be queryable for ad-hoc analysis, fed into ML training pipelines, and retained for three years.

Do this: "I'd ask the team" is a legitimate answer when the trade-off genuinely depends on context. It signals maturity, not indecision. Just make sure you've already demonstrated you know the technical difference.

event_type or platform, depending on the most common query patterns. If analysts mostly ask 'show me all mobile events from last Tuesday,' you want the partition scheme to match that. Here's roughly what the Iceberg DDL looks like:"1CREATE TABLE clickstream.events (

2 event_id STRING,

3 user_id BIGINT,

4 session_id STRING,

5 event_type STRING,

6 platform STRING,

7 event_timestamp TIMESTAMP,

8 properties MAP<STRING, STRING>

9)

10USING iceberg

11PARTITIONED BY (

12 days(event_timestamp),

13 event_type

14)

15TBLPROPERTIES (

16 'write.target-file-size-bytes' = '134217728', -- 128 MB files

17 'write.parquet.compression-codec' = 'zstd'

18);

19The 128 MB file size target matters. Too many small files and your query engine spends more time on metadata than reading data. Too few large files and you lose parallelism. This is the kind of operational detail that separates candidates who've run these systems from candidates who've read about them.

Common Mistakes

Most candidates don't fail because they picked the wrong tool. They fail because of how they picked it. These mistakes signal the same thing to every senior interviewer: you've memorized tools, not trade-offs.

Jumping to a Tool in the First 30 Seconds

You've heard this one before and you're still doing it. The interviewer finishes describing the problem and you say "I'd use Snowflake here" before you've asked a single clarifying question.

Interviewers penalize this immediately because it tells them you have a favorite hammer. The next 45 minutes become an uphill battle where you're defending a choice you made without enough information, and they're probing to see if you even know what you don't know.

Don't do this: "So for this pipeline, I'd probably go with BigQuery since it handles large-scale analytics well."

Do this: "Before I pick a store, I want to understand the access pattern. Are we talking point lookups, full table scans, or something in between?"

The fix is mechanical: don't name a tool until you've verbalized at least two constraints.

Treating S3 as the "Dumb Fallback"

This one is subtle. Candidates mention S3 almost apologetically, as if it's what you use when you can't afford a real database. "We could dump raw data to S3 as a fallback..."

That framing is about five years out of date. S3 with Iceberg or Delta Lake is the primary architecture at companies like Netflix, Uber, and Airbnb. It's not a fallback; it's the lakehouse foundation. When you treat it as a last resort, you signal that you haven't worked in a modern data platform.

Say "I'd land raw events in Iceberg on S3 because it gives us time travel for backfills and schema evolution without rewriting the table" and you sound like someone who's actually built this.

Recommending the Right Tool for the Wrong Team

Cassandra is a perfectly valid choice for high-throughput, low-latency writes at scale. It's also a terrible recommendation for a five-person startup that runs entirely on GCP and has no one who's ever operated it.

Senior interviewers at companies like Airbnb or Spotify have been burned by this exact pattern in real life. They will push back hard when they hear technically correct but operationally reckless suggestions. "Who's going to tune the compaction strategy?" is a real question you will get.

Don't do this: Recommending Cassandra, HBase, or a self-managed Kafka cluster without acknowledging the operational burden and whether the team can absorb it.

The fix is to add one sentence of operational context every time you recommend a non-managed service: "This works if the team has the bandwidth to operate it, otherwise I'd look at Bigtable since they're already on GCP."

Conflating Storage and Compute

"I'd store the data in Spark" is a real thing candidates say. So is "we can use Kafka as our database for this."

Spark is a compute engine. It processes data that lives somewhere else. Kafka is a distributed log optimized for event streaming and retention; it's not a queryable store you build a dashboard on. Mixing these up doesn't just sound imprecise, it tells the interviewer you haven't actually built a production pipeline where these layers are separate concerns with separate failure modes.

Be explicit about the boundary. "Kafka handles ingestion and replay. Flink reads from Kafka and writes aggregated results to Iceberg. Redshift queries Iceberg for the dashboard." Every tool has a lane.

Forgetting Schema Evolution and Backfills

This is the mistake that separates candidates who've read about data engineering from candidates who've done it. You pick a store, justify the access pattern, nail the latency requirements, and then the interviewer asks "what happens when the upstream team adds a new field to the event?" and you go quiet.

Schema changes are a fact of life in production pipelines. If your chosen store can't handle them gracefully, you're signing up for painful migrations. Failing to mention this signals you've never had a 3am page because a schema change broke a downstream pipeline.

Do this: For any store you recommend, add one sentence on schema evolution. "Iceberg supports schema evolution natively, so adding a nullable column doesn't require a table rewrite." Or: "We'd use Avro with Schema Registry so consumers can evolve independently."

Backfills are the same story. If you recommend an append-only store, mention how you'd reprocess historical data when logic changes. "Because Iceberg supports time travel, we can rerun the transformation against the state of the table at any point in the past" is a sentence that will make your interviewer visibly relax.

Using a Single Store for Everything (or Five Stores When Two Would Do)

Two failure modes, same root cause: not matching the store to the actual requirement.

The "one store for everything" candidate suggests Postgres for a petabyte-scale analytics use case because it's familiar. The over-engineer suggests Kafka plus Redis plus Cassandra plus BigQuery plus S3 for what is essentially a nightly batch job. Both get penalized, just for opposite reasons.

When you catch yourself adding a third or fourth store, pause and ask out loud: "Is this complexity justified by a specific requirement, or am I adding it because I can?" Interviewers respect that question more than the extra store.

Quick Reference

Use Case to Store: The Fast-Lookup Table

| Use Case | Recommended Store | One-Line Justification | Trade-off to Mention |

|---|---|---|---|

| Petabyte-scale analytics, BI dashboards | BigQuery / Snowflake / Redshift | Columnar storage, massively parallel query execution | Cost at high concurrency; Redshift needs cluster sizing |

| Raw event ingestion, clickstream, logs | S3/GCS + Iceberg or Delta Lake | Cheap, durable, decoupled storage from compute | Requires a query engine (Athena, Spark); no sub-second latency |

| Real-time feature serving for ML | Redis / DynamoDB | Sub-millisecond point lookups at high throughput | Limited query flexibility; not suited for range scans or aggregations |

| Event streaming + replay | Kafka | Durable log with configurable retention, consumer group offsets | Not a database; retention is time/size bounded, not indefinite |

| High-throughput write + wide-column reads | Cassandra / Bigtable | Optimized for write-heavy workloads with known row key access | No joins, no ad-hoc queries; schema must match access pattern |

| Operational metrics, time-series data | InfluxDB / TimescaleDB | Native time-series compression and downsampling | Narrow use case; don't generalize beyond time-series |

| Mutable analytical data (GDPR deletes, late updates) | Iceberg + S3 / Delta Lake | Row-level deletes and ACID transactions on object storage | Compaction overhead; small file problem if not managed |

The Four Questions: Say These Out Loud

| Framework Question | Exact Phrasing to Use | What It Signals to the Interviewer |

|---|---|---|

| Access pattern | "Before I pick a store, I want to understand the access pattern. Are we doing point lookups, range scans, or full-table aggregations?" | You think before you name-drop |

| Scale and latency | "What's the expected data volume, and what's the query SLA? Are we talking milliseconds for a user-facing API, or minutes for a nightly batch job?" | You know that scale changes the answer |

| Schema evolution | "How stable is the schema? If upstream producers change their event shape, I want a store that handles that gracefully without breaking downstream consumers." | You've dealt with production pipelines |

| Serving layer type | "Is this serving a human in real-time, a machine learning model, or an internal analytics pipeline? Each has very different latency and freshness requirements." | You understand the full data product lifecycle |

Phrases to Use

These are the sentences that separate candidates who have a framework from candidates who have a list.

- Opening the discussion: "The first thing I want to nail down is the access pattern, because that determines everything else about the storage choice."

- Justifying a choice: "I'd go with Iceberg on S3 here rather than loading directly into Snowflake, because we want to preserve the raw layer and keep storage costs decoupled from compute."

- Handling pushback: "Postgres would work at smaller scale, but once you're doing full-table scans across hundreds of millions of rows, you'll hit I/O limits that a columnar store handles natively."

- Layering stores: "I'd actually use two stores here: Kafka for ingestion and replay, and Iceberg for the historical layer. They solve different problems and I don't want one store doing both jobs badly."

- Flagging a trade-off proactively: "The downside of Cassandra here is that we're locked into the access pattern we design for. If query requirements change, schema migration is painful."

- Closing your recommendation: "Given the team is already on GCP, query patterns are analytical, and schema is evolving, BigQuery is the pragmatic choice. If we were on AWS with predictable concurrency, I'd revisit Redshift."

Red Flags to Avoid

- MySQL or Postgres for petabyte-scale analytics. Row-oriented storage cannot scan 500GB efficiently. This answer ends conversations.

- Elasticsearch as a primary data store. It's a search index, not a database. Suggesting it as your main analytical store signals you've confused the tool's purpose.

- HDFS without a table format. Recommending raw HDFS in 2024 without Iceberg or Delta Lake on top tells the interviewer you haven't kept up with the lakehouse shift.

- Kafka as a database. Kafka is a log. It has no query interface, limited retention, and no random access. Treating it as persistent storage is a category error.

- Recommending Cassandra for a five-person team on GCP. Operationally expensive, requires deep expertise, and Bigtable exists. Technical correctness without operational awareness is a yellow flag at senior levels.

Safe Defaults (With the Caveat That Makes Them Sound Earned)

These work in most DE interviews. Add the caveat so you sound like you're reasoning, not reciting.

- Analytical warehouse: BigQuery or Snowflake. Add: "assuming the team doesn't already have a strong Redshift investment and needs serverless scaling."

- Data lake raw layer: S3 + Iceberg. Add: "with a compaction job to manage small files and a schema registry if the events are Avro."

- Low-latency feature store: Redis or DynamoDB. Add: "DynamoDB if we need durability and multi-region; Redis if we can tolerate the operational overhead of managing eviction and persistence."

- Event ingestion: Kafka. Add: "with retention set based on how far back consumers need to replay, not just defaulting to seven days."

- Real-time + historical hybrid: Kafka for ingestion, Iceberg for the historical layer, and a warehouse for aggregated reporting. Add: "this is only worth the complexity if the real-time and batch use cases are genuinely separate; otherwise a single warehouse with micro-batch is simpler."