The Data Engineering Interview Framework

A candidate I coached last year could explain the internals of Spark's shuffle mechanism, had opinions on Iceberg versus Delta Lake, and had built real pipelines at scale. They bombed the system design round. The feedback: "didn't demonstrate senior-level thinking." What they lacked wasn't knowledge. It was a way to show it.

Data engineering interviews look like software system design interviews, but the success criteria are completely different. Your interviewer isn't waiting to hear about load balancers and API gateways. They want to know if you think about SLAs, data freshness, schema evolution, idempotency, and what happens when upstream data arrives six hours late. Candidates who treat these interviews like backend design interviews talk past the rubric entirely, and never know why they failed.

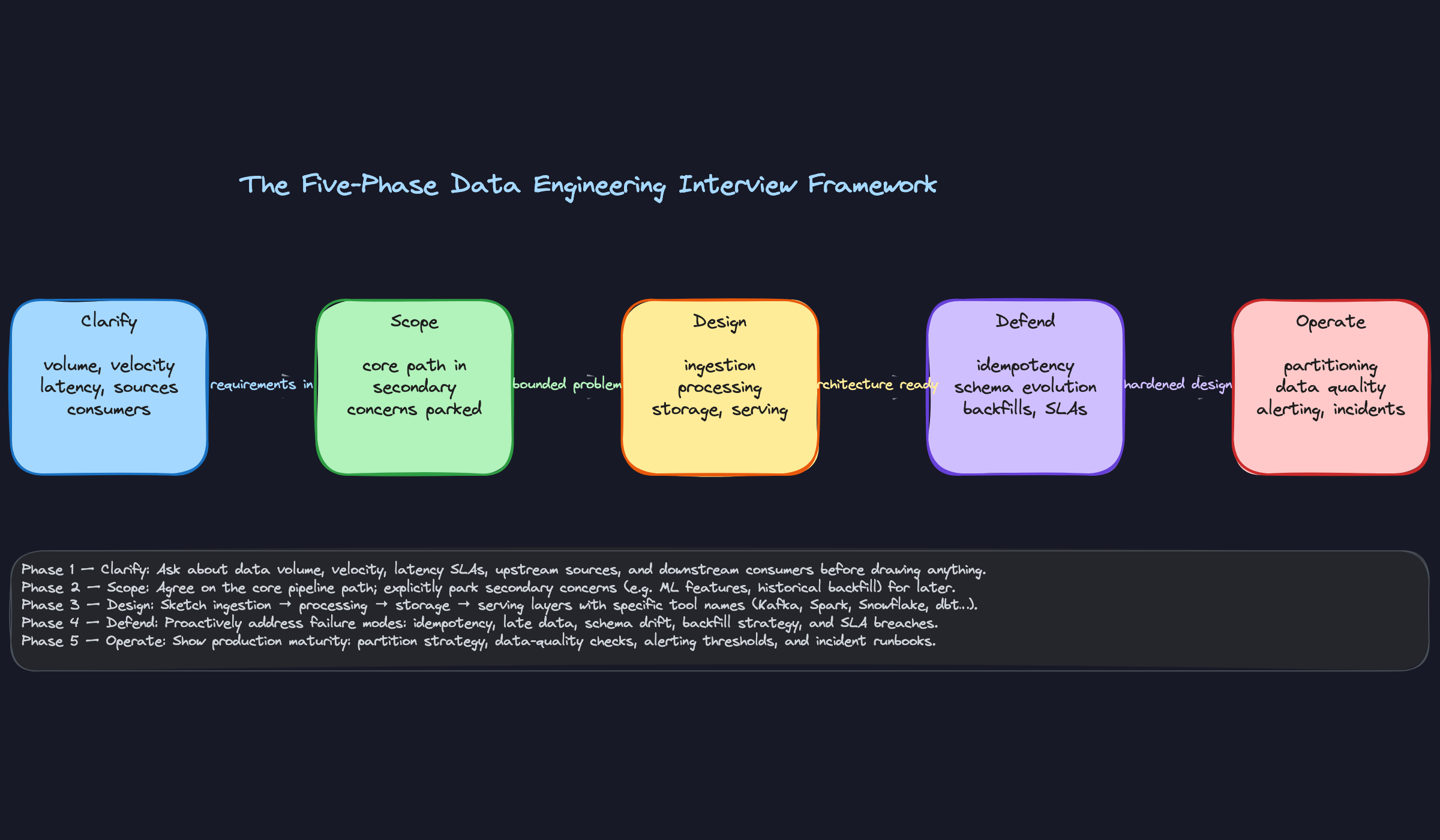

Without a repeatable structure, you'll either go too deep too fast (explaining Spark executor configs before you've clarified whether this even needs batch processing) or stay too shallow (saying "I'd use Kafka" and waiting for follow-up questions that never quite save you). This playbook gives you a five-phase framework you can apply to any prompt: Clarify, Scope, Design, Defend, Operate. Each phase has a specific goal, a time budget, and a clear signal to send the interviewer. Walk in knowing these five phases cold, and you control the conversation.

The Framework

Memorize this table. It's your interview GPS.

| Phase | Time Budget | Goal |
|---|---|---|
| 1. Clarify | 5 min | Extract requirements before touching the whiteboard |
| 2. Scope | 3 min | Define what you're building and what you're parking |
| 3. Design | 15 min | Walk the pipeline end-to-end with named tools |
| 4. Defend | 12 min | Address failure modes, idempotency, and SLAs proactively |
| 5. Operate | 10 min | Show you can run this thing in production |

The most common failure mode is spending 25 minutes in Phase 3 and never reaching Phases 4 or 5. Interviewers hire for senior roles based on what you say in those last two phases.
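
To make the budget easier to track against a clock, it can help to turn the table into cumulative checkpoints. A minimal sketch, using the minutes from the table above; the name `phase_checkpoints` is made up for illustration:

```python
from itertools import accumulate

# Time budgets in minutes, copied from the framework table above.
PHASE_BUDGETS = [
    ("Clarify", 5),
    ("Scope", 3),
    ("Design", 15),
    ("Defend", 12),
    ("Operate", 10),
]

def phase_checkpoints(budgets):
    """Return (phase, minute_by_which_it_should_end) pairs."""
    names = [name for name, _ in budgets]
    ends = accumulate(minutes for _, minutes in budgets)
    return list(zip(names, ends))

for phase, end_minute in phase_checkpoints(PHASE_BUDGETS):
    print(f"{phase:<8} done by minute {end_minute}")
```

Under these budgets Design should wrap up by minute 23, which leaves 22 minutes for Defend and Operate.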

Phase 1: Clarify

Your only job here is to ask questions. Don't sketch anything yet.

Ask these five questions, in roughly this order:

- Volume and velocity: "What's the expected event volume? Are we talking thousands of events per second or millions? And is this a steady stream or bursty?"

- Latency requirements: "What's the acceptable lag for downstream consumers? Are we targeting seconds, minutes, or is a daily batch acceptable?"

- Sources and sinks: "Where is the data coming from, and who are the consumers? Are we feeding a dashboard, an ML model, a reporting table, or all three?"

- Schema and format: "Do we own the schema, or is it defined by an upstream team? Has it changed before, and do we need to support schema evolution?"

- Reliability expectations: "What's the SLA on this pipeline? Is some data loss or duplication acceptable, or do we need exactly-once guarantees?"

You won't always get clean answers. That's fine. The act of asking signals that you don't design in a vacuum.

What to say to open this phase:

"Before I start drawing anything, I want to make sure I understand the requirements. Can I ask a few questions about data volume, latency expectations, and the downstream consumers?"

The interviewer is evaluating whether you treat requirements as inputs to your design or as an afterthought. Jumping straight to tools here is a red flag they'll note immediately.

Do this: Write down the answers as you get them. Reference them explicitly later ("Since you said latency needs to be under 30 seconds, I'll use streaming here rather than batch").

Phase 2: Scope

Three minutes. No longer. State what you're building and what you're not.

Name the core pipeline path explicitly: "I'm going to design the ingestion-to-serving path for the real-time use case. I'll treat the batch reporting path as a secondary concern we can revisit." Then list two or three things you're parking: "I'll set aside auth, cost optimization, and multi-region replication for now unless you want to prioritize one of those."

What to say:

"Based on what you've told me, I'm going to focus on the end-to-end pipeline from event ingestion to the serving layer. I'll park monitoring and access control for now and come back to them if we have time. Does that sound right to you?"

That last question matters. You're confirming alignment, not just narrating.

The interviewer is watching for prioritization instincts. Can you identify the core problem and resist the urge to boil the ocean? This is what separates senior candidates from mid-level ones.

Phase 3: Design

Walk the pipeline left to right: ingestion, processing, storage, serving. Every tool you name should be justified against a requirement you surfaced in Phase 1.

Don't just say "I'd use Kafka." Say "I'd use Kafka here because you mentioned bursty traffic and multiple downstream consumers. Kafka's consumer group model lets the real-time dashboard and the batch job read from the same topic independently, without coupling them."
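
If the interviewer pushes on what "read independently" means, the idea can be shown with a toy model. This is a rough in-memory sketch, not a Kafka client: `ToyTopic` and the group names are hypothetical, and real Kafka tracks committed offsets per partition per consumer group.

```python
from collections import defaultdict

class ToyTopic:
    """In-memory stand-in for a single-partition topic (not a Kafka client)."""

    def __init__(self):
        self._log = []                    # append-only list of events
        self._offsets = defaultdict(int)  # next offset to read, per consumer group

    def produce(self, event):
        self._log.append(event)

    def poll(self, group, max_records=10):
        """Return the next records for `group` and advance only that group's offset."""
        start = self._offsets[group]
        records = self._log[start:start + max_records]
        self._offsets[group] = start + len(records)
        return records

topic = ToyTopic()
for i in range(5):
    topic.produce({"event_id": i})

# The dashboard consumer reads first; the batch consumer reads later
# and still sees every event, because each group keeps its own offset.
dashboard_records = topic.poll("realtime-dashboard")
batch_records = topic.poll("daily-batch")
```

The point of the sketch is the decoupling: a slow or paused batch consumer does not hold back the dashboard consumer, and neither one removes events from the log.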

Three concrete actions for this phase:

- Sketch the pipeline on the whiteboard as you talk, left to right. Label each component with the tool name and a one-line role.

- Make one explicit trade-off per major decision. Kafka vs. Kinesis, Spark vs. Flink, Iceberg vs. Delta Lake. Name the alternative you rejected and why.

- Annotate with numbers. Write the SLA target next to the component it constrains. "30s latency budget" next to your stream processor. "T+1 by 6am" next to your batch layer.

What to say to transition into this phase:

"Okay, I think I have a good understanding of the requirements. Let me sketch out a high-level design and walk you through each layer."

The interviewer is evaluating technical depth and whether your tool choices are principled or reflexive. If you can't explain why you chose Flink over Spark Structured Streaming for a given requirement, expect a follow-up that exposes it.

Phase 4: Defend

Don't wait to be challenged. Bring up the hard problems yourself.

Work through this checklist proactively:

- Idempotency: "Each processing step writes under a deterministic key, so the write is idempotent and re-running a failed job doesn't produce duplicates."

- Schema evolution: "I'd use a schema registry with Avro. Backward-compatible changes are fine; breaking changes require a new topic version."

- Late-arriving data: "For the streaming layer, I'd use a watermark of 10 minutes. Events arriving after that go to a late-data reconciliation job that patches the affected partitions."

- Backfill strategy: "If we need to reprocess 90 days of history, the pipeline needs to be idempotent end-to-end. I'd parameterize the Spark job by date partition and run it in parallel with a concurrency limit to avoid overwhelming the source."

- SLA guarantees: "For the T+1 SLA, I'd set an Airflow SLA miss alert at 5am so we have an hour to investigate before the business notices."
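
The idempotency item in the checklist above is the one interviewers most often ask you to make concrete. A minimal sketch, assuming each event carries a unique `event_id` (a hypothetical field) and using a plain dict as a stand-in for a keyed table:

```python
def write_idempotent(sink, rows, key_fields=("event_id",)):
    """Upsert rows into `sink` (a dict standing in for a keyed table).

    Rows with the same key overwrite each other, so replaying the same
    batch leaves the sink unchanged.
    """
    for row in rows:
        key = tuple(row[field] for field in key_fields)
        sink[key] = row
    return sink

batch = [
    {"event_id": "a1", "user": "u1", "clicks": 3},
    {"event_id": "a2", "user": "u2", "clicks": 1},
]

table = {}
write_idempotent(table, batch)
write_idempotent(table, batch)  # simulated retry after a failed job
```

After the retry the table still holds two rows. An append-only write would hold four, which is the duplicate problem the checklist item is guarding against.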

What to say to open this phase:

"Now I want to walk through some of the failure modes and operational concerns, because this is where the design either holds up or falls apart."

The interviewer is checking whether you've actually run pipelines in production. Anyone can draw boxes. The Defend phase is where you prove you've been paged at 2am and learned something from it.
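
The backfill item from the checklist can be sketched in a few lines. This assumes daily partitions and uses a hypothetical `reprocess_partition` placeholder where a real job (for example a Spark job parameterized by date) would run; the thread pool size plays the role of the concurrency limit.

```python
from concurrent.futures import ThreadPoolExecutor
from datetime import date, timedelta

def date_partitions(end, days):
    """Return the `days` dates ending at `end` (inclusive), oldest first."""
    return [end - timedelta(days=offset) for offset in range(days - 1, -1, -1)]

def reprocess_partition(partition_date):
    """Hypothetical placeholder: a real job would overwrite this one partition."""
    return f"event_date={partition_date.isoformat()}"

def backfill(end, days, max_concurrency=4):
    """Reprocess partitions in parallel, never more than `max_concurrency` at once."""
    partitions = date_partitions(end, days)
    with ThreadPoolExecutor(max_workers=max_concurrency) as pool:
        return list(pool.map(reprocess_partition, partitions))

done = backfill(date(2024, 3, 31), days=90)
```

Because each unit of work is one date partition and the write is an overwrite, a failed partition can be retried on its own without touching the other 89.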

Phase 5: Operate

Close the loop on production readiness. This phase is short but it's what makes your design feel real.

Cover three things:

- Partitioning strategy: "I'd partition the output tables by event date and hour. That keeps query costs low and makes backfills surgical, since you only reprocess the affected partitions."

- Data quality checks: "I'd add a Great Expectations suite at the output of each major processing step, checking row counts, null rates, and schema validity. Failures block downstream DAG tasks."

- Alerting and incident response: "I'd alert on pipeline lag exceeding 5 minutes and on row-count drops greater than 20% compared to the same window yesterday. If either fires at 2am, the on-call engineer has a runbook that starts with checking Kafka consumer lag."
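
The two alert conditions in the last bullet are simple enough to write down. A minimal sketch with the thresholds from the text (5 minutes of lag, a 20% row-count drop); the function and alert names are illustrative, not part of any monitoring tool:

```python
def evaluate_alerts(lag_seconds, rows_now, rows_same_window_yesterday,
                    max_lag_seconds=300, max_drop_fraction=0.20):
    """Return the names of the alerts that should fire for this window."""
    alerts = []
    if lag_seconds > max_lag_seconds:
        alerts.append("pipeline_lag")
    # Skip the comparison when there is no baseline to compare against.
    if rows_same_window_yesterday > 0:
        drop = (rows_same_window_yesterday - rows_now) / rows_same_window_yesterday
        if drop > max_drop_fraction:
            alerts.append("row_count_drop")
    return alerts

print(evaluate_alerts(lag_seconds=120, rows_now=9_500, rows_same_window_yesterday=10_000))
print(evaluate_alerts(lag_seconds=400, rows_now=7_000, rows_same_window_yesterday=10_000))
```

The first call is a healthy window (2 minutes of lag, a 5% drop) and returns no alerts; the second breaches both thresholds.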

What to say:

"The last thing I want to cover is how we'd actually operate this. A pipeline that works in staging but breaks silently in production isn't a finished design."

The interviewer is evaluating operational maturity. A lot of candidates skip this phase entirely. The ones who don't tend to get the offer.

Do this: End Phase 5 with a one-sentence summary of your design and name one metric you'd instrument first. "I'd start by instrumenting consumer lag on the Kafka topic, because that's the earliest signal that something upstream has gone wrong." It's a clean, confident close.

Putting It Into Practice

The prompt: "Design a pipeline that ingests clickstream events from our mobile app and makes them available for both real-time dashboards and daily batch reports."

This is a classic dual-path problem. You need to serve two very different consumers from the same raw event stream, and the failure modes for each are completely different. Here's how the framework plays out in a real 45-minute interview.

Your Time Budget

Before the dialogue, internalize this split:

| Phase | Time | What you're doing |
|---|---|---|
| Clarify | 5 min | Ask targeted questions, listen hard |
| Scope | 3 min | State your boundaries out loud |
| Design | 18 min | Walk the pipeline end-to-end |
| Defend | 12 min | Failure modes, idempotency, schema evolution |
| Operate | 7 min | Production readiness, data quality, alerting |

The most common way candidates fail this interview is spending 30 minutes in Design and never getting to Defend or Operate. The interviewer notices. Set a mental alarm at the 23-minute mark: with this split, Design should be wrapped up by minute 26.

The Walkthrough

Do this: Notice the candidate asked about schema evolution explicitly. Most candidates skip this entirely. Asking it in Phase 1 means you can justify Iceberg or Avro later without it feeling like a tangent.

Do this: Saying "I'm going to park X" out loud is a senior-level signal. It shows you know those things exist and are consciously choosing not to design them right now, not that you forgot them.

The first path is the real-time path. A Flink job reads from Kafka, does lightweight aggregation (session counts, active users, funnel steps), and writes to a serving layer. For the serving layer at 60-second refresh, I'd use Apache Druid or ClickHouse. Both can serve OLAP queries fast enough for a sub-minute refresh at this scale.

The second path is the batch path. A separate Spark job runs on a schedule, reads from Kafka's retained topic (or an S3 landing zone, which I'll explain in a second), and writes Parquet files partitioned by date and event type to an Iceberg table in S3. The 7am SLA is comfortable here. A 5am Airflow DAG gives us two hours of buffer.

I'm writing raw events to S3 as well, directly from a Kafka consumer. This is the landing zone. It decouples the batch job from Kafka retention limits and gives us a reprocessing surface if something goes wrong.

For the batch path, late data is manageable. Iceberg supports partition overwriting, so if events for Tuesday arrive on Wednesday, I can reprocess Tuesday's partition without touching Wednesday's data. The Airflow DAG would need a late-data window, maybe a 48-hour lookback, before marking a partition "final."
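
The lookback rule is easy to state precisely. A rough sketch, assuming daily partitions, a run on `run_date` that covers data up to the previous day, and a 48-hour lookback that maps to two daily partitions; both function names are hypothetical:

```python
from datetime import date, timedelta

def partitions_to_reprocess(run_date, lookback_days=2):
    """Daily partitions still open to late data as of `run_date`, oldest first."""
    return [run_date - timedelta(days=d) for d in range(lookback_days, 0, -1)]

def is_final(partition_date, run_date, lookback_days=2):
    """A partition is final once it falls outside the lookback window."""
    return partition_date < run_date - timedelta(days=lookback_days)

run = date(2024, 3, 7)
open_partitions = partitions_to_reprocess(run)
```

For a run on 2024-03-07 the open partitions are 2024-03-05 and 2024-03-06, and 2024-03-04 is the newest partition that can be marked final.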

For the real-time path, it's harder. Flink has native watermarking and event-time windows. I'd configure a 5-minute allowed lateness on the Flink job. Events that arrive more than 5 minutes late get routed to a side output, written to S3, and reconciled in the next batch run. The dashboard shows a "last updated" timestamp so users know they're looking at a 60-second-old snapshot, not a real-time guarantee.
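
The routing logic behind that answer can be illustrated without Flink. The sketch below is a simplification: it collapses Flink's separate notions of watermark delay and window allowed lateness into a single 5-minute threshold, and `route_events` and the event fields are made up for the example.

```python
from datetime import datetime, timedelta

ALLOWED_LATENESS = timedelta(minutes=5)

def route_events(events, allowed_lateness=ALLOWED_LATENESS):
    """Split events (in arrival order) into (on_time, late).

    The watermark is the maximum event time seen so far minus the allowed
    lateness. Anything older than the watermark goes to the late list,
    which stands in for the side output.
    """
    on_time, late = [], []
    max_event_time = None
    for event in events:
        ts = event["event_time"]
        if max_event_time is None or ts > max_event_time:
            max_event_time = ts
        watermark = max_event_time - allowed_lateness
        if ts < watermark:
            late.append(event)     # side output: land in S3, reconcile in batch
        else:
            on_time.append(event)  # still counted in the streaming aggregate
    return on_time, late

base = datetime(2024, 1, 1, 12, 0)
arrivals = [
    {"id": 1, "event_time": base},
    {"id": 2, "event_time": base + timedelta(minutes=10)},
    {"id": 3, "event_time": base + timedelta(minutes=7)},  # 3 minutes behind
    {"id": 4, "event_time": base + timedelta(minutes=2)},  # 8 minutes behind
]
on_time, late = route_events(arrivals)
```

Event 3 is out of order but within the threshold, so it stays on the streaming path. Event 4 is behind the watermark and is routed to the late list for batch reconciliation.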

The Flink job is the bigger concern. At 500k events per second, I'd want to make sure the aggregation logic is stateless or uses RocksDB-backed state carefully. I'd also reconsider ClickHouse as the serving layer. At that ingest rate, ClickHouse can handle it, but I'd want to benchmark against Druid, which has a more mature tiered storage story for cold data.

The S3 landing zone actually becomes more important at 10x. I'd switch from writing individual events to micro-batching into Parquet files every 5 minutes before landing in S3. Raw event-per-message S3 writes at 500k/sec would be expensive and slow.
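
A small sketch of the micro-batching idea, plus the arithmetic behind it. It groups events by 5-minute window so each window becomes a file write instead of one write per event; the `ts` field (epoch seconds) and the function names are assumptions for illustration, and a real writer would also split each window across parallel writers and files.

```python
from collections import defaultdict

WINDOW_SECONDS = 5 * 60

def window_start(epoch_seconds, window_seconds=WINDOW_SECONDS):
    """Floor a timestamp to the start of its window."""
    return epoch_seconds - (epoch_seconds % window_seconds)

def micro_batch(events, window_seconds=WINDOW_SECONDS):
    """Group events by window start so each group can be written as one batch."""
    batches = defaultdict(list)
    for event in events:
        batches[window_start(event["ts"], window_seconds)].append(event)
    return dict(batches)

events = [{"ts": 0}, {"ts": 299}, {"ts": 300}, {"ts": 610}]
batches = micro_batch(events)

# Rough scale check at the 500k events/sec figure from the text.
events_per_window = 500_000 * WINDOW_SECONDS
```

At 500k events per second a 5-minute window holds 150 million events, which is why per-event object writes are not a realistic option at that rate.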

Do this: When the interviewer pivots the constraints, don't restart your design from scratch. Reference your earlier clarifications, identify the specific bottleneck, and adjust incrementally. That's what a senior engineer does in a real incident too.

I'd add a PagerDuty alert on the freshness check. Everything else goes to a Slack channel and a data quality dashboard. The 2am on-call rotation only wakes up for freshness violations and completeness drops over 5%.

Do this: Naming all five data quality dimensions (completeness, freshness, schema validity, referential integrity, reconciliation) in one clean answer is a strong close. It shows you've thought about this in production, not just in theory.

Using the Whiteboard

During Phase 3, sketch the pipeline left to right: mobile clients, Kafka, then two branches (Flink to Druid, Spark to Iceberg). Keep it sparse. Labels and arrows only.

In Phase 4, annotate the diagram. Write "60s SLA" above the Flink path. Write "7am SLA" above the Spark path. Add a small "late data side output" arrow from Flink to S3. The interviewer can see you're connecting your design to the requirements you clarified.

In Phase 5, add a horizontal "data quality layer" below the diagram. List your five checks there. It visually communicates that data quality is a first-class concern, not an afterthought you mentioned because they asked.

Common Mistakes

Most candidates who fail data engineering system design interviews don't fail because they lack knowledge. They fail because of predictable, repeatable habits that signal inexperience to the interviewer. Read these carefully. If any of them sound familiar, that's the point.

Naming Tools Before Understanding the Problem

You hear "design a data pipeline" and immediately say "I'd use Kafka for ingestion, Spark for processing, and Snowflake for storage." It feels confident. It's actually a red flag.

Interviewers hear this and think: this person has a pre-built answer they're going to force onto my problem. They're not actually listening. When you name Kafka before you know the data volume, the latency requirements, or even the upstream source, you've signaled that you don't design systems, you template them.

Don't do this: "I'd start with Kafka for the event stream, then use Spark Structured Streaming to process..." (said 45 seconds into a 45-minute interview)

Do this: Spend the first 5 minutes asking about volume, velocity, latency, and consumers. Then name tools, tied explicitly to those answers.

The fix is mechanical: ban yourself from naming a specific tool until you've asked at least three clarifying questions.

Designing a Backend System Instead of a Data System

This one is subtle and it sinks a lot of strong engineers who are transitioning from software engineering roles. You spend your time thinking about API response times, service availability, and horizontal scaling of request handlers. You draw microservices. You talk about p99 latency.

The interviewer is waiting to hear about throughput, data freshness SLAs, idempotency, exactly-once semantics, and what happens when you need to backfill six months of data after a bug fix. These are the concepts that separate data engineers from backend engineers, and if you skip them, the interviewer assumes you don't know them.

Ask yourself mid-design: have I mentioned idempotency? Have I mentioned what "done" means for this pipeline? If not, you're probably still in backend mode.

Living in the Happy Path

Your design works perfectly. Data arrives on time, in the expected schema, with no duplicates, and every upstream system is healthy. Congratulations, you've designed a pipeline for a world that doesn't exist.

Interviewers at Airbnb, Uber, and Meta have been paged at 2am because of late-arriving events, schema drift from an upstream team, and silent data loss that nobody noticed for three days. They want to know you've thought about this. If you finish your design without addressing a single failure mode, you've told them you haven't.

Don't do this: Finishing your entire design and then saying "and of course we'd add monitoring" as a throwaway line at the end.

Strong candidates spend at least 30% of their time on failure modes: what happens when an upstream Kafka topic goes silent, when a producer starts sending a new field without warning, when your Spark job processes a batch twice. Name the failure, name the consequence, name the mitigation.

Forgetting That Data Quality Is Part of the Design

You built a beautiful pipeline. Kafka ingestion, Flink processing, Iceberg tables, Trino for serving. The interviewer asks "how would you know if this pipeline was silently dropping 5% of events?" and you pause.

This is the most common gap at the senior level, and it's disqualifying for staff-level roles. A pipeline without data quality checks isn't production-ready, it's a prototype. Interviewers expect you to close the loop: how do you verify completeness, freshness, schema validity, referential integrity, and row-count reconciliation? If you don't bring it up yourself, you're leaving a gaping hole in your design.

Do this: Before you finish Phase 3 of your design, explicitly add a data quality layer. Name the checks. Name where they run (post-ingestion? post-transform? both?). Name what happens when a check fails.

One sentence is enough to open the door: "I'd also want to add quality checks here, specifically row-count reconciliation against the source and a freshness check that alerts if the partition hasn't landed by T+30 minutes."

Waiting to Be Asked

You finish describing the ingestion layer and then stop. You look at the interviewer. They ask "what about processing?" You describe processing and stop again. The whole interview becomes a game of 20 questions where the interviewer has to drag each component out of you.

This pattern makes you look like a junior engineer who needs supervision, not a senior engineer who can own a system end-to-end. The interviewer shouldn't be steering your design. You should be.

Don't do this: Pausing after each component and waiting for a prompt to continue.

The framework exists precisely to prevent this. Walk through the pipeline proactively, phase by phase. If the interviewer wants to go deeper somewhere, they'll interrupt you, and that's fine. But the default should be you driving, not them pulling.

Treating Scope as Optional

Some candidates skip scoping entirely and just start designing. Others mention scope briefly and then immediately violate it by trying to design everything: the core pipeline, the monitoring system, the cost optimization layer, the auth model, and the disaster recovery strategy, all in 45 minutes.

Neither works. Skipping scope means you might spend 20 minutes designing something the interviewer didn't care about. Over-scoping means you run out of time before you get to the parts that actually matter, like failure modes and production operations.

State your scope out loud, get a nod from the interviewer, and then stick to it.

Quick Reference

Scan this once before you walk in. That's all you need.

The Five-Phase Framework

| Phase | Time (45-min interview) | Goal | Your opening move |
|---|---|---|---|
| Clarify | 5 min | Extract volume, velocity, latency, sources, consumers | "Before I draw anything, can I ask a few questions about the requirements?" |
| Scope | 3 min | Define what's in, park what's out | "I'll focus on the core ingestion-to-serving path. We can revisit monitoring and auth if we have time." |
| Design | 15 min | Walk the pipeline end-to-end with named tools | "Let me sketch this left to right, starting with ingestion." |
| Defend | 12 min | Address failure modes before you're asked | "I want to talk through what happens when things go wrong." |
| Operate | 10 min | Production readiness, data quality, alerting | "Let me close with how I'd actually run this in production." |

Don't let Design eat your whole clock. If you hit 23 minutes and haven't started Defend, cut the design short and move on.

Tool Decision Cheat Sheet

| Requirement Pattern | Reach For |
|---|---|
| High-throughput event streaming | Kafka, Kinesis |
| Sub-second OLAP queries | Apache Pinot, Druid |
| Schema evolution + time travel | Apache Iceberg, Delta Lake |
| Complex stateful stream processing | Apache Flink |
| Large-scale batch transforms | Spark |
| Orchestration + scheduling | Airflow, Prefect |
| Columnar analytical storage | Parquet + S3, BigQuery, Snowflake |
| Lightweight SQL transforms on top of a warehouse | dbt |

Failure Modes to Address in Phase 4

Cover at least three of these unprompted. Interviewers at senior levels expect you to bring these up yourself.

- Late-arriving data. How does your pipeline handle an event that shows up 6 hours after its event timestamp?

- Duplicate events. What makes your processing idempotent? Dedup key? Upsert semantics?

- Schema changes upstream. Does a new field or a renamed column break your consumers?

- Pipeline backpressure. What happens when your processing falls behind the ingestion rate?

- Upstream outage. Can you replay from Kafka? Do you have a dead-letter queue?
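
For the schema-change item in the list above, it helps to be able to say what "breaking" means. The sketch below is a deliberately simplified compatibility check loosely modeled on Avro-style rules: it ignores type promotions and aliases, and the schema representation and function name are assumptions made for the example.

```python
def backward_compatible(old_fields, new_fields):
    """Can a reader on `new_fields` read data written with `old_fields`?

    Each schema is a dict: field name -> {"type": str, "has_default": bool}.
    Simplified rules used here:
      - a field added in the new schema must have a default
      - a field present in both schemas must keep the same type
      - removing a field is allowed
    Returns a list of problems; an empty list means compatible.
    """
    problems = []
    for name, spec in new_fields.items():
        if name not in old_fields:
            if not spec["has_default"]:
                problems.append(f"added field '{name}' has no default")
        elif old_fields[name]["type"] != spec["type"]:
            problems.append(f"field '{name}' changed type")
    return problems

v1 = {
    "user_id": {"type": "string", "has_default": False},
    "ts": {"type": "long", "has_default": False},
}
# Adding an optional field: old records can still be read.
v2_added = dict(v1, country={"type": "string", "has_default": True})
# Renaming a field looks like "remove one, add another without a default".
v2_renamed = {
    "uid": {"type": "string", "has_default": False},
    "ts": {"type": "long", "has_default": False},
}

added_problems = backward_compatible(v1, v2_added)
renamed_problems = backward_compatible(v1, v2_renamed)
```

Under these rules the added optional field passes, while the rename is flagged, which matches the intuition that a new field is usually safe and a renamed column usually is not.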

Data Quality Checkpoints

These five come up constantly. Name them by category, not just as vague "checks."

- Completeness. Are all expected rows present? Row-count reconciliation against the source.

- Freshness. Is the latest partition timestamp within your SLA window?

- Schema validity. Do all fields match the expected types and nullability constraints?

- Referential integrity. Do foreign keys in your fact table resolve in your dimension tables?

- Value distribution. Are there sudden spikes or drops in key metrics that signal silent data corruption?
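
The five categories above can be expressed as one small function. This is a sketch on in-memory rows rather than a real data quality framework; the field names (`user_id`, `amount`), the 50% tolerance, and `run_quality_checks` itself are all assumptions chosen for illustration.

```python
from datetime import datetime, timedelta

def run_quality_checks(rows, source_row_count, latest_partition_ts, now,
                       freshness_sla, required_fields, known_user_ids,
                       baseline_total, tolerance=0.5):
    """Return {check_name: passed} for the five categories listed above."""
    results = {}
    # Completeness: row count matches what the source reported.
    results["completeness"] = len(rows) == source_row_count
    # Freshness: the latest partition landed within the SLA window.
    results["freshness"] = now - latest_partition_ts <= freshness_sla
    # Schema validity: every required field is present, non-null, and well-typed.
    results["schema_validity"] = all(
        isinstance(row.get(field), expected_type)
        for row in rows
        for field, expected_type in required_fields.items()
    )
    # Referential integrity: every user_id resolves in the dimension set.
    results["referential_integrity"] = all(
        row.get("user_id") in known_user_ids for row in rows
    )
    # Value distribution: the total stays within a tolerance of the baseline.
    total = sum(row["amount"] for row in rows
                if isinstance(row.get("amount"), (int, float)))
    results["value_distribution"] = (
        abs(total - baseline_total) <= tolerance * baseline_total
    )
    return results

rows = [{"user_id": "u1", "amount": 10}, {"user_id": "u2", "amount": 12}]
report = run_quality_checks(
    rows,
    source_row_count=2,
    latest_partition_ts=datetime(2024, 1, 1, 6, 0),
    now=datetime(2024, 1, 1, 6, 20),
    freshness_sla=timedelta(minutes=30),
    required_fields={"user_id": str, "amount": int},
    known_user_ids={"u1", "u2"},
    baseline_total=20,
)
```

Returning one named result per category makes it straightforward to decide which failures block downstream tasks and which only raise a warning.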

Phrases to Use

These are exact sentences you can drop into the interview. Practice saying them out loud tonight.

- "Before I start designing, I want to make sure I understand the requirements. Can I ask a few questions about data volume and latency expectations?"

- "I'll treat that as a parking lot item. We can come back to it after we've covered the core pipeline."

- "The reason I'm choosing Kafka here over Kinesis is the retention window and the replay capability, which matters given the backfill requirement we discussed."

- "Let me proactively address some failure modes, because the happy path is the easy part."

- "For data quality, I'd instrument completeness and freshness checks first, since those are the ones that page you at 2am."

- "To summarize: we're ingesting clickstream events via Kafka, processing with Flink for the real-time path and Spark for the batch path, storing in Iceberg, and serving via Pinot for dashboards. The first thing I'd instrument in production is partition lag on the Kafka consumer group."

That last one is your closing move. A two-sentence summary plus one concrete instrumentation priority. It signals you've thought past the whiteboard.

Red Flags to Avoid

- Naming a tool (Kafka, Spark, Flink) before you've asked a single clarifying question.

- Designing only the happy path and waiting for the interviewer to bring up failure modes.

- Finishing the design without mentioning how you'd detect a broken or silently degraded pipeline.

- Treating throughput and latency as the same thing, or ignoring data freshness SLAs entirely.

- Letting the interviewer steer every transition. You have a framework; use it to drive the conversation.