Join ML Engineer Interview MasterClass (June Cohort) led by FAANG Data Scientists | Just 6 seats remaining...

ML Engineer MasterClass (June) | 6 seats left

Order Book Mechanics and Matching Engines

Order Book Mechanics and Matching Engines

The NASDAQ matching engine processes over 10 million order updates per second during peak trading hours. Each one needs to be sequenced, validated, matched if eligible, and published to downstream consumers, all within a latency budget measured in hundreds of nanoseconds. The data structure doing that work is, at its core, just a sorted list of prices with queues of orders attached to each one.

That gap between conceptual simplicity and implementation brutality is exactly why interviewers at Jane Street, Citadel, and HRT ask about order books. They're not testing whether you can describe a priority queue. They're testing whether you understand what happens to your data structure choices when "slow" means one microsecond instead of one millisecond.

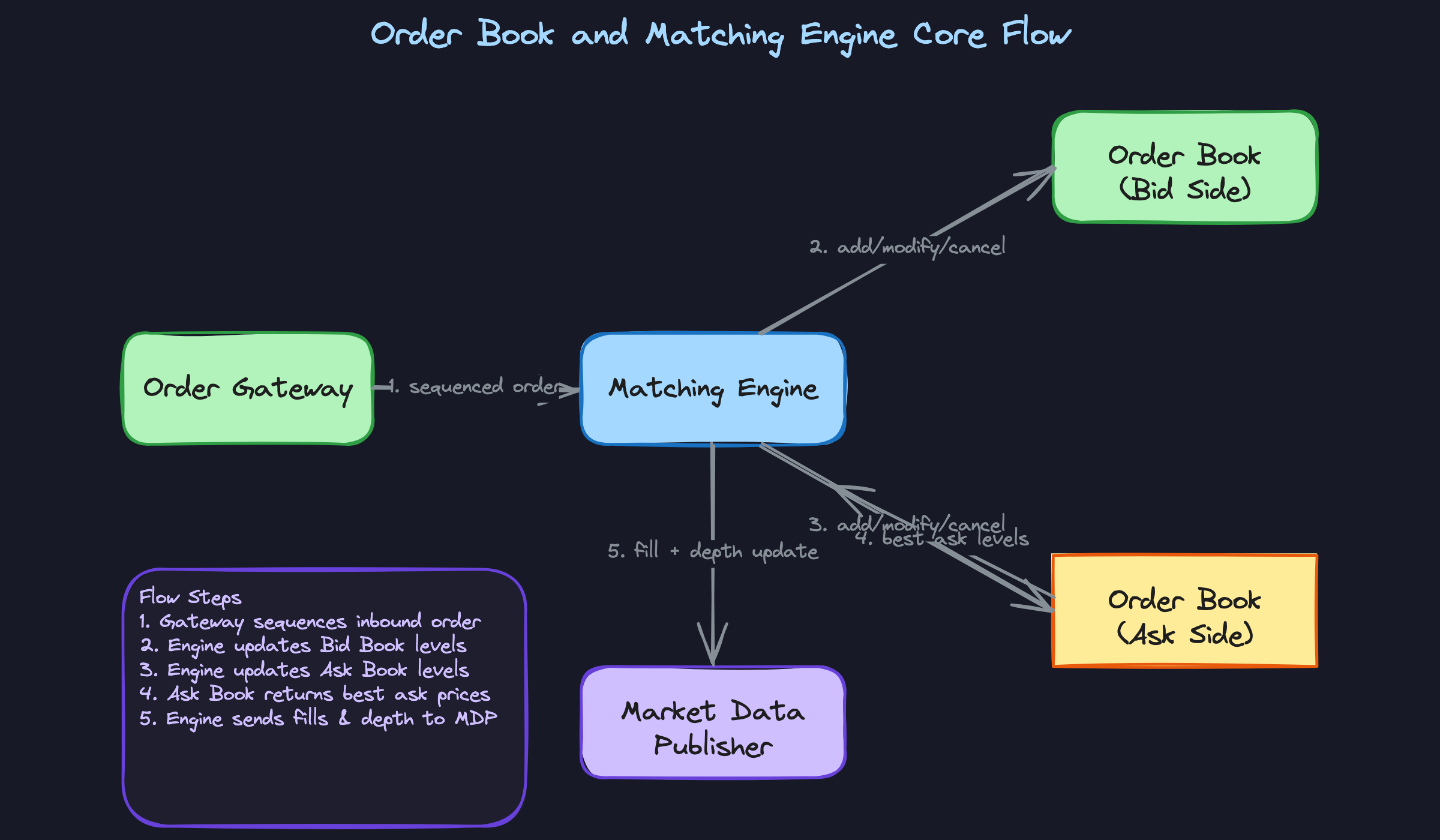

The order book plays two distinct roles simultaneously. It's a real-time state machine, tracking every live order in the market and mutating that state with every add, cancel, and modify. It's also the input to the matching engine, which watches for moments when a buyer's price crosses a seller's price and triggers a trade. Conflating those two things, treating the book and the engine as the same component, is one of the fastest ways to signal inexperience to an interviewer who has actually built one.

How It Works

Start with two sorted lists. One for buyers, one for sellers. Every resting order in the market sits somewhere in one of those lists, waiting. The highest price a buyer is willing to pay is the best bid. The lowest price a seller will accept is the best ask. Together, those two numbers form the NBBO, the National Best Bid and Offer, and the spread between them is where all the action happens.

Each price level isn't just a number. It's a queue. If five traders all want to buy at $100.50, they line up in the order they arrived. First in, first out. When a sell order comes in at $100.50 or below, it starts filling from the front of that queue.

Think of it like a deli counter: your ticket number is your place in line, and the price you're willing to pay determines which counter you're standing at.

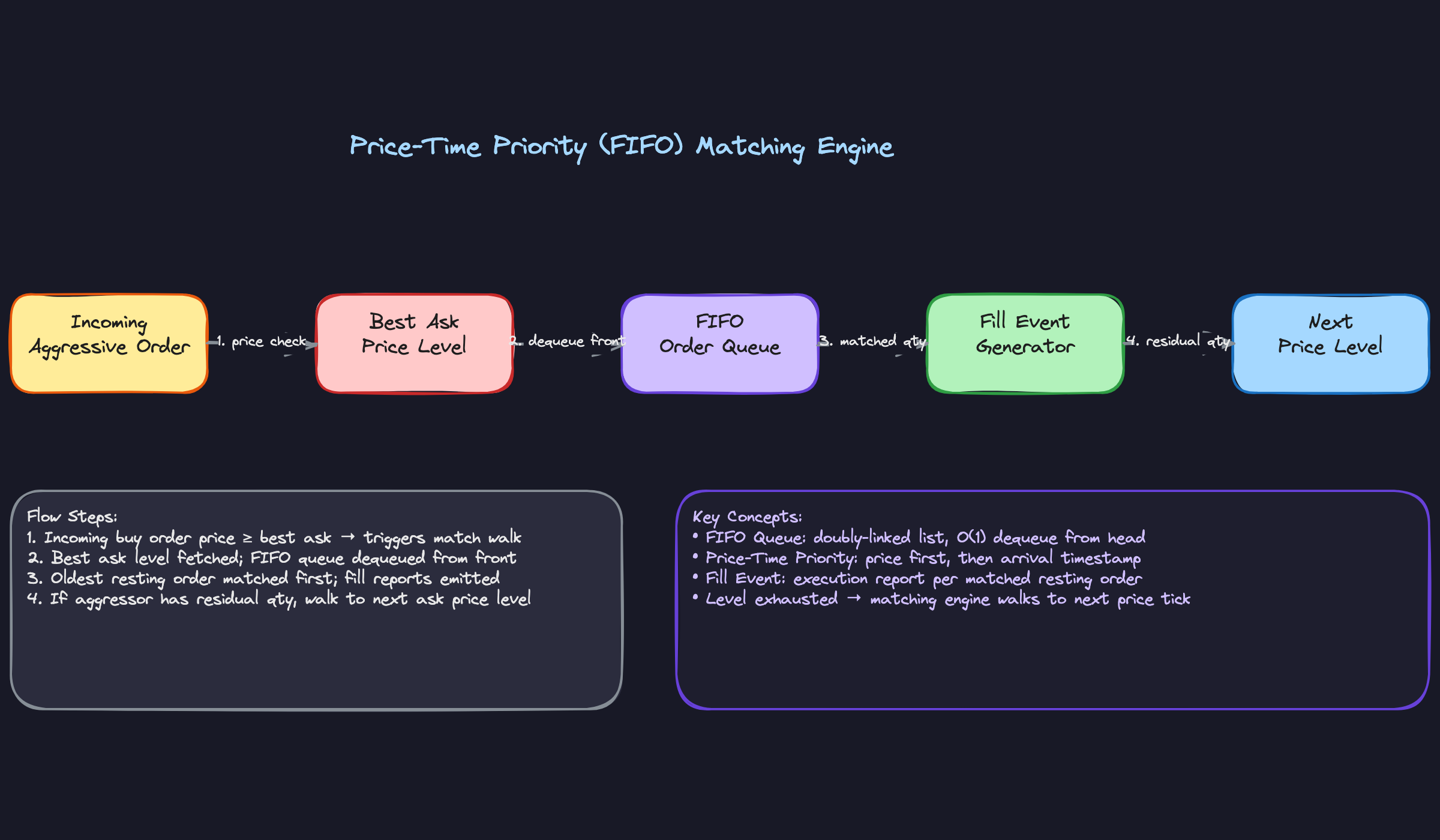

When an aggressive order arrives (a market order, or a limit order priced to cross the spread), the matching engine takes over. It walks the opposite side of the book starting at the best price, drains the queue at that level, moves to the next price level if there's still quantity left, and keeps going until the order is fully filled or runs out of eligible resting orders. Every match produces a fill event. Every fill event mutates the book state.

Here's what that flow looks like:

The Order Lifecycle

An order doesn't just appear and disappear. It moves through states: New, Acknowledged, Partially Filled, then either Filled or Cancelled. Each transition is a book mutation, and each mutation has to be published to downstream consumers before the next event is processed.

This matters in an interview because the interviewer is probing whether you understand consistency. If a partial fill updates the resting quantity in the book but the fill event hasn't been published yet, your risk system is looking at stale exposure. The engine has to treat each state transition as atomic from the perspective of everything downstream.

The Data Structure Underneath

A naive implementation uses a sorted map from price to queue. That works, but it's not what a production engine uses. The real choice is a flat array indexed by price tick, where the index is just (price - min_price) / tick_size. For instruments with a bounded tick range (most futures, many equities), this gives you O(1) price level lookup with cache-local memory access, which is far more important than asymptotic complexity when you're operating in the hundreds-of-nanoseconds range.

Each price level holds a doubly-linked list of orders. Dequeuing from the front is O(1). Cancelling an order anywhere in the list is O(1) if you hold a pointer to the node, which the order management layer always does.

The Latency Budget Is Not Negotiable

At co-located venues, the expected round trip from order receipt to fill acknowledgment is under 10 microseconds. Some venues target under one microsecond for the matching step itself. That number shapes every decision: why the engine is single-threaded, why the order book lives in L3 cache, why kernel bypass networking exists. If you understand the latency budget, the architectural choices stop looking arbitrary and start looking inevitable.

Your interviewer cares about this because it's the dividing line between candidates who understand trading infrastructure and candidates who are pattern-matching to generic distributed systems. The constraints here are different in kind, not just degree.

Patterns You Need to Know

In an interview, you'll usually need to pick a specific approach. Here are the ones worth knowing.

Price-Time Priority (FIFO)

This is the default matching algorithm for equities and most futures markets. When multiple resting orders sit at the same price level, the engine fills them strictly in arrival order. First in, first out. The order that arrived earliest gets filled first, and the next order in the queue only sees a fill once the one ahead of it is exhausted.

That simplicity has a profound consequence for market structure: speed becomes the primary competitive variable. If you can get your order to the front of the queue at a price level before anyone else, you have a better fill rate. This is the entire reason co-location exists. HFT firms pay significant fees to place their servers inside exchange data centers, shaving microseconds off network latency, because queue position at a price level is a direct proxy for profitability. When your interviewer asks why latency matters so much, this is the answer.

When to reach for this: any time the problem involves equities, futures on major exchanges (CME, ICE), or any venue where the design goal is price discovery through competitive quoting.

Pro-Rata Matching

Pro-rata throws out the timestamp entirely. When an aggressive order arrives at a price level, every resting order at that level gets a share of the fill proportional to its size. An order representing 20% of the total resting quantity at that price gets 20% of the fill.

The rounding math creates a small wrinkle: proportional allocation rarely divides evenly, so venues define rules for distributing the residual. Common approaches give the remainder to the largest resting order, or to the earliest-arriving order among those tied for largest. You should know this exists even if you don't memorize the exact rules, because interviewers at options-focused firms will probe it. Pro-rata is the dominant algorithm on EURIBOR and Eurodollar futures (CME), and it's common across options markets. The strategic implication flips from FIFO: speed no longer matters, so participants compete on size instead. Firms post large passive orders to capture a bigger slice of each fill, which is why these markets tend to have deep, thick order books.

When to reach for this: options markets, short-term interest rate futures, or any scenario where the interviewer describes a market with thick resting liquidity and participants competing on order size rather than speed.

Single-Threaded Deterministic Engine Architecture

Most candidates, when asked how to scale a matching engine, instinctively reach for parallelism. More threads, more cores, partition the work. This is the wrong answer for a single-instrument matching engine, and getting it right is one of the clearest signals of real trading systems experience.

Production matching engines at major exchanges are single-threaded by design. One thread reads from a sequenced input queue, mutates the order book, generates fill events, and writes to an outbound journal. No locks, no contention, no non-determinism. The sequencer upstream is the only point of serialization: it stamps every inbound event with a monotonically increasing sequence number before the matching thread ever sees it. Because the engine processes events one at a time in a fixed order, the entire system is fully reproducible. Feed the same sequence of events through the same engine code and you get identical output every time. That property is not just elegant; it's required. MiFID II and equivalent regulations mandate that exchanges maintain complete, replayable audit trails of every order and fill.

Performance comes not from parallelism but from eliminating every source of latency in the single-threaded path: kernel bypass networking (DPDK, Solarflare) to get packets off the wire without touching the OS, CPU pinning to keep the matching thread on a dedicated core with no context switches, a lock-free ring buffer (the LMAX Disruptor pattern) between the network thread and the matching thread, and an order book that fits entirely in L3 cache for hot instruments. When the problem demands even lower latency, the matching loop itself can be offloaded to an FPGA.

The contrast worth knowing: some venues with many instrument classes run partitioned multi-engine designs, where each engine owns a subset of instruments. This scales horizontally but introduces a hard problem: cross-instrument orders (spreads, combos) require coordination across engines, which reintroduces synchronization complexity. Most exchange architects treat this as a last resort.

When to reach for this: any question about exchange-side matching engine design, or when the interviewer asks how you'd guarantee deterministic, auditable order processing.

Call Auction and Uncrossing

Continuous matching handles the trading day, but markets don't open cold. Before continuous trading begins, orders accumulate during a pre-open window where no matching occurs. Participants can submit, modify, and cancel orders, and the exchange publishes an indicative price so everyone can see where the market is likely to open. At the scheduled auction trigger time, the engine runs a single batch match.

The uncrossing price is the price that maximizes executable volume across all resting orders. The algorithm sweeps every price level, computing how many shares would trade if the auction cleared at that price, and selects the level with the highest executable quantity. Ties are broken by minimizing the order imbalance (the difference between buy and sell volume at that price). Every eligible order fills at this single price simultaneously, regardless of their individual limit prices. A buy order with a limit of $102 fills at the same $100.50 uncrossing price as a buy order with a limit of $100.51, as long as both are on the right side of the uncrossing price.

This pattern reappears in two common interview follow-ups: IPO openings (where the first trade ever for a security uses an auction to establish a fair opening price) and circuit breaker reopenings (where trading halts after extreme volatility and resumes via an auction to let the market find a new equilibrium). If your interviewer mentions either scenario, the answer starts with "call auction."

When to reach for this: market open/close design, IPO mechanics, circuit breaker recovery, or any scenario where the interviewer asks how you'd establish a fair price after a period of no trading.

| Algorithm / Pattern | Core Mechanism | Primary Venue Type | Key Design Implication |

|---|---|---|---|

| Price-Time Priority | Fill by arrival order within price level | Equities, futures (CME, ICE) | Queue position tracking; co-location arms race |

| Pro-Rata | Fill proportional to resting order size | Options, STIR futures (EURIBOR) | Size competition; thick passive books |

| Single-Threaded Engine | One sequenced thread, no locks | Exchange matching cores | Determinism, auditability, cache optimization |

| Call Auction / Uncrossing | Batch match at MEV price | Market open/close, IPOs, halts | Maximizes executable volume; seeds continuous book |

For most interview problems, you'll default to price-time priority. It's the baseline assumption for any equity or futures market, and it anchors every discussion about latency, co-location, and queue management. Reach for pro-rata when the problem involves options or short-term interest rate products, or when the interviewer describes a market where participants are competing on order size. The auction pattern is almost always a follow-up, but knowing it cold when the interviewer pivots to "what happens at market open" is exactly the kind of depth that separates candidates who've read about matching engines from those who've built them.

What Trips People Up

Here's where candidates lose points — and it's almost always one of these.

The Mistake: Saying "The Order Book Matches Orders"

It sounds harmless. You're explaining the system, things are going well, and you say something like "when a buy order comes in, the order book matches it against the best ask." The interviewer's pen stops moving.

The order book is a data structure. It holds state. It doesn't do anything. The matching engine is the process that reads that state, applies a matching algorithm, and transitions the book from one state to the next. Conflating the two tells the interviewer you're thinking about this as a black box, not as a system with distinct components and clear responsibilities.

Say instead: "The matching engine receives an incoming aggressive order, walks the ask side of the order book from best price inward, and generates fill events until the order is exhausted or no more price levels cross." One sentence. Clean separation.

The Mistake: Stopping at "Use a Sorted Map"

When asked about the order book's internal data structure, most candidates say "a sorted map of price levels, each holding a queue of orders" and then look satisfied. That answer is not wrong. It's just incomplete in a way that signals you've never had to squeeze latency out of this code.

A std::map or a red-black tree gives you O(log n) price level lookup, but it also gives you pointer-chasing through heap memory on every access. At microsecond latency, that's the difference between a cache hit and a cache miss, and cache misses are measured in hundreds of nanoseconds. For instruments with a bounded, predictable tick range (most futures, many equities), a flat array indexed by price tick gives you O(1) lookup and keeps hot price levels in L1 or L2 cache. The data structure choice is a latency choice.

That one sentence moves you from "knows data structures" to "thinks about hardware."

The Mistake: Getting Order Amendment Semantics Wrong

This one trips up even candidates who have read a lot about order books. The question comes as a follow-up: "What happens when a participant modifies a resting order?" A common answer is something like "the order gets updated in place and keeps its queue position."

Half right. Reducing an order's quantity preserves priority at most venues. The order stays where it is in the FIFO queue, just with a smaller size. But changing the price is a cancel-and-replace. The original order is cancelled, a new order is created at the new price, and it goes to the back of the queue at that price level. You lose your time priority entirely. This distinction matters enormously to HFT participants who guard queue position like real estate.

Getting this wrong signals you haven't worked with actual exchange protocols. FIX OrderCancelReplaceRequest with a price change is treated as a new order by every major venue. Know that cold.

The Mistake: Defaulting to "Add More Threads" for Performance

Someone asks how you'd get matching latency below a microsecond. A lot of candidates immediately reach for parallelism: "partition the order book by instrument, run multiple matching threads, use a thread pool..." It sounds like good systems thinking. In this context, it's the wrong instinct.

The single-threaded deterministic engine is the standard architecture at major exchanges precisely because it eliminates the need for locks, avoids race conditions on shared book state, and produces a fully auditable, replayable event log. MiFID II requires best execution audit trails. A multi-threaded engine that interleaves operations non-deterministically makes replay and audit genuinely hard. Regulators don't love that.

The performance comes from elsewhere: kernel bypass networking (DPDK, Solarflare) to cut interrupt overhead, CPU pinning to eliminate scheduler jitter, a lock-free ring buffer (Disruptor pattern) between the network thread and the matching thread, and keeping the hot order book resident in L3 cache. If you need to go further, you offload the matching loop to an FPGA. None of that requires a second matching thread.

How to Talk About This in Your Interview

When to Bring It Up

The clearest signal is any question about exchange infrastructure, order processing, or "how trades actually happen." If the interviewer asks you to design a trading venue, an OMS, or a risk system, order book mechanics are the foundation you should establish before anything else.

Watch for these specific phrases: "how would you handle high-throughput order flow," "what data structure would you use for price levels," or "walk me through the lifecycle of an order." Each one is an invitation to show you understand the engine, not just the API sitting in front of it.

If the conversation touches on latency targets in the single-digit microsecond range, that's your cue to connect data structure choices to cache behavior. Most candidates wait to be asked. Don't.

Sample Dialogue

Follow-Up Questions to Expect

"How would you get matching latency below one microsecond?" Start with kernel bypass networking to eliminate syscall overhead, then CPU pinning and NUMA-aware memory allocation, then a lock-free ring buffer between the network thread and the matching thread, then confirm the hot order book fits in L3 cache. If they push further, mention FPGA offload for the matching loop itself, where the price comparison and fill generation happen in hardware pipeline stages.

"Why use a single-threaded engine instead of parallelizing across instruments?" Determinism and auditability. A single-threaded engine produces an identical sequence of outputs for any given input sequence, which is what regulators require for best execution audit trails under MiFID II. Parallelism introduces non-determinism that's very hard to reconstruct after the fact.

"How does the matching algorithm choice affect participant behavior?" FIFO rewards speed, which drives co-location demand and arms races around queue position. Pro-rata rewards size, which pushes participants to post large passive orders and reduces the value of raw latency. The algorithm shapes the microstructure of the market, not just the fill mechanics.

"What happens to queue priority when a participant amends their order?" Price amendments lose priority entirely; the engine treats it as a cancel and replace, and the order goes to the back of the queue at the new price. Quantity reductions are the exception: most venues preserve priority if you're only reducing size, because you're not gaining any advantage.

What Separates Good from Great

- A mid-level answer describes the matching walk correctly but stays at the algorithmic level. A senior answer connects every design choice back to a constraint: the flat array beats a tree because of cache locality, the single thread beats parallelism because of audit requirements, the sequencer exists because the engine must never reason about time.

- Mid-level candidates treat FIFO as the default without comment. Senior candidates proactively note that the algorithm choice is a market design decision with real consequences for who participates and how, and they can name where pro-rata is used and why (EURIBOR futures, options markets with large passive liquidity providers).

- The real separator is regulatory awareness. Connecting the single-threaded architecture to MiFID II best execution audit trails, or noting that the outbound event journal enables deterministic replay for compliance review, signals that you've thought about these systems in production, not just in theory.