Join ML Engineer Interview MasterClass (June Cohort) led by FAANG Data Scientists | Just 6 seats remaining...

ML Engineer MasterClass (June) | 6 seats left

Design a Matching Engine

Requirements and Constraints

The Design Challenge

Design challenge: Design a high-performance order matching engine capable of processing 1M+ orders per second per instrument with P99 matching latency under 10 microseconds, strict price-time priority guarantees, and deterministic state recovery within 100ms of a process restart.

The matching engine is the heart of any exchange or internalization venue. Every time two orders cross, the matching engine decides who gets filled, at what price, and in what sequence. Get the logic wrong and you have a fairness violation. Get the performance wrong and you're the exchange that can't keep up during a flash crash.

At a trading firm, this component sits at the intersection of market microstructure and systems engineering. It's downstream of the order gateway (which handles connectivity and protocol parsing) and upstream of the trade reporting and risk systems. Nothing in the firm's P&L happens without it working correctly.

Clarifying Questions to Ask First

Before sketching anything, ask the interviewer these. The answers change the design substantially.

Is this an exchange-grade engine or a broker internalization engine? An exchange engine matches orders from many external participants and must publish a public market data feed. An internalization engine matches a broker's client flow against their own inventory, with different regulatory obligations and no public feed requirement.

What regulatory regime applies? Reg NMS in the US requires specific order protection rules. MiFID II in Europe mandates microsecond-precision timestamps and best execution reporting. Both have audit trail requirements that affect your persistence design.

Do we need iceberg or hidden order support? These add meaningful complexity to the matching loop. A hidden order rests in the book but doesn't appear in the public feed. An iceberg order shows only a slice of its full quantity. Both require special-casing in the matching algorithm.

What's the expected cancel-to-new ratio? In equities markets, cancels routinely outnumber new orders 10:1 or more. This matters for your order lifecycle design and your WAL write volume.

Scope this to a single-instrument engine first. Tell the interviewer you'll discuss multi-instrument scaling after you've nailed the single-instrument design.

Functional Requirements

- Order acceptance and processing. The engine accepts limit orders (rest at a specified price), market orders (fill immediately at best available price), and stop orders (convert to market or limit when a trigger price is reached).

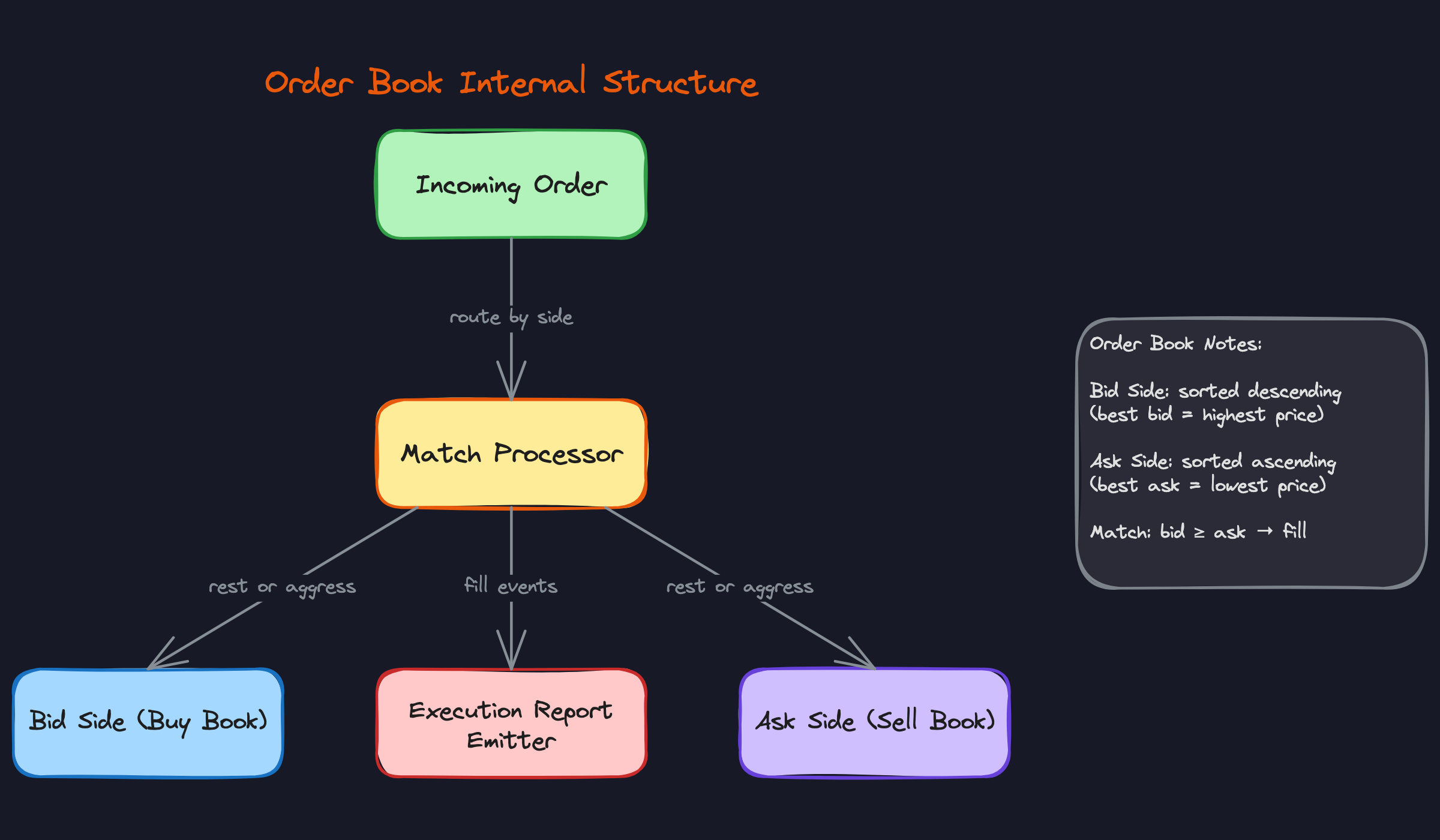

- Price-time priority order book. For each instrument, maintain a bid side and an ask side. Within each side, orders are sorted by price first, then by arrival time. A buy order at $100.05 has priority over one at $100.04. Two buy orders at $100.05 are filled in the order they arrived.

- Matching and execution. When an incoming order crosses the spread, walk the contra side from best price inward, generating fills until the order is fully executed or no more matches exist. Emit an execution report for both the aggressor (incoming) and each passive (resting) order that gets filled.

- Order book updates. After every match or resting order placement, publish an incremental order book delta. Downstream consumers reconstruct the full book from a snapshot plus a sequence of deltas.

- Cancel and modify operations. Support cancel-by-order-ID (remove a resting order from the book) and cancel-replace (atomically cancel and resubmit with new price or quantity). A modify that changes price loses time priority; a modify that reduces quantity does not.

- Sequence numbering. Every inbound order event and every outbound execution event carries a monotonically increasing sequence number. Gaps in sequence numbers signal a missed message to downstream consumers.

Non-Functional Requirements

Latency. P99 matching latency under 10 microseconds, measured from the moment a validated order enters the matching engine to the moment the execution report is handed to the output publisher. For context, a typical web API targets 10-50 milliseconds. You're operating three orders of magnitude faster.

Throughput. 1M+ orders per second per instrument. At peak (market open, major announcements), plan for 500K orders/sec as a sustained rate with burst headroom above that.

Determinism. No jitter. The matching engine must produce identical output given identical input, every time. This rules out garbage-collected runtimes, dynamic memory allocation on the hot path, and any operation with non-deterministic latency.

Fairness. Price-time priority must be enforced exactly. Any deviation is a regulatory and reputational problem. The engine's correctness guarantees are as important as its performance guarantees.

Availability. The engine must be available during all market hours with no scheduled downtime. State must survive a process restart within 100ms, which means you cannot rely on replaying a full WAL from scratch on startup.

Regulatory. MiFID II requires timestamps at microsecond precision using PTP-synchronized clocks. Both Reg NMS and MiFID II require a complete audit trail of every order state transition, queryable after the fact. These requirements must be satisfied without adding latency to the matching critical path.

Scale Estimates

Work through these numbers out loud in the interview. It signals you understand the operational reality.

Order rate. 500K orders/sec sustained per instrument. At 64 bytes per order message (a typical binary protocol frame), that's 32 MB/sec of inbound order flow per instrument. Manageable on a single NIC, but it means your parsing and validation pipeline can spend no more than 2 microseconds per message to keep up.

Order book depth. Up to 10,000 price levels per side. Each price level holds a queue of resting orders. At 64 bytes per order struct and an average of 5 resting orders per level, the entire order book fits comfortably in L3 cache (roughly 6.4 MB). This is intentional. Cache residency is a design goal, not a lucky coincidence.

Execution latency budget. Trade confirmations must be published within 1 microsecond of a match. That's your entire budget from match decision to execution report on the wire. Kernel bypass networking is not optional at this target.

Market data fan-out. 500+ downstream subscribers receive order book deltas via multicast UDP. At a delta rate of 1M updates/sec and 32 bytes per delta, that's 32 MB/sec of multicast traffic. A single multicast group handles this without per-subscriber unicast overhead.

WAL write volume. Every order state transition hits the write-ahead log. At 500K orders/sec with an average of 3 state transitions per order (new, acknowledged, filled/cancelled), you're writing 1.5M events/sec to NVMe. At 128 bytes per event, that's 192 MB/sec of sequential writes. Modern NVMe handles this comfortably; the constraint is write latency, not throughput.

System Architecture

The architecture of a matching engine isn't just a set of boxes and arrows. Every design decision, every thread boundary, every data structure choice directly determines whether you hit 1 microsecond or 100 microseconds. When an interviewer asks you to walk through the architecture, they're really asking: do you understand where latency comes from?

The Single-Threaded Critical Path

The most important thing to say early in your interview: the matching engine core runs on a single thread. Not because of laziness, but because locks are latency. The moment you introduce a mutex on the order book, you've added unpredictable scheduling delays that can spike your P99 into the milliseconds.

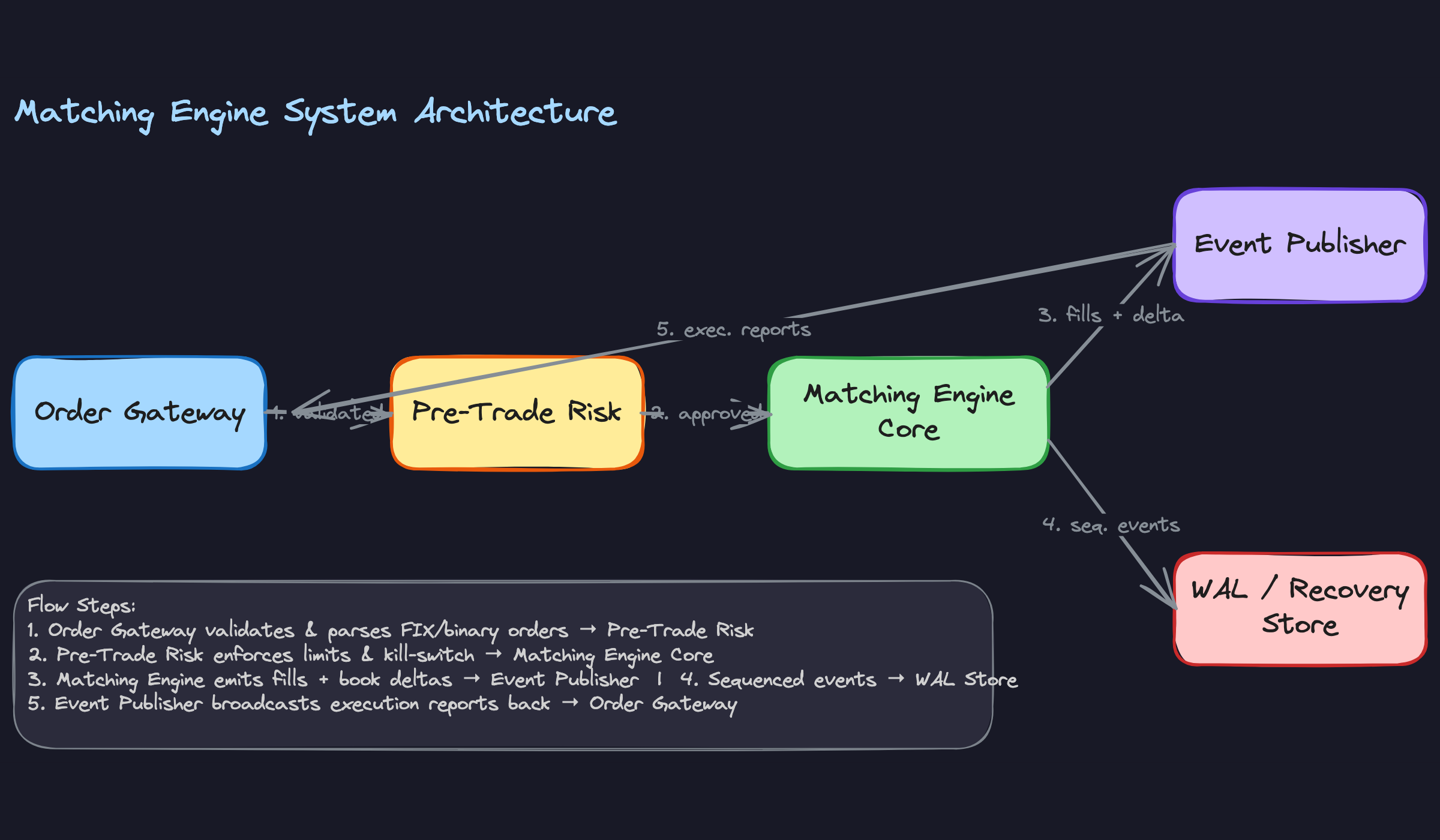

The critical path looks like this:

- Order Gateway receives a FIX or binary protocol message from a market participant over a kernel-bypass NIC. It parses the wire format and constructs an internal order struct.

- Pre-Trade Risk runs synchronous checks: position limits, fat-finger guards, order rate throttles. This happens before the order touches the book. If it fails, the order is rejected and never enters the matching thread.

- Matching Engine Core receives the validated order, runs price-time priority matching against the resting book, and emits fill events and book delta events.

- Event Publisher takes those fill events and broadcasts them downstream via multicast UDP. Execution reports go back to the originating participant; book updates go to market data subscribers.

Each hop adds latency. Your job in the interview is to show you know exactly where those nanoseconds go.

The Disruptor Pattern

Getting orders from the network I/O thread to the matching thread without a mutex is the core engineering challenge. The answer is the Disruptor pattern: a lock-free ring buffer where a single producer (the I/O thread) writes order events and a single consumer (the matching thread) reads them.

Three Disruptor rings connect the main components: one from I/O to Matching, one from Matching to Publisher, and one from Matching to WAL. You end up with three threads on the critical path (I/O, matching, and publish) plus a fourth WAL thread draining the persistence ring. Each is pinned to a dedicated physical core.

The Disruptor uses memory barriers instead of locks. The producer writes an event to the next slot and updates a sequence counter. The consumer spins on that counter, reading the event as soon as it appears. The Disruptor's communication mechanism avoids kernel involvement for data transfer and synchronization, minimizing context switches related to inter-thread communication. Handoff latency runs around 25 nanoseconds in practice.

std::mutex lock/unlock cycle costs roughly 100-300ns on a modern CPU, which is an eternity when your entire matching budget is 1 microsecond.The three hot threads on the critical path are each pinned to a dedicated physical core and never share CPU time with anything else. The WAL thread sits off the critical path entirely, which is why it gets its own ring rather than sharing the publisher's.

Market Data Distribution

Once a trade executes, the matching engine emits two things: an execution report (who filled, at what price, for how much) and an order book delta (what changed at which price level). These go to the Event Publisher, which fans them out via multicast UDP.

Multicast is the right choice here because you have 500+ downstream subscribers and you cannot afford to send 500 separate TCP streams from the critical path. With multicast, the publisher sends one packet and the network replicates it. Subscribers that miss a packet detect the gap via sequence numbers and request a retransmission from the WAL, not from the matching engine itself.

The matching engine never talks to downstream consumers directly. It writes to the ring buffer and moves on. This is intentional.

Persistence Without Blocking

The WAL runs on a separate thread. The matching engine writes sequenced events to the Matching-to-WAL Disruptor ring; the WAL thread drains that buffer and appends to an NVMe-backed log. The matching engine does not wait for the write to complete before processing the next order.

This means the WAL is slightly behind the live state, which is acceptable. On restart, you replay from the last checkpoint plus any WAL entries after it. The design requirement is full state recovery within 100ms, which is achievable with a recent checkpoint and a fast NVMe device.

1Checkpoint interval: every 10,000 events

2WAL write throughput: ~1M events/sec on NVMe (sequential writes)

3Recovery time: checkpoint load (~50ms) + WAL replay (~30ms) = ~80ms

4Separation of Concerns

The matching engine core is intentionally ignorant. It knows nothing about FIX protocol, network sockets, NVMe storage, or downstream risk systems. It consumes a stream of order events with monotonically increasing sequence numbers and emits a stream of execution events. That's it.

This design makes the engine testable in isolation. You can replay any sequence of historical orders through it and get deterministic output, which is essential for both debugging and regulatory audit. It also means you can swap out the network layer (say, moving from DPDK to an FPGA front-end) without touching the matching logic.

The interviewer will likely probe this boundary. If they ask "what happens if the risk system is slow?", the answer is: the risk system runs before the order reaches the engine, and it's synchronous. If it's slow, that's a risk system problem, not a matching engine problem. The engine itself never waits on anything external.

Deployment

The entire critical path runs co-located in the same data center rack as the exchange's matching infrastructure, connected via a direct cross-connect rather than a routed network. You're eliminating every unnecessary fiber hop.

CPU pinning is non-negotiable. The three hot threads (I/O, matching, publisher) each get a dedicated physical core with hyperthreading disabled on that core. The OS is configured with isolcpus so the scheduler never touches those cores. All hot data structures live on the same NUMA node as the NIC to avoid cross-socket memory access, which adds roughly 40-80ns per access.

Core Components Deep Dive

The three components that will make or break your design are the order book data structure, the matching algorithm that operates on it, and the pre-trade risk gate that sits upstream. Get these right and the rest of the system is plumbing. Get them wrong and no amount of kernel bypass networking will save you.

Component 1: The Order Book

Data Structure Choice

The order book has two sides: a bid side sorted descending by price, and an ask side sorted ascending. Each price level holds a FIFO queue of resting orders. The question interviewers love to ask is: what do you use for the sorted container?

The textbook answer is a red-black tree (std::map in C++). Insertion and deletion are O(log n), iteration to the best price is O(1) once you cache an iterator. That's fine for a broker internalization engine handling tens of thousands of orders per second. It's not fine for an exchange-grade engine at 500K orders/sec.

The real answer depends on the instrument. For equity markets where prices cluster tightly around a few hundred ticks, a flat array indexed by price tick is dramatically faster. You allocate a contiguous block of PriceLevel structs spanning the expected price range, and accessing any level is a single array dereference. No pointer chasing, no tree traversal, no cache misses from scattered heap nodes.

The tradeoff is memory. If your tick size is $0.01 and the instrument trades between $1 and $500, you need 50,000 slots. At ~64 bytes per PriceLevel, that's 3MB, which fits comfortably in L3 cache. For instruments with wide price ranges or tiny tick sizes (FX, crypto), you fall back to a sorted map or a hybrid: array for the top N levels near the spread, tree for the outliers.

Here's what the PriceLevel struct looks like with cache-line alignment:

1// Each PriceLevel is exactly 64 bytes (one cache line)

2struct alignas(64) PriceLevel {

3 int64_t price; // price in ticks (integer, never float)

4 uint64_t total_qty; // sum of all resting quantities at this level

5 uint32_t order_count; // number of resting orders

6 uint32_t _pad;

7 // Intrusive linked list of Order nodes at this level (FIFO)

8 Order* head; // oldest order (next to match)

9 Order* tail; // newest order (appended here)

10 uint8_t _reserved[24];

11};

12

13// The book itself: pre-allocated flat array

14struct OrderBook {

15 static constexpr int PRICE_LEVELS = 65536;

16 static constexpr int64_t BASE_PRICE = 0; // minimum price in ticks

17

18 PriceLevel bids[PRICE_LEVELS]; // indexed by (price - BASE_PRICE)

19 PriceLevel asks[PRICE_LEVELS];

20

21 int64_t best_bid_tick = -1;

22 int64_t best_ask_tick = INT64_MAX;

23

24 // One bit per price level: 1 = has resting orders, 0 = empty

25 // 65536 levels / 64 bits per word = 1024 uint64_t words = 8KB

26 uint64_t bid_bitmap[PRICE_LEVELS / 64] = {};

27 uint64_t ask_bitmap[PRICE_LEVELS / 64] = {};

28};

29The bitmaps are the key addition. When a level transitions from non-empty to empty, you clear the corresponding bit. Finding the next non-empty level is then a __builtin_ctzll (count trailing zeros) on the next bitmap word, which is a single CPU instruction. That's O(1) next-level lookup without any linear scan.

Never use floating point for prices in a matching engine. Represent prices as integers in tick units. Floating point comparison is non-deterministic across platforms and compiler flags. One rounding error in a price comparison is a regulatory incident.

Memory Management

Every Order object is pre-allocated from a pool at startup. No new, no malloc, no heap fragmentation on the critical path.

1template<typename T, size_t N>

2class ObjectPool {

3 alignas(64) T storage_[N];

4 T* free_list_[N];

5 size_t free_count_ = N;

6

7public:

8 ObjectPool() {

9 for (size_t i = 0; i < N; ++i)

10 free_list_[i] = &storage_[i];

11 }

12

13 T* acquire() noexcept {

14 // Called only from matching thread: no synchronization needed

15 return (free_count_ > 0) ? free_list_[--free_count_] : nullptr;

16 }

17

18 void release(T* obj) noexcept {

19 free_list_[free_count_++] = obj;

20 }

21};

22

23// At startup, allocate enough for peak order book depth

24ObjectPool<Order, 1'000'000> order_pool;

25If acquire() returns nullptr, the engine rejects the incoming order with a "system capacity" reject code. That's the correct behavior. You never want to fall back to heap allocation mid-match.

Component 2: The Matching Algorithm

The matching loop is the hottest code in the entire system. It runs on a single dedicated thread, pinned to an isolated core. Everything about its design is oriented around keeping the CPU's instruction cache warm and branch predictor happy.

The Inner Loop

void MatchingEngine::process_order(Order& incoming) {

// Stamp WAL entry BEFORE touching the book

wal_.append(EventType::ORDER_NEW, incoming);

if (incoming.side == Side::BUY) {

match_against(incoming, book_.asks, book_.ask_bitmap,

book_.best_ask_tick, +1);

if (incoming.remaining_qty > 0 && incoming.type == OrderType::LIMIT) {

rest_order(incoming, book_.bids, book_.bid_bitmap,

book_.best_bid_tick);

}

} else {

match_against(incoming, book_.bids, book_.bid_bitmap,

book_.best_bid_tick, -1);

if (incoming.remaining_qty > 0 && incoming.type == OrderType::LIMIT) {

rest_order(incoming, book_.asks, book_.ask_bitmap,

book_.best_ask_tick);

}

}

}

// Returns the index of the next set bit at or after `from_idx` in the given

// direction. Uses 64-bit word scan for O(1) amortized lookup.

static int64_t next_occupied_level(const uint64_t* bitmap,

int64_t from_idx,

int direction) {

int64_t idx = from_idx;

while (idx >= 0 && idx < OrderBook::PRICE_LEVELS) {

int word = idx / 64;

int bit = idx % 64;

if (direction > 0) {

// Scan upward: mask off bits below `bit`, then find lowest set bit

uint64_t masked = bitmap[word] >> bit;

if (masked) return word * 64 + bit + __builtin_ctzll(masked);

// No set bit in this word; jump to the start of the next word

idx = (word + 1) * 64;

} else {

// Scan downward: mask off bits above `bit`, then find highest set bit

uint64_t masked = bitmap[word] << (63 - bit);

if (masked) return word * 64 + 63 - __builtin_clzll(masked);

// No set bit in this word; jump to the end of the previous word

idx = word * 64 - 1;

}

}

return EMPTY_SENTINEL;

}

void MatchingEngine::match_against(Order& aggressor,

PriceLevel* contra_side,

uint64_t* bitmap,

int64_t& best_price_tick,

int direction) {

while (aggressor.remaining_qty > 0) {

if (best_price_tick == EMPTY_SENTINEL) break;

// Price check: does the aggressor's price cross the best resting level?

if (direction > 0 && aggressor.price_tick < best_price_tick) break;

if (direction < 0 && aggressor.price_tick > best_price_tick) break;

PriceLevel& level = contra_side[best_price_tick - OrderBook::BASE_PRICE];

Order* resting = level.head;

while (resting && aggressor.remaining_qty > 0) {

uint64_t fill_qty = std::min(aggressor.remaining_qty,

resting->remaining_qty);

// Emit execution reports for both sides

emit_fill(aggressor, *resting, fill_qty, best_price_tick);

aggressor.remaining_qty -= fill_qty;

resting->remaining_qty -= fill_qty;

if (resting->remaining_qty == 0) {

// Remove exhausted order from level

level.head = resting->next;

if (!level.head) level.tail = nullptr;

transition_order(*resting, OrderState::FILLED);

order_pool_.release(resting);

resting = level.head;

level.order_count--;

level.total_qty -= fill_qty;

} else {

level.total_qty -= fill_qty;

break; // resting order partially filled, stop walking

}

}

// If level is now empty, clear its bitmap bit and find the next

// occupied level in O(1) using the bitmap word scan above.

if (level.order_count == 0) {

int64_t idx = best_price_tick - OrderBook::BASE_PRICE;

bitmap[idx / 64] &= ~(1ULL << (idx % 64));

int64_t next = next_occupied_level(bitmap, idx + direction, direction);

best_price_tick = (next == EMPTY_SENTINEL)

? EMPTY_SENTINEL

: next + OrderBook::BASE_PRICE;

}

}

}

The common case in the inner loop is a partial fill of the resting order, which hits the break at the bottom of the inner while. Structure your code so the common case is the fall-through path, not the branch-taken path. This keeps the branch predictor in a happy state and avoids pipeline stalls.

The bitmap scan deserves a moment of attention. In the worst case, next_occupied_level scans one 64-bit word per 64 price levels. For a book with a 1000-tick spread, that's at most 16 word operations to find the next occupied level after draining a price. In practice, the spread is far tighter and the next occupied level is almost always in the same bitmap word, making it a single __builtin_ctzll instruction.

Order Lifecycle State Machine

Every state transition writes to the WAL before any downstream notification goes out. The sequence is always: write WAL, then notify. Never the reverse.

1NEW ──► ACKNOWLEDGED ──► PARTIALLY_FILLED ──► FILLED

2 │ │

3 ▼ ▼

4 REJECTED CANCELLED

51void MatchingEngine::transition_order(Order& order, OrderState new_state) {

2 order.state = new_state;

3 order.last_updated_ns = clock_.now_ns(); // PTP-synchronized hardware clock

4

5 // WAL write is synchronous from the matching thread's perspective,

6 // but the actual fsync happens on a separate I/O thread via the Disruptor

7 wal_.append(EventType::ORDER_STATE_CHANGE, order);

8

9 // Execution report goes to the output Disruptor ring buffer

10 exec_publisher_.publish(order);

11}

12The WAL write here is a memory write into a ring buffer. The I/O thread drains that ring buffer to NVMe asynchronously. From the matching thread's perspective, "writing to the WAL" costs roughly 10-20 nanoseconds (a cache-line write and a memory barrier). The actual disk write is someone else's problem.

Sequence Numbering

Every event that leaves the matching engine carries a monotonically increasing sequence number from a single atomic counter. Downstream consumers, including the standby engine, track this number and scream if they see a gap.

1class SequenceStamper {

2 uint64_t seq_ = 0; // Only touched by the matching thread

3

4public:

5 uint64_t next() noexcept { return ++seq_; }

6};

7No atomics, no memory fences. This counter is only ever touched by the single matching thread. That's the point of the single-threaded design: you get sequence number generation for free.

Component 3: Pre-Trade Risk

Pre-trade risk runs synchronously in the order gateway thread, before the order ever reaches the matching engine. This is the right place for it. If a fat-finger order gets into the book, you cannot un-match it.

1struct RiskCheckResult {

2 bool approved;

3 RejectReason reason;

4};

5

6RiskCheckResult PreTradeRisk::check(const Order& order) {

7 const auto& limits = limits_[order.participant_id];

8

9 // Fat-finger check: single order too large

10 if (order.quantity > limits.max_order_qty) {

11 return {false, RejectReason::ORDER_TOO_LARGE};

12 }

13

14 // Notional value check: price * qty exceeds threshold

15 if (order.price_tick * order.quantity > limits.max_notional_ticks) {

16 return {false, RejectReason::NOTIONAL_LIMIT};

17 }

18

19 // Order rate limit: token bucket per participant

20 if (!rate_limiters_[order.participant_id].try_consume(1)) {

21 return {false, RejectReason::RATE_LIMIT};

22 }

23

24 // Position limit: current position + this order would exceed limit

25 int64_t projected = positions_[order.participant_id] +

26 (order.side == Side::BUY ? order.quantity

27 : -order.quantity);

28 if (std::abs(projected) > limits.max_position) {

29 return {false, RejectReason::POSITION_LIMIT};

30 }

31

32 return {true, RejectReason::NONE};

33}

34The position counter is updated by the risk system after each fill, not before. This means there's a small window where two orders from the same participant could both pass the position check and together push them over the limit. That's an accepted tradeoff. The alternative is a lock between the risk thread and the matching thread, which destroys your latency.

Circuit Breakers

Circuit breakers are triggered by price movement, not by order volume. When the last trade price moves more than X% from the reference price within a rolling window, the engine transitions to auction mode.

1void MatchingEngine::on_trade_executed(int64_t trade_price_tick) {

2 double pct_move = std::abs(trade_price_tick - reference_price_tick_)

3 / static_cast<double>(reference_price_tick_);

4

5 if (pct_move > circuit_breaker_threshold_) {

6 mode_ = EngineMode::AUCTION;

7 // Stop processing new aggressive orders; accumulate into an auction book

8 // Schedule auction uncross after a cooling-off period

9 timer_.schedule(auction_uncross_ns_, [this]{ uncross_auction(); });

10 }

11}

12In auction mode, the engine still accepts orders but does not match them. It accumulates a crossed book and then uncrosses at a single price that maximizes volume. This is standard behavior for MiFID II volatility interruptions and Reg NMS clearly erroneous trade rules.

One more thing on memory under stress: your object pool has a fixed capacity. If a market dislocation causes 10x the normal number of resting orders (participants pulling liquidity and re-posting at wider spreads), you need enough pool capacity to handle it. Size your pool for the worst day you've ever seen, then double it.

Performance Optimization

Most engineers think latency is a networking problem. In a matching engine, it's everything: the OS scheduler, your memory allocator, how you wrote a conditional branch. Every layer has a tax, and your job in the interview is to name each one and explain how to avoid paying it.

The Latency Budget

Before optimizing anything, you need to know where time actually goes. Here's a realistic breakdown for a co-located engine targeting sub-10 microsecond tick-to-trade:

| Stage | Target | Technique |

|---|---|---|

| Network receive | <1μs | Kernel bypass (DPDK / Solarflare) |

| Message parse | <500ns | SIMD / FPGA offload |

| Pre-trade risk check | <1μs | Pre-computed limits, no I/O |

| Order matching | <2μs | Single-threaded, lock-free handoff |

| Network send | <1μs | Kernel bypass, zero-copy |

The interviewer will ask you to justify these numbers. Know them cold.

Network: Kernel Bypass

The Linux kernel's network stack adds roughly 10 to 15 microseconds of latency per packet. That's your entire budget, gone before you've even looked at the order.

DPDK and Solarflare OpenOnload solve this by moving packet processing entirely into userspace. Your application polls the NIC directly, no system calls, no context switches, no interrupt handling. Latency drops to under 1 microsecond. The tradeoff is that your application now owns the CPU core doing that polling, burning it at 100% even when traffic is light. That's an acceptable cost in a co-located trading environment where cores are cheap and microseconds are not.

For market data ingestion specifically, FPGA acceleration pushes even further. An FPGA can parse a raw UDP packet, decode a FIX or ITCH message, and update the top-of-book in under 100 nanoseconds, before a CPU core has even finished its cache lookup. You'd typically offload simple market order matching and top-of-book maintenance to the FPGA, leaving the CPU to handle complex order types, risk logic, and anything that requires branching on state. Bring this up proactively at senior and staff level; it signals you know where the real frontier is.

CPU and OS: Getting Out of Your Own Way

Kernel bypass buys you clean network latency. Then the OS scheduler hands it back.

Pin your matching thread to a dedicated physical core using CPU affinity (taskset or pthread_setaffinity_np). Disable hyperthreading on that core so you're not sharing execution resources with a sibling thread. Use isolcpus in the kernel boot parameters to prevent the OS from scheduling any other work there. Without isolation, a random kernel task can preempt your matching thread for 50 to 100 microseconds, which is catastrophic.

NUMA topology matters more than most candidates realize. If your NIC is on NUMA node 0 and your matching thread runs on NUMA node 1, every packet crossing that boundary pays a remote memory access penalty of roughly 100 nanoseconds. Keep the NIC, the matching thread, and all hot data structures on the same NUMA node. Use numactl to enforce this.

Huge pages (2MB instead of 4KB) reduce TLB pressure on your order book structures. With a dense price-level array covering thousands of ticks, you'll generate a lot of TLB misses on standard pages. Huge pages cut that overhead significantly and are straightforward to enable with mmap(MAP_HUGETLB).

Application Layer: Lock-Free Everything

The Disruptor ring buffer is the canonical solution for handing orders from the I/O thread to the matching thread without a mutex. It's a pre-allocated circular buffer where the producer and consumer coordinate using sequence numbers and memory barriers. No locks, no heap allocation, no kernel involvement. Handoff latency is around 25 nanoseconds.

The key property is single-producer, single-consumer. The moment you have multiple producers writing to the same ring buffer, you need a compare-and-swap to claim slots, which adds contention. The architecture section already established that the matching engine is single-threaded; that design decision is what makes the Disruptor work cleanly here.

Pre-allocate everything before the market opens. Object pools for Order and PriceLevel structs mean zero heap allocation on the critical path. malloc under load can take microseconds; a pool allocation is a pointer bump. Align your hot structs to 64-byte cache line boundaries to prevent false sharing between threads reading adjacent fields.

1// Cache-line aligned order struct prevents false sharing

2struct alignas(64) Order {

3 uint64_t order_id;

4 uint64_t timestamp_ns;

5 int64_t price; // in ticks

6 uint32_t quantity;

7 uint32_t remaining_qty;

8 uint8_t side; // 0 = bid, 1 = ask

9 uint8_t type; // 0 = limit, 1 = market

10 uint8_t status;

11 uint8_t _pad[29]; // pad to 64 bytes

12};

13The Matching Loop: Branch Prediction

The CPU's branch predictor is your silent partner. Help it.

Structure your matching loop so the common case, a partial fill where you continue walking the book, is the fall-through path. The rare case, a fully filled order or an empty book, takes the branch. In GCC and Clang you can make this explicit:

1while (remaining_qty > 0) {

2 if (__builtin_expect(best_level != nullptr, 1)) {

3 // common path: fill against resting order

4 execute_fill(best_level->front(), remaining_qty);

5 } else {

6 // rare path: book exhausted, rest the order

7 rest_order(incoming);

8 break;

9 }

10}

11Keep the entire matching function small enough to fit in L1 instruction cache, which is typically 32KB. If your matching logic is sprawling across multiple translation units with virtual dispatch and deep call stacks, you're evicting your own hot code. Inline aggressively, avoid virtual functions on the critical path, and profile with perf stat -e L1-icache-load-misses to verify.

Throughput: Batching and Parallelism

Single-instrument throughput is bounded by the single-threaded matching core. To scale across instruments, run a separate matching engine instance per instrument, each pinned to its own core. This is the standard exchange architecture: complete isolation, no shared state, linear scaling.

Batching helps on the output side. Rather than publishing each execution report individually over the network, batch fills into a single multicast packet when multiple fills occur in the same matching cycle. This amortizes the per-packet overhead across multiple events and reduces fan-out pressure on the event publisher.

For the input side, batching is more dangerous. Holding orders to batch them introduces artificial latency. The right approach is to drain the entire input ring buffer on each matching loop iteration rather than processing one order at a time, which gives you natural batching without adding wait time.

Measuring What You're Actually Optimizing

You cannot optimize what you cannot measure, and standard profiling tools lie about latency at this scale.

Use rdtsc for nanosecond-resolution timing within the process. It reads the CPU's timestamp counter directly with no system call overhead. The catch is that rdtsc is not synchronized across cores, so only use it for measurements on a single pinned thread.

1inline uint64_t rdtsc() {

2 uint32_t lo, hi;

3 __asm__ __volatile__ ("rdtsc" : "=a"(lo), "=d"(hi));

4 return ((uint64_t)hi << 32) | lo;

5}

6

7uint64_t t0 = rdtsc();

8process_order(order);

9uint64_t t1 = rdtsc();

10uint64_t cycles = t1 - t0;

11// divide by CPU frequency to get nanoseconds

12For end-to-end tick-to-trade measurement, you need PTP-synchronized hardware timestamps on both the inbound NIC (when the packet arrives) and the outbound NIC (when the execution report leaves). Software timestamps add jitter. Hardware timestamps are stamped by the NIC before the packet touches any software, giving you a clean measurement of the full pipeline.

Track P99 and P99.9, not averages. A matching engine with 2μs average latency and 500μs P99.9 is broken. Market makers care about tail latency because the worst-case fill is the one that costs them money.

perf and Intel VTune are your tools for finding where cycles go. Look specifically at cache miss rates (cache-misses, LLC-load-misses), branch misprediction rates (branch-misses), and instruction throughput. A high branch miss rate on the matching loop is a signal to restructure your conditionals.

Handling Market Stress

Flash crashes and major news events can spike order flow to 10x normal volume in under a second. Your engine needs a plan that isn't "fall over."

The ring buffer between the gateway and matching engine is your first line of defense. Size it to absorb at least 5 seconds of peak burst traffic. When the buffer fills, you have a choice: drop incoming orders (with a reject response) or apply backpressure to the gateway. Dropping is usually correct for a matching engine. Buffering indefinitely means participants get fills seconds after they expected them, which is worse than a clean reject.

Circuit breakers at the price level handle a different failure mode: a runaway algorithm sending orders at absurd prices. The matching engine should detect when the best bid or ask has moved more than a configurable threshold from the last traded price and halt matching, switching to an auction mode. This is both a market integrity mechanism and a protection against the engine itself being exploited.

The capacity planning answer interviewers want to hear is: size for 3x normal peak in steady state, design the ring buffers to absorb 10x burst for up to 30 seconds, and have explicit load-shedding logic with observable metrics so you know when you're approaching limits before you hit them.

Reliability and Risk Management

A matching engine that loses money faster than it makes it will be shut down before you finish explaining the architecture. Speed matters, but at trading firms, risk controls and fault tolerance are non-negotiable gates. Every design decision here has to answer two questions: what happens when something breaks, and what happens when a participant tries to do something dangerous?

Fault Tolerance

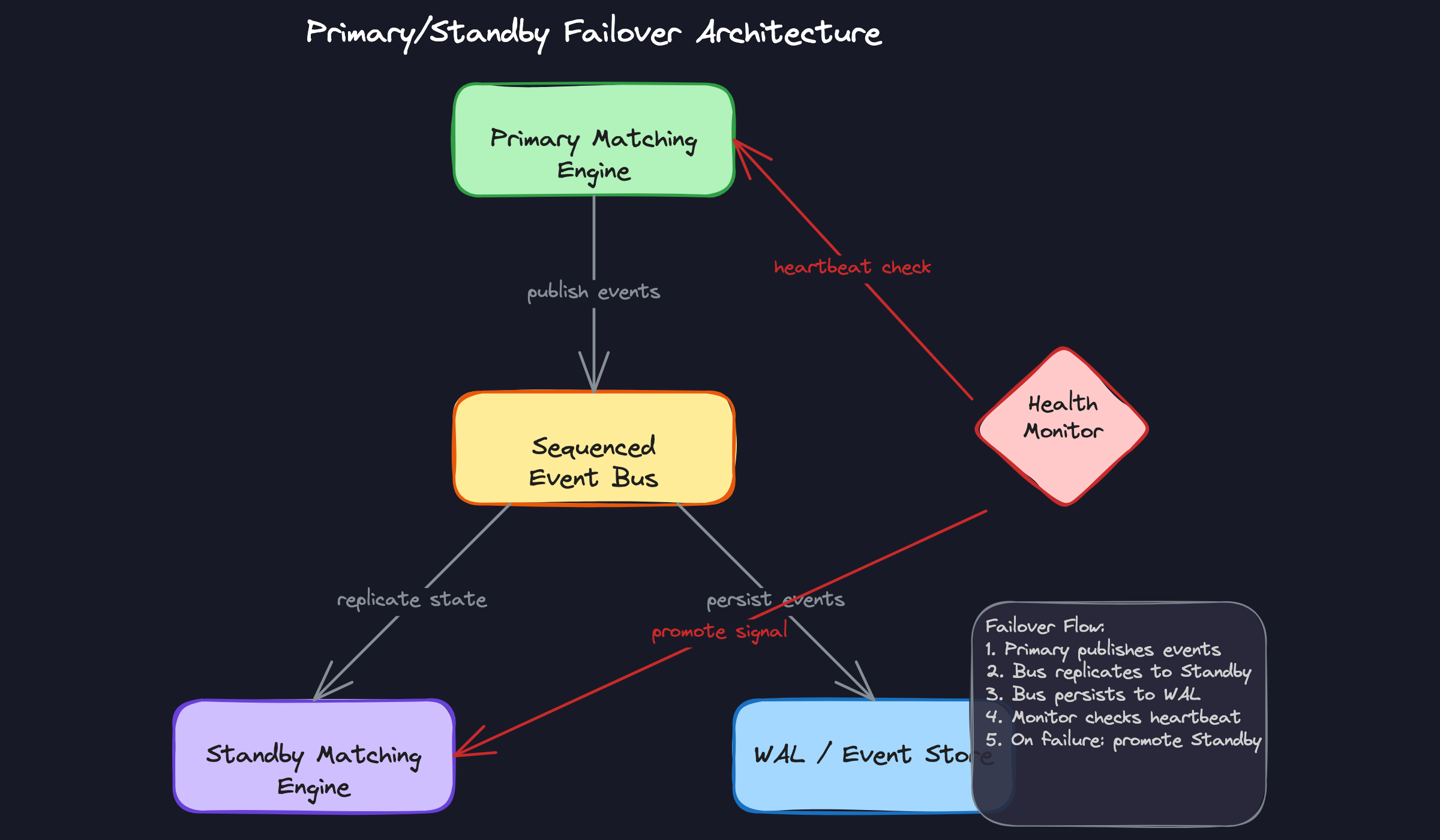

The standby instance is not a cold backup. It's a live shadow of the primary, consuming the exact same sequenced event stream in real time and maintaining an identical order book state. When the primary fails, promotion happens in under 100ms because the standby doesn't need to replay the WAL from scratch. It's already current.

This is the key insight interviewers want to hear: the standby's state is driven by the same ordered event stream that feeds the WAL. Both the standby and the durable log are consumers of the sequenced event bus. The standby gives you fast failover; the WAL gives you cold recovery and audit. They serve different purposes.

Split-brain is the failure mode where both primary and standby believe they are active and start accepting orders simultaneously. You prevent this with a hardware-level fencing mechanism, typically a token or lease held by the active engine. The health monitor revokes the primary's lease before signaling the standby to promote. The order gateway will not route to any instance that doesn't hold the active lease.

During market hours, your recovery time objective is measured in milliseconds, not minutes. A 100ms failover window is aggressive but achievable with a warm standby. Anything that requires WAL replay from a checkpoint is a disaster recovery path, not a high-availability path. Know the difference and say so explicitly.

Risk Controls

Pre-trade risk is a hard synchronous gate. No order touches the book until it has passed position limit checks, notional value limits, order rate throttles, and price collar validation. These checks run in the critical path, which means they must be fast, typically under 500 nanoseconds. You implement them as a series of integer comparisons against pre-loaded limit tables, not database queries.

A hardware kill switch sits above all of this. One signal from the risk desk halts all inbound order flow within a single network round trip. The kill switch doesn't wait for in-flight orders to complete. It drops them. This is intentional. When a firm needs to stop trading, they need it to stop now.

1// Simplified pre-trade risk check (runs before order enters the book)

2bool PreTradeRisk::check(const Order& order) {

3 const auto& limits = limits_table_[order.participant_id];

4

5 // Position limit check

6 int64_t projected_position = positions_[order.instrument_id]

7 + (order.side == Side::Buy ? order.quantity : -order.quantity);

8 if (std::abs(projected_position) > limits.max_position) return false;

9

10 // Notional limit check

11 if (order.price * order.quantity > limits.max_order_notional) return false;

12

13 // Order rate check (token bucket, updated atomically)

14 if (!rate_limiter_[order.participant_id].try_consume()) return false;

15

16 // Price collar: reject if price deviates > X% from last trade

17 double collar_pct = std::abs(order.price - last_trade_price_) / last_trade_price_;

18 if (collar_pct > limits.price_collar_pct) return false;

19

20 return true;

21}

22Circuit breakers operate at the market level, not the participant level. If the instrument's price moves more than a configured threshold within a rolling window, the engine pauses continuous matching and switches to auction mode. This is how exchanges handled the 2010 Flash Crash and why MiFID II mandates circuit breaker mechanisms for regulated venues.

Post-trade, you need reconciliation. Every fill emitted by the engine is compared against the participant's reported fills at end of day. Discrepancies trigger alerts before T+1 settlement. This runs entirely off the critical path, consuming the WAL as a source of truth.

Market Stress Handling

On a normal day, your engine might process 200K orders per second. On the day of a major macro announcement or a flash crash, that number can spike to 2M orders per second in under a second. Your system has to absorb this without dropping messages or introducing unbounded latency.

The Disruptor ring buffer between the gateway and the matching thread is your first line of defense. Size it to absorb several seconds of peak burst. If the ring fills completely, you have a choice: drop new orders (returning a reject to the sender) or apply backpressure to the gateway. Most exchange-grade engines choose explicit rejection over silent drops, because a rejected order is auditable. A dropped one isn't.

The matching thread itself must not slow down under load. This is why single-threaded design matters so much here. There's no lock contention to degrade under concurrency. The matching loop processes one order at a time, as fast as the CPU allows, and the throughput is a function of instruction count per order, not thread coordination overhead.

Sequence Gap Detection and Recovery

Every event leaving the matching engine carries a monotonically increasing sequence number. Downstream consumers, including the standby, the WAL writer, and market data subscribers, all track the last sequence number they've seen. A gap means a message was missed.

On detecting a gap, a consumer requests retransmission from the event store. The WAL supports point-in-time replay from any sequence number, so gap fills are cheap. This also means you can reconstruct the full order book state at any historical point in time, which is useful for both post-incident debugging and regulatory audit.

Audit and Compliance

MiFID II and Reg NMS both require timestamps at microsecond precision. You can't use gettimeofday() for this. You need a PTP-synchronized hardware clock, typically provided by the NIC itself via IEEE 1588 precision time protocol, with GPS as the grandmaster clock. The NIC timestamps packets at the hardware level before they even reach your application, which means the timestamp reflects when the order arrived at the wire, not when your software got around to processing it.

Every order state transition gets written to the audit log before any response is sent. New, acknowledged, partially filled, filled, cancelled, rejected: each transition is a durable record with a hardware timestamp, the participant identifier, and the full order details. This is not optional and it cannot be batched for performance. Regulators can and do request reconstruction of specific order sequences during investigations.

1CREATE TABLE order_audit_log (

2 seq_num BIGINT PRIMARY KEY, -- global sequence number

3 event_type VARCHAR(30) NOT NULL, -- 'NEW', 'ACK', 'PARTIAL_FILL', 'FILL', 'CANCEL', 'REJECT'

4 order_id UUID NOT NULL,

5 participant_id UUID NOT NULL,

6 instrument_id VARCHAR(20) NOT NULL,

7 side CHAR(1) NOT NULL, -- 'B' or 'S'

8 price BIGINT NOT NULL, -- price in ticks, avoids floating point

9 quantity BIGINT NOT NULL,

10 leaves_qty BIGINT NOT NULL, -- remaining quantity after this event

11 hardware_ts BIGINT NOT NULL, -- nanoseconds since epoch, NIC-stamped

12 software_ts BIGINT NOT NULL, -- nanoseconds since epoch, CPU TSC

13 created_at TIMESTAMP NOT NULL DEFAULT now()

14);

15

16CREATE INDEX idx_audit_participant ON order_audit_log(participant_id, hardware_ts);

17CREATE INDEX idx_audit_order ON order_audit_log(order_id, seq_num);

18Notice the dual timestamps. The hardware timestamp is the regulatory timestamp, the one you report to the regulator. The software timestamp is for internal latency measurement. The delta between them tells you your processing latency for every single order, which is exactly the data you need to prove best execution.

Record retention under MiFID II is five years. Under some jurisdictions it's seven. This data lives in cold storage, but it must be queryable. The WAL handles the hot path; an archival pipeline moves sealed log segments to object storage daily. The sequence number makes it trivially reconstructable in order.

What is Expected at Each Level

Interviewers at trading firms calibrate their expectations sharply. The gap between a mid-level and staff-level answer isn't just depth; it's whether you're reasoning about the system or reasoning about the constraints that shaped the system.

Mid-Level Engineer

- You can describe price-time priority correctly and implement a basic order book using a sorted map of price levels, each holding a FIFO queue of resting orders. You don't need to know the optimal data structure, but you need to know why ordering matters and what "time priority" actually enforces.

- You understand that the matching engine needs a write-ahead log for recovery and can articulate why: if the process crashes, you need to reconstruct order book state deterministically from a durable event sequence.

- You know FIX protocol exists and can explain its role as the industry-standard message format for order entry, even if you haven't implemented a FIX parser yourself.

- You identify the critical path (network in, risk check, match, publish out) and can explain why any blocking operation on that path is a problem.

Senior Engineer

- You design the full single-threaded critical path without being prompted. You explain why a single matching thread eliminates lock contention, and you know the Disruptor pattern by name. You can describe how the ring buffer hands off between the I/O thread and the matching thread with memory barriers instead of mutexes, and you can estimate the handoff cost (~25ns versus ~1 microsecond for a mutex).

- Kernel bypass isn't just a buzzword you drop. You can explain that the Linux network stack adds roughly 10 microseconds of latency, that DPDK or Solarflare OpenOnload eliminates that by processing packets entirely in userspace, and that this is non-negotiable for a sub-microsecond matching target.

- You place pre-trade risk checks correctly: synchronously before the order touches the book, never after. You can discuss the tradeoff between check thoroughness and latency, and you know that a hardware kill switch needs to halt order flow within one network round trip.

- You handle the failure modes. You know what happens when the primary engine dies mid-match, how a hot standby maintains mirror state via the sequenced event bus, and why replaying the full WAL on failover is too slow (which is why the standby stays warm).

Staff+ / Principal

- You proactively raise FPGA offload and frame it as a tradeoff, not a silver bullet. Offloading top-of-book maintenance and simple market order matching to FPGA can push matching latency below 100 nanoseconds, but it dramatically increases development complexity and limits the order types you can handle in hardware. You know when that tradeoff is worth making.

- NUMA topology and cache-line alignment are part of your baseline design, not afterthoughts. You pin the matching thread to a core on the same NUMA node as the NIC, you align hot structs to 64-byte cache lines to prevent false sharing, and you pre-allocate object pools to eliminate heap allocation on the critical path entirely.

- You bring up PTP clock synchronization unprompted when regulatory compliance comes up. MiFID II requires microsecond-precision timestamps on every order state transition. You know that software timestamps from

gettimeofday()aren't good enough, and you can explain how PTP-disciplined hardware timestamping satisfies the requirement without adding latency to the critical path. - When the interviewer asks about scaling beyond a single instrument, you reason through the tradeoffs between running a separate engine per instrument (clean isolation, simpler latency guarantees, higher infrastructure cost) versus a shared thread pool (better utilization, cross-instrument contention risk). You can defend a choice with numbers, not just intuition.