Join Data Science Interview MasterClass (May Cohort) led by FAANG Data Scientists | Just 2 seats remaining...

Data Science MasterClass (May) | 2 seats left

The Cloud Data Warehouse Interview Framework

The Cloud Data Warehouse Interview Framework

A candidate I coached last year could explain Snowflake's micro-partitioning in detail, walked through clustering keys without hesitation, and knew the difference between a virtual warehouse and a resource monitor. They didn't get the offer. When the interviewer asked "how would you design a cost-efficient ingestion pipeline for 50TB of event data?", they went straight to the technical solution and never once mentioned budget constraints, governance, or what happens when that table triples in size.

That's the trap. Cloud data warehouse interviews at Airbnb, Stripe, Netflix, Uber, and Snowflake itself aren't testing whether you've read the docs. They're testing whether you think like someone who has actually run these systems in production, where every architectural decision has a cost implication, every performance fix has a trade-off, and "it depends" is only a good answer if you can say what it depends on.

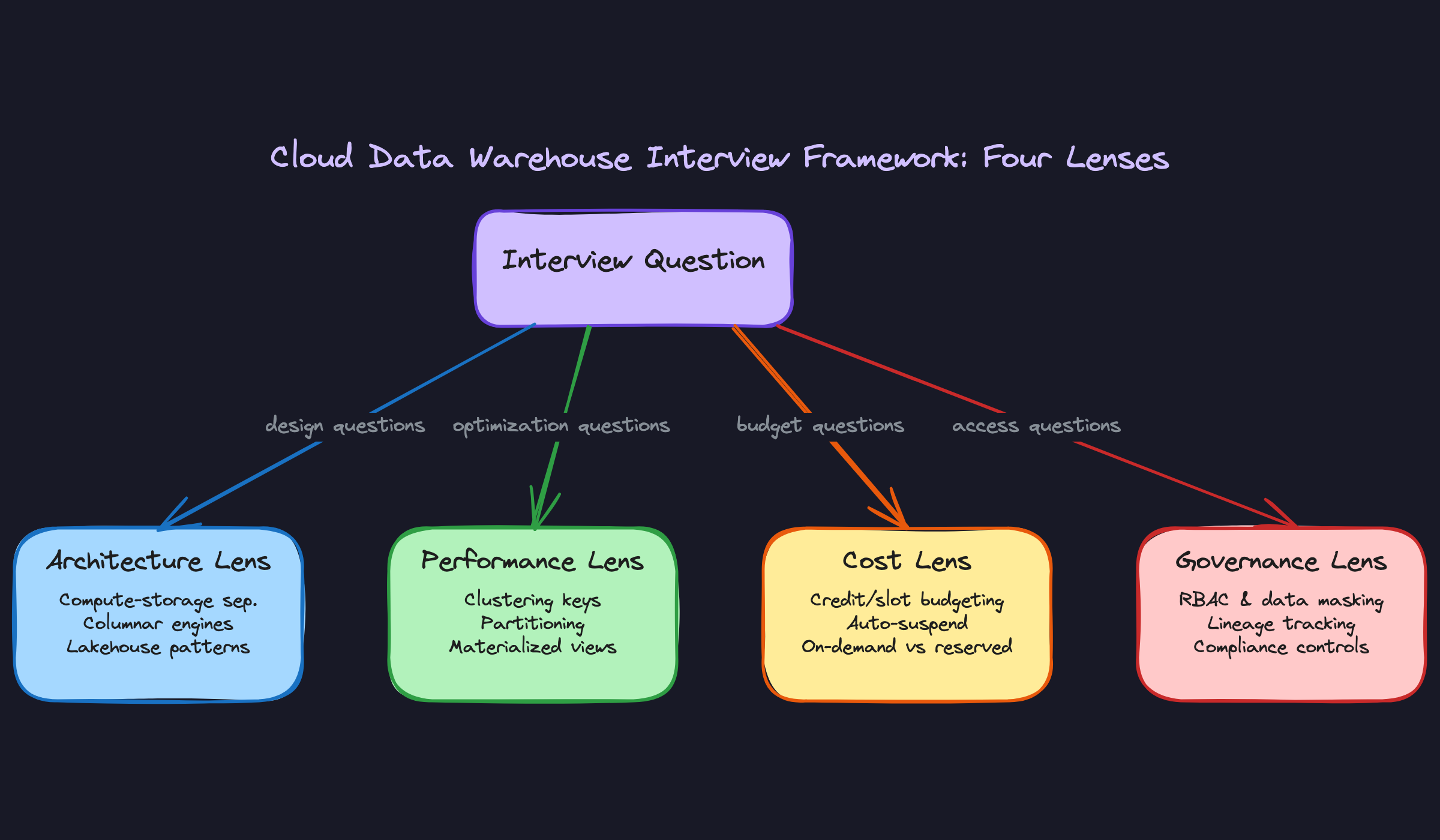

This framework gives you a repeatable structure for any warehouse question you'll face: design, optimization, migration, or cost. It works across Snowflake, BigQuery, Redshift, and Databricks. It's built around four lenses: Architecture, Performance, Cost, and Governance. Most candidates only engage one or two of them. Senior engineers engage all four, even when the question only asks about one.

The Framework

Every cloud data warehouse interview question, no matter how it's phrased, has the same underlying structure. Your job is to recognize which lens applies, anchor your answer in a platform mechanism, then surface the trade-offs. That's it. The four lenses are Architecture, Performance, Cost, and Governance.

Here's the full response structure you should internalize tonight:

| Phase | Time | Goal |

|---|---|---|

| 1. Identify the lens | 30–60 sec | Name the constraint you're optimizing for before proposing anything |

| 2. Anchor in the mechanism | 1–2 min | Connect your answer to a specific platform internal (partitioning, compute model, caching) |

| 3. Propose with trade-offs | 2–3 min | Give a solution AND the reason you'd choose it over the alternative |

| 4. Volunteer the adjacent lens | 30–60 sec | Raise the concern the interviewer hasn't asked about yet |

This four-phase structure is the one thing to take into the room. Everything else is application.

Phase 1: Identify the Lens (and Name Your Constraint)

The single most common mistake candidates make is jumping straight to a solution. Interviewers at Airbnb, Stripe, and Snowflake are explicitly trained to watch for this. A fast answer that skips constraint identification reads as shallow, not confident.

What to do:

- Listen for the question type: "design" maps to Architecture, "slow query" maps to Performance, "our bill is too high" maps to Cost, "who can see this data" maps to Governance.

- Ask one clarifying question to surface the real constraint. Not a vague "can you tell me more?" but a targeted question that names a trade-off axis.

- State the constraint out loud before you say anything else about the solution.

What to say:

- "Before I propose anything, I want to make sure I'm optimizing for the right thing. Is the primary concern here query latency, or is it the cost of running these queries at scale?"

- "Just to anchor us: are we optimizing for freshness, or is a 15-minute lag acceptable if it means lower compute spend?"

- "One thing I want to clarify: is this table queried by a handful of analysts or by a high-concurrency BI tool? That changes the answer significantly."

How the interviewer is evaluating you: They're checking whether you treat constraints as given or as something to surface. Senior engineers don't solve the problem they're handed; they verify it's the right problem first. One good clarifying question does more for your signal than two minutes of correct-but-unconstrained solution.

Do this: After your clarifying question, restate the constraint in your own words before moving on. "Okay, so we're optimizing for query latency on a high-concurrency workload, and cost is secondary. Got it." This shows you listened and gives the interviewer a chance to correct you.

Phase 2: Anchor in the Platform Mechanism

Once you've named the constraint, your next sentence should connect directly to a platform internal. Not "I'd optimize the query" but "Snowflake's micro-partitioning means the planner can prune partitions at scan time, so the first thing I'd check is whether the filter column aligns with how the data was loaded."

What to do:

- Name the specific mechanism that governs the behavior you're discussing. Compute-storage separation, columnar compression, micro-partitioning, slot-based concurrency, result caching: pick the one that's actually relevant to the constraint you just named.

- Explain what that mechanism does in one or two sentences. Don't assume the interviewer wants you to skip it; explaining it briefly shows you understand it, not just that you've heard the term.

- Connect the mechanism to the constraint. "Because Snowflake separates compute from storage, you can scale the virtual warehouse independently of the data volume, which directly addresses the concurrency problem."

What to say:

- "The core mechanism here is BigQuery's slot-based execution model. Every query competes for slots, and if you're on on-demand pricing, you're essentially competing with yourself during peak hours."

- "Redshift's sort keys work differently from Snowflake's clustering keys. With Redshift, sort order is physical and set at load time. With Snowflake, clustering is a background process that reorganizes micro-partitions over time. That distinction matters here because..."

- "The reason this table is slow probably has nothing to do with the query itself. Snowflake stores metadata about the min/max values in each micro-partition, so if your filter column has high cardinality and the data isn't clustered on it, the planner can't prune anything."

How the interviewer is evaluating you: This is where they separate candidates who've read the docs from candidates who've operated these systems. Anyone can say "use a clustering key." The question is whether you can explain the mechanism that makes it work and why it applies here specifically.

Example: "Okay, I think I understand the constraint. Let me anchor this in how Snowflake actually handles this under the hood before I propose a fix..."

Phase 3: Propose with Trade-offs

A single "right answer" is a red flag at senior levels. Interviewers at top companies are explicitly looking for candidates who can hold two valid options and reason between them. If you give one answer without acknowledging the alternative, you've signaled that you haven't thought past the first solution that came to mind.

What to do:

- State your recommended solution clearly. One sentence, no hedging.

- Name the alternative and explain why you're not choosing it in this context. "I'd use a materialized view here rather than a clustering key, because the query pattern is fixed and the table is append-only. If the query pattern were more ad hoc, I'd go the other direction."

- Quantify where you can. Rough numbers are fine and actually preferred over vague claims. "A well-chosen clustering key on a 10TB table can reduce bytes scanned by 60–80% for selective filters. That's the difference between a 30-second query and a 4-second one."

What to say:

- "My recommendation is to define a clustering key on the event_date column. The alternative would be a materialized view, but that only makes sense if the query shape is predictable. Here, analysts are running ad hoc filters, so clustering gives us broader coverage."

- "I'd go with BigQuery's partitioned tables over sharding here. Sharding is a legacy pattern that adds operational overhead without the query pruning benefits you get from native partitioning."

- "The trade-off with auto-clustering in Snowflake is cost. It's a background service that consumes credits. For a table this size, you're probably looking at $50–200/month in clustering overhead. Whether that's worth it depends on how often this table is queried."

How the interviewer is evaluating you: They want to see that your recommendation is conditional, not absolute. The best answer in a Snowflake context is often wrong in a BigQuery context. Showing that you know why your solution works here, and where it would break down, is the signal they're looking for.

Phase 4: Volunteer the Adjacent Lens

This is the move that separates senior candidates from mid-level ones. After you've answered the question you were asked, raise the lens the interviewer hasn't asked about yet.

What to do:

- Identify which lens you haven't touched. If you answered a Performance question, the adjacent lenses are Cost and Governance. Pick the one most relevant to the scenario.

- Introduce it briefly. One or two sentences. You're not pivoting the whole conversation; you're signaling that you think in systems, not in isolated problems.

- Let the interviewer decide whether to follow the thread. Sometimes they will. Sometimes they'll redirect. Either way, you've demonstrated the breadth.

What to say:

- "One thing I'd want to flag before we move on: adding a clustering key has a cost implication. Snowflake's automatic clustering service runs continuously and bills against your credit balance. For a table this size, that's worth budgeting explicitly."

- "On the governance side: if we're materializing this view and it contains PII, we need to make sure the masking policies on the source table carry through. Snowflake doesn't propagate dynamic data masking to materialized views automatically."

- "I'd also think about who owns the compute here. If this is a shared warehouse, a heavy clustering job running in the background will compete with analyst queries. You might want to isolate it on a dedicated warehouse with auto-suspend."

How the interviewer is evaluating you: They're checking whether you think about second-order effects. A performance fix that doubles your Snowflake bill is not a good fix. A governance gap that exposes PII through a materialized view is not a good fix. Raising these unprompted is exactly what a senior engineer does in a real design review, and interviewers know it.

Putting It Into Practice

The best way to see the framework work is to watch it in motion. Let's take one of the most common warehouse interview prompts and run it all the way through.

The prompt: "We have a 10TB Snowflake table that's slow to query. How do you fix it?"

This question is a trap for candidates who jump straight to solutions. The interviewer isn't testing whether you know what a clustering key is. They're testing whether you think before you act.

The Dialogue

Do this: Restating the constraint before proposing a solution is step one of the framework. You're not stalling. You're signaling that you know the fix depends on the workload, not just the table size.

event_date and region.event_date, Snowflake uses partition pruning to skip micro-partitions that don't match. But as the table grows, if the data isn't physically ordered by event_date, those micro-partitions become mixed, and pruning efficiency drops. That's almost certainly what's happening here.You (continuing): The primary fix is a clustering key on event_date, and probably a compound key on (event_date, region) given the filter patterns you described. That reorganizes the micro-partitions so Snowflake can skip far more of them at query time. For a 3x growth scenario, you'd typically see pruning efficiency go from something like 20-30% of partitions scanned down to under 5%, which maps pretty directly to that 5-second query time you mentioned.

Do this: The interviewer just pivoted to scale. Don't panic. This is an invitation to show you can hold two valid options and reason between them.

You (continuing): First, is the clustering key still the right shape? If query patterns have diversified, a compound key on (event_date, region) might not be pruning as efficiently as it should. Second, I'd look at whether search optimization service makes sense for the high-cardinality point lookups, or whether we should be thinking about a materialized view for the specific dashboard queries that are most latency-sensitive. Materialized views in Snowflake are precomputed and automatically maintained, so the dashboard queries hit cached results rather than scanning the base table at all.

event_date order (which is common for event streams), reclustering is cheap because the table is already mostly sorted. If you're backfilling historical data or doing lots of updates, reclustering is expensive because it has to do more work. That's why I'd always check the SYSTEM$CLUSTERING_INFORMATION function before committing to a clustering key. It tells you the current clustering depth and overlap, so you can see whether the table actually needs reclustering or whether the problem is something else entirely.Do this: Volunteering a specific diagnostic tool (and the cost nuance around write patterns) is exactly how you signal that you've operated this in production, not just read about it.

DATE or TIMESTAMP field, and clustering within those partitions on up to four columns. So for the same workload, I'd partition the table on event_date and cluster on region. The key difference is that BigQuery clustering is free to maintain. There's no background reclustering cost. The trade-off you're managing instead is slot consumption: BigQuery's on-demand pricing charges per byte scanned, so the goal is the same (reduce bytes scanned) but the cost model is per-query, not per-compute-hour. That changes how you think about materialized views too. In BigQuery, materialized views are billed when they refresh, not when they're queried, so the math is different.The 4-Step Template

Internalize this. It works for any warehouse question.

- Restate the constraint. Name what you're optimizing for before proposing anything. Latency? Cost? Concurrency? Freshness? Say it out loud.

- Anchor in the platform mechanism. Explain the internal behavior that makes the problem exist. Micro-partitions, columnar pruning, slot contention, result cache invalidation. Whatever it is, name it and explain it briefly.

- Propose the solution with trade-offs. Never give one answer. Give the primary recommendation and the condition under which you'd choose something different instead.

- Quantify where you can. "Pruning efficiency from 30% to under 5%" is more convincing than "it'll be faster." Even rough numbers signal production experience.

Signaling Seniority Without Being Asked

The moment in the dialogue where the cost question came up wasn't random. A senior candidate volunteers that lens before the interviewer asks. After proposing the clustering key, you could add: "One thing I'd flag proactively is the reclustering cost. For this table size it's probably fine, but I'd want to monitor it." That one sentence tells the interviewer you think about budget as a first-class constraint, not an afterthought.

Same principle applies to governance. If you're designing a table that holds PII, mention column-level masking policies before you're asked. If you're proposing a materialized view for a dashboard, mention who has access to the underlying table and whether the view needs different permissions. These aren't bonus points. At senior levels, they're expected.

Handling Platform-Agnostic Questions

Sometimes the interviewer won't name a platform. "How would you optimize a slow query against a large fact table?" is a real question you'll get.

Don't pick a platform arbitrarily. Ask: "Are we working within a specific platform, or should I walk through the general principles and then show how they apply differently across Snowflake, BigQuery, and Redshift?" That question itself signals maturity. Then, when you answer, lead with the mechanism that's universal (columnar pruning, partition elimination, precomputation) and layer in the platform-specific implementation details. The framework works the same way. The vocabulary just shifts.

Don't do this: Saying "it depends on the platform" and stopping there. That's not an answer. Name the dependency, explain what it changes, and give the answer for at least two platforms. Vagueness reads as uncertainty, not nuance.

Common Mistakes

Most candidates don't fail because they don't know the material. They fail because of how they present it. These patterns show up constantly, and interviewers at Airbnb, Stripe, and Snowflake know exactly what they signal.

Jumping Straight to a Solution

You hear "our Snowflake queries are slow" and immediately say "I'd add a clustering key on the timestamp column." The interviewer nods politely and marks you down.

Skipping the constraint diagnosis tells the interviewer you're pattern-matching, not problem-solving. A clustering key might be exactly right, or it might be completely irrelevant if the bottleneck is warehouse size, spill to disk, or a missing materialized view. They can't tell which you know, because you didn't show your reasoning.

Don't do this: "I'd cluster on the date column and that should fix the scan performance."

Do this: "Before I propose anything, can you tell me what the query pattern looks like and whether we're seeing full table scans or something else? The fix depends on whether this is a pruning problem or a compute problem."

Name the constraint first. Always. It takes ten seconds and it's the single clearest signal that you've operated these systems in production.

Reciting All Four Lenses When Two Would Do

The framework exists to help you think, not to give you a script. If the interviewer asks a narrow question about query pruning in BigQuery, walking them through architecture, performance, cost, and governance in sequence is not thorough. It's exhausting.

Interviewers penalize this because it signals you memorized a template instead of developing judgment. Senior engineers know which lens matters for which problem. That selectivity is the skill being tested.

Don't do this: Spending three minutes on governance when the question is about a slow partition scan.

Fix it by asking yourself, before you answer, which one or two lenses are actually load-bearing for this specific question. Cover those well. Mention the others only if they're genuinely relevant.

Platform Name-Dropping Without the Mechanism

"I'd use Snowflake's micro-partitioning for this." Great. What does that mean? How does it work? Why does it help here specifically?

If you can't answer those follow-ups, the interviewer already knows you can't. They've heard this exact sentence from dozens of candidates who read a blog post and stopped there. Name-dropping without mechanism reads as credential stuffing, and it invites the exact follow-up questions you're least prepared for.

event_date can skip 90% of partitions without a full scan" is a real answer. "I'd use micro-partitioning" is not.When you name a platform feature, follow it immediately with what it does and why it applies. No exceptions.

Treating Cost as Someone Else's Problem

You design a beautiful clustering strategy, propose a materialized view refresh schedule, and recommend scaling up the warehouse. The interviewer asks "what does that cost?" and you say "I'd need to check the pricing page."

That's a red flag at the senior level. Every architectural decision in a cloud warehouse is also a spend decision. Clustering keys trigger automatic reclustering credits. Materialized views consume storage and compute on refresh. A larger warehouse doubles your credit burn rate. Engineers who've actually run these systems in production know this because they've seen the bill.

Don't do this: Proposing solutions without any sense of their cost profile, then treating cost as a detail to figure out later.

You don't need exact numbers. You need to demonstrate awareness: "clustering will help pruning significantly, but it does trigger reclustering compute, so I'd want to confirm the query frequency justifies that ongoing credit spend before committing."

Giving One Right Answer Instead of a Trade-off

"The best approach here is to use a materialized view." Full stop.

Interviewers at top companies are explicitly not looking for the right answer. They're looking for candidates who can hold two valid options, articulate the conditions under which each wins, and make a reasoned recommendation given the specific constraints. A single confident answer with no alternatives signals that you haven't thought about the edges, or that you've only ever solved this problem one way.

Do this: "There are two reasonable paths here. A materialized view gives you fast reads but adds refresh latency and storage cost. A clustering key is cheaper to maintain but only helps if queries consistently filter on that column. Given that you mentioned freshness is critical, I'd lean toward clustering first and revisit materialized views if read latency becomes the bottleneck."

That's what senior thinking sounds like. Two options, explicit conditions, a recommendation with a rationale. Not a verdict.

Quick Reference

Interview Question Type → Lens → Mechanism → Trade-off

| Question Type | Primary Lens | Anchor Mechanism | Trade-off to Surface |

|---|---|---|---|

| "Design a warehouse for X" | Architecture | Compute-storage separation | Flexibility vs operational complexity |

| "This query is slow" | Performance | Clustering keys / micro-partitioning | Pruning efficiency vs write amplification |

| "We're overspending on compute" | Cost | Auto-suspend / credit budgeting | Idle savings vs cold-start latency |

| "Who can access this data?" | Governance | RBAC / column masking | Security granularity vs query performance |

| "Should we migrate to platform X?" | Architecture + Cost | Storage format, compute model | Vendor lock-in vs feature fit |

| "How do we handle concurrent users?" | Performance + Cost | Warehouse scaling / slot limits | Throughput vs credit burn |

Core Internals: One-Line Definitions

Know these cold. If you stumble on any of them, the interviewer notices.

- Micro-partitioning: Snowflake's automatic 50-500MB immutable storage units, each with min/max metadata that enables partition pruning without manual intervention.

- Columnar compression: Storing data column-by-column so analytics queries read only the columns they need, dramatically reducing I/O.

- Compute-storage separation: Compute and storage scale independently; multiple virtual warehouses can query the same data simultaneously without storage contention.

- Result cache: A cached copy of a query's output, served instantly on re-execution if the underlying data hasn't changed (Snowflake holds this for 24 hours).

- Materialized views: Pre-computed query results stored as a physical table, refreshed on a schedule or on data change, trading storage cost for query speed.

- Clustering keys: User-defined columns that control how data is physically co-located on disk, improving pruning on high-cardinality filter columns.

- Slots/Credits: The compute billing unit. BigQuery uses slots (query capacity), Snowflake uses credits (virtual warehouse uptime). Both reward efficient query writing.

The 4-Step Answer Template

Internalize this. Apply it to every warehouse question, in order.

- Name the constraint. "The primary constraint here is query latency for concurrent analysts, not storage cost."

- Anchor in the mechanism. "Snowflake handles this through micro-partitioning and automatic clustering, which means..."

- Propose with trade-offs. "I'd add a clustering key on

event_date, which improves pruning but adds write overhead on ingestion." - Quantify or flag the next decision. "That likely cuts scan volume by 60-80% for date-range queries. The follow-up question is whether ingestion latency is acceptable."

Platform Comparison

| Platform | Compute Model | Storage Format | Cost Unit | Key Differentiator |

|---|---|---|---|---|

| Snowflake | Virtual warehouses (per-second billing) | Proprietary columnar (FDN) | Credits | Multi-cluster auto-scaling; time travel |

| BigQuery | Serverless (on-demand or reserved) | Capacitor (columnar) | Slots / bytes scanned | Zero infrastructure; BI Engine for sub-second |

| Redshift | Node-based clusters or Serverless | Columnar + zone maps | Node-hours / RPU | Tight AWS ecosystem; Spectrum for S3 queries |

| Databricks | Clusters / SQL Warehouses | Delta Lake (Parquet + transaction log) | DBUs | Lakehouse unification; open format |

Phrases That Signal Senior Thinking

Use these at the right moment and you'll stand out.

- "Before I propose a solution, I want to name the constraint I'm optimizing for..."

- "That would improve query performance, but the cost implication is worth flagging: clustering keys increase write credits on every load."

- "This depends on the access pattern. If analysts are always filtering by date, clustering wins. If they're doing full scans for ML feature generation, it won't help much."

- "I'd want to check the query profile first before assuming it's a partitioning problem. It could be a spill-to-disk issue from an oversized join."

- "The trade-off between on-demand and reserved slots only makes sense once you know your utilization baseline. Below 70%, on-demand is usually cheaper."

Red Flags to Avoid

- Naming a platform feature without explaining what it actually does in this scenario.

- Proposing a solution before you've stated what you're optimizing for.

- Treating governance as an afterthought you mention only if asked.

- Giving one "correct" answer when the interviewer is waiting to hear you weigh two valid options.

- Skipping cost entirely on a design or performance question.