Join ML Engineer Interview MasterClass (June Cohort) led by FAANG Data Scientists | Just 6 seats remaining...

ML Engineer MasterClass (June) | 6 seats left

Dimensional Modeling

Dimensional Modeling

Your transactional database was designed to write fast, not read fast. Every time an analyst runs "total revenue by product category by month" against a normalized 3NF schema, the database is grinding through six joins across millions of rows, reassembling data that was deliberately split apart to prevent update anomalies. At Airbnb's scale, that query doesn't just run slow. It doesn't finish.

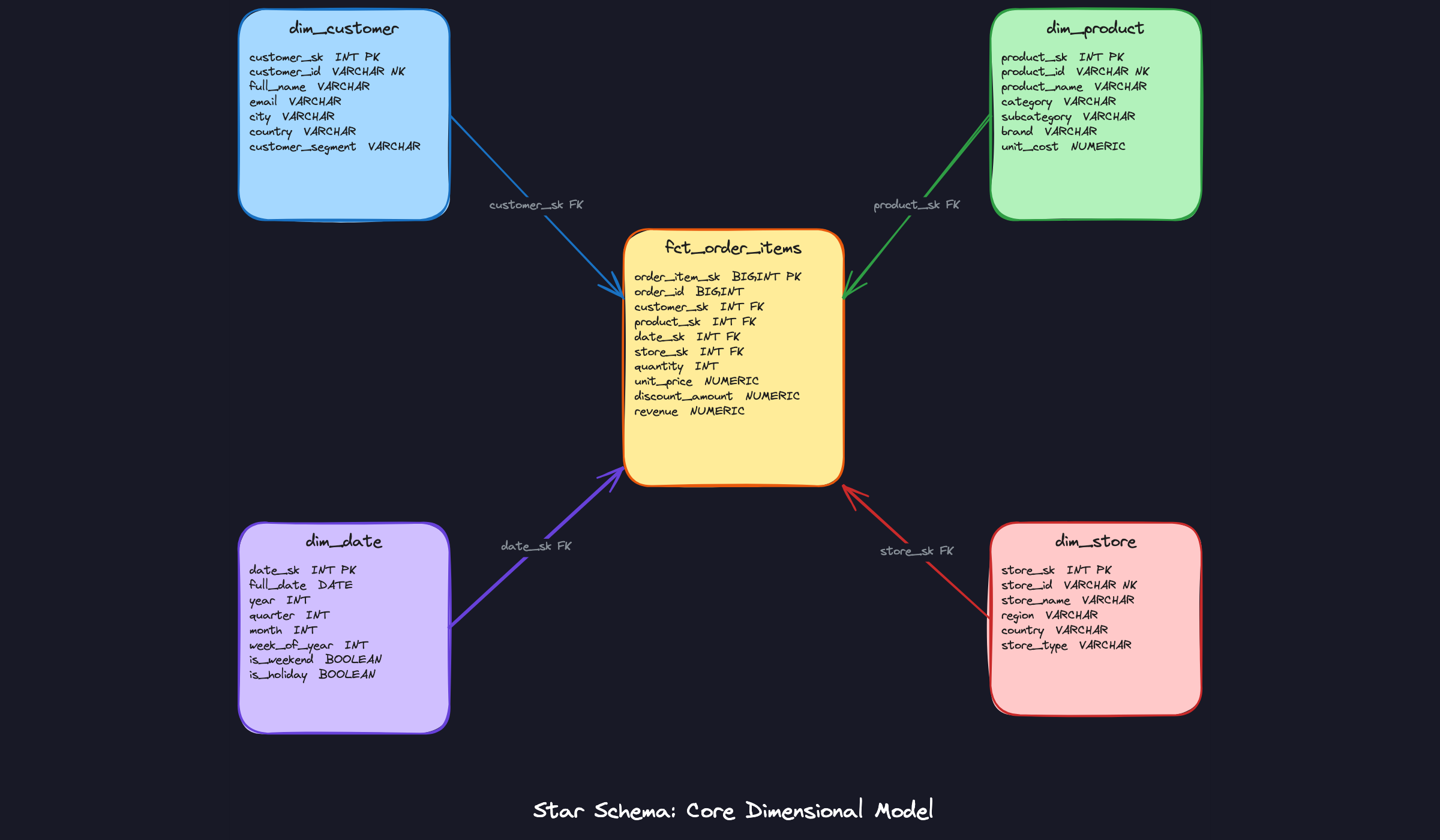

Dimensional modeling is the deliberate restructuring of that data for analytical reads. Instead of normalizing everything into its smallest pieces, you organize data around two types of tables: facts and dimensions. Fact tables capture what happened, things like orders placed, payments processed, or rides completed, expressed as numeric measures. Dimension tables capture the context around those events: who the customer was, what the product was, where the store was, when it happened.

The mental model that makes everything click: a fact table is tall and narrow, millions of rows of foreign keys and numbers. A dimension table is wide and short, thousands of rows packed with descriptive attributes. A query that would take 12 joins on your OLTP schema becomes 4 clean joins on a dimensional model, and BI tools like Looker, Tableau, and dbt can generate that SQL automatically. That's why data engineer and analytics engineer roles at Stripe, Shopify, and Meta expect you to design one from scratch, defend your normalization choices, and explain exactly how your schema holds up at scale.

How It Works

Start with a concrete scenario. Your company runs an e-commerce platform. Every day, analysts need to answer questions like "which product categories drove the most revenue last quarter in the Southeast region?" On a normalized OLTP schema, that query joins orders to order_items to products to categories to customers to addresses. It's slow, it's fragile, and it makes your BI tool cry.

Dimensional modeling flips the design goal. Instead of optimizing for write correctness, you optimize for read simplicity. You pick one central event you care about measuring, and you surround it with all the context needed to slice and dice it.

Declare Your Grain First

Before you touch a single table, you need to answer one question: what does one row represent?

This is called the grain declaration, and it's the most important decision in the entire model. Get it wrong and every aggregate downstream is wrong too. "One row per order" and "one row per order line item" sound similar, but they produce completely different schemas. At the order grain, you store one revenue figure per order. At the line-item grain, you store one row per product within each order, which means a single order with three products becomes three rows.

The line-item grain is almost always more useful. It lets you answer both "total revenue per order" and "revenue by product category" from the same table. The order grain can't answer the second question at all.

The Star Schema Structure

Once you've declared the grain, the schema almost designs itself. You have one central fact table surrounded by dimension tables, one per axis of analysis. That hub-and-spoke shape is why it's called a star schema.

Think of the fact table as a receipt. It records that something happened, with numbers attached. The dimension tables are the reference cards that explain who, what, where, and when.

Here's what that looks like for an e-commerce order line item:

The fact table, fct_order_items, sits in the center. Each row is one line item on one order. It holds foreign keys pointing out to dim_customer, dim_product, dim_date, and dim_store. The actual descriptive attributes, like the customer's city or the product's category, live entirely in those dimension tables. The fact table stays narrow: mostly foreign keys and numeric measures.

Inside the Fact Table

The numeric columns in a fact table aren't all equal, and interviewers will probe this distinction.

Additive measures can be summed across any dimension. Revenue and quantity are the classic examples. You can sum revenue across customers, across dates, across products, and the result always makes sense.

Semi-additive measures can be summed across some dimensions but not time. Account balance is the textbook case. Summing balances across all customers at a single point in time is valid. Summing a single customer's balance across twelve months gives you a meaningless number. When you see a balance or inventory count in a fact table, your aggregation logic needs to use snapshots, not sums over time.

Non-additive measures should never be summed at all. Ratios and percentages fall here. If your fact table stores a conversion_rate column, you cannot sum it across rows to get a total conversion rate. You always recompute it from its components: divide total conversions by total sessions after aggregating those separately.

Inside the Dimension Table

Dimension tables are wide and short. A dim_customer table might have 50 columns and a few million rows. A dim_product table might have 30 columns and a few hundred thousand rows. Width is fine here because columnar warehouses read only the columns a query touches.

Two things matter most about dimension table design.

First, use a surrogate key as the primary key, not the natural business key. The natural key is whatever the source system uses, like a customer_id string from your CRM. The surrogate key is a warehouse-generated integer. You need this separation because later, when you implement slowly changing dimensions, you'll have multiple rows for the same customer with different surrogate keys. If you used the natural key as your PK, that falls apart immediately.

Second, denormalize your hierarchies. Product category and subcategory both live directly in dim_product as flat columns, not in separate dim_category and dim_subcategory tables. Yes, this violates third normal form. That's intentional. The extra join to resolve a hierarchy adds latency and complexity with almost no benefit in a columnar warehouse where storage is cheap. When your interviewer asks why you didn't normalize the product hierarchy, the answer is: "I'm optimizing for query simplicity and read performance. The storage cost of repeating category names across product rows is negligible."

What the Query Looks Like

The payoff of all this design work is a query pattern that's almost embarrassingly clean. To get revenue by product category and customer region for Q4, you join fct_order_items to dim_product on product_sk, join to dim_customer on customer_sk, join to dim_date on date_sk, filter on quarter and year, then group by category and region. Four tables, four joins on integer surrogate keys, one GROUP BY. BI tools like Looker and Tableau generate this pattern automatically when you model it correctly.

Compare that to the equivalent query on a normalized OLTP schema, which might require six or seven joins through junction tables and lookup tables before you even get to the GROUP BY. The star schema wins on readability, on performance, and on how easy it is for a non-engineer analyst to understand what's happening.

Patterns You Need to Know

In an interview, you'll usually need to pick a specific approach. Here are the ones worth knowing.

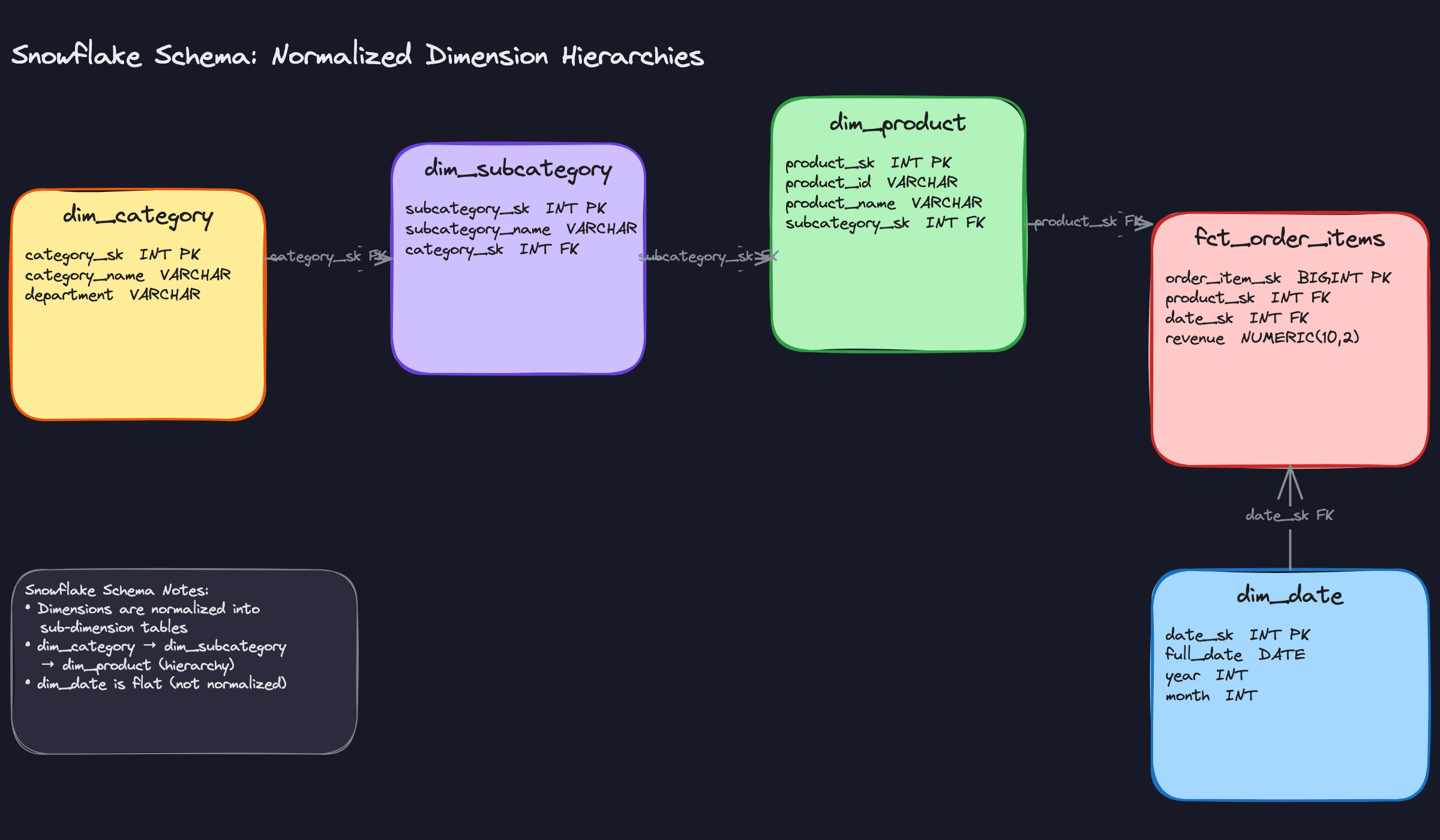

Snowflake Schema

The star schema you saw in the previous section keeps all product attributes, including category and subcategory, flat inside dim_product. A snowflake schema takes that hierarchy and breaks it out: dim_product gets a foreign key to dim_subcategory, which in turn points to dim_category. The dimension is now normalized.

The honest truth is that most modern cloud warehouses don't benefit much from this. Snowflake, BigQuery, and Redshift are columnar engines where storage is cheap and wide tables scan efficiently. Normalizing your dimensions adds joins, complicates your dbt models, and can confuse BI tools that expect a flat dimension. The cases where snowflake schema genuinely earns its place are narrow: a dimension table that's genuinely enormous (hundreds of millions of rows), a team with strict governance requirements around shared dimension hierarchies, or a legacy on-prem warehouse where storage costs actually matter.

When your interviewer asks why you didn't normalize the product hierarchy, the right answer isn't "I forgot." It's "I kept it flat because the query engine handles wide tables efficiently and the simpler join pattern reduces complexity for analysts. I'd normalize only if the dimension table size or governance requirements justified the trade-off."

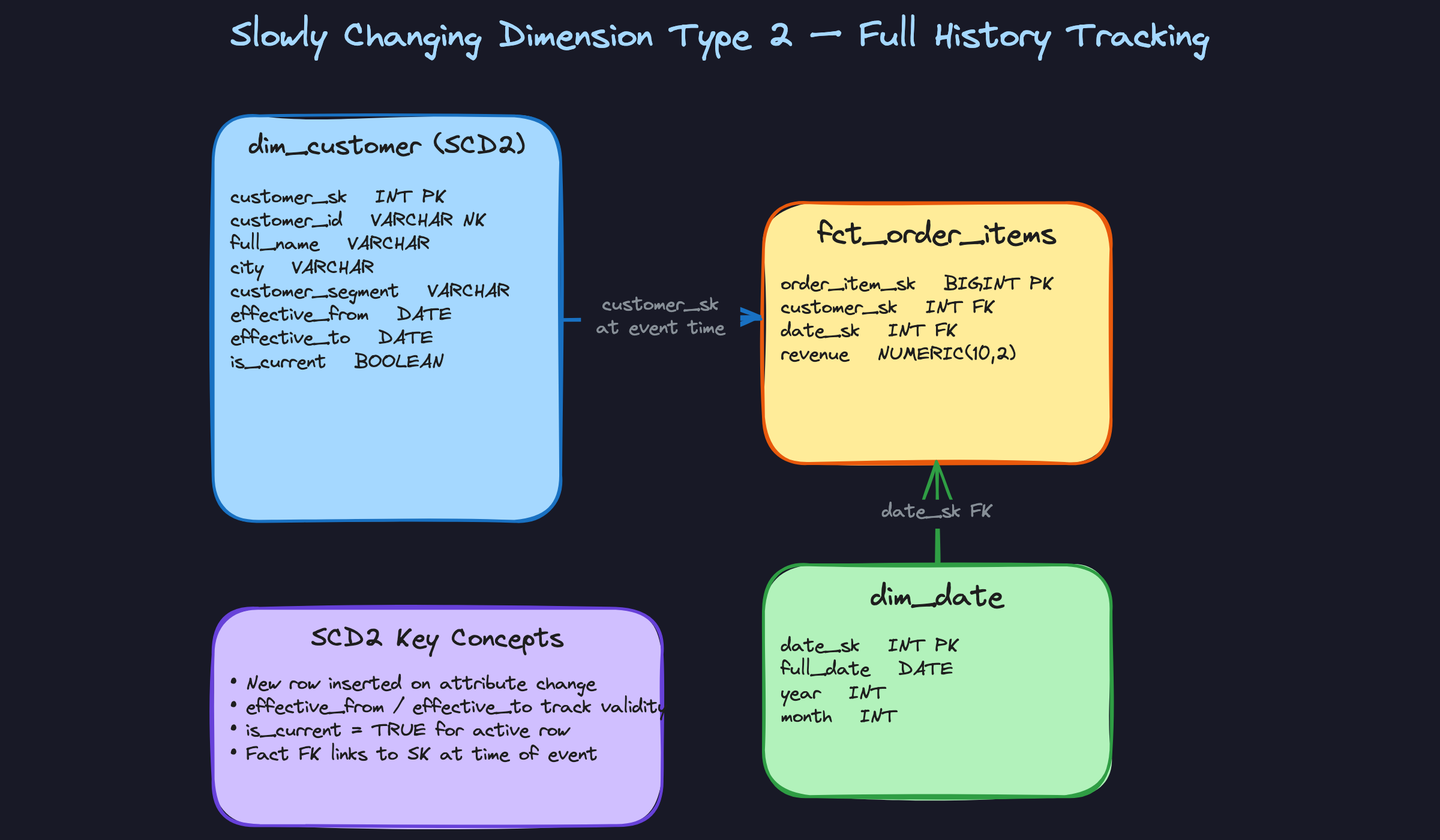

Slowly Changing Dimensions (SCDs)

This is the one interviewers love to probe. The question is simple: a customer moves from New York to London. What do you do with your dim_customer table?

Type 1 is the simplest answer: overwrite the old value. The customer's city is now London, full stop. No history preserved. Use this when historical accuracy doesn't matter for the attribute, like fixing a typo in a product name.

Type 2 is what you'll reach for in almost every real scenario. Instead of overwriting, you insert a new row with the updated attributes and stamp it with effective_from, effective_to, and is_current columns. The old row stays, its effective_to gets set to yesterday, and is_current flips to false. The fact table's foreign key points to the surrogate key that was active at the time of the event, so historical revenue reports always reflect where the customer actually lived when they placed the order. This is the pattern interviewers expect you to know cold.

Type 3 adds a previous_city column alongside current_city. It's a compromise: you get one step of history without the row proliferation of Type 2. In practice it's brittle (what if the customer moves three times?) and rarely used outside of very specific reporting requirements.

is_current = true rather than the surrogate key.

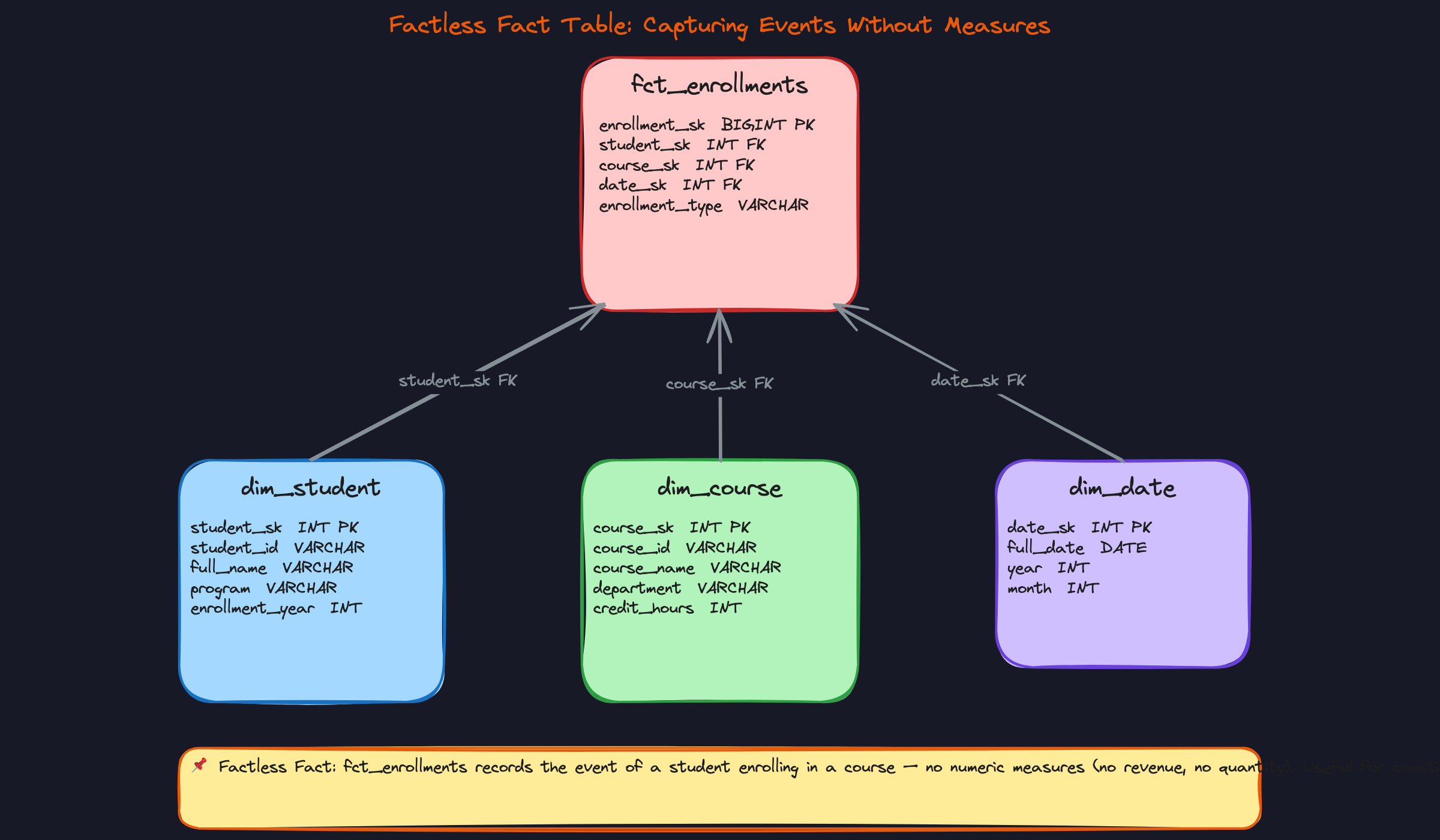

Factless Fact Tables

Most fact tables have obvious numeric measures: revenue, quantity, duration. But some of the most important business events carry no natural number at all. A student enrolling in a course. A user viewing a product page. A promotion being made available to a customer segment.

The factless fact table captures the event itself. The "measure" is just COUNT(*). Your fct_enrollments table has foreign keys to dim_student, dim_course, and dim_date, but no revenue column, no quantity column. To answer "how many students enrolled in each course per month," you group by dim_course.course_name and dim_date.month and count rows. That's it.

When to reach for this: any time you're modeling coverage, attendance, eligibility, or activity events where what matters is whether something happened, not a quantity associated with it.

Degenerate Dimensions and Junk Dimensions

These two patterns solve different problems, but both exist to keep your fact table clean.

A degenerate dimension is a transaction identifier, like an order number or invoice ID, that you store directly on the fact table with no corresponding dimension table. There's no useful descriptive attribute to hang off it; it's just a reference code analysts use to look up individual transactions. Pulling it into its own dimension table would add a join with no analytical benefit. It lives on the fact row, labeled clearly, and that's the right call.

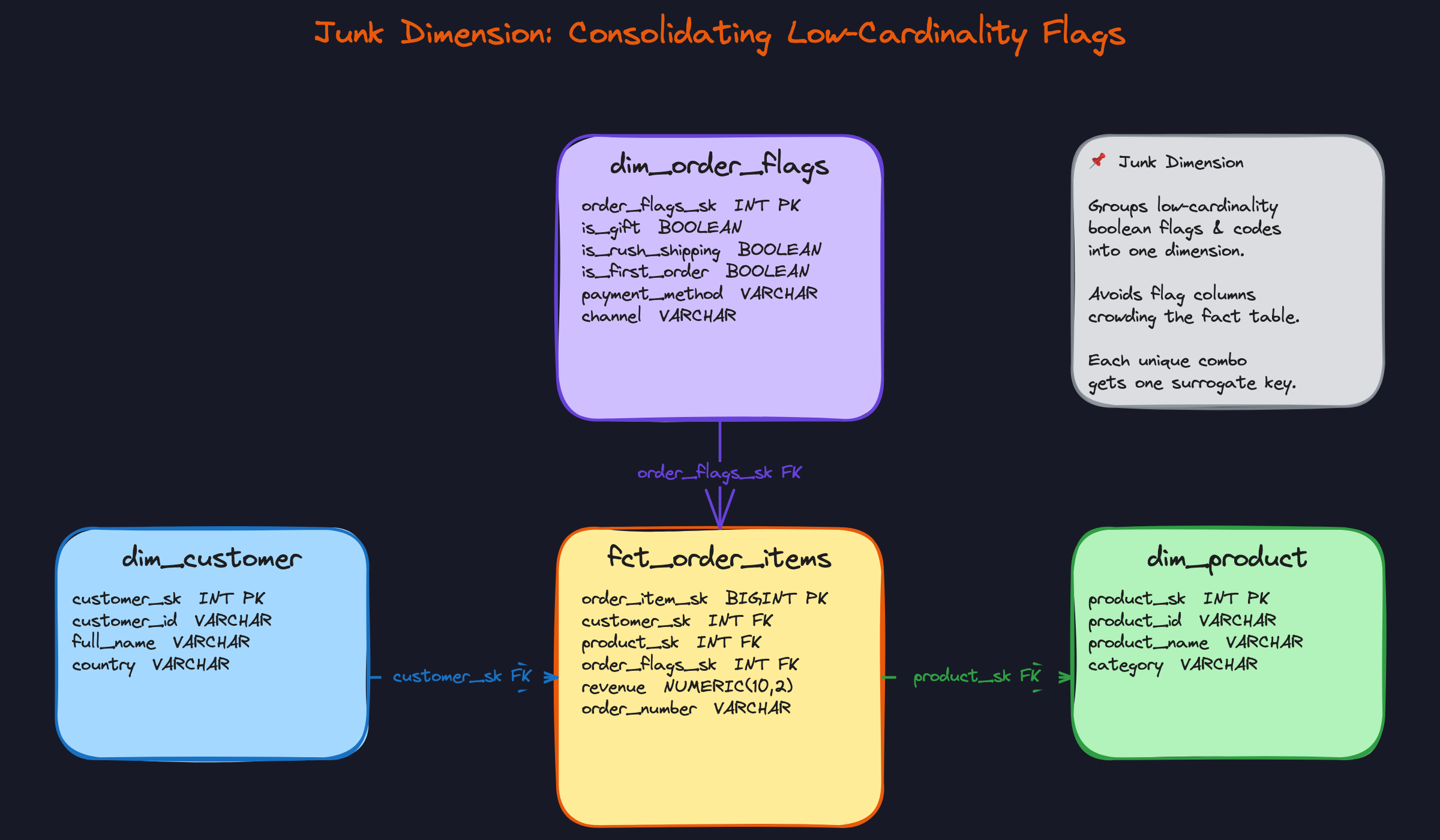

A junk dimension handles a different mess: a cluster of low-cardinality boolean flags and categorical codes that don't belong in any real dimension. is_gift, is_rush_shipping, is_first_order, payment_method, channel. If you leave these on the fact table, you end up with a wide, cluttered row full of flags that are annoying to filter and impossible to govern. Instead, you precompute every combination of those flags into a dim_order_flags table, assign each combination a surrogate key, and store just that key on the fact row. The fact table stays narrow; the flags stay queryable.

Comparing the Patterns

| Pattern | Core idea | Best fit | Watch out for |

|---|---|---|---|

| Snowflake Schema | Normalize dimension hierarchies into sub-tables | Large dimensions, strict governance, on-prem storage constraints | Extra joins slow queries; complicates BI tools and dbt models |

| SCD Type 2 | New row per attribute change with effective dates | Any dimension where history matters (customer, product) | Row proliferation; queries need is_current or date-range filters |

| Factless Fact Table | Capture events with no numeric measure | Enrollment, coverage, eligibility, page views | Easy to confuse with a modeling error; COUNT(*) is the measure |

| Junk Dimension | Bundle low-cardinality flags into one dimension | Order flags, channel codes, boolean attributes | Combination explosion if cardinality is higher than expected |

For most interview problems, you'll default to a star schema with SCD Type 2 on any dimension that changes over time. Reach for a factless fact table when the business question is about whether an event occurred, not how much of something happened. Junk dimensions are worth mentioning proactively when you notice several boolean or low-cardinality columns accumulating on your fact table; naming the pattern shows you've thought about schema hygiene, not just correctness.

What Trips People Up

Here's where candidates lose points — and it's almost always one of these.

The Mistake: Skipping Grain Declaration

The bad answer sounds like this: "I'll create a fact table for orders with columns for order total, shipping cost, and each line item's revenue." The interviewer watches you start sketching columns and immediately knows you haven't thought about grain.

What actually happens when you mix order-level and line-item-level data in one table: you either repeat the shipping cost on every line item row (now your SUM is wrong by a factor of N), or you collapse line items into aggregates and lose the detail you need for product-level reporting. Neither is recoverable without redesigning the whole table.

Before you write a single column, say out loud: "The grain of this fact table is one row per order line item. Order-level attributes like shipping cost will live in a separate order-level fact or be handled as a header fact." That one sentence signals more seniority than anything else you'll say in the interview.

The Mistake: Using Natural Keys as Surrogate Keys

You'll hear this as: "I'll join the fact table to dim_customer on customer_id." Sounds reasonable. It's wrong.

The moment you implement SCD Type 2, you have multiple rows in dim_customer for the same customer_id — one for when the customer lived in Austin, one for after they moved to Berlin. If your fact table stores customer_id (the natural key), you can't distinguish which version of the customer placed that order. The join becomes ambiguous, or you pull back multiple rows and silently double-count revenue.

Surrogate keys exist precisely to solve this. The fact table stores customer_sk, an integer generated by your warehouse pipeline. Each version of a customer gets a different customer_sk. The historical fact row points to the right one, permanently. There's also a real performance argument: joining on a 4-byte integer across a billion-row fact table is meaningfully faster than joining on a variable-length string.

The Mistake: Treating All Measures as Additive

This one gets candidates in analytics engineering interviews specifically. The bad answer: "I'll SUM account_balance across all months to get total balance over the year."

That number is meaningless. Account balance is semi-additive. You can SUM it across customers on a single date (total deposits held on March 1st), but summing across twelve monthly snapshots gives you a number that represents nothing in the real world. Interviewers at fintech companies like Stripe or Bloomberg will catch this immediately.

Non-additive measures are even trickier. conversion_rate is a ratio. If Region A converts at 4% and Region B converts at 6%, the combined rate is not 10% and it's not 5%. You have to go back to the underlying counts (sessions and conversions) and recompute. Never store a ratio as a measure you intend to aggregate.

When you define measures in an interview, classify them explicitly. Additive (revenue, quantity): SUM freely. Semi-additive (balances, headcount): SUM across some dimensions, use LAST_VALUE or point-in-time snapshots across time. Non-additive (rates, percentages): store the components, compute the ratio in the query layer.

The Mistake: Reflexively Snowflaking Everything

Engineers coming from backend or application development backgrounds do this constantly. The instinct is sound in OLTP: normalize to avoid anomalies, reduce redundancy, keep tables clean. In a dimensional model, it creates problems without solving any.

Say you split dim_product into dim_product, dim_subcategory, and dim_category. Now every query that filters by category requires two extra joins. In Snowflake, BigQuery, or Redshift, those joins aren't free. The columnar engine is optimized for scanning wide tables, not resolving deep join chains. Your dbt models get harder to read, harder to test, and slower to run. And the storage you saved is negligible when you're paying for compute by the second.

The star schema's denormalization is intentional. Category and subcategory live in dim_product because that's where analysts expect to find them, and because the warehouse handles wide scans efficiently. If an interviewer pushes back and asks "isn't that redundant?", the right answer is: "Yes, deliberately. The redundancy buys query simplicity and performance, and in a columnar warehouse the storage cost is trivial."

The Mistake: No Plan for Late-Arriving Data

Most candidates design the happy path. The interviewer asks: "What happens if an order arrives in your pipeline before the customer record has been loaded into dim_customer?" Silence. Or worse: "I'd just wait and reprocess it."

In production pipelines, late-arriving dimensions are routine. A new customer signs up and places an order in the same batch window. The ETL loads the fact before the dimension. Now you have a foreign key with no matching row.

The standard answer is a sentinel row: customer_sk = -1 with values like 'Unknown' for all attributes. Late-arriving facts get assigned customer_sk = -1 at load time. When the dimension row eventually arrives, you run a backfill job that updates the fact table to point to the correct surrogate key. Some teams skip the backfill and accept that a small percentage of historical rows will always show "Unknown" — that's a trade-off worth naming explicitly.

Interviewers at Airbnb and Stripe probe this because it's the difference between a schema that works in a notebook and one that survives a real pipeline.

How to Talk About This in Your Interview

When to Bring It Up

Dimensional modeling isn't always the explicit topic. Sometimes the interviewer frames it differently, and you need to recognize the signal.

When you hear any of these, this is your moment to go there:

- "Design an analytics schema for our e-commerce platform" (go straight to grain declaration)

- "Our analysts are complaining that reports are slow" (star schema vs normalized OLTP)

- "How would you track changes to customer data over time?" (SCD Type 2)

- "We need to report revenue by customer segment, region, and product category" (dimension table design)

- "How do you handle historical reporting?" (SCD + surrogate key strategy)

If the interviewer mentions Looker, Tableau, dbt, or any BI tool, that's an implicit signal they want dimensional modeling, even if they don't use the term.

Sample Dialogue

This first exchange shows what grain declaration looks like in practice. Most candidates skip it. Don't.

fct_order_items has one row per order line item. Each row carries foreign keys to dim_customer, dim_product, dim_date, and dim_store, plus additive measures like quantity, unit_price, and revenue. I'd keep order_number as a degenerate dimension directly on the fact table since it's a transaction identifier with no attributes worth breaking out."dim_customer with the updated city and new effective dates. The old row gets effective_to set to yesterday and is_current = false. The new row gets effective_from set to today and is_current = true. The fact table's foreign key points to the surrogate key that was current at the time of purchase, so historical revenue always reflects where the customer was when they bought, not where they are now."dim_customer on customer_id where is_current = true, rather than joining on the surrogate key. You get current-state reporting. Both queries are valid; it just depends on the business question. That's actually why SCD Type 2 is so powerful: you can answer both point-in-time and current-state questions from the same dimension table."Follow-Up Questions to Expect

"Why not just use a snowflake schema and normalize your dimensions?" On a modern columnar warehouse like BigQuery or Snowflake, wide denormalized dimensions are cheap to store and fast to scan; the extra joins in a snowflake schema slow queries and complicate your dbt models without meaningful benefit.

"How do you handle a fact row arriving before the dimension record exists?" You insert a default "unknown" dimension row with a surrogate key of -1, point the fact row at it, and reprocess when the dimension record arrives; this keeps your pipeline running without dropping data.

"What's the difference between additive and semi-additive measures, and why does it matter?" Additive measures like revenue can be summed across any dimension; semi-additive measures like account balance can be summed across customers at a point in time but not across time periods, so you use LAST_VALUE or snapshot logic instead of SUM.

"How would you partition or index this fact table?" In a columnar warehouse, partition by date_sk and cluster on your most common filter dimension like customer_id or product_id; in Redshift, set the sort key to date_sk and the distribution key to the dimension you join most frequently.

What Separates Good from Great

- A mid-level candidate designs the tables and gets the foreign keys right. A senior candidate declares the grain out loud before touching the schema, and explains why the wrong grain produces wrong query results, not just a messy schema.

- A mid-level candidate knows SCD Type 2 exists. A senior candidate explains the surrogate key mechanism, handles the "current vs historical" reporting question without prompting, and mentions what happens to in-flight ETL when a dimension row changes mid-day.

- A mid-level candidate says "I'd use a star schema because it's simpler." A senior candidate ties the choice to the query engine, the BI tooling, and the team's dbt workflow, and names the specific condition under which they'd reconsider.