Join ML Engineer Interview MasterClass (June Cohort) led by FAANG Data Scientists | Just 6 seats remaining...

ML Engineer MasterClass (June) | 6 seats left

Normalization & Denormalization

Normalization & Denormalization

Most candidates can recite the normal forms. Third normal form, no transitive dependencies, Boyce-Codd, sure. But interviewers at Airbnb, Stripe, and Meta aren't testing your ability to recite definitions. They're testing whether you know when to break the rules on purpose.

Normalization is the practice of organizing a schema to eliminate redundant data. You store each fact exactly once, and everything else points to it. Denormalization goes the other direction: you deliberately duplicate data, flatten tables, and pre-compute results so your queries don't have to work as hard. Neither approach is correct in the abstract. The right answer depends entirely on what the schema needs to do.

Here's the tension in concrete terms. An e-commerce company like Shopify runs two very different workloads on the same underlying data. Their transactional system (OLTP) processes thousands of orders per minute; it needs fast writes, strong consistency, and a schema where updating a customer's email address means changing exactly one row. Their analytics system (OLAP) runs queries that aggregate millions of orders across months of history; it needs fast reads, and a five-table JOIN on every dashboard query is a real cost. The schema that serves one system well will actively hurt the other. That's the trade-off you need to be able to articulate, defend, and back up with SQL.

From One Messy Table to a Schema That Holds Together

Start with the worst-case scenario. Someone built an orders table and stuffed everything into it: order_id, customer_name, customer_email, product_name, product_category, quantity, unit_price. One row per line item. It works, until it doesn't.

Update a customer's email and you're touching hundreds of rows. Try to add a new product before anyone orders it and you can't, there's nowhere to put it without a fake order. Delete the last order for a customer and you've just erased that customer from your database entirely. These are the three anomalies normalization exists to kill: update, insert, and delete.

The normal forms are a checklist for eliminating those anomalies, one dependency problem at a time.

1NF is the floor. Every column holds a single atomic value, no comma-separated lists, no repeating column groups like product_1, product_2, product_3. If you see an array crammed into a VARCHAR, you're not in 1NF.

2NF only applies when you have a composite primary key. The rule: every non-key column must depend on the whole key, not just part of it. In an order_items table keyed on (order_id, product_id), if product_category only depends on product_id, that's a partial dependency and a 2NF violation. The fix is to move product_category into a products table where product_id is the full key.

3NF goes one step further. Even with a single-column PK, you can have a problem: a non-key column depending on another non-key column. If orders stores customer_email and customer_country, and customer_country is determined by customer_email rather than order_id, that's a transitive dependency. Split it out into a customers table.

BCNF is the stricter sibling of 3NF. It handles edge cases where a table has multiple overlapping candidate keys. In practice, if you've reached 3NF, you're in BCNF for most schemas you'll encounter in interviews. Mention BCNF if the interviewer pushes, but don't volunteer it unprompted.

Think of the progression like peeling layers off a dependency onion. Each normal form removes one class of problematic dependency until every fact lives in exactly one place.

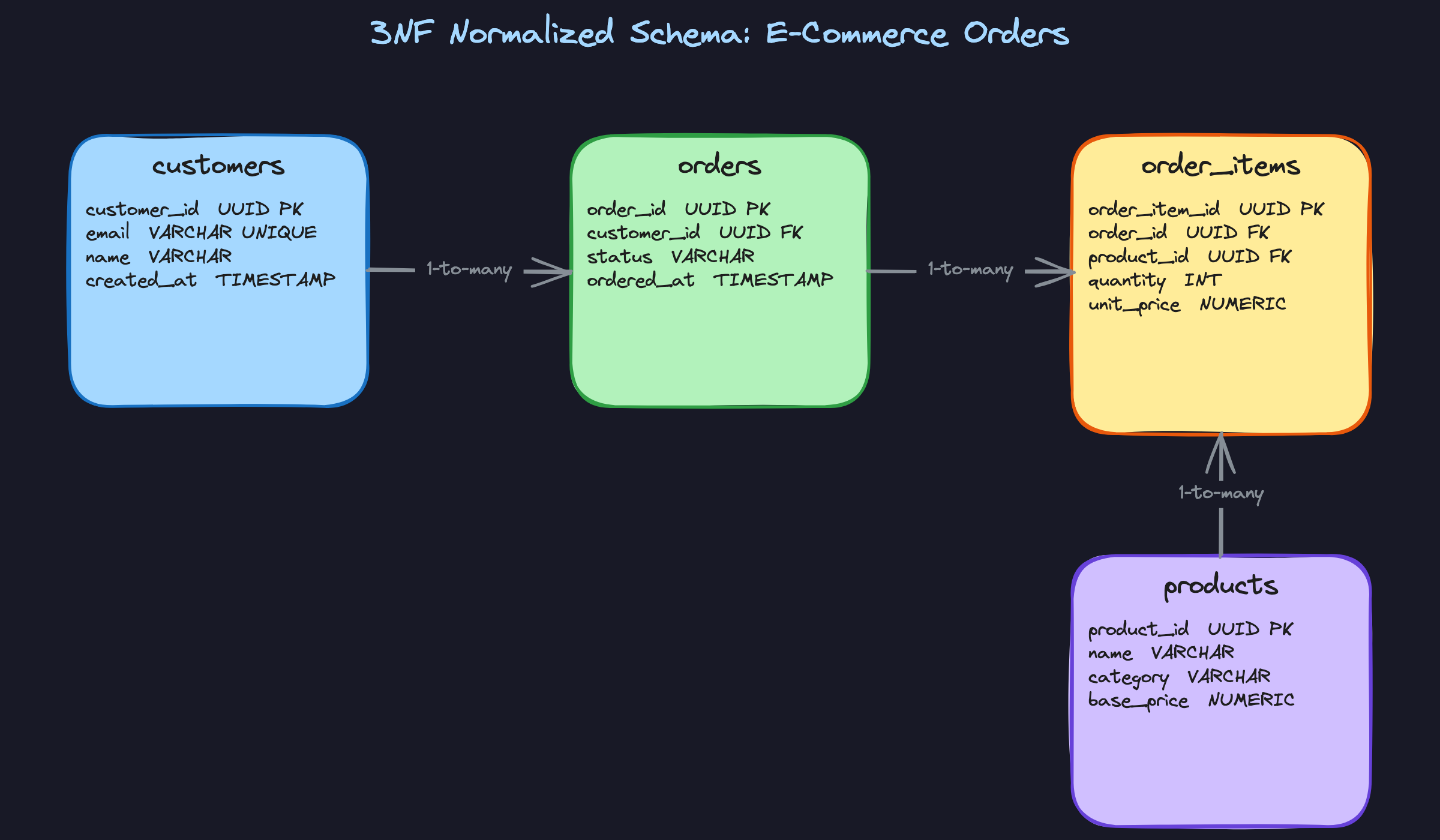

Here's what that decomposition looks like as a finished schema:

Three properties come out of this that your interviewer will care about.

Single source of truth. Each fact exists in exactly one table. When a customer changes their email, one UPDATE on the customers table propagates everywhere. Interviewers at companies like Stripe or Airbnb will probe whether you understand this, because the alternative is a data consistency nightmare at scale.

No orphaned or phantom data. You can insert a product into products without any orders. You can delete an order without losing the customer record. The schema models reality accurately, not just the happy path. This matters because interviewers will often ask "what happens if X is deleted?" and a normalized schema gives you a clean answer.

Predictable query structure. Every read that needs data from multiple entities requires a JOIN. That's the cost. The schema is honest about it. At low to moderate scale, this is fine. At very high read volume, those JOINs become the bottleneck, and that's exactly when you start reaching for denormalization. Being able to name that inflection point is what separates a candidate who memorized the definitions from one who has thought about real systems.

Patterns You Need to Know

In an interview, you'll usually need to pick a specific approach. Here are the ones worth knowing.

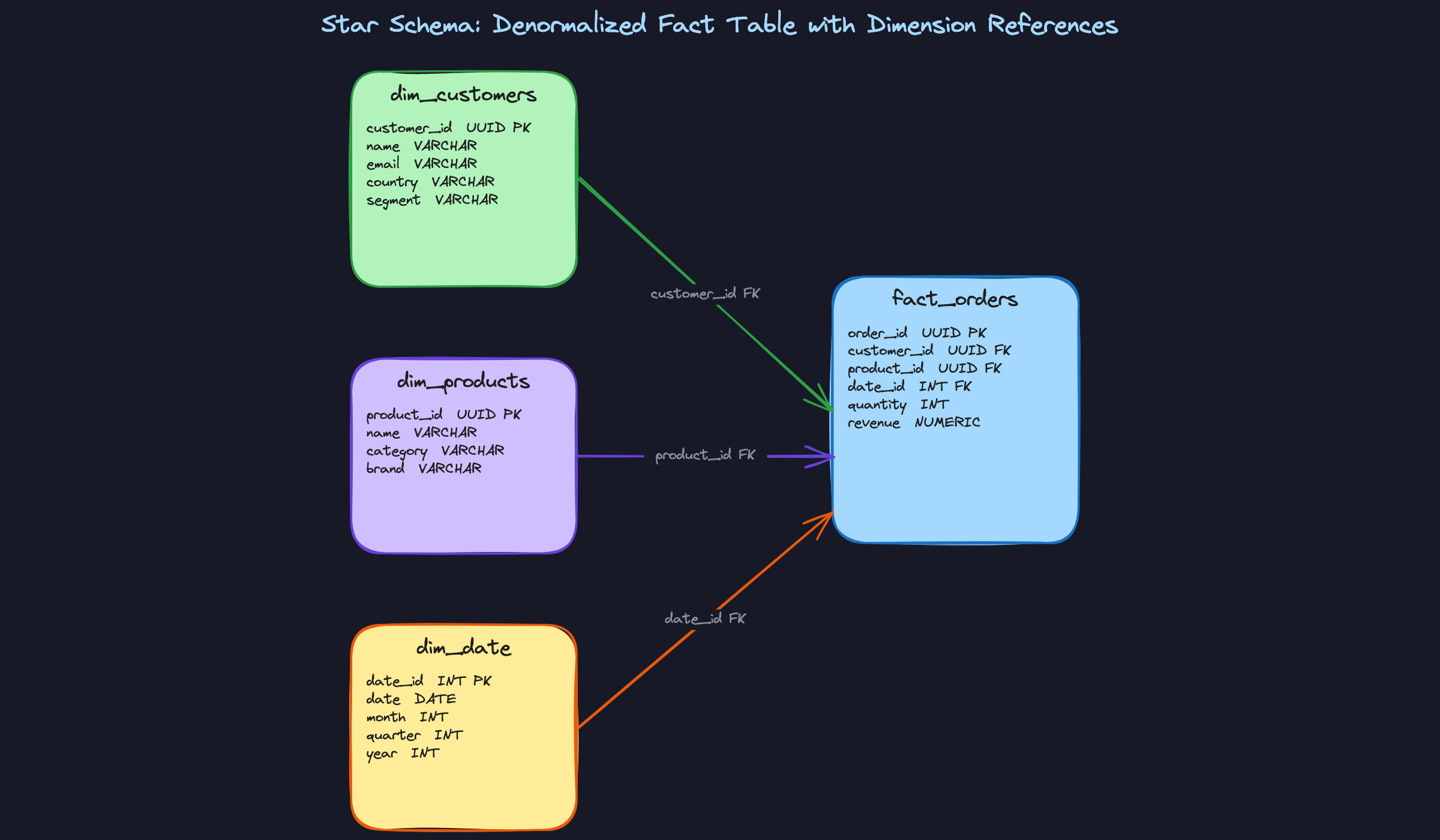

Star Schema

The star schema is the default pattern for analytical systems. You keep one central fact table (orders, events, transactions) surrounded by dimension tables (customers, products, dates). The fact table is intentionally denormalized: it holds foreign keys to every dimension plus your numeric measures like revenue and quantity. The dimensions themselves stay relatively normalized, though you'll often flatten a few attributes into them for convenience.

What makes this pattern powerful is that your analytical queries almost always follow the same shape: filter on dimensions, aggregate on facts. A query like "total revenue by product category for Q3" touches fact_orders, joins to dim_products for category, and joins to dim_date for the quarter filter. That join pattern is predictable, and columnar engines like Snowflake and BigQuery are specifically optimized for it. If you're interviewing at a company with a data warehouse, expect to sketch this from memory.

When to reach for this: any time the interviewer describes an analytics or reporting use case, especially one involving a data warehouse.

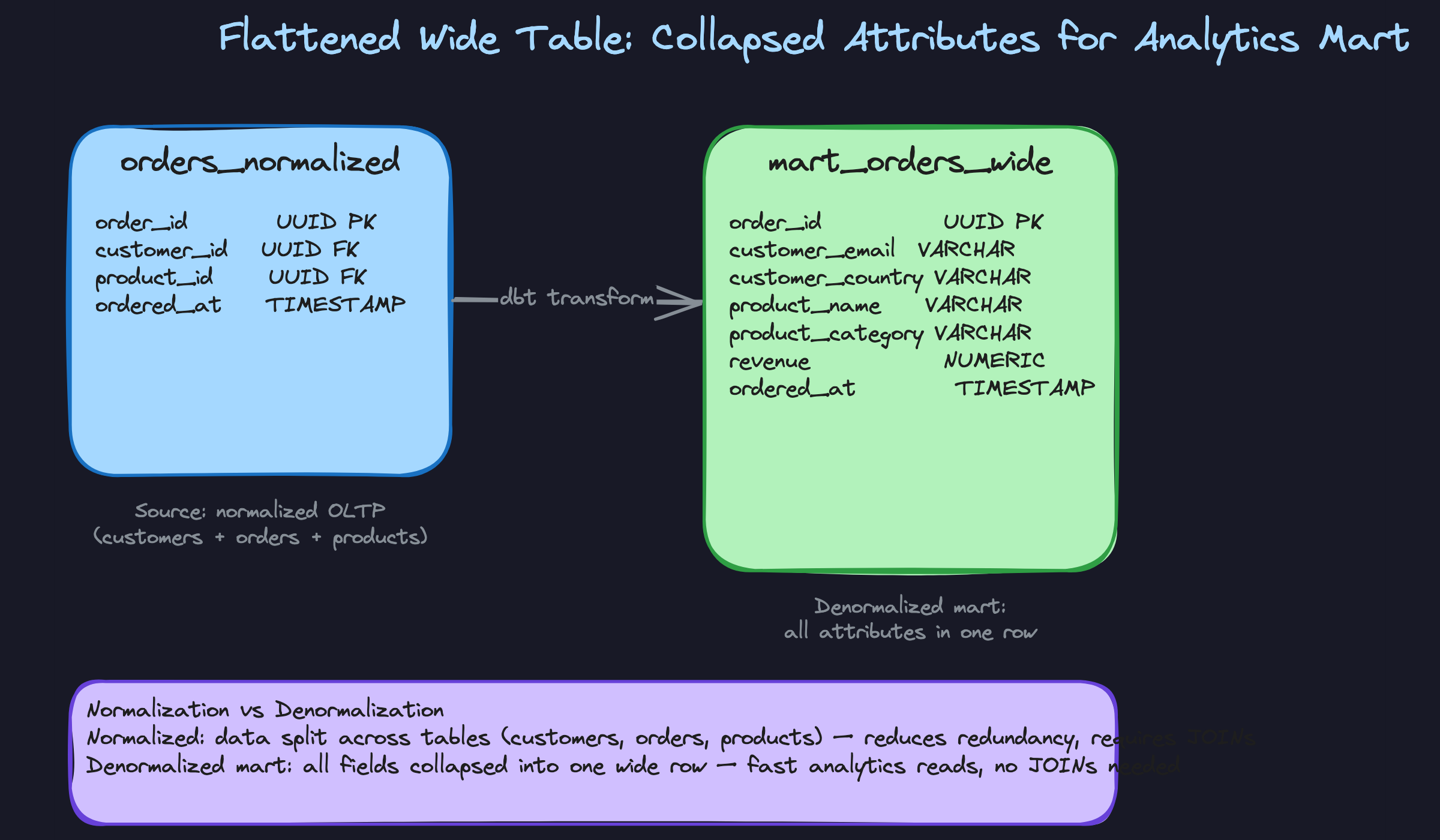

Flattened Wide Table

Where the star schema still requires joins at query time, a flattened wide table eliminates them entirely. Every attribute you might want to filter or group by gets collapsed into a single row. Customer email, product category, order status, country, all sitting next to the revenue figure in one table. This is what dbt mart layers typically produce, and it's the format ML feature stores expect.

The cost is real. When a customer changes their email, every historical row that embedded that email is now stale. You either accept that staleness or you run a full refresh. Schema changes are also painful: adding a new attribute means an ALTER TABLE on a potentially enormous table. But for read-heavy workloads where query latency matters more than write-time correctness, a single-table scan beats a five-way join every time.

When to reach for this: BI dashboards, ML feature pipelines, or any scenario where the interviewer emphasizes query simplicity and read performance over write flexibility.

JSONB Embedding

Some entities have attributes that genuinely vary by instance. A product in a furniture catalog has dimensions and material. The same product type in an electronics catalog has voltage, connectivity, and battery life. Forcing all of these into typed columns means either a sparse table with hundreds of NULLs or a separate entity-attribute-value table that's miserable to query.

JSONB in Postgres gives you a middle path. You store the variable attributes as a JSON blob on the parent row, and Postgres lets you index into it with a GIN index so key lookups stay fast. The critical constraint is that this only works well when the nested data is almost always read alongside the parent and rarely queried in isolation. If you find yourself writing complex JSONB path queries across millions of rows to answer common questions, that's a signal the data should have been a proper table.

When to reach for this: product catalogs with variable attributes, user preference blobs, configuration data, or any case where the schema genuinely varies per row rather than being poorly modeled.

Materialized Aggregates

Some queries are expensive not because of joins but because of aggregation over large tables. Computing daily revenue per product by scanning millions of order line items on every dashboard load is wasteful when the underlying data doesn't change that often. Materialized aggregates solve this by pre-computing the rollup and storing it in a summary table that dashboards read directly.

The update mechanism is where the real design decision lives. You can refresh on a schedule (every hour, every night), use a database trigger that increments counters on each insert, or run an event-driven pipeline that updates the summary whenever source data changes. Each approach has a different staleness profile. Trigger-based updates are nearly real-time but add write overhead and can become a bottleneck. Scheduled refreshes are simple but introduce a lag window. In an interview, naming that trade-off explicitly is what separates a strong answer from a generic one.

When to reach for this: reporting dashboards, leaderboards, billing summaries, or any scenario where the interviewer mentions that the same aggregation query runs thousands of times per day.

Comparing the Patterns

| Pattern | Best for | Main trade-off | Typical environment |

|---|---|---|---|

| Star schema | Analytics, BI reporting | Join cost at query time | Snowflake, Redshift, BigQuery |

| Flattened wide table | ML features, mart layers | Write amplification, staleness | dbt, ClickHouse, BigQuery |

| JSONB embedding | Variable-attribute entities | Query complexity on nested fields | PostgreSQL |

| Materialized aggregates | Dashboards, repeated rollups | Stale data, sync complexity | Any RDBMS or warehouse |

For most interview problems involving analytics, you'll default to the star schema. It's the pattern interviewers at data-heavy companies expect, and it signals you understand the OLAP design space. Reach for a flattened wide table when the interviewer emphasizes read simplicity or mentions ML pipelines, and switch to materialized aggregates when the conversation turns to dashboard latency or query cost on large fact tables. JSONB is a targeted tool; bring it up only when the schema genuinely has variable structure, and be ready to defend why a proper relational model wouldn't serve better.

What Trips People Up

Here's where candidates lose points — and it's almost always one of these.

The Mistake: Treating OLTP/OLAP as a Hard Rule

The answer that tanks candidates: "I'd normalize it because it's a transactional system" or "I'd denormalize because it's analytics." Said without any further reasoning, that's a pattern-match, not a design decision.

Interviewers at Airbnb and Stripe will immediately probe the edges. What if your OLTP product page endpoint is read 50,000 times a day and written to once? A denormalized read model might be exactly right there. What if your OLAP dimension table gets updated constantly because customers change their subscription tier mid-month? Blindly applying a star schema without thinking about update frequency will bite you.

The framing that works: "My default for OLTP is normalized because it protects write integrity, but I'd revisit that if the read pattern is dominant and the data is relatively stable." That one sentence shows you understand the trade-off instead of just the label.

The Mistake: Conflating 2NF and 3NF Violations

This one is surprisingly common, even among candidates who've studied. They'll look at a table with a single-column primary key and say something like: "This violates 2NF because the category column depends on product_name, not on the order_id."

That's a 3NF violation (a transitive dependency), not a 2NF violation. 2NF only applies when you have a composite primary key. If your PK is a single column, partial dependency is impossible by definition, so you can't have a 2NF problem. Conflating these tells the interviewer you've memorized the names but not the mechanics.

When you're walking through a schema out loud, slow down and check: is the PK composite? If not, skip 2NF entirely and go straight to checking for transitive dependencies.

The Mistake: Over-Normalizing an Analytics Schema

Some candidates, proud of their 3NF knowledge, will decompose an analytics schema into six or seven tables and call it clean. In a Postgres OLTP context, that's often fine. In Snowflake or BigQuery scanning billions of rows, it's a real problem.

A five-way JOIN across large fact and dimension tables can turn a two-second query into a two-minute one. Columnar engines are optimized for wide table scans, not for resolving foreign keys across many relations. When you're designing for analytics, 3NF is frequently the wrong goal.

The interviewer wants to hear that you know 3NF and are consciously choosing not to apply it, not that you forgot it existed.

The Mistake: Proposing Denormalization Without a Sync Strategy

This is the one that gets candidates in the final rounds. Someone will confidently propose a denormalized orders_wide table that duplicates customer email and product category. The interviewer nods, then asks: "What happens when a customer updates their email?"

Silence. Or worse: "We'd just update it."

Every denormalized copy of data is a write-time contract. You're promising that when the source changes, all copies stay consistent. If you can't explain how that happens, you haven't finished the design. The interviewer isn't being difficult; they're asking the most important question about your schema.

Before you finish pitching any denormalization, have an answer ready: a dbt model that refreshes on a schedule, a Postgres trigger, an event-driven pipeline from a CDC stream. And be prepared to state the acceptable staleness window. "This mart refreshes every hour, so dashboards may lag by up to 60 minutes" is a complete answer. "We'd keep it in sync" is not.

The Mistake: Using JSONB as a Schema Escape Hatch

JSONB is genuinely useful. It's also the place candidates hide when they don't want to think through a data model.

The red flag answer sounds like: "I'd just store the extra attributes in a JSONB column so we don't have to worry about schema changes." When an interviewer hears that, they're wondering whether you'd do the same thing in production and leave the next engineer with an unqueryable blob of nested keys.

JSONB earns its place when attributes are genuinely variable and schema-less: think product metadata where a laptop has {"ram_gb": 16, "screen_size": 15} and a t-shirt has {"color": "navy", "material": "cotton"}. You can't model that cleanly in fixed columns. But if you're storing a user's billing address in JSONB because "addresses are complicated," that's avoidance, not design.

If you reach for JSONB, say why the data is genuinely variable, show the GIN index, and demonstrate you know how to query it. That's the difference between a deliberate choice and a shortcut.

How to Talk About This in Your Interview

When to Bring It Up

You don't need to wait for the interviewer to say "normalization." The real signals are subtler.

If you hear "our analysts are running slow queries" or "the dashboard takes 30 seconds to load," that's your cue to talk about denormalization, pre-aggregation, or wide mart tables. If you hear "we're getting data inconsistencies" or "a customer's email is wrong in some places but not others," that's your cue to talk about update anomalies and why normalization fixes them.

The most important trigger: the interviewer hands you a flat table or a CSV schema as a starting point. The moment you see customer name, customer email, product name, and product category all living in an orders table, you should be flagging anomalies within 30 seconds. That instinct is exactly what they're testing.

Also watch for "we're building a reporting layer" or "this feeds our BI tool." That's an invitation to bring up star schemas and dimensional modeling without being asked.

Sample Dialogue

This exchange starts with the classic pivot: the interviewer challenges your normalized design.

Follow-Up Questions to Expect

"How do you decide which normal form to target?" Start with 3NF for any OLTP schema; then ask what the read patterns look like before deciding whether to denormalize a layer on top.

"What's the difference between a star schema and a snowflake schema?" In a snowflake schema, dimension tables are themselves normalized (a products dimension might reference a separate categories table); in a star schema, dimensions are fully denormalized flat tables, which makes queries simpler at the cost of some redundancy.

"How would you handle a dimension that changes over time, like a customer's country?" That's a slowly changing dimension (SCD), and you'd choose between overwriting (Type 1), adding a new row with effective dates (Type 2), or storing both old and new values in the same row (Type 3) depending on whether historical accuracy matters.

"When would you use JSONB instead of a separate table?" When the nested data is always read with the parent, has a genuinely variable schema across rows, and is rarely the target of complex filtering; otherwise, model it as a proper table.

What Separates Good from Great

- A mid-level answer says "denormalization improves performance." A senior answer names the specific query pattern, estimates the JOIN cost in context (billions of rows, columnar storage, cross-region latency), and explains which layer gets denormalized and why the source layer stays normalized.

- Mid-level candidates propose denormalization and move on. Senior candidates immediately address the sync strategy: who owns the refresh, what the staleness window is, and what breaks if the refresh job fails.

- The single sentence that signals seniority, worth practicing out loud: "My starting point is always the access patterns. Once I know what queries this schema needs to serve and how often, the normalization decision usually becomes obvious." It reframes the whole conversation from definitions to design thinking, which is exactly where you want to be.