Join ML Engineer Interview MasterClass (June Cohort) led by FAANG Data Scientists | Just 6 seats remaining...

ML Engineer MasterClass (June) | 6 seats left

The Data Modeling Interview Framework

The Data Modeling Interview Framework

A candidate I coached last year could write a perfect third-normal-form schema from memory. She knew her indexes, her foreign keys, her constraint syntax. She failed her Airbnb data engineering interview because she spent 40 minutes designing the wrong thing. The prompt was about analytics. She built an OLTP schema.

That's the real failure mode. Not missing syntax. Not forgetting an index. It's skipping the conversation that should happen before you write a single line of SQL, and the interviewer watches you do it in real time.

What the interviewer is actually grading: can you clarify requirements before committing to a design, reason through trade-offs out loud, and show that your schema choices connect back to the access patterns you identified? Whether you're interviewing for a Data Engineer role at Stripe, an Analytics Engineer position at Shopify, or a Senior Backend Engineer seat at Uber, those three things are what separates a hire from a no-hire. The SQL is almost secondary.

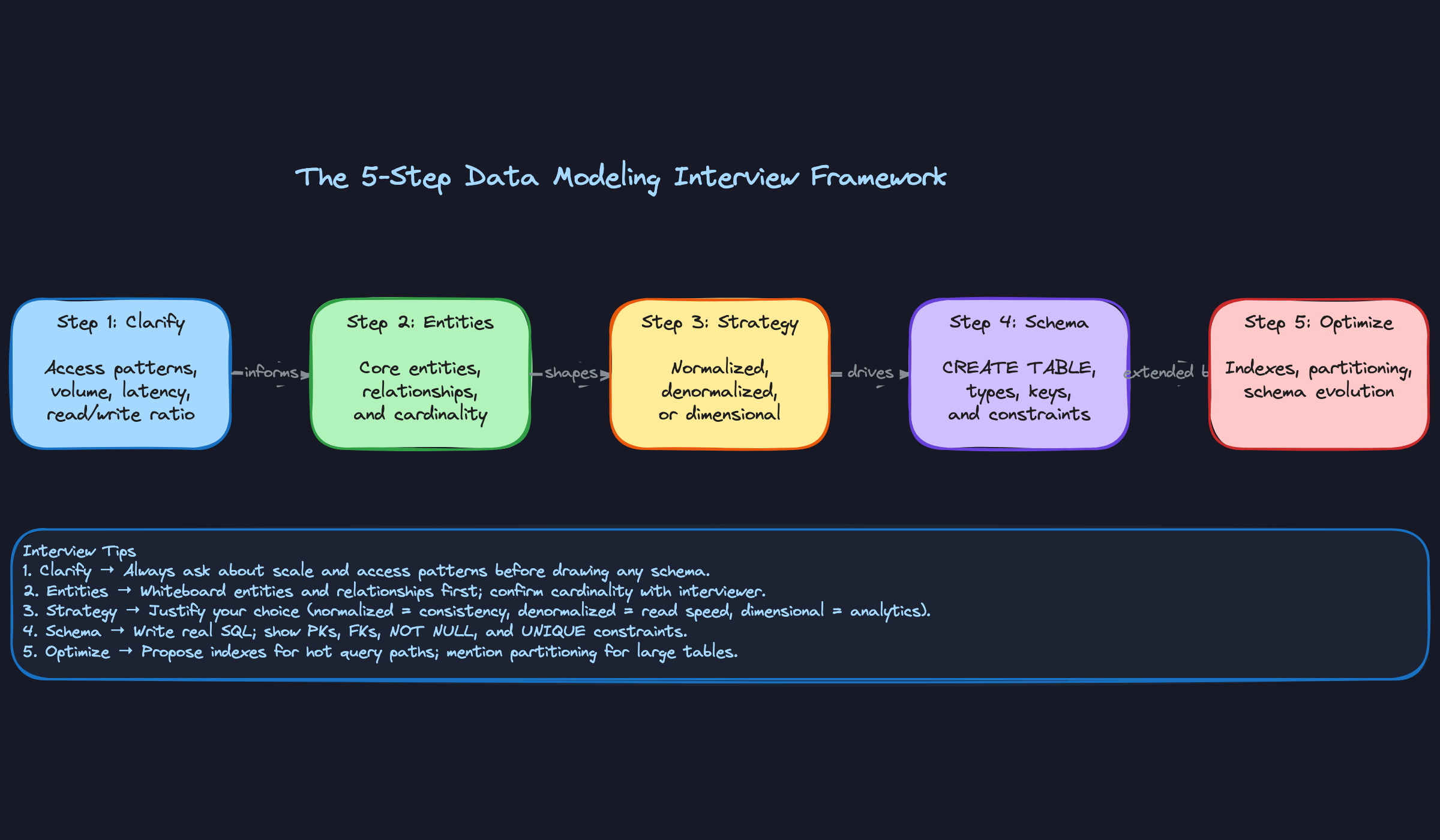

This guide gives you a five-step framework you can run on any prompt: clarify requirements, map entities and relationships, choose your modeling strategy, write the schema, then justify your indexes and plan for evolution. Each step has a concrete output. Each step signals something specific to the interviewer. Work through them in order and you'll never find yourself 30 minutes in, staring at a half-built schema, realizing you never asked whether this was for real-time queries or historical reporting.

The Framework

Five steps. Every data modeling interview, every prompt, every role. Memorize this table before you walk in the door.

| Phase | Time | Goal |

|---|---|---|

| 1. Clarify Requirements | 3-5 min | Understand access patterns, volume, and latency before touching a schema |

| 2. Identify Entities | 3-5 min | Map core entities, relationships, and cardinality in plain English |

| 3. Choose Modeling Strategy | 2-3 min | Commit to normalized, denormalized, or dimensional based on what you just learned |

| 4. Define the Schema | 10-15 min | Write actual SQL: CREATE TABLE, keys, constraints, data types |

| 5. Optimize and Evolve | 3-5 min | Add indexes, partitioning, and explain how the schema handles growth |

The total is roughly 25-35 minutes. That maps cleanly to a 45-minute interview with time left for questions. Don't rush Step 1. Candidates who skip it spend the last ten minutes backtracking.

Step 1: Clarify Requirements

The interviewer gave you a one-sentence prompt on purpose. They want to see if you ask the right questions before designing anything.

Ask exactly these three questions, in this order:

- "Who is reading this data, and how?" You're fishing for query patterns: point lookups vs. aggregations, single-user vs. cross-user analytics, real-time vs. batch.

- "What's the expected data volume and growth rate?" Millions of rows per day changes your partitioning strategy. Billions changes your storage engine choice.

- "What are the latency requirements?" Sub-100ms reads point toward OLTP and indexes. Seconds-acceptable reads open the door to OLAP and columnar storage.

A fourth question worth asking if the domain suggests it: "Do we need to track historical changes, or is current state enough?" This surfaces SCD and audit requirements before you've written a single column.

What to say:

"Before I start sketching anything, I want to make sure I understand the access patterns. Is this schema primarily serving real-time application reads, or is it feeding an analytics pipeline? And roughly what volume are we talking about, rows per day?"

How the interviewer is evaluating you: They're checking whether you treat schema design as a conversation or a solo exercise. Candidates who ask zero clarifying questions and immediately write tables signal that they don't understand that design decisions are context-dependent. One good question is better than five generic ones, so make each question count.

Do this: Write down the answers as you get them. Refer back to them explicitly in later steps. "You mentioned this is analytics-heavy, so I'm going to lean toward a denormalized model" shows you actually listened.

Don't do this: Ask "what are the non-functional requirements?" That's too abstract. Interviewers want specific, domain-aware questions, not consulting boilerplate.

Example: "Okay, so reads are primarily aggregate queries over the last 90 days, write volume is around 500K rows per day, and latency of a few seconds is acceptable. That tells me this is an analytics workload. Let me think about the entities involved before I commit to a modeling approach."

Step 2: Identify Entities and Relationships

Don't open a code block yet. Write a list first.

Name every core entity in plain English, then annotate each relationship with its cardinality. This takes three minutes and saves you from redesigning your schema halfway through.

What to do:

- List entities as nouns: User, Trip, Driver, Payment, Pricing Rule.

- For each pair that has a relationship, write the cardinality: "One User has many Trips (1:N). One Trip has one Payment (1:1). One Trip can have many Pricing Rules applied, and one Pricing Rule can apply to many Trips (M:N)."

- Flag any M:N relationship immediately. Those need a junction table. That's not optional.

What to say:

"Let me map out the entities before I write any SQL. I'm seeing Users, Trips, Drivers, and Payments as the core objects. The relationship between Trips and Pricing Rules looks many-to-many to me, so I'll need a junction table there. Does that match your mental model of the domain?"

That last question is deliberate. Asking the interviewer to validate your entity map keeps them engaged and catches misunderstandings early.

How the interviewer is evaluating you: They want to see structured thinking, not stream-of-consciousness SQL. Candidates who can articulate cardinality out loud demonstrate they understand relational theory, not just syntax. If you say "one-to-many" and then model it wrong in Step 4, that's a red flag. If you say it and model it correctly, that's a green flag.

Example: "Alright, I've got five entities and I know the Trips-to-PricingRules relationship is M:N. I'm going to need a trip_pricing_rules table for that. Now I need to decide on the overall modeling strategy before I start writing DDL."

Step 3: Choose Your Modeling Strategy

This is the decision that shapes everything downstream. You have three real options.

Normalized (3NF/OLTP): Every fact lives in one place. Foreign keys everywhere. Optimized for writes and point lookups. Use this when the prompt mentions "application database," "transactional," or "real-time."

Denormalized (OLAP/flat): Redundant data is fine. Wide tables. Fewer joins. Optimized for analytical reads. Use this when the prompt mentions "data warehouse," "reporting," "analytics," or "BI tool."

Dimensional (star schema): A fact table surrounded by dimension tables. The standard for data warehouses. Use this when the prompt is clearly about metrics, aggregations, or historical analysis across multiple dimensions.

What to say:

"Given that you told me this is primarily an analytics workload with batch writes and aggregate reads, I'm going to go with a star schema. I'll have a central fact table for trips and dimension tables for drivers, users, and time. That avoids expensive joins at query time and plays well with columnar storage engines like Redshift or BigQuery."

If the prompt is ambiguous, say so out loud and make a call: "This could go either way, but I'm going to optimize for reads since you mentioned the dashboard latency requirement. I'll denormalize and note where we'd pay the cost on writes."

How the interviewer is evaluating you: They want a justified decision, not a correct one. There's rarely a single right answer. What they're watching for is whether you can connect your strategy choice back to the requirements you gathered in Step 1. "I'm normalizing because..." followed by a reason tied to access patterns is exactly what passes this phase.

Example: "Okay, I'm committing to a star schema. Let me start writing the actual table definitions. I'll do the fact table first, then the dimensions."

Step 4: Define the Schema

Now you write SQL. Concrete, specific, runnable SQL. Not pseudocode.

What to do:

- Start with your primary fact or core entity table. Write the full

CREATE TABLEstatement including column names, data types, primary key, and foreign keys. - Use real types:

BIGSERIALorUUIDfor PKs,TIMESTAMPTZ(notTIMESTAMP) for any time column,NUMERIC(10,2)for money,TEXToverVARCHAR(255)in PostgreSQL unless you have a real length constraint. - Add

NOT NULLwhere the column should never be empty. AddUNIQUEconstraints where duplicates would be a data integrity bug. Skip neither.

1CREATE TABLE trips (

2 trip_id BIGSERIAL PRIMARY KEY,

3 driver_id BIGINT NOT NULL REFERENCES drivers(driver_id),

4 rider_id BIGINT NOT NULL REFERENCES users(user_id),

5 started_at TIMESTAMPTZ NOT NULL,

6 ended_at TIMESTAMPTZ,

7 status TEXT NOT NULL CHECK (status IN ('active', 'completed', 'cancelled')),

8 fare_amount NUMERIC(10,2),

9 created_at TIMESTAMPTZ NOT NULL DEFAULT NOW()

10);

11Talk while you type. Narrate every decision: "I'm using TIMESTAMPTZ instead of TIMESTAMP because we'll have drivers in multiple time zones and I want all times stored in UTC." That narration is half your score.

What to say when you make a type choice:

"I'm usingNUMERIC(10,2)for fare_amount rather thanFLOATbecause floating-point arithmetic on currency is a silent bug waiting to happen. Fixed precision is the right call here."

How the interviewer is evaluating you: Data type choices, constraint choices, and key design are all signals of production experience. Candidates who use VARCHAR(255) for everything, skip NOT NULL, and use FLOAT for money have clearly never debugged a data quality issue in production. Candidates who make deliberate, explained choices signal they have.

Do this: After writing each table, pause and say "Does this look right to you before I move on?" It keeps the interview collaborative and catches misunderstandings before you've written five more tables on top of a wrong assumption.

Example: "I've got the core tables defined. Before I move on, let me talk about how I'd index this and handle the growth you mentioned."

Step 5: Justify Indexes, Partitioning, and Evolution

Most candidates treat this as a formality. Don't. This is where senior-level candidates separate themselves.

What to do:

- Add indexes tied directly to the query patterns from Step 1. Not generic indexes. Specific ones: "You said the most common query is looking up all trips for a driver in a date range, so I want a composite index on

(driver_id, started_at)." - If volume is high (hundreds of millions of rows or more), add partitioning. Range partition on a time column is almost always the right default for event-style data.

- Address schema evolution explicitly. What happens when a new column needs to be added? What happens if a relationship changes from 1:N to M:N?

1-- Composite index for the primary query pattern

2CREATE INDEX idx_trips_driver_date

3 ON trips (driver_id, started_at DESC);

4

5-- Partition by month for large-volume tables

6CREATE TABLE trips (

7 trip_id BIGSERIAL,

8 started_at TIMESTAMPTZ NOT NULL,

9 ...

10) PARTITION BY RANGE (started_at);

11

12CREATE TABLE trips_2024_01

13 PARTITION OF trips

14 FOR VALUES FROM ('2024-01-01') TO ('2024-02-01');

15What to say:

"For schema evolution, adding nullable columns is safe in PostgreSQL and won't require a table rewrite. But if we ever need to change a column type or add a NOT NULL column to an existing table with data, that's a migration that needs a multi-step strategy: add nullable, backfill, add constraint. I'd document that in the migration plan."

How the interviewer is evaluating you: They want to know you think beyond the happy path. A schema that works today but can't be evolved without downtime is a liability. Mentioning pg_repack, online index builds, or blue-green migration strategies (even briefly) signals you've shipped schemas to production, not just designed them in interviews.

Putting It Into Practice

The prompt lands: "Design a schema for a ride-sharing app's trip and pricing history."

Most candidates start typing CREATE TABLE trips. Don't. Here's what the conversation actually looks like when you do it right.

The Interview Dialogue

Do this: Notice the candidate asked two distinct questions: access pattern (analytics vs. ops) and temporal fidelity (can we reconstruct history?). The answer to the first determines normalized vs. dimensional modeling. The answer to the second forces a pricing snapshot pattern. Both answers completely reshape the schema before a single column is written.

Mapping Entities Before Writing SQL

[You narrate or jot down:]

1Core entities:

2- Trip (the ride itself)

3- Driver (FK reference, probably lives in another service)

4- Rider (same)

5- Fare (the total charge for a trip, 1:1 with Trip)

6- Fare Component (line items: base, surge, toll, promo — 1:N with Fare)

7- Pricing Snapshot (the surge multiplier and rules active at trip time, 1:1 with Trip)

8Cardinality notes: - Trip to Fare: 1:1 (every trip has exactly one fare record) - Fare to Fare Component: 1:N (one fare, multiple line items) - Trip to Pricing Snapshot: 1:1 (each trip gets its own immutable snapshot of the rules that were active at booking time, so the audit trail can never be corrupted by a later rule change)

Do this: Saying "let me map entities before writing SQL" signals seniority. It shows you're not just pattern-matching to a schema you've seen before. Interviewers at Stripe and Airbnb specifically watch for this pause.

total_fare column on trips makes that impossible to reconstruct. Separate Fare and FareComponent tables give you that granularity without denormalizing into a mess of nullable columns on the trip row.Writing the Schema

-- Trips fact table, partitioned by month for query efficiency

CREATE TABLE trips (

trip_id UUID NOT NULL DEFAULT gen_random_uuid(),

driver_id BIGINT NOT NULL,

rider_id BIGINT NOT NULL,

status TEXT NOT NULL CHECK (status IN ('completed', 'cancelled', 'disputed')),

started_at TIMESTAMPTZ NOT NULL,

ended_at TIMESTAMPTZ,

pickup_lat NUMERIC(9, 6) NOT NULL,

pickup_lng NUMERIC(9, 6) NOT NULL,

dropoff_lat NUMERIC(9, 6),

dropoff_lng NUMERIC(9, 6),

city_id INT NOT NULL,

created_at TIMESTAMPTZ NOT NULL DEFAULT now(),

PRIMARY KEY (trip_id, started_at) -- partition key must be in PK

) PARTITION BY RANGE (started_at);

CREATE TABLE trips_2024_q1 PARTITION OF trips

FOR VALUES FROM ('2024-01-01') TO ('2024-04-01');

-- Composite index for driver-level analytics queries

CREATE INDEX idx_trips_driver_time

ON trips (driver_id, started_at DESC);

-- Fares: one per trip, stores the final computed total

CREATE TABLE fares (

fare_id UUID NOT NULL DEFAULT gen_random_uuid() PRIMARY KEY,

trip_id UUID NOT NULL UNIQUE,

currency CHAR(3) NOT NULL DEFAULT 'USD',

total_amount NUMERIC(10, 2) NOT NULL,

rider_charged NUMERIC(10, 2) NOT NULL,

driver_payout NUMERIC(10, 2) NOT NULL,

calculated_at TIMESTAMPTZ NOT NULL DEFAULT now()

);

-- Fare components: the line items that sum to total_amount

CREATE TABLE fare_components (

component_id UUID NOT NULL DEFAULT gen_random_uuid() PRIMARY KEY,

fare_id UUID NOT NULL REFERENCES fares(fare_id),

component_type TEXT NOT NULL CHECK (

component_type IN ('base_fare', 'surge', 'toll', 'promo_discount', 'platform_fee')

),

amount NUMERIC(10, 2) NOT NULL,

metadata JSONB

);

CREATE INDEX idx_fare_components_fare

ON fare_components (fare_id);

-- Pricing snapshots: one per trip, immutable record of what rules were active at booking time

CREATE TABLE pricing_snapshots (

snapshot_id UUID NOT NULL DEFAULT gen_random_uuid() PRIMARY KEY,

trip_id UUID NOT NULL UNIQUE,

surge_multiplier NUMERIC(4, 2) NOT NULL DEFAULT 1.0,

base_rate_cents INT NOT NULL,

pricing_model TEXT NOT NULL,

effective_at TIMESTAMPTZ NOT NULL,

snapshot_data JSONB NOT NULL -- full rule blob for audit

);

Do this: UseTIMESTAMPTZeverywhere, notTIMESTAMP. Ride-sharing data crosses time zones constantly. UsingTIMESTAMPwithout timezone is a silent correctness bug that senior engineers catch immediately.

Handling the Scale Challenge

city_id-based partition key as a secondary dimension, or at least a partial index per major city. Driver analytics queries almost always filter by city first.pricing_rule_id foreign key and keeping a pricing_rules table with versioned rows. But then every fare audit query needs a join to reconstruct what rule was active, and if someone ever updates a pricing rule row incorrectly, your audit trail is corrupted. The snapshot approach trades storage for immutability. For a finance audit use case, I'd take that trade every time.What Made This Work

The candidate never wrote a single line of SQL until entities and cardinality were mapped. The schema that came out of the analytics-focused requirements, partitioned facts, line-item components, immutable snapshots, looks nothing like what you'd build for a real-time dispatch system. That's the whole point of Step 1.

When challenged on volume and duplication, the candidate didn't panic. They named the trade-off, picked a side, and explained why. That's what senior-level data modeling sounds like.

Common Mistakes

Most candidates don't fail because they don't know SQL. They fail because they reveal, through small habits and omissions, that they don't actually think about data the way a senior engineer does. Here's what that looks like in practice.

Jumping Straight to Tables

The interviewer says "design a schema for a ride-sharing app" and within 30 seconds you're writing CREATE TABLE trips. You feel productive. The interviewer is already marking you down.

Skipping requirements is the single fastest way to signal junior thinking. The interviewer doesn't know if you'd design the same schema for a real-time dispatch system as you would for a historical analytics warehouse. Neither do you, because you didn't ask.

Don't do this: Opening with "Okay, so I'll have atripstable withid,driver_id,rider_id..." before asking a single question.

Do this: Spend the first few minutes asking about read/write ratio, query patterns, and data volume. The schema falls out of those answers naturally.

The fix: treat the first five minutes as non-negotiable requirements time, even if the interviewer seems impatient to see SQL.

Over-Normalizing an Analytics Schema

You hear "design a schema for our reporting dashboard" and you produce a pristine 3NF schema with six tables, four junction tables, and foreign keys everywhere. Technically correct. Completely wrong for the context.

Analytics workloads are read-heavy and column-scan-heavy. Joins are expensive at scale. When the prompt signals OLAP (words like "reporting," "dashboard," "historical," "aggregation"), the interviewer expects a star schema or a denormalized wide table, not a normalized OLTP design.

1-- What over-normalized looks like in an analytics context:

2SELECT

3 f.amount,

4 d.driver_name,

5 r.rider_name,

6 v.vehicle_type,

7 ci.city_name,

8 co.country_name

9FROM fact_trips f

10JOIN dim_drivers d ON f.driver_id = d.driver_id

11JOIN dim_riders r ON f.rider_id = r.rider_id

12JOIN dim_vehicles v ON f.vehicle_id = v.vehicle_id

13JOIN dim_cities ci ON f.city_id = ci.city_id

14JOIN dim_countries co ON ci.country_id = co.country_id;

15-- Six joins on a billion-row fact table. Snowflake schema. Painful.

16A star schema collapses dim_cities and dim_countries into one dim_location table. Fewer joins, faster scans, easier for analysts to query.

The fix: when you hear analytics or reporting, explicitly say "I'm going to denormalize here because this is a read-heavy workload" and explain the trade-off.

Ignoring Time

This one is subtle, which is why it catches so many people. The interviewer asks you to model pricing history for a ride-sharing app and you create a prices table with a single amount column and no temporal tracking. What happens when the price changes? You overwrite it. Now you've destroyed your audit trail.

Any domain involving history, compliance, or "what was true at time T" requires temporal modeling. That means effective dates, SCD patterns, or bitemporal columns. Skipping this signals you've never had to debug a data discrepancy in production.

1-- Without temporal tracking (wrong for pricing history):

2CREATE TABLE driver_rates (

3 driver_id BIGINT PRIMARY KEY,

4 rate_per_mile NUMERIC(10, 4)

5);

6

7-- With temporal tracking (right):

8CREATE TABLE driver_rates (

9 driver_id BIGINT NOT NULL,

10 rate_per_mile NUMERIC(10, 4) NOT NULL,

11 effective_from TIMESTAMPTZ NOT NULL,

12 effective_to TIMESTAMPTZ, -- NULL means currently active

13 PRIMARY KEY (driver_id, effective_from)

14);

15Do this: Any time the domain involves prices, user attributes, statuses, or anything that changes over time, proactively say "I'll need to track history here" before the interviewer has to prompt you.

Skipping Constraints Like They Don't Matter

A candidate who writes CREATE TABLE statements with no NOT NULL, no UNIQUE, no CHECK constraints is telling the interviewer they think data integrity is someone else's problem.

Constraints aren't just defensive programming. They're documentation. A NOT NULL on started_at tells every future engineer that a trip without a start time is invalid by definition. A CHECK (status IN ('pending', 'active', 'completed', 'cancelled')) makes the valid state space explicit in the schema itself.

1-- What lazy schema design looks like:

2CREATE TABLE trips (

3 id BIGINT,

4 driver_id BIGINT,

5 rider_id BIGINT,

6 status TEXT,

7 fare NUMERIC

8);

9

10-- What a senior engineer writes:

11CREATE TABLE trips (

12 id BIGSERIAL PRIMARY KEY,

13 driver_id BIGINT NOT NULL REFERENCES drivers(id),

14 rider_id BIGINT NOT NULL REFERENCES riders(id),

15 status TEXT NOT NULL CHECK (status IN ('pending', 'active', 'completed', 'cancelled')),

16 fare NUMERIC(10, 2) NOT NULL CHECK (fare >= 0),

17 started_at TIMESTAMPTZ NOT NULL,

18 ended_at TIMESTAMPTZ,

19 created_at TIMESTAMPTZ NOT NULL DEFAULT now()

20);

21Don't do this: Saying "I'll add constraints later" or treating the schema as a rough sketch. In an interview, later never comes.

Bolting Indexes On at the End

The interviewer asks "how would you optimize this?" and only then do you mention indexes. That's backwards, and they know it.

Indexes aren't an optimization layer you add after the fact. They're a direct consequence of your access patterns, which you identified in Step 1. If you said "the most common query is finding all trips for a driver in the last 30 days," then a composite index on (driver_id, started_at DESC) should appear in your schema, not as an afterthought when prompted.

1-- This index should come up when you're writing the schema, not when asked:

2CREATE INDEX idx_trips_driver_started

3 ON trips (driver_id, started_at DESC);

4

5-- And if 90% of queries filter on active trips only:

6CREATE INDEX idx_trips_active

7 ON trips (driver_id, started_at DESC)

8 WHERE status = 'active'; -- partial index, smaller and faster

9The fix is simple: as soon as you write a table, say out loud which queries will hit it most and what index structure supports those queries.

Quick Reference

Read the Prompt, Pick Your Strategy

| Signal word or phrase | What it implies | Modeling approach |

|---|---|---|

| "analytics", "reporting", "dashboard" | Read-heavy, aggregation queries | Dimensional (star schema, denormalized) |

| "real-time", "low latency", "transactional" | High write throughput, point lookups | Normalized OLTP (3NF) |

| "audit trail", "history", "as-of queries" | Temporal tracking required | SCD Type 2 or bitemporal |

| "flexible attributes", "user-defined fields" | Schema varies per row | JSONB column or EAV hybrid |

| "10 billion rows", "petabyte scale" | Partition or shard early | Partitioned tables, columnar store |

| "event stream", "clickstream", "logs" | Append-only, time-ordered | Time-partitioned, ClickHouse or TimescaleDB |

Five Questions to Ask Before Writing a Single Table

These are ranked by how much the answer changes your design.

- "Is this for operational queries or analytics?" This single question determines whether you normalize or denormalize.

- "What are the two or three most important queries this schema needs to serve?" Drives index and partition choices.

- "What's the expected data volume, and how fast does it grow?" Tells you whether partitioning is optional or mandatory.

- "Does anything here need historical tracking or point-in-time queries?" Unlocks the temporal design conversation.

- "What's the read/write ratio?" A 99:1 read-heavy system and a write-heavy event pipeline need completely different trade-offs.

Five-Step Framework at a Glance

| Step | Time (30-min interview) | What you're doing |

|---|---|---|

| 1. Clarify | 0-5 min | Ask the five questions above. Don't skip this. |

| 2. Entities | 5-10 min | List entities, note cardinality (1:N, M:N), identify junction tables |

| 3. Strategy | 10-12 min | State your modeling choice and why, out loud |

| 4. Schema | 12-22 min | Write CREATE TABLE with types, PKs, FKs, constraints |

| 5. Optimize | 22-30 min | Add indexes, partitioning, discuss evolution and trade-offs |

Go-To PostgreSQL Patterns

| Decision | Default choice | When to deviate |

|---|---|---|

| Primary key type | BIGSERIAL | Use UUID when rows merge across systems or need globally unique IDs |

| Timestamps | TIMESTAMPTZ always | Never TIMESTAMP if your system spans time zones |

| Flexible attributes | JSONB with a GIN index | Fall back to EAV only if you need to query individual keys at massive scale |

| Soft deletes | deleted_at TIMESTAMPTZ + partial index on WHERE deleted_at IS NULL | Hard delete only when regulation requires it |

| Audit columns | created_at, updated_at on every table | Add created_by, updated_by when the prompt mentions compliance |

| Large tables | Partition by RANGE on a date column | Hash partition when there's no natural time dimension |

Phrases to Use

These are exact sentences you can drop into the interview at the right moment.

- When starting: "Before I sketch any tables, I want to make sure I understand the access patterns. Can I ask a few quick questions?"

- When stating your strategy: "Given that this is primarily read-heavy analytics, I'm going to lean toward a denormalized star schema rather than 3NF, because join cost at query time matters more than write overhead here."

- When writing the schema: "I'll add

NOT NULLandCHECKconstraints explicitly, because these signal to the team what the data contract actually is." - When adding indexes: "I'm tying this index back to the query pattern we identified in step one, specifically the lookup by

user_idfiltered on recent dates." - When challenged on scale: "Good question. If volume grows 10x, I'd partition this table by month on

created_atand push the aggregation layer into a materialized view or a columnar store like Redshift." - When wrapping up: "The main trade-off I made here was denormalizing the pricing snapshot into the trips table. That hurts write flexibility but makes the 95th-percentile query significantly cheaper."

Red Flags to Avoid

- Writing

CREATE TABLEbefore asking a single clarifying question. - Designing a fully normalized 3NF schema for a prompt that says "analytics dashboard."

- Leaving out

NOT NULL,UNIQUE, orCHECKconstraints entirely, it signals you're not thinking about data integrity. - Mentioning indexes only after the interviewer asks, rather than connecting them to your stated query patterns.

- Ignoring time. If the domain involves bookings, payments, or user state, and you have no temporal columns or history strategy, that's a gap the interviewer will notice.